In 2013, Stewart Butterfield sent a memo to his team shortly before Slack’s preview release. It wasn’t about features or growth metrics. It was about what it would feel like to use the product when something went wrong. He wanted the team to imagine every failure state, every moment of confusion, every place a user might feel stupid. Then fix those things before anyone else saw them.

That memo, later made public, has been cited so often in startup circles that it’s become wallpaper. People nod at it and move on. What they miss is that Butterfield wasn’t writing a mission statement. He was describing a literal operating procedure that Slack followed for years, and it’s a big part of why a chat tool built by a failed gaming company became a $27 billion acquisition target.

The Setup

Slack was not supposed to exist. Butterfield and his team had been building a multiplayer game called Glitch. When Glitch failed in 2012, the internal communication tool they’d built to coordinate the game’s development was the only thing left standing. They decided to productize it.

This origin story gets told as serendipity. It wasn’t. It was the product of a team that had spent years using its own tool under real pressure and complaining about it constantly. They knew exactly where it broke down because they’d lived those breakdowns. The first version of Slack that went to outside beta users wasn’t a speculative product. It was a response to a long list of grievances the team had catalogued about their own working lives.

The beta launched in August 2013. Within 24 hours, 8,000 companies had signed up. That number gets cited as proof of product-market fit. What it actually proved is that a lot of people had the same complaints Butterfield’s team had, and were desperate for someone to take those complaints seriously.

What Happened

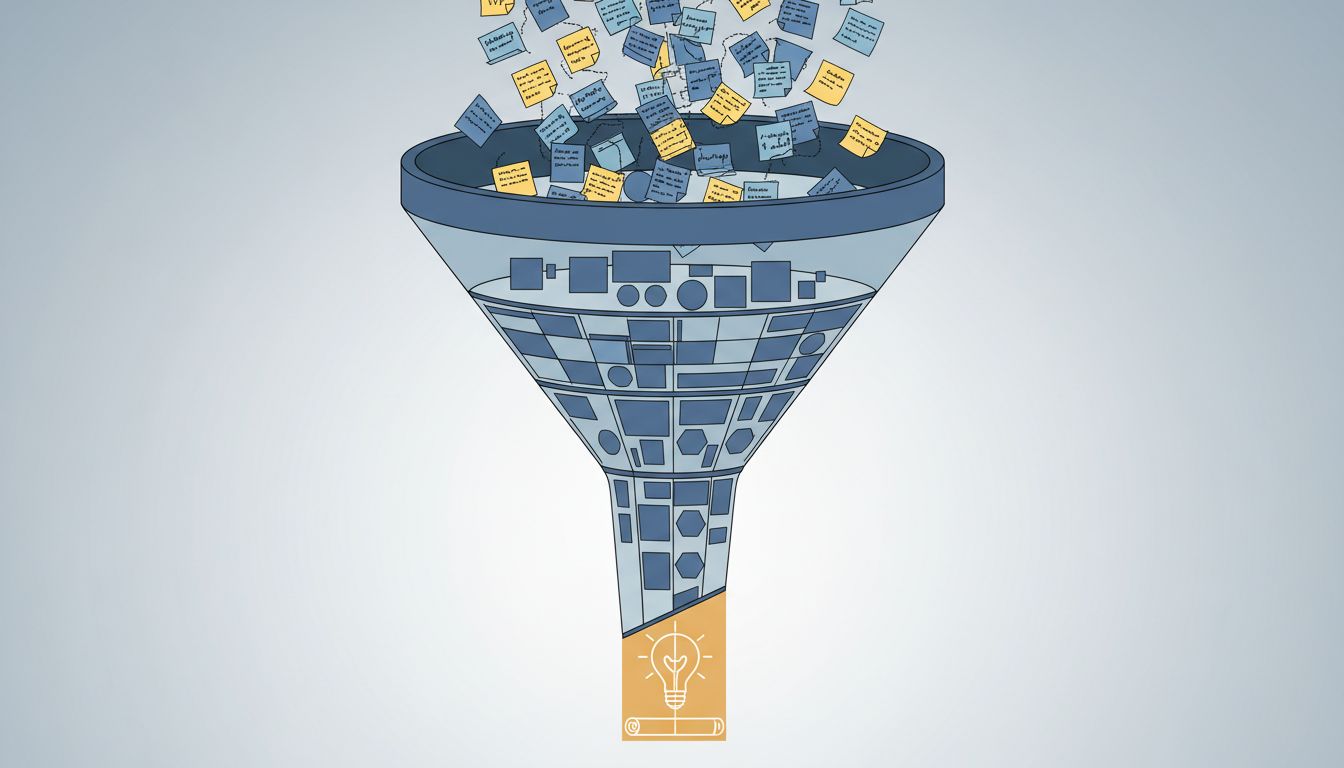

Slack’s early growth strategy was, in the plainest terms, a complaint intake system with product engineering attached to it.

The support team in the early days wasn’t a cost center. It reported directly to product leadership. Every complaint, every confused support ticket, every “why does this do that” email was categorized and counted. When a category grew large enough, it moved to the top of the engineering queue. That happened less because a formal process demanded it than because the founders treated support volume as the most honest signal they had about where the product was actually failing.

This sounds obvious. It isn’t. Most startups in that era (and this one) treat support as damage control. You staff it minimally, you write FAQs to deflect tickets, and you consider a low support volume a success. Slack treated high support volume as research. A complaint wasn’t a cost. It was a data point that a user had cared enough to tell you something true.

The specific example that gets lost in the Slack mythology is how they handled onboarding complaints. Early users were routinely confused about channels, about the difference between direct messages and group messages, about how to find conversations they’d already had. These weren’t edge cases. These were patterns showing up in hundreds of tickets a week.

Instead of writing better documentation, Slack redesigned the onboarding flow. Then they did it again. Then again. The first-run experience went through significant revision multiple times in the first 18 months because the support queue kept showing them that explanations weren’t the problem. The product structure was the problem. That’s a hard thing to hear when you’ve built the product structure. Most teams don’t hear it. They keep updating the FAQ.

Why It Matters

Here’s the thing about complaint-driven product development that the startup mythology usually gets wrong: it’s not about being nice to customers. It’s about information quality.

Most product teams are flying on low-quality signals. NPS scores tell you sentiment but not cause. Analytics tell you what users did, not why they stopped. A/B tests tell you which version of a thing got more clicks, which is a different question entirely from whether the thing is actually good. (There’s a reason optimization tools often end up optimizing for manipulation rather than improvement.)

Complaints are high-quality signals because they’re voluntary, specific, and costly to produce. When someone takes the time to write a support ticket, they’ve already decided the friction of complaining is worth it. That’s strong evidence that the problem they’re describing is real and significant. The user who quietly churns tells you nothing. The user who complains is handing you a diagnosis.

Slack also understood something about complaint demographics that most companies miss. The users who complain are not a random sample. They’re the engaged users, the ones who are trying hard enough to get value from the product that they’re frustrated when they can’t. Fixing the problems they surface disproportionately benefits the users most likely to stick around, pay for upgrades, and recommend the product to others.

Ignoring those users, or treating their complaints as noise, is how you optimize for the wrong population.

What We Can Learn

The Slack case study is sometimes taught as a story about listening to customers. That framing is too soft. It obscures the operational discipline required to act on what you hear.

Listening is passive. What Slack did was build a pipeline where complaints generated engineering work. That requires several things most startups don’t have: a support team with enough standing to influence product decisions, a categorization system rigorous enough to turn qualitative complaints into quantitative signals, and founders willing to hear that the core product is wrong rather than just under-documented.

That last one is the hard part. There’s a significant difference between a complaint that tells you a feature needs a tooltip and a complaint that tells you the feature needs to be redesigned or removed. The first is easy to act on. The second requires admitting that work you’ve already shipped was wrong. Most product teams are institutionally bad at the second one.

Butterfield’s team was willing to do the second one repeatedly. The onboarding was redesigned. The channel structure was reconsidered. Notification defaults were changed based on complaints about anxiety and distraction long before the broader conversation about notification overload became a standard product concern.

The practical takeaway isn’t complicated, even if the execution is: treat your support queue as your primary user research channel, not your last resort. Categorize complaints systematically. Count them. Set thresholds that automatically escalate categories to product review. And when the data shows that the product itself is wrong, believe it.
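To make the categorize, count, and escalate steps concrete, here is a minimal sketch in Python. It is not a description of Slack’s actual tooling, which the public record doesn’t detail. The `Ticket` type, the category labels, the sample data, and the threshold value are all hypothetical, chosen only to illustrate the idea.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Ticket:
    ticket_id: int
    category: str  # hypothetical label assigned by whoever triages support


def escalated_categories(tickets, threshold):
    """Return (category, count) pairs whose count meets the threshold,
    ordered from most to least frequent."""
    counts = Counter(t.category for t in tickets)
    return [(cat, n) for cat, n in counts.most_common() if n >= threshold]


# Illustrative data only; real categories would come from the support queue.
tickets = [
    Ticket(1, "onboarding"),
    Ticket(2, "onboarding"),
    Ticket(3, "notifications"),
    Ticket(4, "onboarding"),
    Ticket(5, "search"),
    Ticket(6, "notifications"),
]

ESCALATION_THRESHOLD = 2  # assumed value; tune to your own ticket volume

for category, count in escalated_categories(tickets, ESCALATION_THRESHOLD):
    print(f"{category}: {count} tickets -> product review")
```

The code is the easy part. The discipline lies in the categorization feeding it and in what happens after a category crosses the line: someone with authority over the roadmap has to look at the list and act on it.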

Growth hacking gets the headlines. Slack’s actual growth driver in those early years was a simpler and less glamorous thing: they made the product less frustrating, repeatedly, because frustrated users kept telling them exactly where to look.