In the spring of 2002, Amazon’s engineering teams got an unusual assignment. Jeff Bezos wanted every internal team to expose its data and functionality through service interfaces, and to design those interfaces as if they were being built for external developers. Anyone who didn’t comply would be fired. This wasn’t a product launch. It was a stress test disguised as an organizational mandate, and it broke things spectacularly before any customer ever saw them.

That internal reckoning laid the groundwork for what eventually became AWS. Which is to say: the act of deliberately subjecting your own product to hostile conditions before launch isn’t a nice-to-have engineering practice. For a certain class of company, it’s the actual origin story.

The Setup

Most startups treat pre-launch as a period of careful preparation. You build, you polish, you add features, you schedule the announcement. The implicit assumption is that your job is to make the product as good as possible before the world sees it, and that “good” means complete and stable.

This assumption is wrong in a way that becomes expensive fast.

What you’re actually doing during pre-launch, whether you know it or not, is rehearsing your own biases. Your team built the product, so your team knows how it’s supposed to work. You test the happy path because you designed the happy path. You load the system with realistic data because you have a realistic mental model of your users. The result is a product that works perfectly for the people who made it and fails in unpredictable ways for everyone else.

The companies that figure this out early adopt a different pre-launch posture: treat the product like an adversary.

What Netflix Did That Most People Misremember

Netflix’s Chaos Monkey is now famous enough that it’s become a cliché in engineering circles. But the actual history is worth revisiting, because most retellings miss the uncomfortable part.

In 2010, Netflix was in the middle of migrating its infrastructure from its own data centers to AWS. The move was motivated by a major database corruption in August 2008 that left the company unable to ship DVDs to customers for three days. They had been badly burned by a single point of failure they hadn’t known was there until it collapsed.

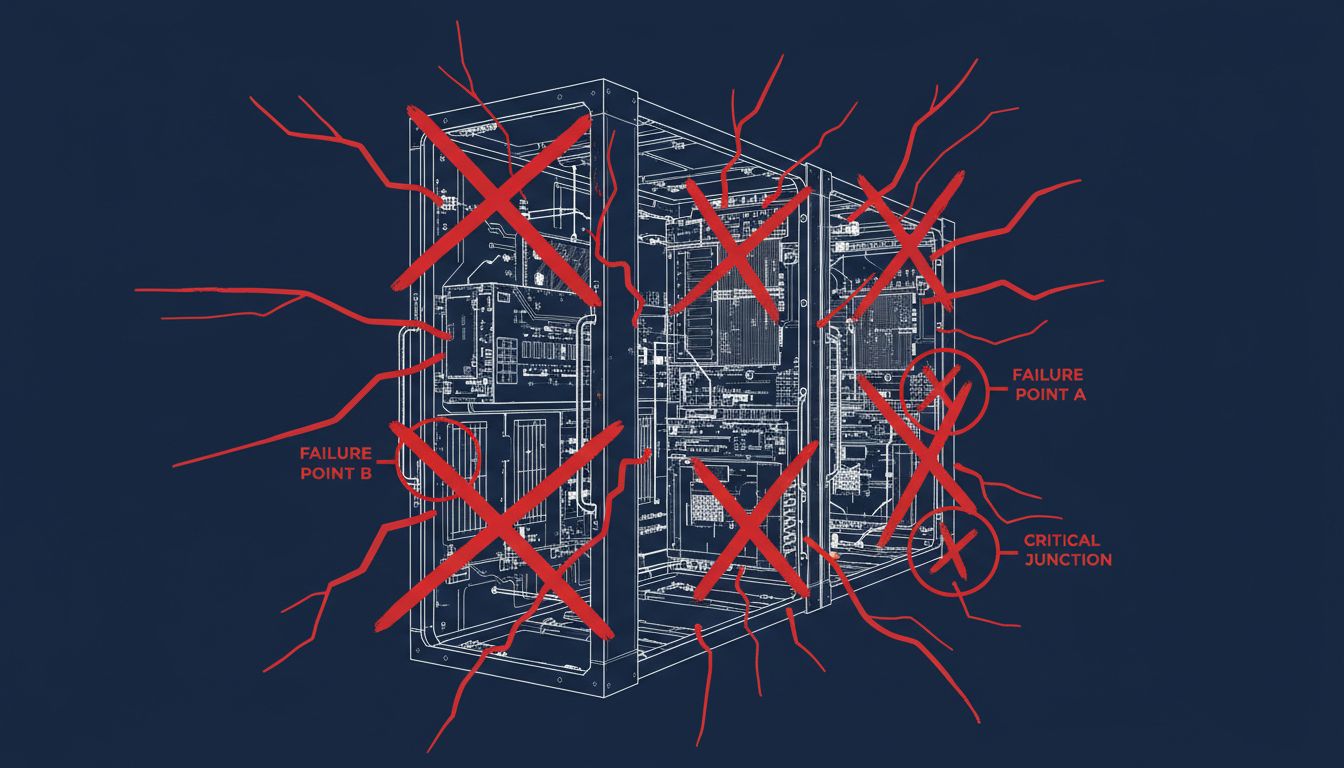

Chaos Monkey was built by engineers Cory Bennett and Ariel Tseitlin specifically to solve the problem of not knowing where your fragile points are. The tool randomly terminated virtual machine instances in the production environment, forcing the system to recover automatically. If it couldn’t recover, that was information. Painful information, but information.

Here’s the part that gets glossed over: they ran it during business hours. Not in a sandbox. Not in staging. In production, against live traffic, with real users watching movies. The logic was that if you only break things when nobody’s around, you never actually test your ability to recover under real conditions. You test your ability to recover when everyone has gone home.

This was a deliberate choice to create real pain in order to find real weaknesses. The Chaos Monkey program eventually evolved into a full suite of tools they called the Simian Army, which included Chaos Gorilla (which simulated the failure of an entire availability zone) and Latency Monkey (which introduced artificial delays). They were building a product that was designed to fail gracefully, and the only way to verify that was to make it fail, repeatedly, on purpose.

The result was a system that kept streaming through the major AWS outage of April 2011, an event that took a number of prominent sites offline.
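The core mechanism described above, terminating a random instance and checking whether the service still answers, can be sketched in a few lines. This is a toy simulation, not Netflix’s implementation: `Instance`, `Fleet`, `unleash_monkey`, and `run_experiment` are hypothetical names, and the "fleet" is an in-memory list rather than real virtual machines.

```python
import random


class Instance:
    """Stand-in for one virtual machine (hypothetical, not a cloud API object)."""

    def __init__(self, name):
        self.name = name
        self.alive = True


class Fleet:
    """A toy service that routes requests across several instances."""

    def __init__(self, size):
        self.instances = [Instance(f"vm-{i}") for i in range(size)]

    def handle_request(self):
        # Route only to instances that are still alive.
        healthy = [inst for inst in self.instances if inst.alive]
        if not healthy:
            raise RuntimeError("no healthy instances left")
        return healthy[0].name


def unleash_monkey(fleet, rng):
    """Terminate one randomly chosen live instance and return it."""
    victim = rng.choice([inst for inst in fleet.instances if inst.alive])
    victim.alive = False
    return victim


def run_experiment(fleet_size, kills, seed=0):
    """Kill instances one at a time; report whether requests keep succeeding."""
    rng = random.Random(seed)
    fleet = Fleet(fleet_size)
    for _ in range(kills):
        unleash_monkey(fleet, rng)
        try:
            fleet.handle_request()
        except RuntimeError:
            return False  # the system did not recover, and that is the finding
    return True
```

In this sketch, `run_experiment(3, 2)` succeeds because one instance survives, while `run_experiment(3, 3)` fails. The point is less the code than the habit: the failure is injected on purpose, and the outcome is recorded rather than assumed.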

Why This Is Harder Than It Sounds

Describing Chaos Engineering to a founder whose product isn’t live yet usually produces one of two responses. The first is nodding along and then doing nothing, because deliberately breaking things feels counterproductive when you’re still trying to build them. The second is treating it as an infrastructure problem, relevant to Netflix but not to a five-person team with a B2B SaaS app.

Both responses miss the point.

The principle behind Chaos Engineering isn’t really about distributed systems or AWS. It’s about the epistemological problem of knowing what you don’t know about your own product. Netflix’s real insight wasn’t technical. It was that untested assumptions are the same as unacknowledged risks, and that the only way to turn them into known, manageable risks is to force a confrontation.

For a small startup, that confrontation doesn’t require engineering tools. It requires deliberate adversarial thinking, applied early. What happens if your biggest customer tries to do something you never anticipated? What happens if three simultaneous users trigger the same database write? What happens if your payment processor goes down mid-checkout? These aren’t edge cases. They’re the first page of the incident log for almost every product that ships.
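One of those questions, several users triggering the same write at once, is cheap to rehearse. The following is a minimal sketch under stated assumptions: `SeatInventory` and `simultaneous_reservations` are hypothetical names, the store is in memory, and a lock stands in for whatever atomicity guarantee a real database would provide.

```python
import threading


class SeatInventory:
    """Hypothetical in-memory store with a single seat to sell."""

    def __init__(self):
        self.owner = None
        self._lock = threading.Lock()

    def reserve(self, user):
        # The lock makes the check-then-write step atomic,
        # so only one caller can claim the seat.
        with self._lock:
            if self.owner is None:
                self.owner = user
                return True
            return False


def simultaneous_reservations(users):
    """Have every user attempt the same reservation at the same moment."""
    inventory = SeatInventory()
    barrier = threading.Barrier(len(users))
    results = {}

    def attempt(user):
        barrier.wait()  # hold all threads until everyone is ready
        results[user] = inventory.reserve(user)

    threads = [threading.Thread(target=attempt, args=(u,)) for u in users]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return inventory, results
```

Exactly one of the competing users should come back with `True`. Removing the lock and rerunning the check many times is one way to see whether the write path depends on timing luck.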

Slack did something structurally similar before launch, with a closed beta that was anything but gentle. They invited companies to use the product and then actively watched where people got confused, where they stopped, where they gave up. The team treated every support ticket as a signal that something in the product was broken, not that the person who sent it had made a mistake. This sounds obvious, but most startups treat support tickets as customer service problems. Slack treated them as product failures that needed to be fixed before the next person hit the same wall. Defensive programming is the code-level version of the same instinct: you write for the conditions you haven’t anticipated, not just the ones you have.

What We Can Learn

The startups that run serious pre-launch adversarial testing share a few habits worth stealing.

First, they separate the people doing the testing from the people who built the thing. The engineers who wrote the code are the worst possible people to find edge cases in it. They know too much. You want someone who will try to pay with a declined card and then immediately try again, because that’s what users do and it’s almost certainly not something you tested.

Second, they define failure in advance. Netflix didn’t run Chaos Monkey and then argue about whether the recovery time was acceptable. They set a threshold and measured against it. Without a pre-defined standard, every failure gets rationalized. You tell yourself it’s minor, it’s fixable, it’s not representative. Defining what failure looks like before you test is the only way to make the results honest.
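Writing the standard down before the test runs can be as simple as the sketch below. The names `FailureCriteria` and `evaluate` and the specific limits are hypothetical, chosen only for illustration. What matters is that the thresholds are fixed before any results exist.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureCriteria:
    """Limits agreed on before the test; frozen so they cannot be edited afterwards."""

    max_recovery_seconds: float
    max_failed_requests: int


def evaluate(criteria, recovery_seconds, failed_requests):
    """Return the names of violated criteria; an empty list means the run passed."""
    violations = []
    if recovery_seconds > criteria.max_recovery_seconds:
        violations.append("recovery_time")
    if failed_requests > criteria.max_failed_requests:
        violations.append("failed_requests")
    return violations
```

With limits of 30 seconds and 5 failed requests, a run that recovers in 45 seconds with 9 failures reports both violations, and there is nothing left to argue about after the fact.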

Third, and this is the uncomfortable one: they actually fix what they find. Chaos Engineering run by a team that lacks the authority or the will to act on the results is just an elaborate way to document how bad things are. The practice is only valuable if the organization treats the findings as genuine blockers.

The Amazon story is instructive here. Bezos didn’t run a user study or commission a report. He issued a mandate with a real consequence attached, and he gave teams the time and resources to comply. The forcing function had teeth.

Most startups will not have the discipline to do this, which is exactly why the ones that do tend to survive the first real stress test from the market. Breaking your own product before launch is uncomfortable. It surfaces things you don’t want to know. It slows down the timeline. It sometimes causes you to throw out work you’re proud of.

It is also, almost without exception, cheaper than finding out those same things in front of customers who have options.