The Setup

In 2019, a mid-sized payments processing company (one of several that have shared versions of this story at engineering conferences, with enough detail to reconstruct what went wrong) deployed a new fraud detection service. The service sat between transaction intake and payment authorization. If it went down or started returning garbage, real money would either be blocked incorrectly or fraudulent transactions would sail through.

The engineering team was not cavalier about observability. They had logging. They had dashboards. They had alerts. By any reasonable external measure, they looked like a team that took reliability seriously. They were logging service start and stop events, individual transaction IDs, HTTP status codes, error rates from their internal health endpoint, and CPU and memory utilization at thirty-second intervals.

Six days after a routine deployment, a compliance analyst doing a manual audit noticed that fraud flags had essentially stopped being issued. Transactions that would have previously triggered review were clearing automatically. The system had been failing silently the entire time.

What Happened

The fraud detection service had a dependency on a third-party risk scoring API. During the deployment, a configuration change had introduced a subtle version mismatch in how the service parsed the API’s response schema. When the risk API returned a score above a certain threshold, the parsing code threw an uncaught exception. But here is the critical part: the service was designed to fail open. If it couldn’t complete a risk assessment, it defaulted to approving the transaction rather than blocking it, on the theory that false positives (blocking legitimate payments) were more costly to the business than brief gaps in fraud detection.

None of this was visible in the logs because of three compounding decisions made during the original build:

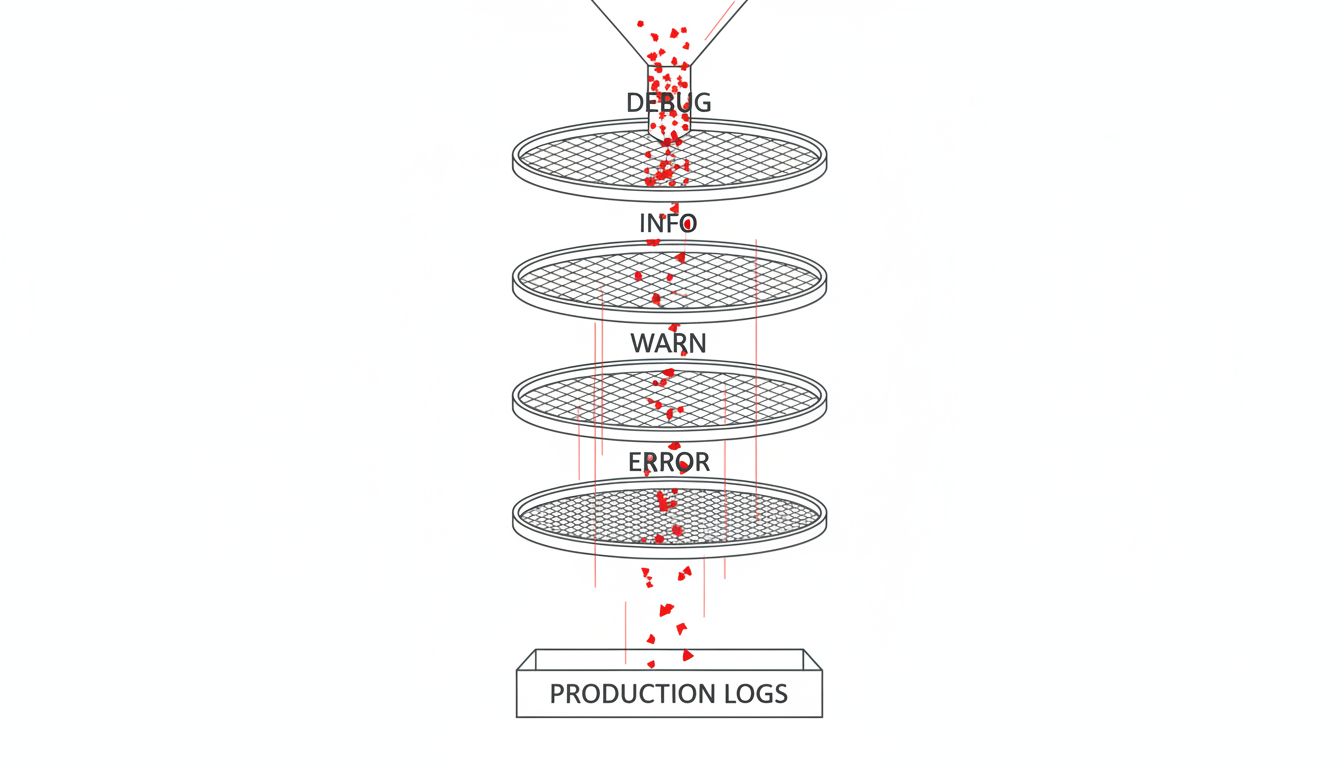

First, the error handling around the API response parser caught the exception and logged it at DEBUG level, not ERROR. DEBUG logging was disabled in production to manage storage costs. The errors were being swallowed completely.

Second, the health endpoint the team monitored only checked whether the service could accept requests and return a 200. It did not check whether the fraud scoring logic was actually executing successfully. The service was technically healthy by every metric they watched.

Third, the team’s alerting was built around error rate thresholds. Since the errors were never surfacing, the error rate stayed at zero. Zero errors looked like success.

For six days, the logs showed a healthy, high-throughput service. They just didn’t show that the most important thing the service was supposed to do had stopped working.

Why This Keeps Happening

This is not a story about a careless team. It is a story about a logging strategy that optimized for the wrong things.

Most logging strategies grow organically. A developer adds a log line when they’re debugging something. Another developer adds error logging wherever exceptions are caught. Someone realizes storage costs are climbing and disables verbose logging in production. Over time, you end up with a system where what gets logged reflects what was convenient to log during development, not what you actually need to diagnose failures in production.

There’s also a deeply ingrained conflation of “no errors logged” with “no errors occurring.” These are not the same condition, and treating them as equivalent is how you end up with six days of silent fraud. Errors you don’t log are not errors you’ve fixed.

The fail-open design choice deserves some scrutiny too. Fail-open versus fail-closed is a real architectural tradeoff, and fail-open is often the right call in payment systems. But it carries an obligation: if your system is designed to silently absorb failures and keep processing, you need instrumentation that is aggressively designed to surface those absorbed failures. The team had the policy without the visibility layer that makes the policy safe.

This connects to a broader problem with how teams think about health checks. A service that can receive requests and return a 200 is not necessarily a service that is doing its job. Your health check should reflect your service’s actual function, not just its ability to respond to pings. A fraud detection service is healthy when it is successfully scoring transactions, not when it can acknowledge a request.

What You Can Actually Do

The good news is that the fixes here are concrete. You don’t need a new platform or a six-month observability overhaul.

Log the absence of expected things, not just errors. If your fraud service is supposed to produce a risk score for every transaction, log when it doesn’t. A counter that tracks “transactions processed without a risk score” would have caught this failure within hours. The most useful logs are often the ones that record what should have happened but didn’t.

Treat log levels as a contract, not a suggestion. DEBUG is for development. If something can fail silently in production and affect a core business function, it gets logged at WARN or ERROR, always. Go back through your exception handlers right now and ask whether anything that catches a meaningful failure is logging at DEBUG or INFO and quietly continuing.

Make your health checks reflect actual function. For every service you run, ask: what is the one thing this service must do correctly for the business to work? Build a check around that thing. For a fraud service, run a synthetic transaction through the scoring logic on a schedule and verify the output is within a reasonable range. For a payment gateway, check that a test authorization actually completes the full flow. Your CI pipeline passing doesn’t mean your software works, and your health endpoint returning 200 doesn’t either.

Build alerts around business metrics, not just technical metrics. The engineering team was watching error rates. Nobody was watching fraud flag rate. If you had told them “the number of transactions flagged for fraud review dropped to zero this week,” they would have immediately known something was wrong. Map your most critical business outputs to metrics you actually monitor. Drops to zero, or sudden drops of any significant percentage, should page someone.

Audit your fail-open and fallback logic specifically. Wherever your system is designed to degrade gracefully, you need logging that is explicitly designed to make that degradation visible. Graceful degradation is a feature. Invisible degradation is a bug.

The payments team eventually rebuilt their observability layer with these principles, adding business-level metrics tracking alongside their technical ones, rewriting health checks to exercise actual scoring logic, and auditing every exception handler to enforce consistent log levels. The changes took a few weeks of careful work.

The more important shift was the mental model. They stopped treating logging as something you add when you’re debugging and started treating it as a first-class design question: what does “working correctly” look like, and how will we know if it stops being true? Answer that question during design, not after the six-day incident.