The Setup

In 2023, a mid-sized fintech company (one whose name you’d recognize if you work in payments) quietly assembled what they called a “prompt engineering team.” Three people. Dedicated budget. A Notion workspace with folders named things like “production prompts” and “prompt v2 final FINAL.” They were building internal tooling to help their compliance analysts summarize regulatory filings, flag anomalies in transaction data, and draft first-pass responses to partner inquiries.

Six months in, something awkward happened.

A new technical writer joined the company. Her first week, she was given access to the Notion workspace to understand how the tools worked. Within a few days, she came to her manager with a question that stopped the room: “Who owns the style guide for these prompts?”

Nobody had an answer. Because nobody had thought to ask.

She wasn’t being difficult. She was doing exactly what technical writers are trained to do: look at a body of text that is supposed to communicate instructions reliably and ask who is responsible for making sure it does that consistently.

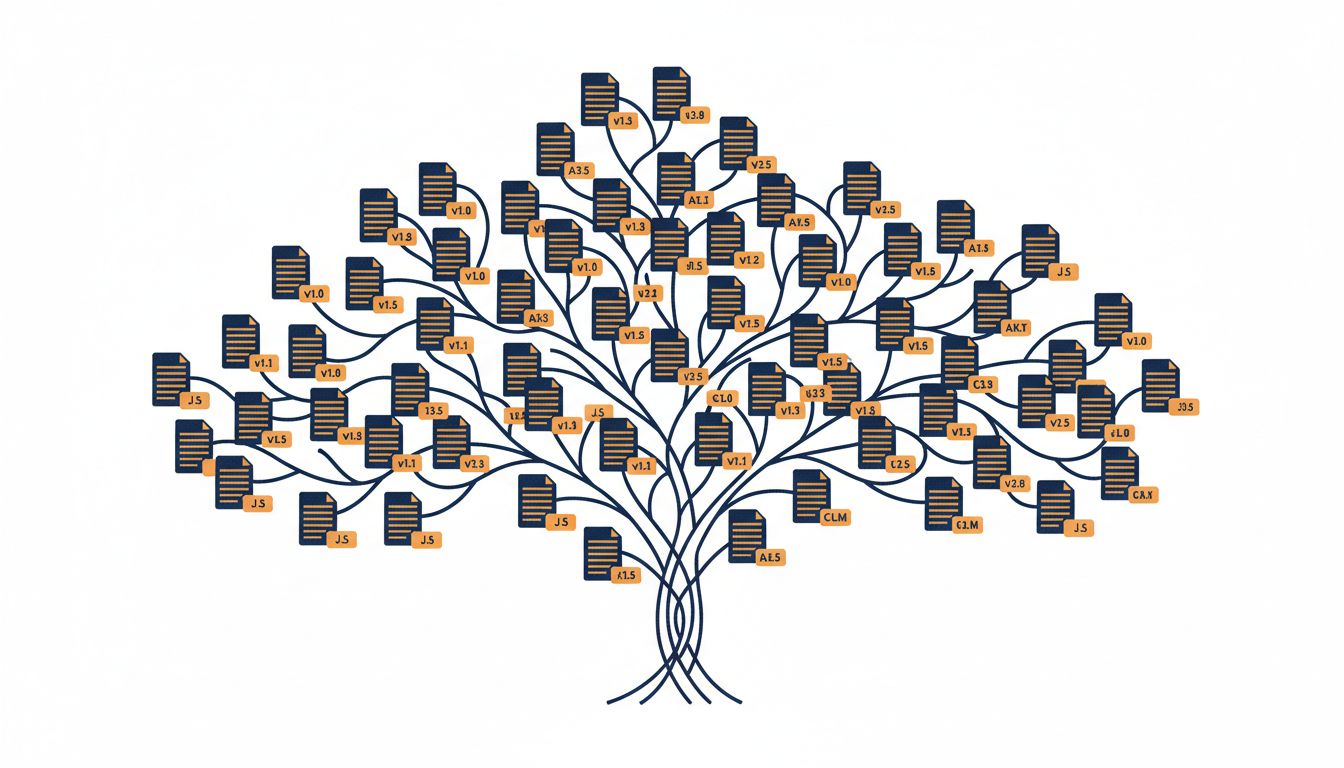

The prompts, she pointed out, had no version history anyone could trace. They had no owner listed. Different analysts had modified them for their own workflows without telling anyone. There were three versions of the “transaction anomaly” prompt living in different folders, and nobody knew which one was in production.

What Happened

The team did what most teams do when they realize they have an organizational problem. They scheduled a meeting. Several meetings, actually.

What came out of those meetings was not a new approach to prompt engineering. It was a documentation system. They assigned prompt ownership. They created a changelog. They wrote a style guide that specified things like how to phrase conditional instructions, how to structure examples within a prompt, and when to use numbered lists versus prose for multi-step instructions. They added a review process before any prompt went into production tooling.

The technical writer ran the whole thing.

Within two months, the quality of outputs from their AI tooling improved noticeably enough that the compliance team lead mentioned it unprompted in a quarterly review. The improvement wasn’t from better prompts in some abstract technical sense. It was from treating prompts the way mature engineering organizations treat code: with authorship, review, versioning, and deprecation policies.

The prompt engineering team lead, to his credit, said something worth quoting in the retrospective: “We were so focused on what to say to the model that we forgot to ask whether anyone would remember what we’d said, or why we’d said it.”

Why This Matters

This story is not unique. Variations of it are playing out in companies everywhere that have added AI tooling to real workflows. A team discovers that LLMs are useful. Someone figures out that the right phrasing makes them significantly more useful. That person becomes the de facto “prompt expert.” Their prompts live in a shared doc, or a spreadsheet, or someone’s personal notes app. Nobody else fully understands them. The expert leaves or gets busy, and suddenly nobody knows why the customer service prompt includes a specific instruction not to mention refund timelines.

This is the exact situation that gave rise to documentation practices in software development. Before version control, style guides, and API documentation standards, codebases looked much the same. Knowledge lived in people’s heads. Onboarding was oral tradition. Changes broke things for reasons nobody could trace.

The techniques that fixed that problem (clear authorship, structured formatting, explicit reasoning, maintenance schedules) are exactly what prompt engineering needs. The reason most teams haven’t applied them is that “prompt engineering” sounds like engineering, which sounds like it belongs to the technical team, which means it inherits none of the documentation culture that technical writers spent decades building.

But look at what good prompt engineering actually involves. You are specifying behavior for a system that other people will use, in language that needs to be precise enough to produce consistent results, maintained over time as the system and requirements change. That is a documentation problem. The “system” you’re documenting for just happens to be a language model instead of a human reader.

The skills transfer almost one-for-one. Technical writers know how to write instructions that work for readers whose background and context differ from the writer’s. They know how to structure conditional logic in prose. They know that examples are often clearer than rules. They know the difference between describing what something is and describing what someone should do with it. All of this applies directly to writing prompts that work reliably.

What You Can Learn From This

If you’re managing a team that uses AI tools in any serious way, here is a practical framework based on what the fintech team eventually built.

Assign ownership. Every prompt that touches a real workflow should have a named owner. Not a team. A person. That person is responsible for knowing what the prompt does, why it’s written the way it is, and whether it still works.

Write down the reasoning. The most common failure mode is a prompt that says something specific, like “always respond in three sentences or fewer,” with no record of why that constraint exists. When the constraint causes problems six months later, nobody knows if it’s safe to change. Document the intent alongside the instruction.

Version it. This does not require elaborate tooling. A changelog in the same document as the prompt is enough to start. Date it. Note what changed and why. This turns prompt maintenance from archaeology into engineering.

Build a review process before production. The fintech team’s rule was simple: no prompt goes into a tool that other people use without at least one other person reading it with fresh eyes. Not to approve it, necessarily, but to surface assumptions the author can’t see.

Treat deprecation seriously. Old prompts don’t disappear. They sit in shared folders and get copied into new projects by people who don’t know their history. Create a clear way to mark a prompt as deprecated and point to its replacement.
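
The five practices above can be sketched as a small record type. This is a minimal illustration, not the fintech team’s actual system: the class names, field names, and sample values are all hypothetical, and the rule that a revision clears the reviewer list is an added assumption rather than something the team is known to have done.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class ChangelogEntry:
    """One dated change: what changed and why (hypothetical structure)."""
    changed_on: date
    author: str
    what_changed: str
    why: str


@dataclass
class PromptRecord:
    """A prompt plus the metadata the framework asks for (all names are illustrative)."""
    name: str
    owner: str        # a named person, not a team
    text: str
    rationale: str    # the intent behind the instructions
    changelog: List[ChangelogEntry] = field(default_factory=list)
    reviewers: List[str] = field(default_factory=list)
    replaced_by: Optional[str] = None  # set once the prompt is deprecated

    def revise(self, new_text: str, author: str, what_changed: str,
               why: str, on: Optional[date] = None) -> None:
        """Update the prompt text and record the change with its reason."""
        if not why.strip():
            raise ValueError("every change needs a recorded reason")
        self.text = new_text
        self.changelog.append(
            ChangelogEntry(on or date.today(), author, what_changed, why)
        )
        # Assumption: changed text needs fresh eyes again before production.
        self.reviewers = []

    def deprecate(self, replaced_by: str) -> None:
        """Mark the prompt as deprecated and point to its replacement."""
        self.replaced_by = replaced_by

    def production_blockers(self) -> List[str]:
        """Return reasons this prompt should not go into shared tooling."""
        problems = []
        if not self.owner.strip():
            problems.append("no named owner")
        if not self.rationale.strip():
            problems.append("no recorded rationale")
        if not any(r != self.owner for r in self.reviewers):
            problems.append("no reviewer other than the owner")
        if self.replaced_by is not None:
            problems.append(f"deprecated; use {self.replaced_by}")
        return problems


if __name__ == "__main__":
    # Hypothetical example values, for illustration only.
    record = PromptRecord(
        name="transaction-anomaly",
        owner="Dana",
        text="Summarize unusual transactions in three sentences or fewer.",
        rationale="Analysts read these summaries on a dashboard with limited space.",
    )
    print(record.production_blockers())  # lists what is still missing
    record.reviewers.append("Lee")
    print(record.production_blockers())  # empty once someone else has read it
```

The same fields work just as well as headings in a shared document. The point of writing them as a type is only that a missing owner, rationale, or reviewer becomes something a tool can check instead of something a new hire discovers six months later.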

None of these steps require a technical writer on staff. They require treating prompts as artifacts that matter, which means giving them the same basic care you give any other artifact your team produces and depends on.

The fintech team’s technical writer didn’t bring new AI knowledge to the problem. She brought the assumption that written instructions should be treated like written instructions. That assumption, obvious in retrospect, was the thing the team had been missing for six months.

You probably have prompts that look a lot like their Notion folders did before she arrived. The fix is not a better prompting technique. It’s admitting you have a documentation problem and solving it like one.