The Simple Version

A vector database stores numbers that represent the meaning of things, not the things themselves. When an AI searches through it, it finds items that are conceptually similar, not just keyword-identical.

What a Vector Actually Is

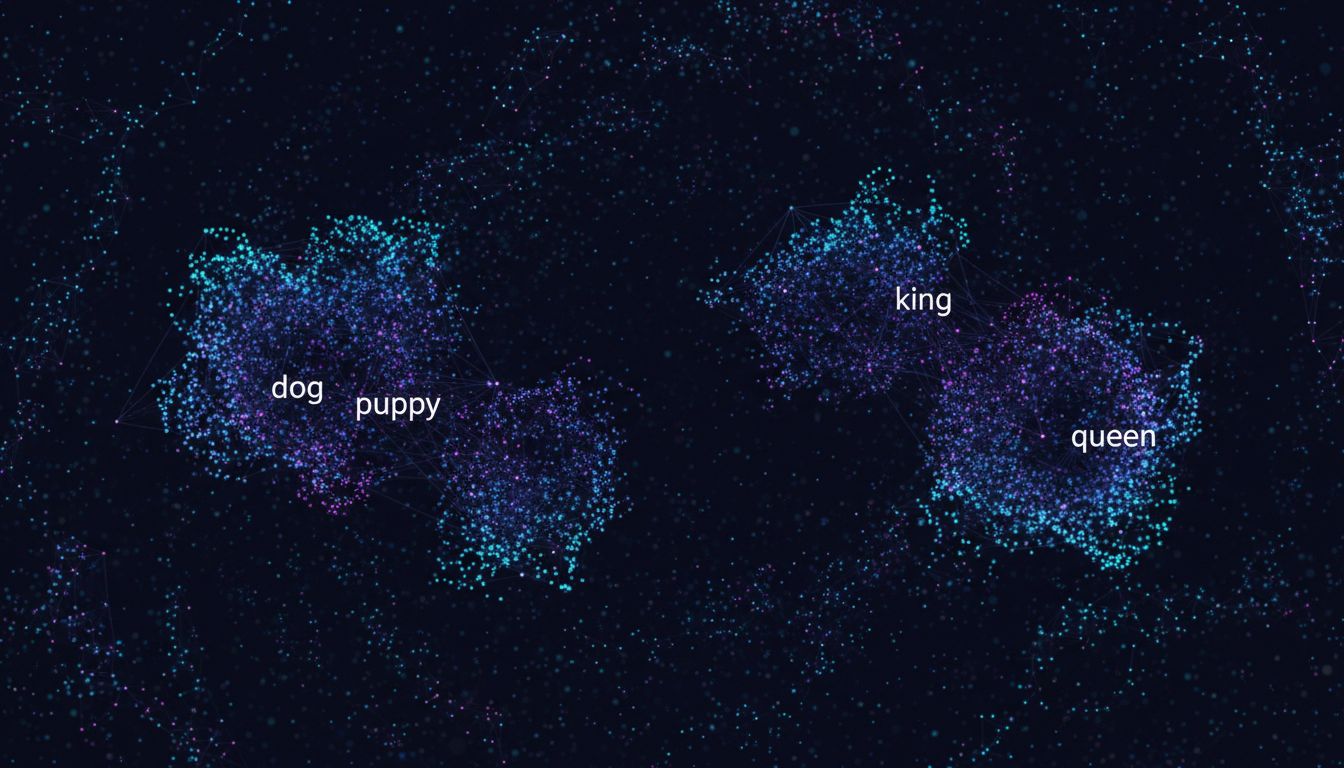

Imagine you want to teach a computer that “dog” and “puppy” are related, even though they share no letters. Traditional databases can’t do this. They store exact values and match on exact criteria. Either the word is there or it isn’t.

Neural networks solve this by encoding meaning as position in space. After training on enormous amounts of text, a model learns that “dog” and “puppy” tend to appear in similar contexts, around similar words, in similar sentences. It encodes that relationship by placing them near each other in a high-dimensional coordinate space. Each word, sentence, image, or audio clip gets translated into a list of numbers, typically several hundred to several thousand of them, that describes its location in that space.

That list of numbers is a vector. The database stores vectors.

A concrete example: the word “king” might be represented as a vector like [0.23, -0.87, 0.45, …] across 768 dimensions. “Queen” sits nearby. “President” is in the same neighborhood. “Banana” is far away. The distance between two vectors corresponds to semantic difference.

What the Database Actually Contains

A vector database entry typically has three components. First, the vector itself, which is a dense array of floating-point numbers. Second, an identifier that links back to the original content, whether that’s a document, an image file, a product listing, or a customer record. Third, optional metadata that can filter searches without relying on the vector alone, things like a publication date, a category, or a user ID.

What the database does not store is the original content in a searchable text form. That lives elsewhere. The vector database is essentially an index of meaning that points back to the real data.

When you query a vector database, you don’t type in keywords. You submit your own vector, derived from your query through the same model that created the stored vectors, and ask for the N nearest neighbors. The database returns the vectors (and their associated IDs) that are closest in that high-dimensional space. Nearest in this context usually means smallest cosine distance or Euclidean distance, depending on the implementation.

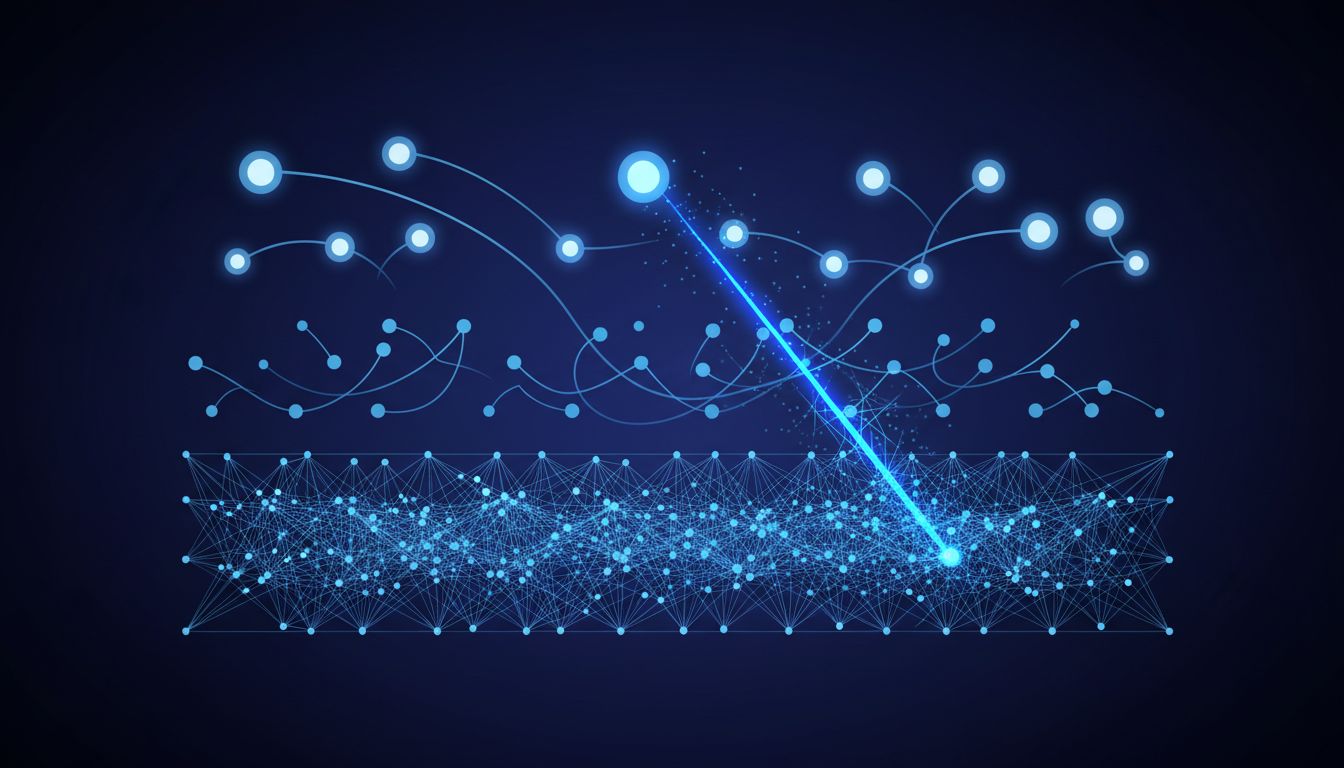

The engineering challenge is that doing this comparison across millions or billions of vectors in real time is computationally expensive. Specialized indexes like HNSW (Hierarchical Navigable Small World graphs) and IVF (Inverted File Index) solve this by making approximate nearest-neighbor lookups fast enough to use in production. You trade a small amount of accuracy for orders-of-magnitude speed improvements.

Why This Matters for AI Applications

The reason vector databases are everywhere right now comes down to a specific problem: large language models have a knowledge cutoff and a limited context window. You cannot stuff an entire company’s documentation into a single GPT-4 prompt.

The workaround, called retrieval-augmented generation (RAG), works like this. You take all your documents and convert them to vectors using an embedding model. You store those vectors in a vector database. When a user asks a question, you convert their question to a vector, find the most semantically similar document chunks, pull the actual text of those chunks, and inject them into the prompt alongside the question. The model then answers based on that retrieved context.

This is why a customer service chatbot can answer questions about a product manual it was never trained on. It’s not reasoning from memory. It’s looking things up in a vector-indexed document store and synthesizing an answer from what it finds. The quality of that system depends heavily on how well the embedding model captures meaning and how the documents were chunked before indexing.

Pinecone, Weaviate, Qdrant, and Chroma are dedicated vector databases built around this workload. PostgreSQL now offers vector search through the pgvector extension. Redis, Elasticsearch, and MongoDB have added vector capabilities. The fact that nearly every database vendor has rushed to add this feature tells you how central it has become to production AI systems.

The Limitations Worth Understanding

Vectors are only as good as the model that created them. If your embedding model was trained primarily on English text, it will encode English meaning well and other languages poorly. If it was trained on general web data, it may cluster medical terminology in ways that don’t reflect clinical distinctions a doctor would care about. Domain mismatch is a real and frequent problem.

Vectors also have no sense of time or factual accuracy. A vector database will happily store and retrieve embeddings of false statements, outdated information, and contradictory claims with equal confidence. Semantic similarity does not imply truth. A query about vaccine safety will retrieve documents that are semantically near that topic regardless of whether those documents are peer-reviewed studies or misinformation. The retrieval mechanism is neutral on correctness, which is a meaningful limitation in high-stakes applications.

There’s also the black box problem. You cannot inspect a vector and understand why two items are considered similar. The 768 individual dimensions don’t correspond to human-readable concepts. This makes debugging vector search failures genuinely difficult. If your system retrieves irrelevant results, tracing why requires testing embedding quality, chunk size, similarity thresholds, and model choice in combination, none of which give clean diagnostic signals. This is somewhat related to a broader pattern with AI reasoning: the less interpretable the process, the harder it is to trust the output.

What to Take Away

A vector database is an index of compressed meaning. It trades human-readability for the ability to answer the question “what is conceptually related to this?” at scale and speed. The storage format is numerical coordinates in high-dimensional space. The retrieval mechanism is geometric proximity. The practical value is connecting language models to information they were never trained on.

Understanding this makes the behavior of AI systems more legible. When a chatbot retrieves the wrong context and produces a confident but incorrect answer, it’s not hallucinating in the way people usually mean. It found the nearest vector and got unlucky. The fix is usually in the embedding pipeline or the chunking strategy, not in the model itself.