The Mismatch Between the Name and the Reality

When engineers first encounter vector databases, many assume the name refers to performance or scale. It doesn't. The word “vector” is doing precise mathematical work here, and understanding it changes how you think about AI-powered search, recommendations, and retrieval.

A vector database stores vectors: arrays of floating-point numbers, typically hundreds or thousands of values long. A single document might be represented as an array of 1,536 numbers. An image might produce 512. A user’s listening history on a music platform might compress into 256. These numbers don’t describe the content in any human-readable way. They encode where that content sits in a high-dimensional geometric space, and that geometry is where the real work happens.
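To make those sizes concrete, the sketch below computes the raw storage footprint of such vectors. It assumes each value is stored as a 32-bit float (4 bytes), a common but not universal choice, and it ignores index structures and metadata, so treat the numbers as a lower bound.

```python
BYTES_PER_FLOAT32 = 4  # assumption: each vector component is a 32-bit float


def raw_vector_bytes(dimensions: int, count: int = 1) -> int:
    """Raw bytes needed for `count` vectors of `dimensions` float32 values."""
    return dimensions * BYTES_PER_FLOAT32 * count


# Dimensions mentioned above: a text document, an image, a listening history.
for dims in (1536, 512, 256):
    print(f"{dims:>5} dims -> {raw_vector_bytes(dims):,} bytes per vector")

# Ten million 1,536-dimensional vectors, before any index overhead.
total = raw_vector_bytes(1536, 10_000_000)
print(f"10M x 1536 dims -> {total / 1e9:.1f} GB")
```

Under that assumption, ten million document embeddings occupy tens of gigabytes before a single index structure is built.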

What an Embedding Actually Is

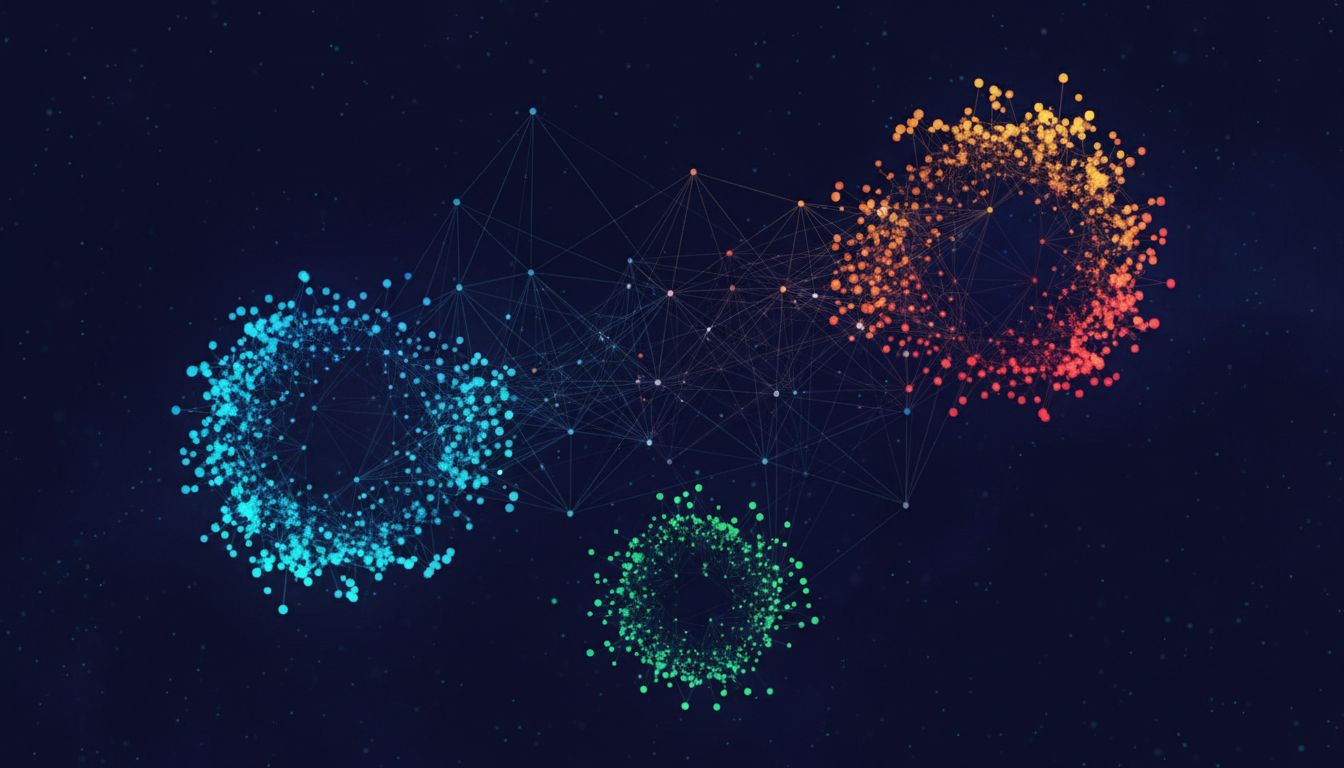

Before data enters a vector database, it passes through an embedding model. This is a neural network trained to compress meaning into a fixed-size numerical representation. OpenAI’s text-embedding-ada-002 model, for instance, converts any piece of text up to its input limit (8,191 tokens) into a 1,536-dimensional vector. Two sentences with similar meanings tend to produce vectors that point in roughly the same direction in that space. Two sentences about entirely different topics tend to produce vectors that point in very different directions.

This is the core idea. Similarity in meaning becomes proximity in space. “The dog chased the cat” and “A canine ran after a feline” will sit close together geometrically, even though they share almost no words. A keyword search would miss this entirely. A vector search finds it naturally.
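A toy illustration of “similarity as proximity” follows. The four-dimensional vectors are hand-written, hypothetical values chosen for readability, not output from any real embedding model (real ones have hundreds or thousands of dimensions). The point is only that cosine similarity scores the two paraphrases as close and the unrelated sentence as distant.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical toy "embeddings"; a real embedding model would produce these.
toy_embeddings = {
    "The dog chased the cat": [0.9, 0.8, 0.1, 0.0],
    "A canine ran after a feline": [0.85, 0.75, 0.2, 0.05],
    "Quarterly revenue rose 4%": [0.05, 0.1, 0.9, 0.8],
}

query = toy_embeddings["The dog chased the cat"]
for sentence, vector in toy_embeddings.items():
    print(f"{cosine_similarity(query, vector):.3f}  {sentence}")
```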

The embedding model does something that turns out to be surprisingly hard to do any other way: it encodes semantic relationships without requiring explicit rules about what “similar” means. You don’t write those rules. The model learned them from training data. The database then stores and indexes the resulting vectors so you can search them quickly.

What Gets Stored Alongside the Vector

The vector alone isn’t terribly useful without knowing what it represents. So in practice, vector databases store two things together: the embedding itself and a metadata payload pointing back to the original content.

Pinecone, Weaviate, Qdrant, and others all handle this similarly at the conceptual level. You insert a vector plus a metadata object. The metadata might contain the original text, a document ID, a timestamp, a user ID, or whatever other context you need to retrieve and use the result. When a query comes in, the database finds the vectors geometrically closest to the query vector, then returns those metadata payloads so your application can actually do something useful with the answer.
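The insert-then-query flow can be sketched as a tiny in-memory store. The `ToyVectorStore` class and its `upsert` and `query` methods are hypothetical names for illustration, not the API of Pinecone, Weaviate, Qdrant, or any other product, and the sketch scans every record where a real system would consult an index.

```python
import math
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Record:
    record_id: str
    vector: list[float]
    metadata: dict[str, Any]  # payload pointing back to the original content


def _cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


@dataclass
class ToyVectorStore:
    """Hypothetical in-memory store: vectors plus metadata, exhaustive search."""
    records: dict[str, Record] = field(default_factory=dict)

    def upsert(self, record_id: str, vector: list[float], metadata: dict[str, Any]) -> None:
        # Insert a new record or overwrite the one stored under this ID.
        self.records[record_id] = Record(record_id, vector, metadata)

    def query(self, vector: list[float], top_k: int = 3) -> list[tuple[float, dict[str, Any]]]:
        # Score every stored vector, then return the payloads of the closest ones.
        scored = [(_cosine(vector, r.vector), r.metadata) for r in self.records.values()]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:top_k]


store = ToyVectorStore()
store.upsert("doc-1", [0.9, 0.1, 0.0], {"text": "How to reset a password", "doc_id": 1})
store.upsert("doc-2", [0.1, 0.9, 0.0], {"text": "Shipping times for EU orders", "doc_id": 2})
store.upsert("doc-3", [0.8, 0.2, 0.1], {"text": "Account recovery steps", "doc_id": 3})

# In practice the query vector comes from the same embedding model as the data.
for score, payload in store.query([0.85, 0.15, 0.05], top_k=2):
    print(round(score, 3), payload["text"])
```

The application never sees raw vectors in the result; it gets back the payloads it stored, ranked by closeness.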

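The query itself follows the same path. You take your search text, run it through the same embedding model, and compare the resulting vector against everything in the database. The comparison metric is usually cosine similarity (which measures the angle between vectors, so it ignores their length) or Euclidean distance (which measures the straight-line separation between points); many systems also offer dot product. For most text applications cosine similarity is the safer default, because with most text embedding models the direction of a vector carries the meaning while its length carries little. When vectors are normalized to unit length, as OpenAI’s embeddings are, the choice matters less: cosine similarity, dot product, and Euclidean distance then produce the same ranking.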
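Both properties of the two main metrics can be checked with the standard library alone. Scaling a vector changes its Euclidean distance to another vector but not their cosine similarity, and for unit-length vectors the identity ||a - b||^2 = 2 - 2 cos(a, b) ties the two metrics together, which is why they rank results identically on normalized embeddings.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def euclidean_distance(a: list[float], b: list[float]) -> float:
    return math.dist(a, b)


def normalize(v: list[float]) -> list[float]:
    length = math.hypot(*v)
    return [x / length for x in v]


a = [1.0, 2.0, 3.0]
b = [2.0, 4.0, 6.0]  # same direction as a, twice as long
print(cosine_similarity(a, b))   # zero angle, so cosine is 1 up to float rounding
print(euclidean_distance(a, b))  # yet the two points are far apart

# For unit vectors, squared Euclidean distance equals 2 - 2 * cosine similarity.
u, v = normalize([1.0, 2.0, 3.0]), normalize([3.0, -1.0, 0.5])
lhs = euclidean_distance(u, v) ** 2
rhs = 2 - 2 * cosine_similarity(u, v)
print(math.isclose(lhs, rhs))
```

Because the squared distance is a decreasing function of cosine similarity on unit vectors, sorting by one is the same as sorting by the other.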

The Index Is Where Performance Lives

Storing vectors is trivial. The engineering challenge is finding the closest ones quickly when your database contains tens of millions of entries. Comparing a query vector against every stored vector directly (brute-force, or exact, nearest-neighbor search) is accurate, but its cost grows as O(n) with the size of your dataset, because every query must touch every stored vector. At tens of millions of vectors and many queries per second, that becomes too slow.
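Brute-force search fits in a few lines, which also makes its cost easy to see: every query scores every stored vector. A minimal standard-library sketch, using cosine similarity as the score and `heapq.nlargest` to keep the top k; the random dataset is synthetic and only for demonstration.

```python
import heapq
import math
import random


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def brute_force_knn(query: list[float], vectors: list[list[float]], k: int) -> list[int]:
    """Exact k-nearest-neighbor search: scores all n vectors, O(n * d) per query."""
    return heapq.nlargest(
        k, range(len(vectors)), key=lambda i: cosine_similarity(query, vectors[i])
    )


random.seed(0)
dims = 64
dataset = [[random.gauss(0, 1) for _ in range(dims)] for _ in range(2_000)]
query = dataset[42]  # a stored vector is trivially its own nearest neighbor
print(brute_force_knn(query, dataset, k=5))
```

Doubling the dataset doubles the work per query, which is exactly the linear scaling that ANN indexes are designed to avoid.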

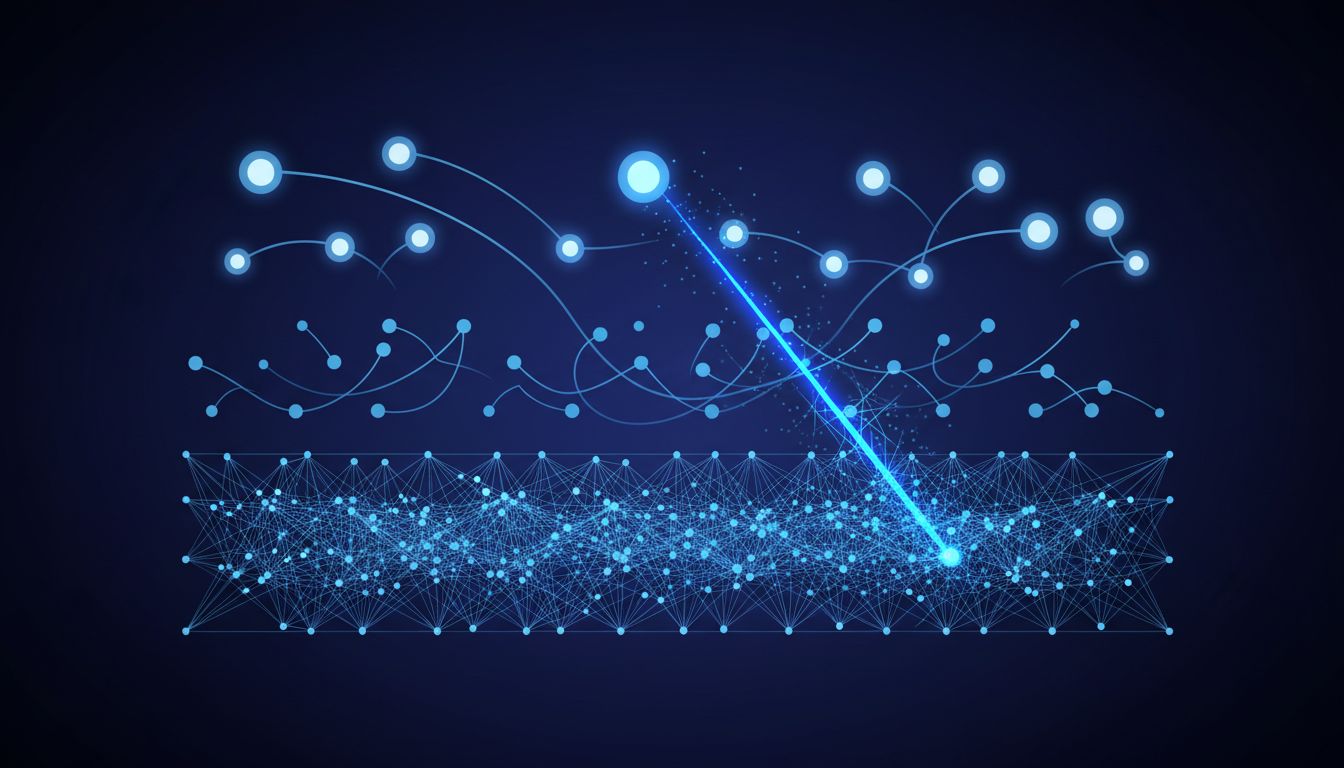

Most vector databases solve this with approximate nearest neighbor (ANN) algorithms, and one of the most widely used is HNSW (Hierarchical Navigable Small World). HNSW builds a multi-layer graph over the vectors during indexing. Every vector appears in the bottom layer, and each higher layer holds a progressively smaller subset, so the upper layers are sparse with long-range connections while the lower layers are dense with short-range ones. A query enters at the top layer, moves greedily toward the query vector, and descends layer by layer, narrowing the search region at each step. In practice this yields search times that grow roughly logarithmically rather than linearly with dataset size, at the cost of occasionally missing the true best match.
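Two ideas carry much of the HNSW structure, and both can be sketched in isolation. The first is layer assignment: in the original HNSW design, each new vector draws its top layer as floor(-ln(U) * mL) with U uniform on (0, 1], and mL = 1 / ln(M) is the suggested setting, so each layer holds roughly 1/M of the nodes below it. The second is greedy routing within a layer: keep hopping to the neighbor closest to the query (Euclidean distance here) until no neighbor is closer. This is a simplified illustration, not a full HNSW implementation; it omits graph construction, the candidate list (`ef`), and neighbor-selection heuristics, and the value M = 16 and the toy chain graph are assumptions for the demo.

```python
import math
import random
from collections import Counter


def assign_level(m_l: float, rng: random.Random) -> int:
    """Draw a node's top layer: floor(-ln(U) * m_l) with U uniform on (0, 1]."""
    u = 1.0 - rng.random()  # shift [0, 1) to (0, 1] so log(u) is defined
    return int(-math.log(u) * m_l)


def greedy_search(graph: dict[int, list[int]], points: dict[int, tuple[float, ...]],
                  entry: int, query: tuple[float, ...]) -> int:
    """Greedy routing on one layer: move to the closest neighbor until none is closer."""
    current = entry
    current_dist = math.dist(points[current], query)
    while True:
        best = min(graph[current], key=lambda n: math.dist(points[n], query), default=current)
        best_dist = math.dist(points[best], query)
        if best_dist >= current_dist:
            return current  # local minimum: no neighbor improves on the current node
        current, current_dist = best, best_dist


# Layer sizes: a node whose top level is L appears in layers 0..L.
rng = random.Random(7)
M = 16                  # assumed maximum neighbors per node
m_l = 1 / math.log(M)   # level normalization suggested for HNSW
levels = [assign_level(m_l, rng) for _ in range(100_000)]
counts = Counter(levels)
layer_sizes = [
    sum(c for lvl, c in counts.items() if lvl >= layer) for layer in range(max(levels) + 1)
]
print(layer_sizes)  # each layer holds roughly 1/M of the nodes in the layer below

# Greedy routing on a toy chain graph whose nodes sit on a line in 2-D.
points = {i: (float(i), 0.0) for i in range(10)}
graph = {i: [j for j in (i - 1, i + 1) if 0 <= j < 10] for i in range(10)}
print(greedy_search(graph, points, entry=0, query=(6.2, 1.0)))
```

In a full index the greedy result from one layer becomes the entry point for the layer below, which is how the sparse upper layers shorten the path through the dense bottom layer.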

The “approximate” qualifier matters. You’re trading a small amount of recall for a large gain in speed. For most real-world applications, finding 10 highly relevant documents out of millions in under 100 milliseconds is far more valuable than finding the exact top 10 in 30 seconds. Whether that tradeoff is acceptable depends entirely on your use case.
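The size of that tradeoff is commonly measured as recall@k: the fraction of the true top-k results (from an exact search) that the approximate search also returned. A minimal sketch of the metric; both ID lists are made-up examples, not benchmark results.

```python
def recall_at_k(approx_ids: list[int], exact_ids: list[int], k: int) -> float:
    """Fraction of the exact top-k neighbors that also appear in the approximate top-k."""
    exact_top = set(exact_ids[:k])
    return len(exact_top.intersection(approx_ids[:k])) / len(exact_top)


exact = [7, 3, 19, 42, 8, 11, 5, 30, 2, 14]   # hypothetical ground truth from brute force
approx = [7, 3, 42, 19, 8, 11, 5, 30, 2, 99]  # hypothetical ANN result: one true neighbor missed
print(recall_at_k(approx, exact, k=10))  # 0.9
```

Recall@k ignores ordering within the top k, which matches how many retrieval applications consume results: as a set of candidates rather than a strict ranking.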

Why This Architecture Exists at All

Vector search isn’t new. Recommendation systems at companies like Netflix and Spotify have used embedding-based retrieval internally for years. What changed recently is that large language models made embeddings cheap and accessible. You can now embed arbitrary text, images, or audio through an API call, without training your own model.

This is why vector databases became a distinct product category: the demand for semantic search infrastructure spiked at exactly the moment that foundation models made good embeddings available to everyone. The retrieval-augmented generation (RAG) pattern, where you feed an LLM relevant context retrieved from a vector store before generating a response, turned vector databases from a specialized tool into infrastructure that many AI applications need.
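The RAG flow amounts to retrieve, assemble, then generate. The sketch below covers the first two steps with a hypothetical `retrieve` function over a toy in-memory corpus; the generation step is omitted because it depends on whichever LLM API you use, and the prompt template is an illustrative assumption rather than a required format.

```python
import math

# Hypothetical corpus of (toy embedding, original text). A real system would
# embed each text with a model and store the pair in a vector database.
CORPUS = [
    ([0.9, 0.1, 0.0], "Refunds are issued within 5 business days."),
    ([0.1, 0.9, 0.1], "Our office is closed on public holidays."),
    ([0.8, 0.3, 0.1], "Refunds go back to the original payment method."),
]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def retrieve(query_vector: list[float], top_k: int = 2) -> list[str]:
    """Hypothetical retriever: return the texts of the top_k closest vectors."""
    ranked = sorted(
        CORPUS, key=lambda item: cosine_similarity(query_vector, item[0]), reverse=True
    )
    return [text for _, text in ranked[:top_k]]


def build_prompt(question: str, context_chunks: list[str]) -> str:
    """Combine retrieved context and the user's question into one LLM prompt."""
    context = "\n".join(f"- {chunk}" for chunk in context_chunks)
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


# The query vector would come from embedding the question with the same model.
prompt = build_prompt("How long do refunds take?", retrieve([0.85, 0.2, 0.05]))
print(prompt)
```

The resulting prompt string is what would be sent to the LLM; the vector store's only job was to choose which chunks appear in it.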

The practical implication is that you’re often running two systems in parallel: a traditional database holding your actual content and records, and a vector database holding representations of that content’s meaning, used purely for retrieval. They need to stay in sync, which introduces operational complexity worth thinking about before you commit to the architecture.
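One possible way to contain that sync burden is to store a hash of each source record’s text alongside its vector and re-embed only when the hash changes. The sketch below shows just that change-detection step; the `plan_sync` helper and the record shapes are hypothetical, and the actual embedding calls and database writes are left out.

```python
import hashlib


def content_hash(text: str) -> str:
    """Stable fingerprint of a record's text; any edit produces a new hash."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_sync(source: dict[str, str], indexed_hashes: dict[str, str]) -> tuple[list[str], list[str]]:
    """Compare primary-database records with the hashes stored next to their vectors.

    source: record ID -> current text in the primary database.
    indexed_hashes: record ID -> hash of the text that was last embedded.
    Returns (IDs to embed or re-embed, IDs whose vectors should be deleted).
    """
    to_embed = [rid for rid, text in source.items() if indexed_hashes.get(rid) != content_hash(text)]
    to_delete = [rid for rid in indexed_hashes if rid not in source]
    return sorted(to_embed), sorted(to_delete)


source_db = {"a": "Refund policy v2", "b": "Shipping policy", "c": "New FAQ entry"}
vector_index = {
    "a": content_hash("Refund policy v1"),  # text changed since it was last embedded
    "b": content_hash("Shipping policy"),   # unchanged, no work needed
    "d": content_hash("Retired page"),      # no longer in the primary database
}
print(plan_sync(source_db, vector_index))
```

Re-embedding only changed records keeps embedding costs proportional to the rate of change rather than to the size of the corpus.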

Understanding what these systems actually store makes it obvious why they’re designed the way they are. They’re not general-purpose databases with a clever indexing scheme bolted on. They’re purpose-built for a specific kind of query: given a meaning, find the closest meanings we have. Everything else follows from that.