Chess grandmasters have been losing to computers since 1997. AI can now read medical scans, write legal briefs, and predict protein structures that stumped scientists for decades. Yet if you text a chatbot “Oh sure, that’s a totally great idea,” dripping with sarcasm, there’s a real chance it will thank you for the positive feedback and move on. That gap, between computational power and human understanding, is one of the most fascinating and practically important stories in modern technology.

Understanding why AI stumbles here matters for anyone building products, writing for AI systems, or making decisions about where automation actually helps you. (And it matters for understanding why the economics of AI-powered support tools are more complicated than they look on a spreadsheet.)

The Two Very Different Problems AI Is Actually Solving

Here’s the key insight most people miss: chess and conversation are not just different in degree. They’re different in kind.

Chess is what researchers call a “closed domain” problem. It has a fixed number of pieces, a defined board, and rules that never change. Every possible state of the game can, in theory, be evaluated. There is always a correct answer. When AlphaZero learned to play chess, it practiced against itself millions of times inside a perfectly consistent universe.

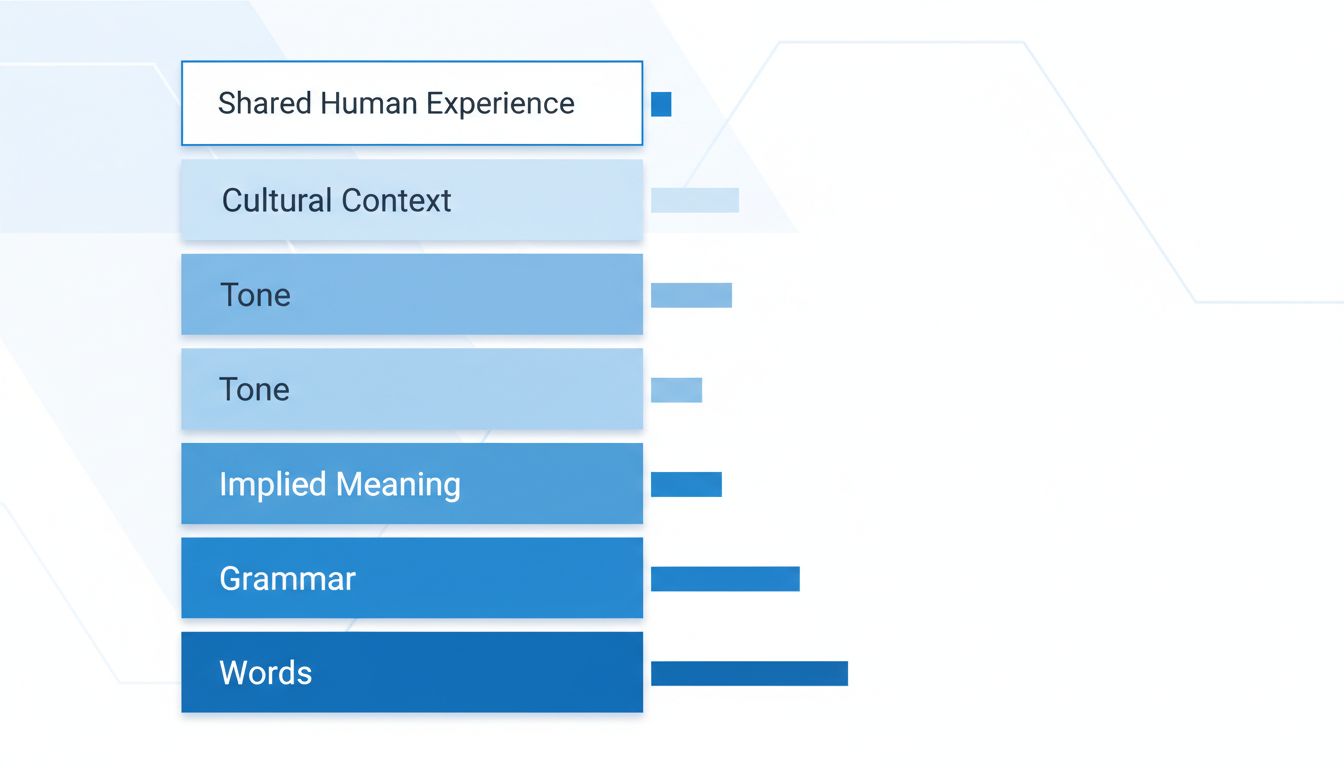

Language is the opposite. When you say “Oh great, another Monday,” the meaning lives almost entirely outside the words themselves. It lives in your tone, your history with Mondays, whether you normally love your job, what happened last Monday, and a hundred tiny cultural signals your conversation partner picks up without thinking. There is no rulebook. The “correct” interpretation changes depending on who you are and who is listening.

AI systems trained on text are essentially learning statistical patterns: which words tend to follow which other words, and which phrases tend to appear in which contexts. That is genuinely powerful. But sarcasm is specifically designed to mean the opposite of what the words say. It weaponizes context. It assumes shared human experience. Statistical pattern-matching struggles badly with anything built to subvert surface meaning.
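To make “which words tend to follow which other words” concrete, here is a deliberately tiny sketch of a bigram counter. It is an illustration only: the corpus and the function names are made up for this example, and modern language models are far more sophisticated than this. The point is simply that the counts reflect how words are used on the surface, with no record of whether the speaker meant them.

```python
from collections import Counter, defaultdict

# Tiny, made-up corpus for illustration; real systems train on vastly more text.
corpus = [
    "what a great idea",
    "that is a great idea",
    "oh great another monday",
    "a great day",
]

def train_bigrams(sentences):
    """Count how often each word is followed by each other word."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def most_likely_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    if word not in follows:
        return None
    return follows[word].most_common(1)[0][0]

model = train_bigrams(corpus)
print(most_likely_next(model, "great"))  # prints "idea"
```

Notice that the sarcastic “oh great another monday” is counted exactly like the sincere sentences. Nothing in the statistics marks it as meaning the opposite.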

Why Context Is Harder Than Complexity

You might assume that a more powerful AI, one trained on more data, would eventually “get” sarcasm. More data should mean more context, right?

Partially. Modern large language models have gotten meaningfully better at detecting obvious sarcasm in text, especially when it’s heavily signaled with phrases like “Oh, brilliant idea” after something clearly goes wrong. But nuanced sarcasm, the kind you use with close friends or colleagues, still regularly slips through. And there’s a structural reason for that.

Human communication is built on what linguists call “common ground,” the vast shared background knowledge two people bring to a conversation without ever stating it. When your friend says “Yeah, your new commute sounds amazing” after hearing it takes two hours each way, they’re not accessing language. They’re accessing a shared understanding of what two-hour commutes feel like, what your face does when something is actually amazing versus terrible, and probably six months of conversations about your job search.

AI has no commute. It has no Tuesday afternoons. It has text. This is why machine learning algorithms that shape what you see online can optimize engagement beautifully while completely misreading the emotional texture of what people are actually saying about a topic.

There’s also a multimodal problem. Humans detect sarcasm through vocal tone, facial expression, timing, and physical context. Text-based AI strips most of that away. Even when researchers add sentiment analysis, training models to detect emotional tone from word choice, sarcasm often looks positive in the data. The words “great” and “wonderful” and “perfect” are being used. The model sees what look like happy signals.
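A toy word-counting sentiment scorer shows how this failure happens. This is a minimal sketch, assuming a hand-picked word list (the `POSITIVE` and `NEGATIVE` sets and the `lexicon_sentiment` function are hypothetical, not any real library’s API), and production sentiment models are more capable. The underlying trap is the same, though: sarcastic text is full of positive words.

```python
# Hypothetical mini-lexicons, chosen for illustration only.
POSITIVE = {"great", "wonderful", "perfect", "amazing", "brilliant"}
NEGATIVE = {"terrible", "awful", "broken", "late", "worst"}

def lexicon_sentiment(text):
    """Label text by counting positive and negative words; context is ignored."""
    words = [w.strip(".,!?\"'").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

# A sincere complaint is labeled correctly...
print(lexicon_sentiment("The app is broken again."))                # prints "negative"
# ...but a sarcastic one scores as praise.
print(lexicon_sentiment("Oh great, another Monday. Just perfect."))  # prints "positive"
```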

What This Means If You’re Building with AI

If you’re designing a product, integrating an AI tool, or deciding where to trust AI outputs, here’s a practical framework for thinking about where sarcasm detection (and context-dependence more broadly) will bite you.

Step 1: Identify how closed or open your domain is. Tasks with clear rules and measurable outcomes, such as fraud detection, image classification, and code completion, are where AI performs reliably well. Tasks that require reading human emotional state, social context, or subtext are where you need human review or explicit design for failure.

Step 2: Consider your user’s communication style. Customer feedback, social media monitoring, and open-ended survey responses are full of irony, sarcasm, and deadpan humor. If you’re feeding any of that into an automated sentiment analysis pipeline and making decisions based on the output, you are definitely getting some of it wrong.

Step 3: Build in human checkpoints at the context-dependent moments. This doesn’t mean abandoning automation. It means thinking carefully about where the actual costs are when the AI gets it wrong. A misjudged chess move costs you the game. A sarcastic customer complaint misread as praise can cost you the customer.

Step 4: Write for clarity when you’re talking to AI systems. If you’re using AI tools in your own workflow, literal, direct language will serve you better than your natural conversational style. Save the wit for your colleagues.
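The checkpoint idea can be sketched in a few lines. This is one possible shape, not a recommended implementation: the `Prediction` type, the `route` function, the `from_complaint_channel` flag, and the 0.85 threshold are all assumptions made up for this example. The rule it encodes is simple: send a message to a person when the model is unsure, or when a confident “positive” label shows up somewhere praise would be surprising.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    text: str
    label: str         # e.g. "positive", "negative", "neutral"
    confidence: float  # model's self-reported confidence, 0.0 to 1.0

def route(pred, from_complaint_channel, threshold=0.85):
    """Return "human" or "auto" for a sentiment prediction.

    The threshold is an arbitrary illustrative value; tune it on your own data.
    """
    # Low confidence: the model itself is unsure, so ask a person.
    if pred.confidence < threshold:
        return "human"
    # A confident "positive" on a complaint is suspicious: possible sarcasm.
    if from_complaint_channel and pred.label == "positive":
        return "human"
    return "auto"

sarcastic = Prediction("Oh sure, that's a totally great idea", "positive", 0.97)
print(route(sarcastic, from_complaint_channel=True))  # prints "human"
```

The second rule is the interesting one. It doesn’t try to detect sarcasm at all. It uses what you already know about the context, which is exactly the information the model lacks.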

The Deeper Lesson About Intelligence

There’s something genuinely useful in sitting with this puzzle. AI’s weakness with sarcasm isn’t a bug waiting to be patched. It’s a window into what human intelligence actually does that we rarely stop to appreciate.

You understand sarcasm because you’ve lived a life. You’ve been disappointed enough times to recognize the specific flavor of disappointment that wraps itself in cheerful words. You’ve watched people’s faces. You’ve been in rooms where something went badly and everyone laughed because that was the only thing to do. That accumulation of embodied, emotional, social experience is what you’re drawing on in the half-second it takes to hear “Oh, perfect timing” and know the speaker means the opposite.

No training dataset captures that. At least not yet, and possibly not ever in the way we might hope.

This doesn’t mean AI isn’t useful; it clearly is, enormously so. But it does mean that the question worth asking isn’t “is AI smarter than humans” but “what specifically is this tool good at, and where does it need you.” The gap between beating grandmasters and understanding a knowing eye-roll is actually a very useful map for figuring that out.