There’s a phenomenon that researchers have been quietly documenting for a couple of years now, and it deserves more attention than it gets. AI models, particularly large language models deployed at scale, tend to get worse at specific tasks over time. Not because the model decays on its own. Because the population of people using it changes, and the entire system is built around feedback loops that treat popularity as a proxy for quality.

This is not a complaint about AI hype. It is a technical problem with a specific shape, and understanding that shape matters if you’re building anything serious on top of these systems.

The Core Mechanism: Feedback Loops That Optimize for Volume

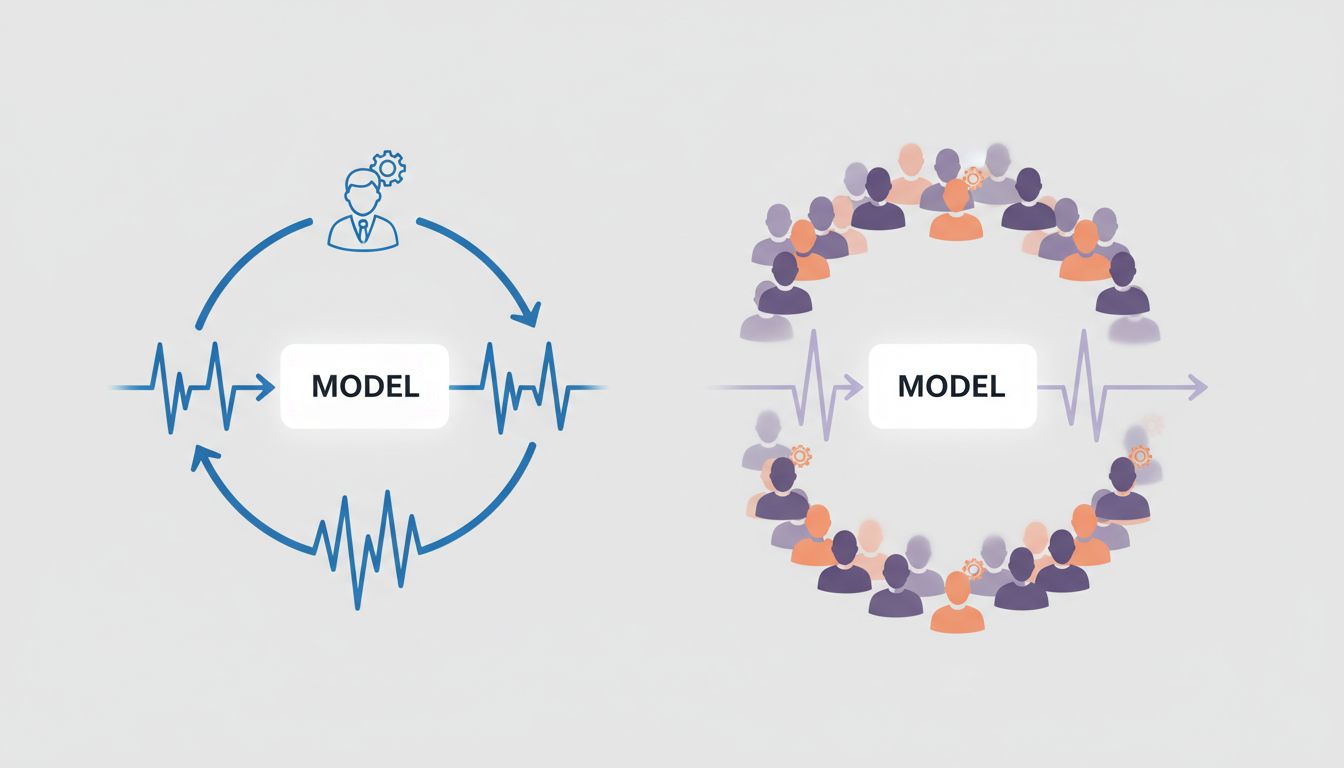

Most deployed AI systems are not static. They receive feedback, either explicitly through thumbs-up/thumbs-down ratings, or implicitly through engagement signals like whether users continued a conversation, clicked a result, or came back the next day. This feedback gets aggregated and used, in various ways, to steer future model behavior through fine-tuning, reinforcement learning from human feedback (RLHF), or simply by reranking outputs.

The problem is that feedback is not uniformly distributed. A small number of users who have highly specific, technical needs represent a tiny fraction of total interactions. A much larger population of casual users with broad, vague requests dominates the signal. When the model optimizes toward the majority signal, it gets better at serving that majority and measurably worse at the tail cases. The tail cases are often the ones where AI is most valuable.
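
The arithmetic behind this is worth seeing once. The sketch below uses invented numbers (the cohort shares and satisfaction scores are assumptions for illustration, not measurements) to show how an interaction-weighted average can rise while the expert cohort falls sharply.

```python
from dataclasses import dataclass


@dataclass
class Cohort:
    name: str
    share: float         # fraction of all interactions (illustrative)
    satisfaction: float  # mean rating in [0, 1] (illustrative)


def aggregate(cohorts):
    """Interaction-weighted mean satisfaction across cohorts."""
    total_share = sum(c.share for c in cohorts)
    return sum(c.share * c.satisfaction for c in cohorts) / total_share


# Hypothetical snapshots before and after a feedback-driven update.
before = [Cohort("casual", 0.95, 0.70), Cohort("expert", 0.05, 0.80)]
after = [Cohort("casual", 0.95, 0.78), Cohort("expert", 0.05, 0.55)]

print(f"aggregate before: {aggregate(before):.4f}")
print(f"aggregate after:  {aggregate(after):.4f}")
for old, new in zip(before, after):
    print(f"{old.name}: {old.satisfaction:.2f} -> {new.satisfaction:.2f}")
```

With these made-up figures the aggregate improves even though expert satisfaction drops by 25 points, because the expert cohort carries only 5% of the weight.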

This is not a hypothetical. Researchers comparing the March and June 2023 versions of GPT-4 found that its accuracy on certain math and code-generation tasks dropped substantially between the two snapshots. OpenAI pushed back on the interpretation, but the underlying data, published by Lingjiao Chen, Matei Zaharia, and James Zou of Stanford and UC Berkeley, showed clear performance shifts. The debate about causation is ongoing, and the study itself does not pin the change on feedback loops, but the phenomenon of drift is not seriously disputed.

Why ‘More Data’ Doesn’t Fix This

The intuitive response is that you should just train on more data, or incorporate better feedback. But this runs into a fundamental tension: the same properties that make a model useful to millions of casual users are often in direct conflict with the properties that make it useful to experts.

A model that handles ambiguous, underspecified requests gracefully will learn to fill gaps with plausible-sounding content. That’s good for someone asking for a blog post outline. It’s corrosive for someone trying to debug a subtle concurrency issue in Rust, where the plausible-sounding answer is exactly the kind of answer that wastes hours.

More data can actually make this worse. When you train on a larger pool that skews toward casual use cases, the model’s representation of what a “good” response looks like shifts toward what satisfies casual users. You can try to counteract this with careful curation, but curation is expensive and has its own biases.

The Prompt Stability Problem

There is a second, related issue that affects users even when the model itself is never retrained. Prompts that work well under one serving configuration stop working reliably when the infrastructure around the model changes. This happens because the model’s outputs are sensitive to context in ways that are difficult to predict and even harder to document.

Companies running these models at scale are constantly making infrastructure changes: quantization to reduce serving costs, context window adjustments, system prompt modifications to reflect new policies. Each of these can shift output distributions in ways that invalidate carefully tuned prompts. The user’s workflow breaks without any warning, and there’s no changelog they can consult.

This is worth understanding in detail if you’re building anything production-grade on top of these APIs. The instability is structural, not incidental.
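
One rough way to notice this kind of silent shift is to keep stored outputs for a fixed set of prompts and compare fresh outputs against them. The sketch below is a minimal version under stated assumptions: the prompts, outputs, and the 0.8 threshold are invented, and character-level similarity from the standard library's `difflib` is a crude stand-in for whatever comparison fits your task.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Character-level similarity in [0, 1]; a crude proxy for 'same output'."""
    return SequenceMatcher(None, a, b).ratio()


def drifted(golden: dict, current: dict, threshold: float = 0.8) -> list:
    """Return prompts whose current output is less similar to the stored one than threshold."""
    return [
        prompt
        for prompt, old_output in golden.items()
        if similarity(old_output, current.get(prompt, "")) < threshold
    ]


# Hypothetical stored outputs from a known-good run.
golden = {
    "p1": "The answer is 42.",
    "p2": "Use a mutex to guard the counter.",
}
# Hypothetical outputs from the same prompts after an unannounced change.
current = {
    "p1": "The answer is 42.",
    "p2": "Sure! Here are some general tips about writing concurrent programs safely.",
}

print(drifted(golden, current))
```

A check like this cannot tell you whether the new output is worse, only that it moved. It is a tripwire that tells you when to look, not a quality metric.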

The Goodhart’s Law Version of AI

Goodhart’s Law states that when a measure becomes a target, it ceases to be a good measure. AI deployment is running a live experiment in what happens when you apply this to intelligence itself.

User satisfaction ratings are a measure of perceived quality. When you optimize for them at scale, you get a model that is very good at seeming helpful. Seeming helpful and being helpful are correlated but not identical. A model that confidently produces a wrong answer with good formatting will outscore a model that hedges appropriately on hard questions, at least among users who don’t know the domain well enough to evaluate accuracy.

This dynamic pushes models toward a particular failure mode: they become more fluent and less reliable at the same time. The outputs sound better. The error rate on hard problems goes up. This is genuinely difficult to measure because published benchmark scores usually describe a fixed snapshot evaluated at release, not the continuously adjusted deployment that users actually interact with.

The Distribution Shift Nobody Talks About

Here is the version of this problem that gets the least attention: as AI tools become mainstream, the population of users shifts in ways that change what the system is actually being optimized for.

Early adopters of AI coding assistants were, broadly speaking, developers who had strong opinions about correctness and were quick to notice and report failures. The feedback they generated reflected a reasonably high bar. As these tools spread to a larger population that includes people with less experience evaluating code quality, the feedback distribution changes. The model gets rated highly for producing syntactically correct code that doesn’t actually do what was requested, because many users aren’t in a position to run it and find out.

This is not a criticism of those users. It is a description of a systems problem. The evaluation signal degrades as adoption grows, which means the model can silently get worse on the dimensions that matter to expert users, while every aggregate metric looks fine.
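
A toy model makes the degradation concrete. Assume, purely for illustration, that raters who actually verify an answer upvote it only when it is correct, while raters who do not verify upvote any fluent answer. Under that assumption, the rating gap between a correct answer and a plausible wrong one equals the fraction of raters who verify, so the signal shrinks as that fraction shrinks.

```python
def thumbs_up_rate(p_verify: float, correct: bool) -> float:
    """Expected thumbs-up rate for a fluent, plausible-looking answer.

    Assumption: verifying raters upvote only correct answers;
    non-verifying raters upvote any fluent answer.
    """
    verified_vote = 1.0 if correct else 0.0
    return p_verify * verified_vote + (1.0 - p_verify) * 1.0


def signal_gap(p_verify: float) -> float:
    """How much better a correct answer scores than a wrong one."""
    return thumbs_up_rate(p_verify, True) - thumbs_up_rate(p_verify, False)


# Illustrative fractions of raters who run or check the code they rate.
for p in (0.8, 0.4, 0.1):
    print(
        f"verifying raters={p:.0%}  "
        f"wrong-answer rating={thumbs_up_rate(p, False):.2f}  "
        f"gap={signal_gap(p):.2f}"
    )
```

In this simplified picture, a wrong answer that looks right climbs from a 20% approval rate to 90% as verifying raters fall from 80% to 10% of the population, with no change in the answer itself.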

What Providers Can Do, and Why Many Won’t

There are genuine technical approaches to this. Separating feedback signals by user cohort and weighting them differently is one. Maintaining separate fine-tuned variants for different use-case profiles is another. Running continuous benchmarks on versioned model snapshots against fixed test suites would at least make the degradation visible.
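
As a sketch of the first idea, cohort-weighted feedback can be as simple as rescaling each rating so that every cohort contributes a chosen share of the total weight. The cohort labels, the ratings, and the 50/50 target below are all assumptions made for the example. How a real provider would assign users to cohorts is a separate and harder problem.

```python
from collections import Counter


def cohort_weights(feedback, target_share):
    """Per-cohort weights so each cohort's total weight matches its target share."""
    counts = Counter(cohort for cohort, _ in feedback)
    n = len(feedback)
    return {cohort: target_share[cohort] * n / counts[cohort] for cohort in counts}


def weighted_mean(feedback, weights):
    """Weighted mean rating, where each item is (cohort, rating)."""
    numerator = sum(weights[cohort] * rating for cohort, rating in feedback)
    denominator = sum(weights[cohort] for cohort, _ in feedback)
    return numerator / denominator


# Hypothetical ratings: 1 = thumbs up, 0 = thumbs down.
feedback = (
    [("casual", 1)] * 90 + [("casual", 0)] * 5
    + [("expert", 1)] * 1 + [("expert", 0)] * 4
)

uniform = {"casual": 1.0, "expert": 1.0}
balanced = cohort_weights(feedback, {"casual": 0.5, "expert": 0.5})

print(f"raw mean:      {weighted_mean(feedback, uniform):.3f}")
print(f"balanced mean: {weighted_mean(feedback, balanced):.3f}")
```

The raw mean hides the expert cohort almost entirely. The balanced mean surfaces it, at the cost of making the signal noisier, since a handful of expert ratings now carries half the weight.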

These approaches see little investment, partly because of technical complexity and partly because of incentive structure. A provider who documents that their model got worse at expert-level tasks between Q1 and Q2 is taking on reputational risk. The incentive is to aggregate metrics in ways that show improvement or at least stability, and to roll out changes without detailed changelogs. This is not unique to AI companies. It is a standard product management posture.

The honest answer is that providers who serve both mass-market and expert use cases are facing a genuine product segmentation problem, and most of them are handling it by making implicit choices that favor the larger user base without acknowledging the tradeoff.

What This Means

If you are building seriously on top of AI APIs, a few things follow from this analysis.

First, version lock matters more than it’s given credit for. If a specific model version is working well for your use case, pinning to it and treating upgrades as a deliberate migration decision is not paranoia. It is basic engineering discipline.
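
In code, pinning can be enforced rather than merely intended. The sketch below assumes a hypothetical naming scheme in which floating aliases end in `-latest` or `-stable` and dated snapshots do not. Real providers use their own conventions, so the suffix list and the model identifier here are placeholders.

```python
from dataclasses import dataclass

# Hypothetical alias suffixes; substitute your provider's actual conventions.
FLOATING_SUFFIXES = ("-latest", "-stable")


@dataclass(frozen=True)
class ModelConfig:
    model_id: str
    temperature: float = 0.0

    def __post_init__(self):
        # Refuse identifiers that can silently change what they point to.
        if self.model_id.endswith(FLOATING_SUFFIXES):
            raise ValueError(
                f"{self.model_id!r} is a floating alias; pin a dated snapshot instead"
            )


# Placeholder identifier, not a real model name.
PINNED = ModelConfig(model_id="example-model-2024-01-15")
print(PINNED)
```

Keeping the pinned identifier in one frozen config object means an upgrade shows up as a reviewable diff instead of happening implicitly.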

Second, your own evaluation suite is not optional. Relying on the provider’s benchmarks to tell you whether the model is good for your task is like relying on a restaurant’s Yelp average to tell you whether they can handle your food allergy. The aggregate signal doesn’t tell you what you need to know. Build a test suite that reflects your actual use case and run it every time you update anything.
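
A minimal version of such a suite might look like the following. Everything here is illustrative: `fake_model` stands in for a real API call, the three cases are toy examples, and the 0.02 tolerance is an arbitrary choice. The point is the shape: fixed cases, a pass rate, and a comparison against a recorded baseline.

```python
from typing import Callable

# Each case pairs a prompt with a check on the model's output.
EvalCase = tuple[str, Callable[[str], bool]]


def run_suite(model: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Return the fraction of cases whose check passes."""
    passed = sum(1 for prompt, check in cases if check(model(prompt)))
    return passed / len(cases)


def is_regression(score: float, baseline: float, tolerance: float = 0.02) -> bool:
    """Flag a score that falls below the baseline by more than the tolerance."""
    return score < baseline - tolerance


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call; replace with your own client code."""
    answers = {"2+2=": "4", "Capital of France?": "Paris"}
    return answers.get(prompt, "I am not sure")


CASES: list[EvalCase] = [
    ("2+2=", lambda out: out.strip() == "4"),
    ("Capital of France?", lambda out: "Paris" in out),
    ("Reverse 'abc'", lambda out: out.strip() == "cba"),
]

score = run_suite(fake_model, CASES)
print(f"pass rate: {score:.2f}")
print(f"regression vs. baseline 1.00: {is_regression(score, baseline=1.0)}")
```

Real checks are rarely this clean, since many tasks need fuzzy or rubric-based scoring, but even a small suite of exact-match cases drawn from your own workload says more about your use case than an aggregate benchmark does.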

Third, the gap between how these systems perform in demos and how they perform in production tends to widen over time, not narrow. The demo is run on the best current model, with careful prompting, on a favorable task distribution. Production involves all the messy edge cases that the demo glossed over, running on a model that is being continuously adjusted by signals that have nothing to do with your use case.

The underlying models are genuinely impressive, and they are improving on many dimensions. But deployment at scale introduces dynamics that the benchmark numbers don’t capture. Understanding those dynamics is a prerequisite for building anything that has to work reliably, not just impressively.