You ask an AI the same question twice. You get two different answers. Most explanations stop at “it’s random by design” and leave you there. That’s true but incomplete. Randomness is one knob on a much larger mixing board, and understanding the full board makes you a significantly better prompt writer.

1. Temperature Is the Famous Culprit, But It’s Just the Start

Temperature is a parameter that controls how readily an AI model samples lower-probability tokens when generating text. At a temperature of 0, the model picks the most statistically likely next token at each step. At a higher temperature, say 0.8 or 1.0, the probability distribution flattens, so the model reaches for less expected words more often, which produces more varied and often more creative output.

This is worth understanding because you can often control it. Many APIs expose temperature directly. OpenAI’s API, for instance, lets you set it between 0 and 2. If you need consistent, repeatable outputs, lower it, keeping in mind that a low temperature reduces variation but does not make an answer more accurate, and that some hosted APIs do not guarantee identical outputs even at 0. If you’re generating creative copy where variety is good, raise it. The randomness is a deliberate design choice, not a flaw in the system.
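To make the effect concrete, here is a minimal sketch of temperature-scaled sampling over a toy set of scores. The function names and the example logits are made up for illustration, and real inference stacks typically add other controls (such as top-p) that are not shown here.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities, rescaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be > 0; handle 0 as greedy argmax")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(logits, temperature, rng=random):
    """Pick a token index: argmax at temperature 0, weighted draw otherwise."""
    if temperature == 0:
        return max(range(len(logits)), key=lambda i: logits[i])
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]

# Hypothetical scores for three candidate tokens.
logits = [2.0, 1.0, 0.1]
for t in (0.2, 1.0, 2.0):
    print(t, [round(p, 3) for p in softmax_with_temperature(logits, t)])
```

Running it shows the top candidate's share shrinking as the temperature rises, which is the "reaching for less expected words" behavior described above.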

2. Your Prompt Is Underspecified (and the Model Is Filling the Gaps)

AI models usually don’t ask clarifying questions before they answer. They infer intent from whatever you give them, then commit to an interpretation. Ask the same ambiguous question twice and the model may pick a different interpretation each time.

“Write a summary of this document” is underspecified. Summary for whom? How long? What level of technical detail? The model makes those calls. If you want consistent output, you need to specify the things you’d normally leave implicit. Think of it less like talking to a colleague who knows your context and more like writing a legal brief where every assumption has to be stated.
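One way to force yourself to state those assumptions is to build the prompt from explicit fields. The sketch below is only an illustration: `build_summary_prompt` and its parameters are hypothetical, and the fixed format line is one possible choice, not a recommendation from any particular provider.

```python
def build_summary_prompt(document, audience, max_words, technical_level):
    """Spell out the choices a bare 'summarize this' would leave to the model."""
    return (
        f"Summarize the document below for {audience}.\n"
        f"Length: at most {max_words} words.\n"
        f"Technical detail: {technical_level}.\n"
        "Format: a single paragraph, no bullet points.\n\n"
        f"Document:\n{document}"
    )

prompt = build_summary_prompt(
    document="(paste the document text here)",
    audience="a non-technical executive",
    max_words=150,
    technical_level="no jargon; define any acronym on first use",
)
print(prompt)
```

Because every argument is required, the function cannot be called without deciding who the summary is for, how long it is, and how technical it should be.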

3. Context Window Position Changes What the Model Weighs

Research on large language models has found that they don’t treat all parts of their context window equally. The phenomenon, sometimes called “lost in the middle,” describes how models tend to weight information at the beginning and end of a long prompt more heavily than information in the middle. If you have a long system prompt followed by lots of text, the model may effectively underweight something you placed in the middle.

This matters practically: if you’re pasting in a long document and asking questions about it, the section you care about most might be getting less attention than you think. Restructure your prompt so the most important instructions and the most relevant text are near the top or the bottom, not buried.
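A minimal sketch of that restructuring, assuming you already know which passage matters most: put the instruction and the key passage first, the background material in the middle, and repeat the instruction at the end. The function name and section labels are hypothetical.

```python
def assemble_prompt(instruction, key_passage, background_passages):
    """Place the instruction and key text at the edges of the prompt."""
    parts = [
        f"Instruction: {instruction}",
        f"Most relevant text:\n{key_passage}",
    ]
    # Less critical material goes in the middle, where it matters least
    # if the model underweights it.
    for i, passage in enumerate(background_passages, start=1):
        parts.append(f"Background {i}:\n{passage}")
    # Repeat the instruction so it also sits at the end of the context.
    parts.append(f"Reminder of the instruction: {instruction}")
    return "\n\n".join(parts)

prompt = assemble_prompt(
    instruction="List the termination conditions in the contract.",
    key_passage="(the termination clause)",
    background_passages=["(definitions section)", "(payment terms)"],
)
print(prompt)
```

Whether repeating the instruction helps depends on the model and the prompt length, so it is worth testing on your own inputs.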

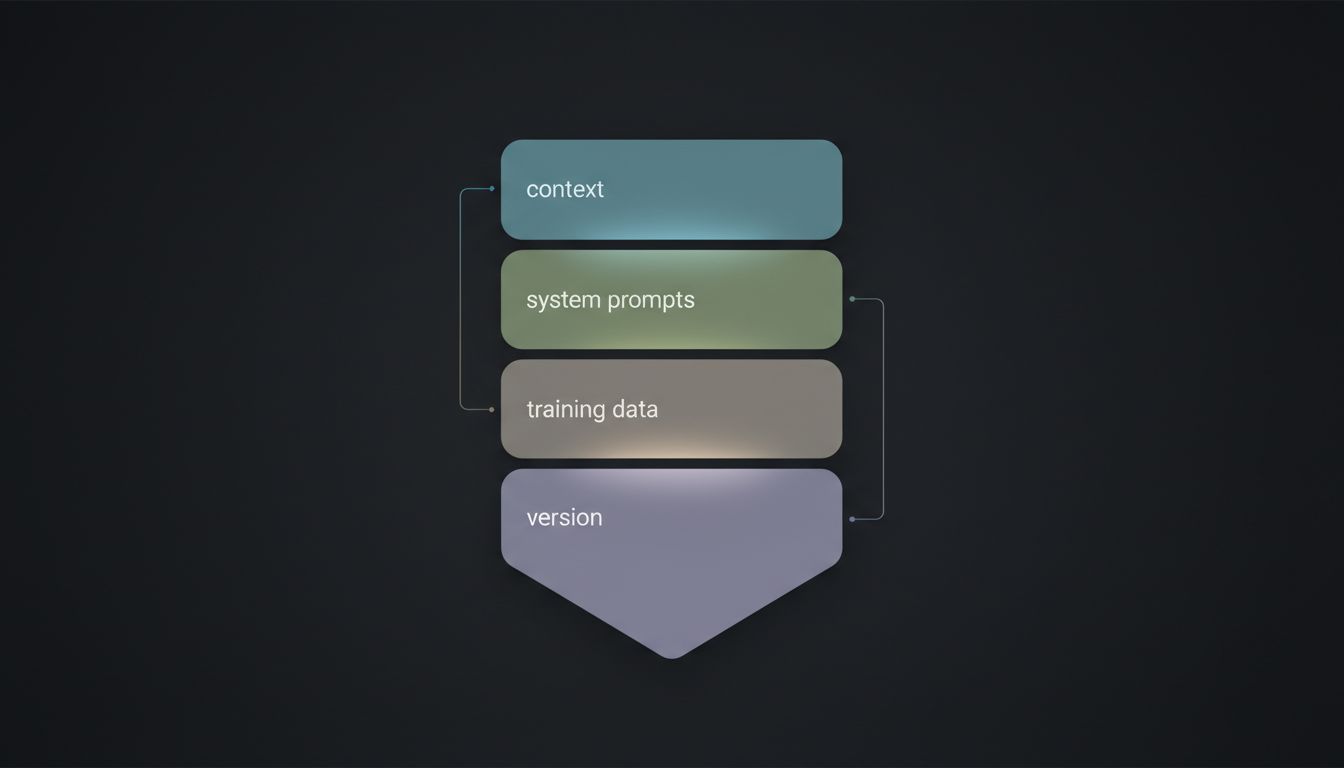

4. Model Versions Change Underneath You

When a company updates a model, the old version and the new version are different neural networks. They may share an architecture and a training approach, but their weights are different. This means “GPT-4” in January and “GPT-4” in September might give meaningfully different answers to the same question, because you’re talking to different models that happen to share a name.

This catches a lot of teams off guard when they build workflows around a model’s behavior. If you need stability across time, pin to a specific model version. OpenAI, Anthropic, and Google all offer versioned model identifiers precisely for this reason. Using the generic “latest” alias is convenient until it isn’t.
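A small guard in your configuration code can catch a floating alias before it ships. The sketch below is a heuristic, not any provider's rule: it assumes pinned identifiers end in a date-like or numeric snapshot suffix, and the model names in the example are invented. Check your provider's documentation for its actual naming scheme.

```python
import re

# Assumption: a pinned snapshot ends in a date-like or numeric version suffix,
# e.g. "-2024-01-15", "-20240115", or "-0115". Adjust for your provider.
SNAPSHOT_SUFFIX = re.compile(r"-(\d{4}-\d{2}-\d{2}|\d{8}|\d{3,4})$")

def looks_pinned(model_id):
    """Return True if the identifier appears to name a fixed snapshot."""
    if "latest" in model_id.lower():
        return False
    return bool(SNAPSHOT_SUFFIX.search(model_id))

# Hypothetical identifiers, for illustration only.
for model_id in ("example-model-2024-01-15", "example-model-latest", "example-model"):
    status = "pinned" if looks_pinned(model_id) else "floating"
    print(f"{model_id}: {status}")
```

A check like this fits naturally in a startup assertion or a CI test, so a floating identifier fails loudly instead of drifting silently.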

5. System Prompts and Deployment Context Are Often Invisible to You

When you use an AI through a product interface rather than directly via an API, there’s almost always a system prompt running in the background that you can’t see. That system prompt shapes tone, constrains topics, and sometimes strongly biases outputs toward certain formats or positions. The same underlying model will behave quite differently depending on what’s in that hidden layer.

This is why asking ChatGPT through the consumer interface and asking GPT-4 through the API can feel like talking to different assistants, even when the underlying model is the same. The consumer product has guardrails, personas, and formatting instructions baked in, and it typically does not let you set sampling parameters such as temperature. If you’re comparing model outputs seriously, you need to control for this layer, which means using the API directly and being explicit about your own system prompt.
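Being explicit can be as simple as building every request from the same template. The sketch below only constructs a request body and sends nothing. The role-tagged message list mirrors a shape used by several chat APIs, but the exact field names are an assumption to verify against your provider's documentation, and the model identifier is invented.

```python
def build_request(model, system_prompt, user_prompt, temperature=0.0):
    """Build a request body with every controllable layer stated explicitly."""
    return {
        "model": model,              # a pinned identifier, not a floating alias
        "temperature": temperature,  # fixed so runs are comparable
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_prompt},
        ],
    }

request = build_request(
    model="example-model-2024-01-15",  # hypothetical identifier
    system_prompt="You are a concise assistant. Answer in plain text.",
    user_prompt="Explain what a context window is in two sentences.",
)
print(request)
```

Holding the system prompt, model, and temperature constant means that any remaining difference between two outputs comes from the model or the user prompt, not from a hidden layer.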

6. The Model’s Training Data Has Real Gaps and Seams

Large language models are trained on massive but finite datasets with a cutoff date. Within that data, coverage is uneven. Topics that appear frequently in training data get more confident, more consistent treatment. Topics that appear rarely, or that appeared in inconsistent ways across sources, produce more variable outputs.

This is why an AI might give you crisp, consistent answers about Python syntax (massively overrepresented in training data) and noticeably wobbly answers about a niche regulation or an obscure historical event. It’s not being evasive. It’s generating from a thinner slice of training signal, which means more variance. When you notice inconsistency in a domain, treat it as a signal to verify independently rather than assuming the model has authority it may not actually have.
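One rough way to notice that wobble is to ask the same question several times and measure how often the answers agree. The sketch below assumes you have already collected the answers as strings; `agreement_rate` is a hypothetical helper, and exact-match comparison after light normalization only suits short, factual answers.

```python
from collections import Counter

def agreement_rate(answers):
    """Fraction of answers that match the most common (normalized) answer."""
    if not answers:
        raise ValueError("need at least one answer")
    # Normalize case and whitespace so trivial differences don't count.
    normalized = [" ".join(a.lower().split()) for a in answers]
    most_common_count = Counter(normalized).most_common(1)[0][1]
    return most_common_count / len(normalized)

# Made-up answers from five hypothetical runs of the same question.
answers = ["1987", "1987", "1989", " 1987", "1991"]
print(f"agreement: {agreement_rate(answers):.0%}")
```

A low agreement rate does not tell you which answer is right. It only flags the question as one to verify against an independent source.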

What to Actually Do With This

These six factors give you a real checklist. Before you conclude that an AI is unreliable, ask: Did I set temperature appropriately for this task? Was my prompt specific enough to constrain interpretation? Is the information I care about buried in the middle of a long context? Am I working with a pinned model version or a floating one? Is there an invisible system prompt shaping outputs? Is this a topic with thin training coverage?

Most inconsistency is traceable to one of these causes. And most of them are fixable on your end without waiting for the model to improve.