You ask your AI assistant to summarize a report. It gives you five bullet points. You ask again, word for word, and you get four bullet points in a completely different order with slightly different phrasing. You didn’t change anything. The AI did. If you’ve ever felt like your AI tools have a short memory or a loose relationship with consistency, you’re not imagining it. The variation is real, it’s intentional, and once you understand why it happens, you can actually control it.

This connects to a broader truth about how AI products are designed. Like a lot of tech behavior that seems random or broken, there’s a deliberate architecture underneath. If you’ve ever read about how AI chatbots are trained to say they don’t know something, you’ll recognize the same principle at work here: what looks like a flaw is usually a considered engineering decision.

The Number Behind the Randomness

Every major AI language model has a setting called temperature. It’s a number, usually ranging from 0 to 2 (some providers cap it at 1), and it controls how much randomness gets injected into the model’s output. Think of it like a dial that goes from “precise and predictable” at one end to “creative and unpredictable” at the other.

Here are the basic mechanics. When an AI generates text, it doesn’t pick each word randomly from a dictionary. It calculates probabilities across thousands of possible next words (strictly speaking, tokens, which can be whole words or word fragments) and then selects from that distribution. A low temperature sharpens the distribution, so the model almost always picks the highest-probability word. A high temperature flattens it, so less likely words get a real shot at being chosen.
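A minimal sketch of that sharpening and flattening, assuming the common approach of dividing each word’s raw score by the temperature before converting scores to probabilities. The three candidate words and their scores are made up for illustration, and real models work over far larger vocabularies.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities, scaled by a positive temperature."""
    if temperature <= 0:
        raise ValueError("this sketch only handles temperature > 0")
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max so exp() cannot overflow
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next words.
candidates = ["report", "summary", "banana"]
logits = [2.0, 1.0, 0.1]

for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, dict(zip(candidates, (round(p, 3) for p in probs))))
```

At the lowest setting nearly all the probability lands on the top-scoring word; at the highest, the unlikely word gets a noticeably larger share.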

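At temperature 0, the model always takes the single most likely word, so you’ll get the same answer, or very nearly the same one, every time you ask the same question. (Some systems still show small differences even at 0.) At temperature 1 or higher, every response is a fresh roll of the dice.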
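To make the contrast concrete, here is a small sketch that picks a next word repeatedly at two settings. It assumes temperature 0 is treated as “always take the top-scoring word,” which is a common convention since dividing by zero is undefined. The words, scores, and function name are hypothetical.

```python
import math
import random

def pick_next_word(words, logits, temperature, rng):
    """Pick one word: greedy at temperature 0, weighted random otherwise."""
    if temperature == 0:
        # Greedy choice: always the highest-scoring word.
        best = max(range(len(logits)), key=lambda i: logits[i])
        return words[best]
    scaled = [score / temperature for score in logits]
    peak = max(scaled)
    weights = [math.exp(s - peak) for s in scaled]
    return rng.choices(words, weights=weights, k=1)[0]

# Hypothetical candidates with fairly close scores.
words = ["five", "four", "several"]
logits = [1.5, 1.2, 0.8]
rng = random.Random()

greedy = {pick_next_word(words, logits, 0, rng) for _ in range(20)}
sampled = {pick_next_word(words, logits, 1.0, rng) for _ in range(200)}
print("temperature 0:", greedy)
print("temperature 1:", sampled)
```

The first set only ever contains the top word, while the second will almost certainly contain several different words.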

Most consumer AI tools set temperature somewhere in the middle by default, which is why you see variation but not total chaos.

Why High Temperature Isn’t a Bug

You might wonder why anyone would want randomness baked into a tool meant to help you work. The answer is that deterministic (always-the-same) outputs are actually terrible for a lot of use cases.

If you’re using AI to brainstorm ideas, write marketing copy, draft multiple versions of an email, or generate creative content, you want variation. You want the model to explore the space of possible answers, not just hand you the statistical average every time. High temperature is what lets you generate ten different taglines and pick the best one instead of getting the same tagline ten times.

This is the same logic behind why the most successful apps got big by solving problems nobody was willing to admit they had. The “problem” of AI inconsistency that users complain about is actually the solution to a different problem: creative monotony. The variation is the feature.

That said, high temperature is genuinely unhelpful when you need reliable, factual, or structured output. If you’re asking an AI to extract data from a document, translate text accurately, or follow a strict format, randomness is your enemy.

How to Control Temperature Yourself

If you’re using a developer API (like OpenAI’s, Anthropic’s, or Google’s), you can set temperature directly in your API call. It looks something like this:

"temperature": 0.2

Lower numbers for precision. Higher numbers for creativity. It’s one of the simplest and most powerful levers available to you.
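For context, here is roughly where that field sits in a request. This is a sketch of a chat-style JSON body, not any one provider’s exact schema: the `model` value is a placeholder, the `build_request` helper is hypothetical, and field names and required parameters vary between APIs, so check your provider’s reference before sending anything.

```python
import json

def build_request(prompt, temperature):
    """Assemble a chat-style request body with an explicit temperature."""
    return {
        "model": "example-model",  # placeholder, not a real model name
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
    }

payload = build_request("Summarize this report in five bullet points.", 0.2)
print(json.dumps(payload, indent=2))
```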

If you’re using a consumer product like ChatGPT, Claude, or Gemini and don’t have direct access to the temperature setting, you can approximate the effect through your prompts:

- To reduce variation: Add phrases like “be consistent,” “use the exact format below,” or “respond the same way each time.” Giving the model a strict structure to follow narrows the probability distribution in practice.

- To increase variation: Ask for multiple versions explicitly. “Give me five different ways to phrase this” encourages the model to spread across its probability space.

- To anchor outputs: Give the model examples of exactly what you want. Few-shot prompting (showing the model two or three examples of ideal responses) acts almost like reducing temperature because you’re loading the probability distribution toward a specific style.
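If you do have API access, the few-shot idea can be sketched as a list of messages in which each example is a question paired with the ideal answer. The `system`, `user`, and `assistant` role names follow a widely used chat convention, but the helper name and the sample content are assumptions made for illustration.

```python
def build_few_shot_messages(instruction, examples, new_input):
    """Place worked examples ahead of the real request to anchor the style."""
    messages = [{"role": "system", "content": instruction}]
    for sample_input, ideal_output in examples:
        messages.append({"role": "user", "content": sample_input})
        messages.append({"role": "assistant", "content": ideal_output})
    messages.append({"role": "user", "content": new_input})
    return messages

# Hypothetical examples showing the exact format we want back.
examples = [
    ("Invoice 1041, due March 3, total $250", "id=1041; due=03-03; total=250"),
    ("Invoice 1042, due April 9, total $90", "id=1042; due=04-09; total=90"),
]
messages = build_few_shot_messages(
    "Extract invoice fields using the exact format shown.",
    examples,
    "Invoice 1043, due May 1, total $410",
)
for message in messages:
    print(message["role"], "->", message["content"])
```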

The Practical Framework for Choosing Your Settings

Here’s a simple framework you can apply right now.

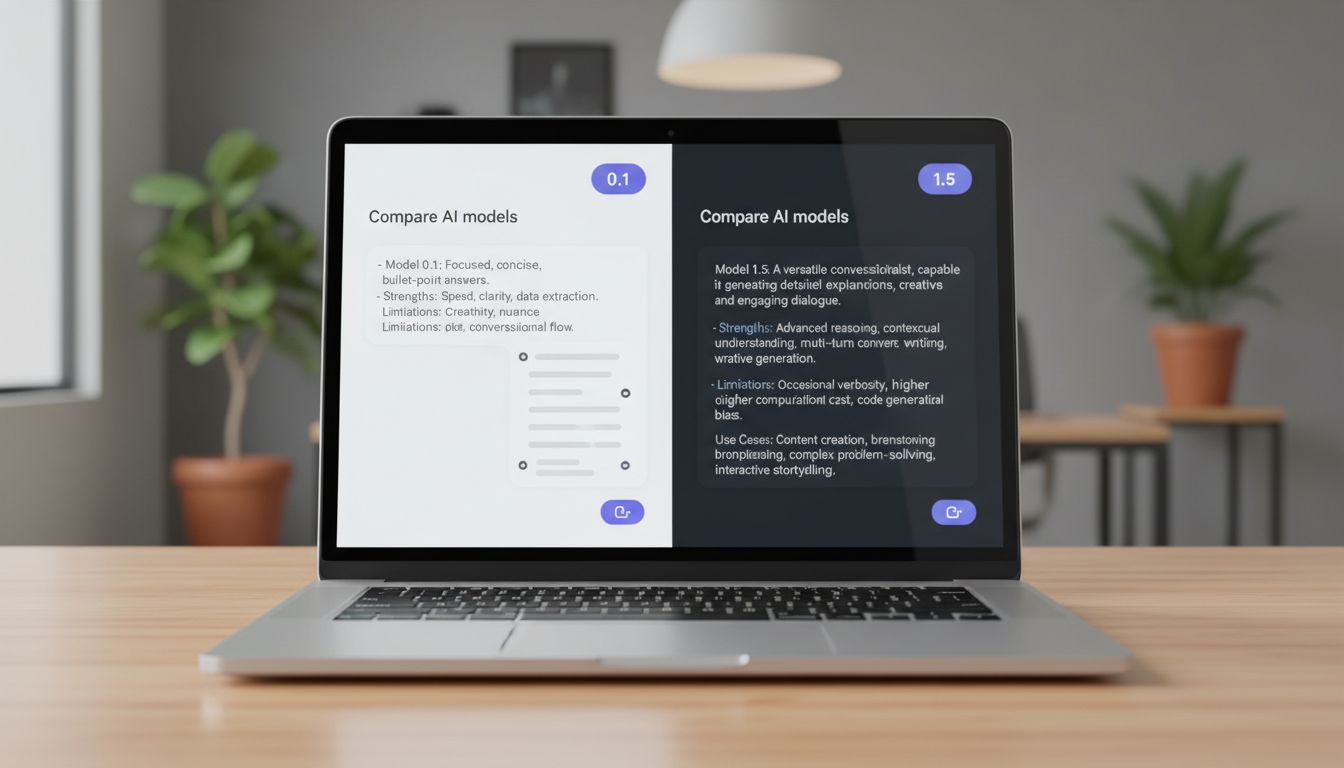

Use low temperature (0 to 0.3) when:

- Extracting specific information from a document
- Generating code that needs to be syntactically correct
- Producing structured data (JSON, tables, lists)
- Answering factual questions where accuracy matters
- Following a strict template

Use medium temperature (0.4 to 0.7) when:

- Writing professional emails or summaries
- Explaining concepts to different audiences
- Editing or improving existing text
- General Q&A where some nuance is welcome

Use high temperature (0.8 to 1.5) when:

- Brainstorming ideas or product names
- Writing creative content, stories, or marketing copy
- Generating diverse options to choose from
- Exploring unconventional angles on a problem
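If you are wiring this framework into a script, one way to encode it is a simple lookup table. The ranges below come straight from the three tiers above; the task labels, the function name, and the choice to fall back to the medium range for unknown tasks are assumptions made for this sketch.

```python
# Ranges taken from the framework above; task labels are illustrative.
TEMPERATURE_RANGES = {
    "extraction": (0.0, 0.3),
    "code": (0.0, 0.3),
    "structured_data": (0.0, 0.3),
    "email": (0.4, 0.7),
    "explanation": (0.4, 0.7),
    "editing": (0.4, 0.7),
    "brainstorm": (0.8, 1.5),
    "creative_writing": (0.8, 1.5),
}

def suggest_temperature_range(task, default=(0.4, 0.7)):
    """Return the (low, high) range for a task, or the medium range if unknown."""
    return TEMPERATURE_RANGES.get(task, default)

for task in ("extraction", "email", "brainstorm", "something_else"):
    print(task, suggest_temperature_range(task))
```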

If you’re building AI into a workflow or product, remember that AI chatbots give different answers to the same question because of a number you can control. Understanding that number is the difference between a tool that frustrates users and one that feels reliable.

Two More Variables Worth Knowing

Temperature isn’t the only knob that affects output variation. Two others worth knowing about are Top-P and Top-K.

Top-P (also called nucleus sampling) limits the model to the smallest set of most likely words that together account for a certain share of the probability mass. A Top-P of 0.9 means the model ranks the candidates from most to least likely, keeps adding them until their combined probability reaches 90%, and cuts off the long tail of unlikely options.
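A minimal sketch of that cutoff, assuming the probabilities are already known and that the surviving words are rescaled to sum to 1 before sampling. The word list and numbers are invented for illustration.

```python
def top_p_filter(probs, p):
    """Keep the most likely words until their combined probability reaches p."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept = {}
    cumulative = 0.0
    for word, prob in ranked:
        kept[word] = prob
        cumulative += prob
        if cumulative >= p:
            break
    total = sum(kept.values())
    # Rescale so the surviving probabilities sum to 1 again.
    return {word: prob / total for word, prob in kept.items()}

# Hypothetical next-word probabilities.
probs = {"the": 0.5, "a": 0.3, "this": 0.15, "banana": 0.05}
print(top_p_filter(probs, 0.9))
```

With these numbers, the three most likely words are kept and “banana” is dropped, because the first three already cover more than 90% of the probability.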

Top-K simply caps how many word options the model considers at each step. A Top-K of 50 means it only ever chooses from the 50 most likely next words.
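The same idea as a short sketch, again assuming known probabilities and rescaling the survivors so they sum to 1. The words and numbers are made up, and a K of 2 is used only to keep the example small.

```python
def top_k_filter(probs, k):
    """Keep only the k most likely words and rescale their probabilities."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)[:k]
    total = sum(prob for _, prob in ranked)
    return {word: prob / total for word, prob in ranked}

# Hypothetical next-word probabilities.
probs = {"the": 0.5, "a": 0.3, "this": 0.15, "banana": 0.05}
print(top_k_filter(probs, 2))
```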

Many developer APIs let you adjust one or both of these alongside temperature, and the settings interact with each other. In practice, adjusting temperature is the most intuitive starting point. Once you’re comfortable there, you can experiment with Top-P to fine-tune, ideally changing one setting at a time so you can tell which one caused the difference.

The core insight to take away is this: AI inconsistency isn’t a reliability problem waiting to be fixed. It’s a dial waiting to be set. Now that you know it exists, you can stop being surprised by variation and start using it strategically. The AI isn’t being random. It’s being exactly as random as someone decided it should be, and with the right settings, that someone can be you.