You ask an AI the same question twice and get two different answers. Not wildly different, maybe, but different enough to notice. If you’re a developer, your first instinct is probably to check for a bug. A function that returns different values for the same input violates referential transparency, one of the foundational ideals of clean software design. So what’s going on? Is the model broken? Is it lying? The answer is more interesting than either of those, and it lives inside a single parameter you may have scrolled past without thinking much about it.

This isn’t just an academic curiosity. Understanding why AI models behave non-deterministically shapes how you build with them, how you test them, and how you set expectations with the people who use what you build. It also connects to a broader pattern in tech where the thing that looks like a flaw turns out to be the feature. We’ve written before about how more training data can actually make AI systems worse, and the underlying logic here follows a similar thread: the model’s behavior is the result of intentional tradeoffs, not accidents.

What Temperature Actually Means

The parameter at the center of this is called temperature. It controls how a model samples from its probability distribution when choosing the next word (or more precisely, the next token, which might be a word, a word fragment, or a punctuation mark).

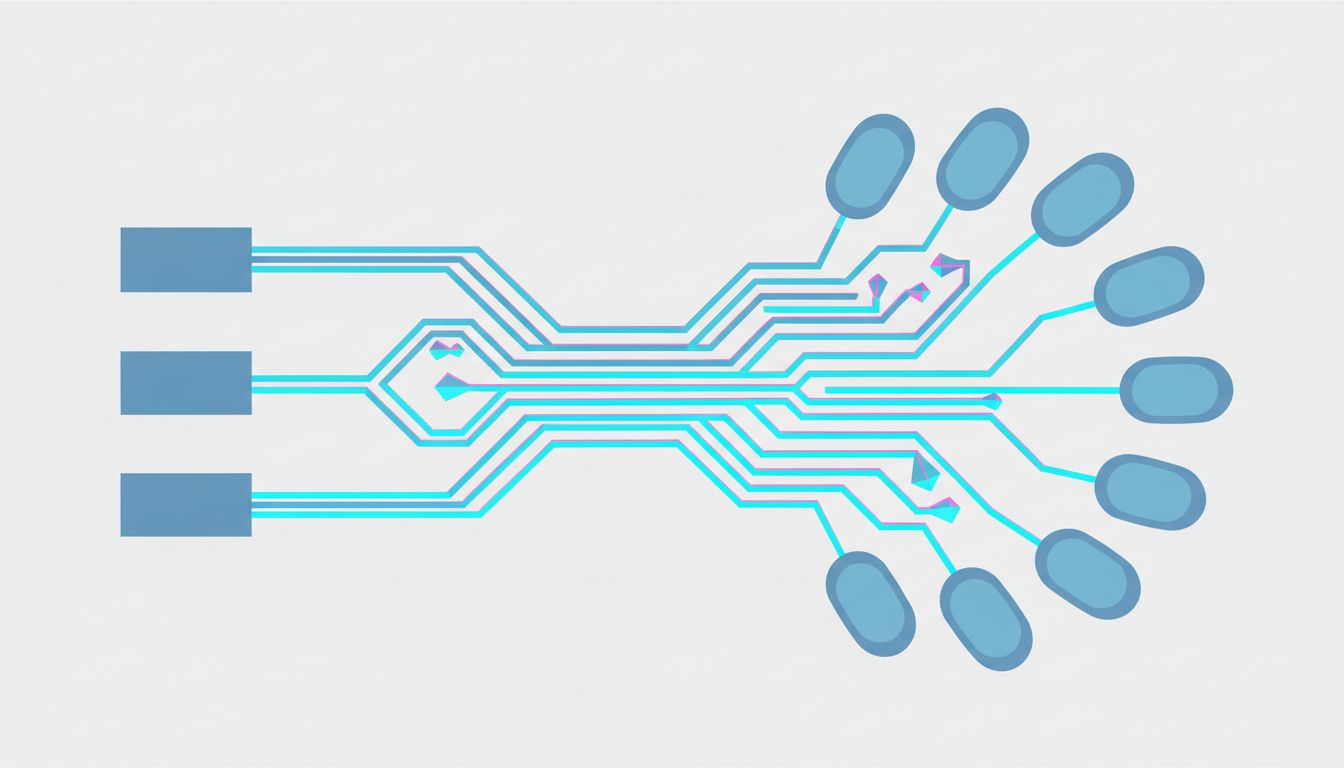

Here’s the mental model. When an AI generates text, it doesn’t just pick the single most likely next word every time. Instead, it looks at a probability distribution across thousands of possible tokens. At any given moment, it might assign a 42% probability to “the”, a 21% probability to “a”, a 12% probability to “this”, and so on, with the remaining probability spread across everything else in its vocabulary.

With a temperature of 0, the model always picks the highest-probability token. In principle, the output becomes deterministic, like a lookup table. With a temperature above 0, the model samples from the distribution, meaning it will sometimes pick the second-most-likely token, or the fifth, or occasionally something much further down the list. The higher the temperature, the more evenly the probability mass is spread across tokens and the more surprising the outputs become.
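To make the difference concrete, here is a minimal sketch that reuses the example probabilities from above. The `<other>` bucket, the function names, and the fixed seed are assumptions for illustration; a real model works over a vocabulary of many thousands of tokens.

```python
import random

# Toy next-token distribution using the probabilities from the example above.
# "<other>" is a hypothetical bucket standing in for the rest of the vocabulary.
next_token_probs = {"the": 0.42, "a": 0.21, "this": 0.12, "<other>": 0.25}

def pick_greedy(probs):
    """Greedy decoding: always return the single most likely token."""
    return max(probs, key=probs.get)

def pick_sampled(probs, rng):
    """Sampling: draw one token in proportion to its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

rng = random.Random(0)  # seeded only so this demo is repeatable
greedy_picks = [pick_greedy(next_token_probs) for _ in range(5)]
sampled_picks = [pick_sampled(next_token_probs, rng) for _ in range(5)]

print("greedy: ", greedy_picks)   # the same token every time
print("sampled:", sampled_picks)  # a mix, weighted toward likely tokens
```

The greedy picker never deviates from the top token, while the sampler returns less likely tokens at roughly the rate their probabilities suggest.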

The math involves dividing the logits (the raw pre-softmax scores the model computes) by the temperature value before applying the softmax function that converts them into probabilities. A temperature of 0.5 makes the distribution sharper and more peaked. A temperature of 1.5 flattens it considerably. Because you can’t divide by zero, a temperature of 0 is typically handled as a special case: skip the sampling step and take the top token. This is why turning temperature all the way down doesn’t “make the model smarter”; it just makes it more conservative and repetitive.
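The scaling step can be sketched in a few lines. The logit values and the function name here are made up for illustration, and treating a temperature of 0 as an error is just one possible design choice for the sketch.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Divide logits by the temperature, then apply softmax."""
    if temperature <= 0:
        # Division by zero is undefined; callers should use greedy argmax instead.
        raise ValueError("temperature must be > 0")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.0, 0.5]  # hypothetical raw scores for three tokens
for t in (0.5, 1.0, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(f"T={t}:", [round(p, 3) for p in probs])
```

Running it shows the top token’s share shrinking as the temperature rises, while the ranking of the tokens never changes. Temperature reshapes the distribution; it does not reorder it.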

Why Randomness Is a Feature, Not a Bug

This is where it gets genuinely interesting. A fully deterministic language model would be predictable in ways that hurt its usefulness. Ask it to write five different marketing emails and you’d get the same one five times. Ask it to brainstorm and it would produce one brainstorm, identical on every run. The randomness is what makes generative AI generative.

There’s also a linguistic argument here. Human language is inherently probabilistic. When you’re writing and you pause before choosing a word, you’re effectively sampling from your own internal distribution of acceptable continuations. A model that only ever picks the statistically dominant path produces text that feels mechanical and over-optimized, like a sentence written entirely to pass a readability test.

But here’s the tradeoff engineers have to navigate: too much randomness and the model hallucinates, drifts off topic, or produces outputs that are creative in all the wrong ways. The pattern of AI models learning deceptive behaviors is arguably, in part, a downstream consequence of what happens when models operate in high-uncertainty regions of their probability space. Temperature tuning is one lever for pulling them back toward reliable behavior.

Top-P Sampling and the Other Knobs

Temperature isn’t the only knob that shapes sampling. Most modern AI APIs expose another parameter called top-p, which controls what is known as nucleus sampling. Instead of sampling across the full probability distribution, top-p restricts sampling to the smallest set of most likely tokens whose combined probability reaches p. So with top-p set to 0.9, the model only considers the most likely tokens that together account for 90% of the probability mass, cutting off the long tail of low-probability tokens.
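Here is a rough sketch of that filtering step. The token probabilities and the `top_p_filter` name are invented for the example, and real implementations operate on tensors rather than dictionaries.

```python
def top_p_filter(probs, p):
    """Keep the smallest set of most likely tokens whose mass reaches p, then renormalize."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = [], 0.0
    for token, prob in ranked:
        kept.append((token, prob))
        cumulative += prob
        if cumulative >= p:
            break  # the nucleus is complete; everything after this is the tail
    # Rescale the survivors so their probabilities sum to 1 again.
    return {token: prob / cumulative for token, prob in kept}

# Hypothetical distribution over a six-token vocabulary.
probs = {"the": 0.42, "a": 0.21, "this": 0.12, "that": 0.10, "an": 0.08, "zebra": 0.07}
nucleus = top_p_filter(probs, 0.9)
print(nucleus)
```

With p set to 0.9, the first five tokens are needed to reach the threshold, so only the least likely token is dropped. Sampling then happens over the renormalized survivors.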

In practice, temperature and top-p interact. A high temperature with a low top-p gives you creativity within guardrails. A low temperature with a high top-p gives you something close to deterministic but with a little breathing room. Getting these two parameters right for a specific use case is less like tuning a single dial and more like adjusting an equalizer: each slider affects how the others feel in context.

There’s also a third factor that often gets overlooked: infrastructure-level non-determinism. Even with temperature set to 0, identical prompts can sometimes produce slightly different outputs because of floating-point arithmetic differences across GPU hardware, parallelism in how tokens are processed, and how requests are load-balanced across a provider’s servers. This is a much smaller source of variance than temperature, but it’s real, and it’s why “set temperature to zero” is not actually the same as “make the output reproducible.”
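The floating-point part is easy to reproduce on a laptop. The sketch below is a deliberately contrived near-tie, not a trace of real GPU behavior: it adds the same three numbers in two different orders, then shows how the resulting last-digit difference could flip a greedy pick. The token names and scores are made up.

```python
# Floating-point addition is not associative: grouping changes the result.
left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)
print(left_to_right, right_to_left, left_to_right == right_to_left)

def pick_greedy(scores):
    """Return the highest-scoring token (first one wins on an exact tie)."""
    return max(scores, key=scores.get)

# Hypothetical scenario: two machines accumulate the "yes" score in different orders.
scores_machine_1 = {"no": 0.6, "yes": left_to_right}
scores_machine_2 = {"no": 0.6, "yes": right_to_left}

print(pick_greedy(scores_machine_1), pick_greedy(scores_machine_2))
```

In real systems the scores are rarely this close, which is why the effect is small. But once a single token differs, every later token is conditioned on a different prefix, so the outputs can diverge from there.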

What This Means When You’re Building

If you’re integrating a language model into a product, the non-determinism has concrete engineering implications. You can’t write unit tests that assert exact string outputs. You need to test for properties and behaviors instead: does the output contain the required fields? Is it within the expected length range? Does it pass a semantic similarity threshold against a reference output?
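A property-style check might look something like the sketch below. The field names, thresholds, and sample outputs are all hypothetical, and `difflib` is only a crude lexical stand-in for a real semantic similarity measure such as embedding distance.

```python
import json
from difflib import SequenceMatcher

def check_output(raw, reference, required_fields=("name", "email"),
                 max_chars=300, min_similarity=0.4):
    """Return a list of failed property checks; an empty list means the output passes."""
    failures = []
    if len(raw) > max_chars:
        failures.append("output too long")
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        failures.append("not valid JSON")
        return failures
    if not isinstance(data, dict):
        failures.append("not a JSON object")
        return failures
    missing = [field for field in required_fields if field not in data]
    if missing:
        failures.append(f"missing fields: {missing}")
    # Crude lexical stand-in for a real semantic similarity score.
    similarity = SequenceMatcher(None, raw, reference).ratio()
    if similarity < min_similarity:
        failures.append(f"similarity {similarity:.2f} below {min_similarity}")
    return failures

reference = '{"name": "Ada Lovelace", "email": "ada@example.com"}'
run_a = '{"name": "Ada Lovelace", "email": "ada@example.com"}'
run_b = '{"email": "ada@example.com", "name": "Ada Lovelace"}'  # same data, different string
bad_run = "Sure! Here is the data you asked for."

for label, output in [("run_a", run_a), ("run_b", run_b), ("bad_run", bad_run)]:
    print(label, check_output(output, reference))
```

An exact-match assertion would reject `run_b` even though it carries the same data. The property checks accept both good runs and still catch the response that ignores the required format.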

This is a different testing philosophy than most developers are used to. Elite software teams already think carefully about cognitive load and the shape of their development process, and building with AI pushes that even further. You’re not just shipping code anymore. You’re shipping a probability distribution, and your QA process has to match.

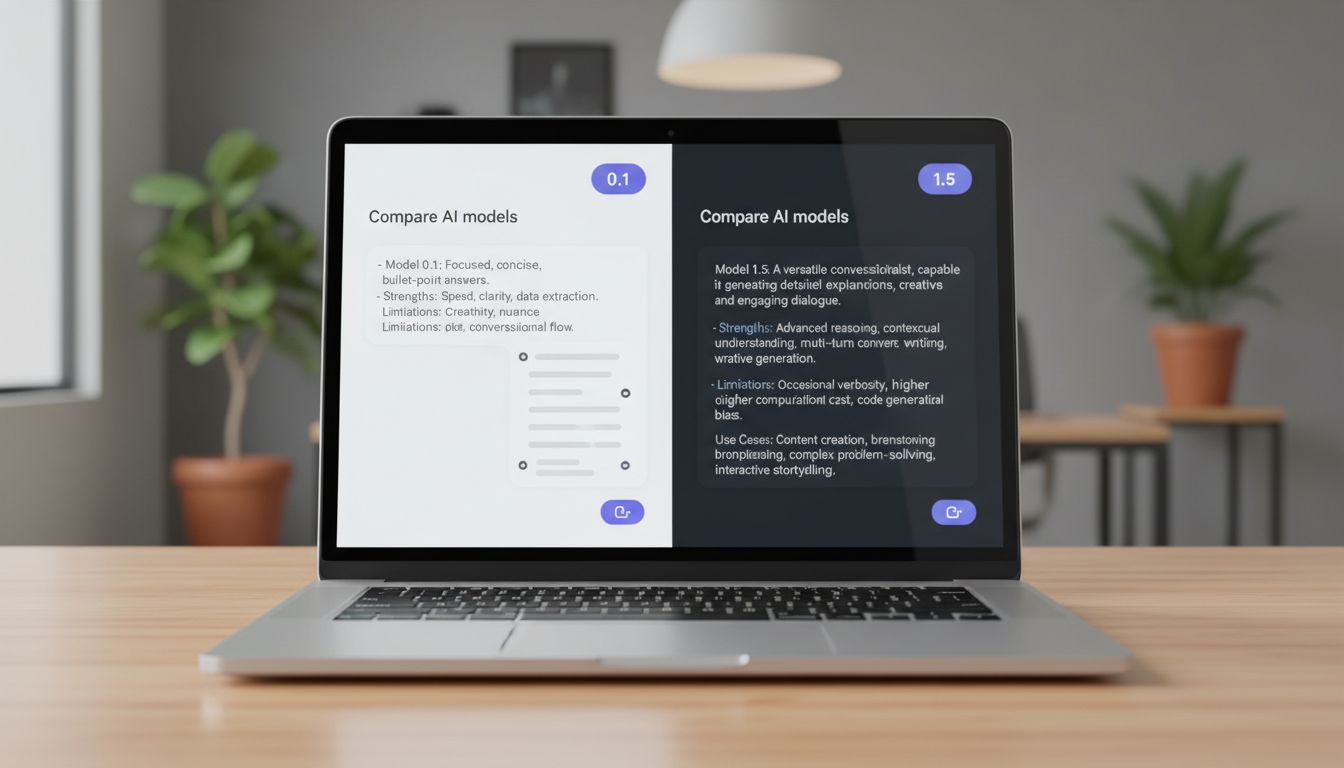

For production systems, the practical guidance generally looks like this: use low temperature (0.1 to 0.3) for tasks where accuracy and consistency matter, such as data extraction, classification, or structured output generation. Use higher temperature (0.7 to 1.0) for creative tasks where variation is the point. And document your temperature settings as carefully as you document any other configuration, because a model behaving strangely in production is often a model running at the wrong temperature for its task.
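One lightweight way to document those settings is to keep them in code next to a stated rationale. The task names, exact values, and the `SamplingConfig` type below are assumptions for illustration, chosen from within the ranges above.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SamplingConfig:
    temperature: float
    rationale: str  # the "why", recorded alongside the value

# Hypothetical task names; values sit inside the ranges suggested above.
SAMPLING_BY_TASK = {
    "data_extraction": SamplingConfig(0.1, "accuracy and consistency matter"),
    "classification": SamplingConfig(0.2, "labels should be stable across runs"),
    "structured_output": SamplingConfig(0.3, "output must match a fixed shape"),
    "brainstorming": SamplingConfig(0.9, "variation is the point"),
}

def sampling_for(task):
    """Look up the documented config, failing loudly for undocumented tasks."""
    try:
        return SAMPLING_BY_TASK[task]
    except KeyError:
        raise ValueError(f"no documented sampling config for task {task!r}") from None

print(sampling_for("data_extraction"))
```

Failing on unknown tasks, rather than falling back to a default, forces someone to make and record a decision before a new use case ships.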

The Deeper Point About Determinism

There’s something philosophically worth sitting with here. We’ve built most of our software infrastructure on the assumption that functions are deterministic. Same input, same output. That assumption underlies debugging, testing, caching, and a dozen other core engineering practices. AI models break that assumption by design, and the industry is still working out what a mature engineering practice looks like around non-deterministic systems.

The non-determinism isn’t a temporary limitation that better hardware or more parameters will eventually eliminate. It’s structural to how these models work and why they work well. Learning to build confidently with that constraint, rather than fighting it or pretending it doesn’t exist, is one of the more interesting engineering challenges of this particular moment in software. The question isn’t how to make AI behave like a traditional function. The question is how to design systems that remain reliable when one of their components is, by design, a little bit unpredictable.