What Attention Residue Actually Is

In 2009, organizational psychologist Sophie Leroy published research on something she called attention residue. The concept is straightforward once you hear it: when you switch from task A to task B, part of your cognitive resources stays anchored to task A. You are physically sitting at task B, but your brain hasn’t fully let go of the previous context. That leftover cognitive load is the residue.

Leroy’s experiments showed that people who switched tasks before fully completing the first one performed significantly worse on the new task. The incomplete task kept pulling at working memory, the mental scratchpad your brain uses to hold active information. The more urgent or unresolved the first task felt, the heavier the residue.

This isn’t a soft, hand-wavy productivity concept. Working memory has measurable capacity limits, which cognitive scientists often describe using George Miller’s classic formulation: roughly seven items, plus or minus two. Attention residue is essentially a memory leak in that system. You context-switch, and instead of deallocating the old task’s resources, they stay allocated, quietly consuming capacity you need for what’s in front of you.

The reason this matters specifically in tech is that software development, product design, and most technical knowledge work require holding large amounts of context simultaneously. A developer debugging a race condition might need to track five threads of execution, two possible state transitions, and the business logic that triggered the bug, all at once. That’s already pushing the limits of working memory. Any residue from a Slack thread they just answered is genuinely costly in a way it wouldn’t be for work with a shorter cognitive stack.

How the Research Evolved Beyond the Original Paper

Leroy’s work opened a door, and subsequent research walked through it in useful directions. One important finding: the residue isn’t fixed in size. It scales with how strongly you felt the need to finish the interrupted task. If you were deeply engaged and stopped mid-problem, the residue is heavier. If you had reached a natural stopping point, it’s lighter.

This has a practical implication that many companies haven’t absorbed yet. It’s not just about how often you switch tasks. It’s about where in the task’s arc you interrupt yourself. A developer who closes a feature branch at a good commit point and then answers email is in a very different cognitive state than one who stops mid-refactor to join a meeting.

Researchers have also found that simply writing down where you were and what you were thinking before switching tasks substantially reduces residue. The act of externalizing the state, getting it out of working memory and onto paper or a notes file, frees up cognitive resources even though you haven’t finished the task. Think of it as serializing your mental state to disk before a process switch. The brain, apparently, relaxes its grip on a problem once it’s convinced the problem has been saved somewhere.

What Tech Companies Are Actually Doing About This

The companies doing the most sophisticated work here are operating at two levels: environmental design and workflow structure.

On the environmental side, the push toward notification batching is the most visible example. Rather than allowing Slack or Teams to interrupt continuously, some engineering organizations have moved to explicit “office hours” for communication, where a developer might check messages only at 11am and 3pm. This is sometimes called async-first communication. Teams that work this way were treating interruption as the real bug well before the broader productivity discourse caught up.
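As a rough illustration of what batching means mechanically, here is a minimal Python sketch that works out when a held message would next be delivered. The 11am and 3pm windows come from the example above; the function name `next_check_time` and the constant `CHECK_WINDOWS` are made up for illustration and don't correspond to any Slack or Teams API.

```python
from datetime import datetime, time, timedelta

# Hypothetical check-in windows, taken from the 11am / 3pm example.
CHECK_WINDOWS = [time(11, 0), time(15, 0)]


def next_check_time(now: datetime, windows=CHECK_WINDOWS) -> datetime:
    """Return the next moment at which held messages would be delivered."""
    for window in sorted(windows):
        candidate = datetime.combine(now.date(), window)
        if candidate >= now:
            return candidate
    # Past the last window today: roll over to the first window tomorrow.
    tomorrow = now.date() + timedelta(days=1)
    return datetime.combine(tomorrow, min(windows))


# A message arriving at 9:30 waits until the 11:00 window.
print(next_check_time(datetime(2024, 1, 8, 9, 30)))
```

This sketch ignores weekends, time zones, and anything urgent enough to justify breaking the window. A real setup would need an escape hatch for the last of those.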

On the workflow structure side, the more interesting interventions involve what you might call completion checkpointing. Before a meeting or a context switch, engineers at some organizations are encouraged to spend five minutes writing a brief status note for themselves: what they were working on, what they were thinking, what the next step was going to be. This is essentially the “offloading to external storage” trick that the research supports, formalized into a team practice.

Some companies have gone further and restructured their meeting schedules entirely around minimizing mid-task interruptions. The logic is that a two-hour deep work block interrupted once in the middle produces dramatically less output than the same two hours left uninterrupted, because each half has to pay its own cost of getting back into the problem. Meeting-free blocks, when organizations treat them seriously, exist precisely because of this math.
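To make the arithmetic concrete, here is a toy Python model. It assumes every block of work starts with a fixed ramp-up period during which little useful output happens. The 20-minute figure is an invented placeholder, not a number from the research, and the flat-cost model itself is a simplification.

```python
def productive_minutes(block_minutes, ramp_up_minutes):
    """Total full-focus minutes left after each block pays its ramp-up cost."""
    return sum(max(0, block - ramp_up_minutes) for block in block_minutes)


RAMP_UP = 20  # assumed, illustrative only; not a figure from the research

uninterrupted = productive_minutes([120], RAMP_UP)   # one two-hour block
interrupted = productive_minutes([60, 60], RAMP_UP)  # same time, split once

print(uninterrupted, interrupted)  # 100 80
```

Even this crude model loses a fifth of the productive time to a single interruption. It likely understates the real cost, since it treats the minutes after ramp-up as interchangeable, and hard problems often need a long contiguous stretch before anything useful comes out.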

There’s also been genuine investment in physical environment design. Open-plan offices, which dominated tech campuses for over a decade, are particularly brutal for attention residue. Every ambient conversation is a potential involuntary context switch. The retreat toward hybrid work, partially driven by pandemic necessity, has had an accidental benefit here: many developers report significantly longer uninterrupted focus periods when working from home. That isn’t necessarily because home is quieter. It’s because ambient office noise triggers involuntary attention shifts in a way that chosen background noise doesn’t.

The Perverse Incentive Hidden Inside Collaboration Tools

Here’s the uncomfortable part. The tools that tech companies build for productivity, and often use internally, are structurally opposed to reducing attention residue.

Slack, Teams, Jira, GitHub notifications, pull request comments: these are all designed around the assumption that faster response times are better. Badges, notification counts, and unread indicators are explicit pressure to context-switch. The tools aren’t malicious about this. The design logic is coherent: if someone is blocked on your review, a faster response unblocks them. The problem is that this optimization is entirely local. It optimizes for the blocked person without accounting for the cost imposed on the reviewer.

This is a classic externality problem in software form. The cost of the interruption is paid by the interrupted person, but the benefit accrues to the person requesting attention. From an individual’s perspective, sending a ping is nearly free. From the team’s aggregate perspective, everyone pinging everyone creates a system where nobody maintains deep focus for long enough to do the work that actually requires it.

Some companies have started treating this as a systems design problem rather than a culture problem. GitHub’s review request routing, for example, can be configured to consolidate notifications and batch them. Some engineering teams have built internal tooling that only surfaces non-urgent requests during designated windows. The underlying idea is to re-internalize the externality: make the cost of requesting someone’s attention more visible, so that the requester considers whether the interruption is actually necessary right now.
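A minimal Python sketch of the internal-tooling idea might look like the following. Everything here is hypothetical: the `Request` and `AttentionGate` names, and the choice to let the requester set an explicit `urgent` flag, are assumptions for illustration rather than a description of any real product. The point of the flag is that interrupting someone outside a window takes a deliberate, visible decision.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    sender: str
    summary: str
    urgent: bool = False  # the requester must opt in to interrupting


@dataclass
class AttentionGate:
    """Holds non-urgent requests until a designated window opens."""
    held: list = field(default_factory=list)

    def submit(self, request: Request, window_open: bool) -> list:
        """Return the requests that should be surfaced right now."""
        if window_open:
            # Window is open: release everything held, plus the new request.
            released, self.held = self.held, []
            return released + [request]
        if request.urgent:
            # Urgent requests bypass the gate; held ones keep waiting.
            return [request]
        self.held.append(request)
        return []


gate = AttentionGate()
gate.submit(Request("ana", "review my PR"), window_open=False)    # held
gate.submit(Request("bo", "prod is down", urgent=True), False)    # surfaced
batch = gate.submit(Request("cy", "quick question"), True)        # ana + cy
```

Whether requesters would mark everything urgent is a social question the code can't answer. A real system would probably need to show urgent-flag usage back to the team.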

The Eight-Hour Focus Myth and What Companies Get Wrong

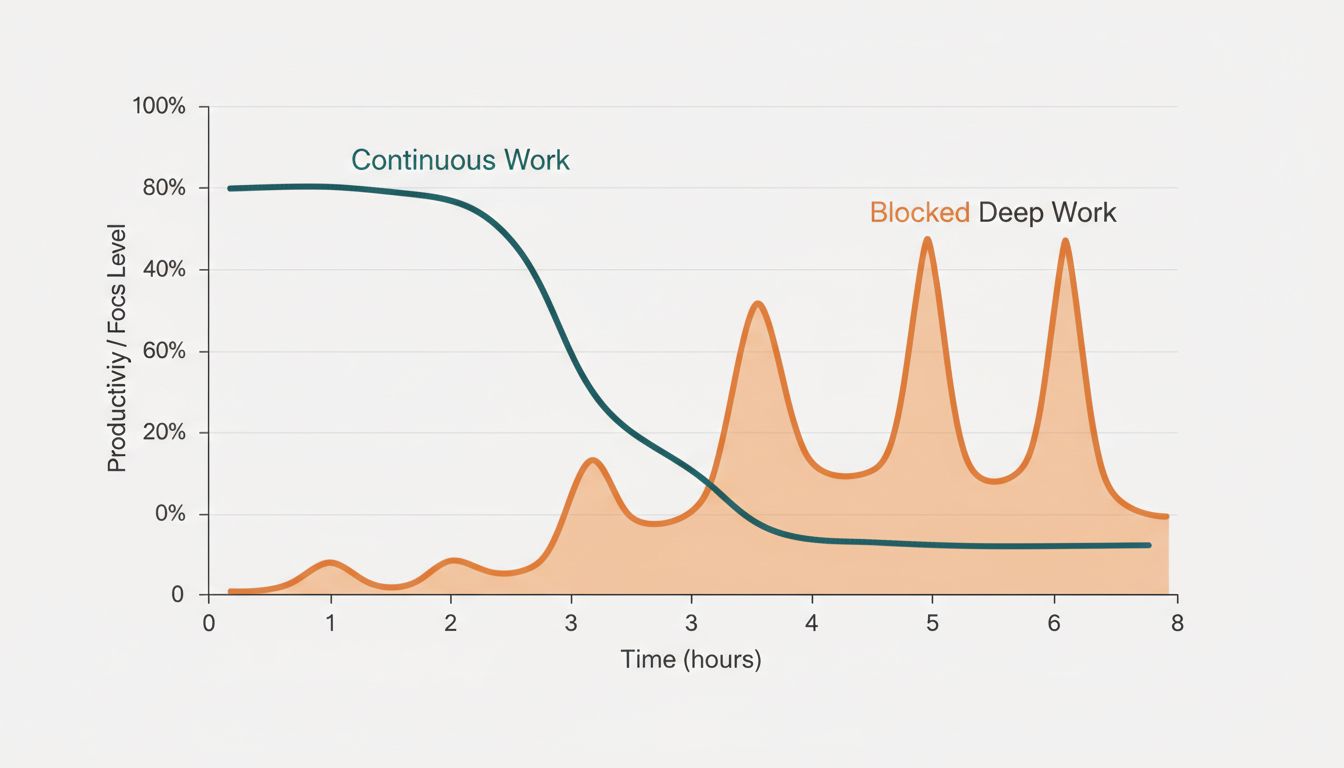

The framing in a lot of productivity writing, including from tech companies promoting their own practices, treats the goal as maintaining focus for eight or more consecutive hours. This is the wrong target.

The research on cognitive endurance suggests something more nuanced. Sustained attention on demanding cognitive tasks degrades significantly within ninety minutes to two hours for most people. The goal shouldn’t be eliminating all task-switching. It should be structuring task-switches to minimize residue and scheduling demanding work during peak cognitive hours.

This is where the “eight hours of focus” framing misleads people who are trying to apply it. A developer who forces themselves to stare at a hard problem for eight hours isn’t outperforming one who works in focused ninety-minute blocks with genuine breaks between them. They’re likely producing worse work after hour four while believing they’re being disciplined.

The more defensible goal is protecting the first one to two hours of deep work in a session from interruption, ensuring that the task being done during those hours is actually the highest-value task (not busywork dressed up as focus), and then allowing lighter tasks or communication to fill the remaining time. That’s a very different system from “no distractions all day.”

The Zeigarnik Effect and Why Incomplete Tasks Haunt You

Attention residue has an interesting cousin in the Zeigarnik effect, named after Soviet psychologist Bluma Zeigarnik. In the 1920s she ran experiments following up on an observation, often attributed to her advisor Kurt Lewin, that waiters remembered unpaid tabs better than completed orders. The brain allocates persistent attention to unfinished business. This is useful in some contexts (it’s why cliffhangers work in storytelling) and actively harmful in knowledge work.

The connection to tech workflows is direct. A developer who leaves a bug half-investigated at the end of the day will often find themselves mentally returning to it during non-work hours. This isn’t productive rumination. It’s the cognitive equivalent of a process that never properly terminates, sitting in the background consuming resources.

Some of the practices that reduce attention residue also mitigate the Zeigarnik effect. Writing a clear next-steps note before stopping work gives the brain something like a closure signal. The task is “saved.” There’s a known re-entry point. This is why developers who keep good task notes, not elaborate systems but simple “where I left off” files, often report feeling less cognitively loaded outside of work hours.

Organizations that have internalized this tend to build it into their engineering culture as documentation hygiene rather than as a psychological intervention. The effect is the same either way.

What This Means

Attention residue is a real, measurable cognitive phenomenon, not a productivity influencer’s repackaging of “focus is good.” The research identifies a specific mechanism: switching tasks before reaching a natural stopping point leaves working memory partially allocated to the previous task, degrading performance on whatever comes next.

Tech companies are responding at different levels of sophistication. The least sophisticated response is culture-based: “we value deep work, please don’t interrupt each other.” This fails because it doesn’t change the structural incentives of the tools people use. The more sophisticated response redesigns those tools and schedules to reduce involuntary context switches, establish natural stopping points before switches happen, and give people mechanisms to externalize their cognitive state before they switch.

The eight-hours-of-continuous-focus framing is mostly wrong and counterproductive. The real goal is protecting the conditions under which difficult cognitive work can actually happen: minimal residue, appropriate task sequencing, and enough cognitive slack to do something genuinely hard before the day’s demands accumulate.

If you work in technical knowledge work, the single most actionable change is probably the simplest: before you switch tasks, spend two minutes writing down where you are and what the next step was going to be. It’s not glamorous. It costs almost nothing. The research says it works, and the mechanism is clear enough that you can reason about why.