You’ve been there. A piece of software you loved in beta ships as a finished product and feels slower, clunkier, more cluttered. You’re not imagining it. This happens often enough to be a pattern, and the explanation isn’t that developers get careless. It’s that the incentives change completely the moment a product moves from beta to release.

Understanding why this happens will make you a smarter evaluator of software and a better collaborator with engineering teams. If you build products yourself, it will also help you avoid one of the most common and preventable quality failures in the industry.

Who Uses a Beta and What They Want From It

Beta users are not typical users. They opted in. They’re curious, technically comfortable, and most importantly, they’re there because they find the software interesting rather than necessary. They want to poke at things. They want the new version of something they already care about, or they want early access to something they think will become important.

This self-selection does something powerful for software quality: it creates a feedback loop where the people testing the product are uniquely motivated to report what’s wrong and unusually tolerant of what is broken. A beta user who hits a rough edge is likely to file a bug report. A typical consumer who hits that same rough edge is likely to uninstall the app and leave a one-star review.

Developers, consciously or not, build for their audience. During beta, they’re building for people who want raw capability and don’t mind roughness. Feedback tends to be specific and technical. The signal is high quality. The result is that development teams spend beta cycles fixing real, structural problems reported by people who can articulate them clearly.

What Changes When You Ship to Everyone

The moment a product goes to general availability, the audience expands by orders of magnitude, and that expansion changes everything the team is optimizing for.

First-time, non-technical users don’t report bugs the same way. They describe feelings: it feels slow, it feels confusing, the button is in a weird place. That feedback is genuinely valuable, but it’s harder to act on quickly because it rarely points to a root cause. Instead of finding and fixing root causes, teams often respond with surface-level changes: bigger buttons, added tooltips, simplified menus. These changes can degrade the experience for the users who loved the beta.

The metrics change too. During beta, teams often track depth of usage, bug counts, and crash rates. After launch, the pressure often shifts to daily active users, retention percentages, and conversion numbers. Those are legitimate metrics, but optimizing for them can mean adding features that broaden appeal rather than deepening quality.

There’s also organizational pressure that didn’t exist during beta. Marketing has made promises. A launch date has been announced. Partners are waiting on integration access. In that environment, the question stops being “is this as good as it can be?” and starts being “is this good enough to ship?” Those are not the same question.

The Feature Addition Problem

One of the most reliable ways a final release degrades from its beta is feature accumulation. Beta versions tend to be leaner, because the team hasn’t yet received all the requests that come in from stakeholders once launch is imminent.

What happens in the weeks before general availability is a negotiation. Sales wants one more integration. The enterprise team needs an admin dashboard. Someone at the executive level wants a feature that a competitor already has. Each individual request is reasonable in isolation. Collectively, they turn a focused product into something bloated.

Slack’s early versions (the preview release that opened in August 2013, before the public launch in February 2014) were tightly scoped around a specific communication workflow. The team iterated publicly and gathered feedback that shaped a focused product. Stewart Butterfield built Slack by treating every complaint as input to the product roadmap. The important distinction is that early complaints came from people who wanted the core thing to be better, not from people who wanted it to become a different thing. As Slack grew into an enterprise product, features multiplied and the original simplicity eroded. That’s not a unique story.

The Performance Regression Nobody Talks About

Beta versions are frequently faster than their final counterparts, and this is almost never accidental.

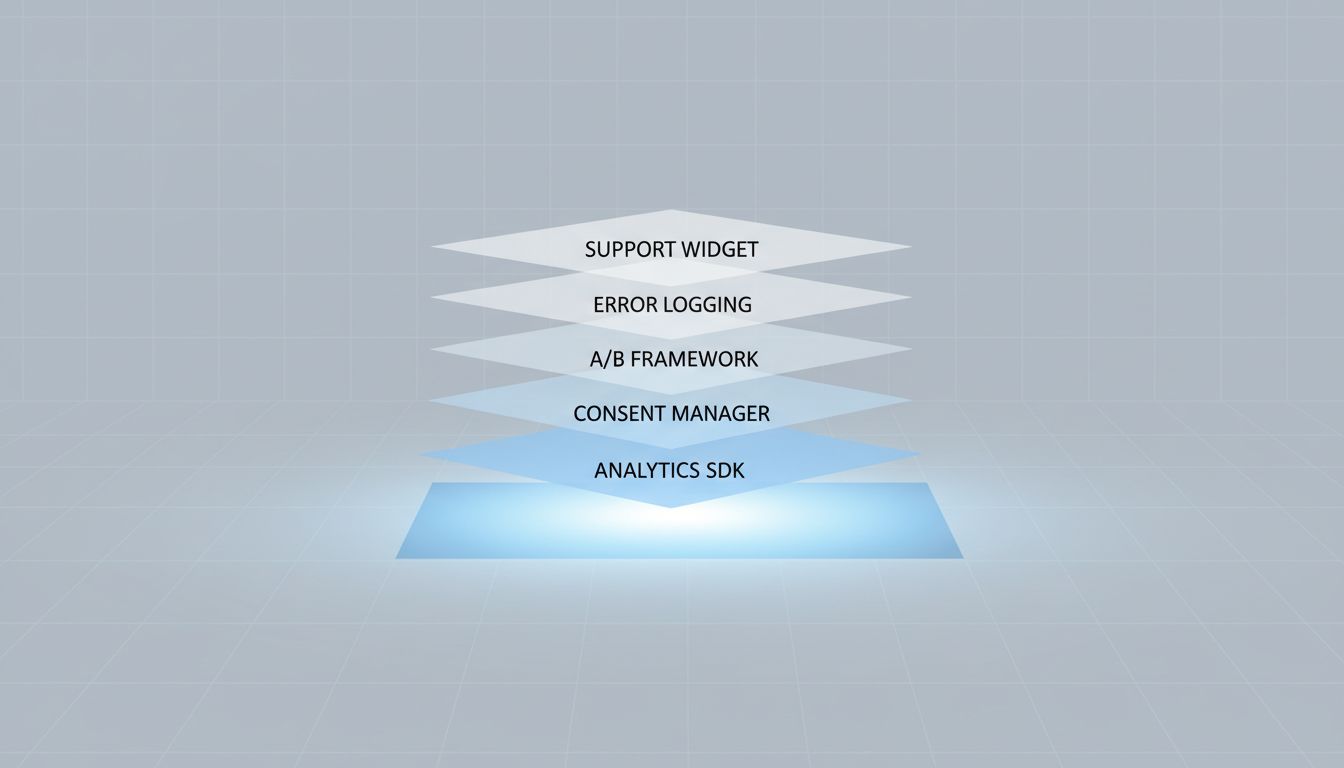

During beta, analytics instrumentation is often minimal. The tracking code that later gets added to measure user behavior, funnel drop-off, and feature adoption adds latency. So do the A/B testing framework, the consent management platform, the customer support chat widget, and the cookie banner. None of these are irrational additions. All of them slow the product down.

There’s also the question of defensive code. As a product reaches more users, edge cases multiply. Developers add validation, fallback handling, and error logging. Each addition is a correct response to a real problem. Together, they add weight that users can feel.

It’s the same reason tech support tells you to reboot: long-running software accumulates overhead such as leaked memory, and fixing that is harder than it sounds. The same principle applies here: the overhead of running production software at scale is structurally different from running a contained beta, and much of the performance cost is genuinely unavoidable.

What is avoidable is letting these additions accumulate without deliberate performance budgeting. Some teams do this well, setting explicit targets for page load time or interaction latency and treating regressions as blocking issues. Many teams don’t, and users notice.
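As a rough illustration of what a performance budget that blocks regressions might look like, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the metric names, the 2000 ms and 100 ms limits, and the `check_budgets` helper are hypothetical, and a real team would feed in measurements from its own tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Budget:
    """An explicit performance target for one metric (hypothetical values)."""
    metric: str
    limit_ms: float


def check_budgets(measured_ms, budgets):
    """Compare measurements against budgets.

    Returns a list of human-readable violations. An empty list means
    every budget was met, so the change would not be blocked.
    """
    violations = []
    for budget in budgets:
        value = measured_ms.get(budget.metric)
        if value is None:
            # A missing measurement is treated as a failure, not a pass.
            violations.append(f"{budget.metric}: no measurement recorded")
        elif value > budget.limit_ms:
            violations.append(
                f"{budget.metric}: {value:.0f} ms exceeds budget of "
                f"{budget.limit_ms:.0f} ms"
            )
    return violations


# Illustrative targets only; pick numbers that match your own product.
BUDGETS = [
    Budget("page_load", 2000.0),
    Budget("interaction_latency", 100.0),
]

# Example measurements, invented for this sketch.
measured = {"page_load": 2350.0, "interaction_latency": 80.0}
for problem in check_budgets(measured, BUDGETS):
    print("BLOCKING:", problem)
```

The design choice worth noting is that a missing measurement counts as a violation. A budget that quietly passes when nobody measured anything is exactly how overhead accumulates by default.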

The Organizational Handoff

Here’s something that often gets overlooked: the people who built the beta are not always the people who own the final release.

In larger companies, a product can move from an internal skunkworks team to a full product organization as it approaches launch. The original developers understood their design decisions at a level that’s hard to transfer. The new team inherits code and documentation but not intuition. They make locally sensible decisions that collectively drift the product away from what made the beta compelling.

Even in smaller companies, the act of launching changes which voices have the most influence. Pre-launch, engineers and designers dominate. Post-launch, support tickets, sales calls, and executive requests compete for the roadmap. This isn’t dysfunction. It’s the normal expansion of a product into a business. But if nobody is explicitly protecting the core experience that beta users valued, it erodes.

What You Can Do About This

If you’re building software, the most practical thing you can do is treat beta quality as a baseline, not a phase. That means two specific commitments.

First, document what made your beta compelling. Not in vague terms like “clean UI” or “fast,” but specifically. What was the page load time? What was the task completion rate for your core workflow? Which features were used most? Commit to maintaining those numbers through launch. If a new addition degrades them, make that tradeoff visible and deliberate rather than letting it happen by default.
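One way to keep those numbers honest is to record them once during beta and compare every later build against them. The Python sketch below assumes a handful of hypothetical metrics and invented values (a 1.2 second page load, a 90% task completion rate, a 5% tolerance); none of these come from a real product, and the `Baseline` and `report` names are made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Baseline:
    """A number recorded during beta that the team commits to defending."""
    name: str
    beta_value: float
    higher_is_better: bool
    tolerance: float  # allowed relative slip, e.g. 0.05 for 5%


def slip(baseline, current):
    """Relative change in the bad direction; positive means worse than beta."""
    change = (current - baseline.beta_value) / baseline.beta_value
    return -change if baseline.higher_is_better else change


def report(baselines, current_values):
    """Return (name, relative slip, status) for each baseline."""
    rows = []
    for b in baselines:
        s = slip(b, current_values[b.name])
        status = "REGRESSED" if s > b.tolerance else "ok"
        rows.append((b.name, round(s, 3), status))
    return rows


# Hypothetical beta baselines, for illustration only.
BASELINES = [
    Baseline("page_load_s", 1.2, higher_is_better=False, tolerance=0.05),
    Baseline("task_completion_rate", 0.90, higher_is_better=True, tolerance=0.05),
]

# Hypothetical measurements from a release candidate.
candidate = {"page_load_s": 1.5, "task_completion_rate": 0.88}
for name, s, status in report(BASELINES, candidate):
    print(f"{name}: {s:+.1%} vs beta ({status})")
```

A report like this does not decide anything by itself. Its job is to put the tradeoff in front of the people making the call, so that a slower page load is something the team chose rather than something that happened.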

Second, preserve your beta feedback channel after launch. Beta users are some of your most valuable users. Many companies abandon their beta community the moment GA ships, losing exactly the people who can tell them when quality is slipping. Keeping a dedicated channel for these users, and actually reading it, gives you an early warning system that no analytics dashboard provides.

If you’re evaluating software, the beta-to-release quality drop is a signal worth paying attention to. A company that ships a finished product notably worse than its beta has a prioritization problem, and that problem rarely fixes itself. The reverse is also true: a company that ships a release that’s genuinely better than its beta in the ways that matter has demonstrated real discipline. That’s a team worth betting on.

What This Means

The beta-better-than-release phenomenon isn’t about developer carelessness. It’s the product of three converging forces: a motivated, self-selected test audience that generates high-quality feedback; organizational pressures that accumulate features and analytics overhead before launch; and an incentive shift that moves teams from optimizing for quality to optimizing for growth metrics.

Knowing this, you can make smarter decisions. If you’re building, set quality baselines during beta and defend them. If you’re evaluating, read the beta reviews as carefully as the launch reviews. The gap between the two is often the most honest thing a company can tell you about itself.