Model compression is sold as a free lunch. Take a large model, squeeze it down with quantization or pruning, deploy it on cheaper hardware, save money. The benchmarks usually hold up. Accuracy drops a percent or two. Acceptable.

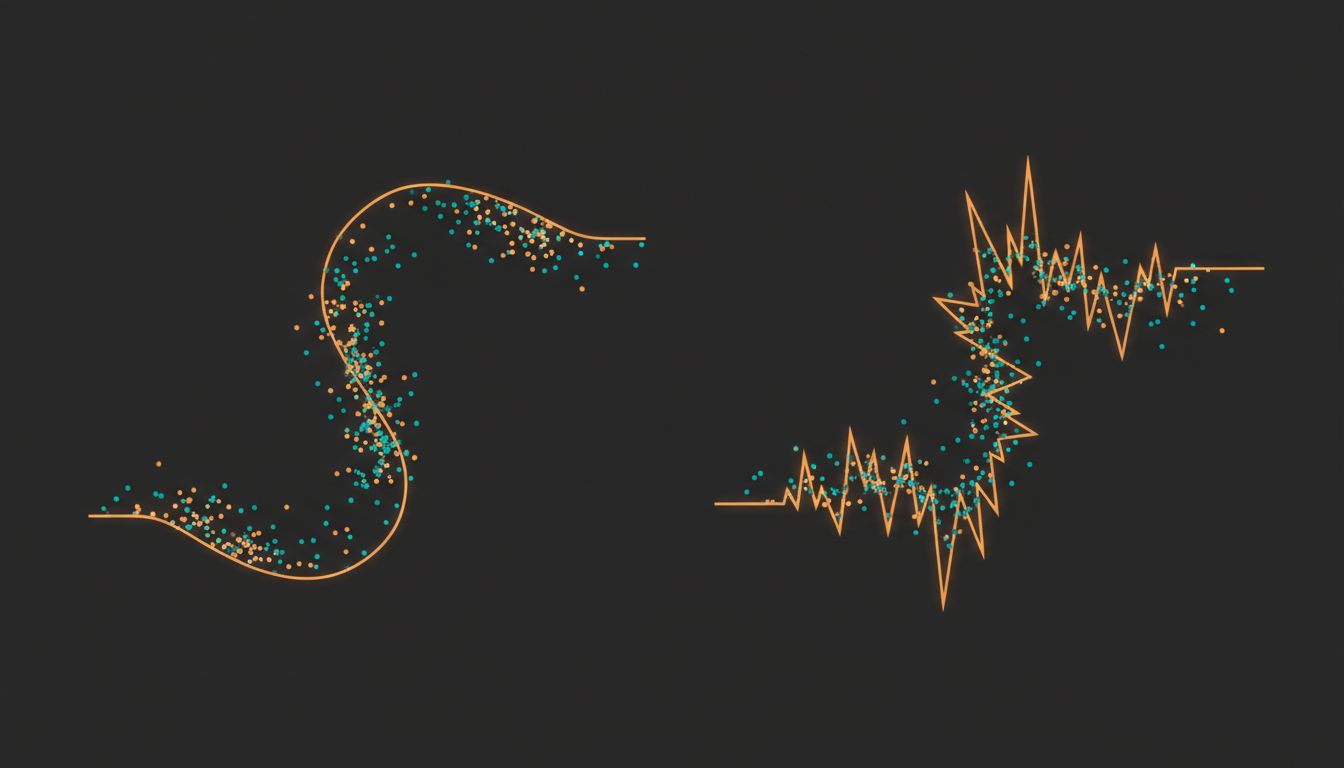

What the benchmarks don’t capture is that compression doesn’t uniformly deflate a model like a tire losing air. It reshapes the model’s internal geometry in ways that are uneven, hard to predict, and occasionally alarming. The model that comes out the other side is not just smaller. It’s different in kind.

Here’s what actually changes.

1. Quantization Doesn’t Lose Information Evenly Across the Model

When you quantize a model from 32-bit floats to 8-bit integers (or lower), you’re mapping a continuous range of values onto a much coarser grid. The math is straightforward. The problem is that different layers in a neural network have very different value distributions, and quantization hits them unevenly.

Attention layers in transformer models tend to produce activations with sharp outliers, a small number of values that sit far outside the typical range. When you quantize these, the outliers force the scaling factor high, which compresses the resolution available for the majority of values clustered near zero. Research into LLM.int8() and similar techniques found this problem specifically in transformer models at scale: a small fraction of dimensions carry disproportionate weight, and naive quantization destroys exactly that signal.
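To make the outlier effect concrete, here is a minimal sketch of symmetric absmax quantization in plain Python. The activation values and the helper names (`quantize_absmax`, `dequantize`, `mean_abs_error`) are made up for illustration. This is not how LLM.int8() or any particular library implements quantization.

```python
def quantize_absmax(values, bits=8):
    """Symmetric absmax quantization: returns (integer codes, scale)."""
    qmax = 2 ** (bits - 1) - 1                      # 127 for int8
    scale = max(abs(v) for v in values) / qmax      # one scale for the whole tensor
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate real values."""
    return [c * scale for c in codes]


def mean_abs_error(original, restored):
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)


# Hypothetical activations: 101 values clustered in [-0.5, 0.5].
typical = [0.01 * i for i in range(-50, 51)]
# The same values plus a single outlier dimension.
with_outlier = typical + [60.0]

codes_a, scale_a = quantize_absmax(typical)
codes_b, scale_b = quantize_absmax(with_outlier)

# Error on the typical values only, with and without the outlier present.
err_clean = mean_abs_error(typical, dequantize(codes_a, scale_a))
err_outlier = mean_abs_error(typical, dequantize(codes_b, scale_b)[:len(typical)])

print(f"scale without outlier: {scale_a:.5f}, error: {err_clean:.5f}")
print(f"scale with outlier:    {scale_b:.5f}, error: {err_outlier:.5f}")
print("distinct codes used for typical values:", sorted(set(codes_b[:len(typical)])))
```

With the outlier present, the single scale factor is set by the value 60.0, so every typical value lands on one of only three integer codes. The outlier itself is represented well; everything else pays for it.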

The practical result is that quantization doesn’t degrade the model uniformly. Some capabilities hold up well. Others fall apart. You won’t know which until you test specifically for them, and most benchmark suites aren’t designed to catch the collapses.

2. Pruning Removes Weights, but Redundancy Was Doing Real Work

The intuition behind pruning is that neural networks are massively overparameterized, so you can remove a large fraction of weights with minimal cost. This is true. It’s also incomplete.

Redundancy in a network isn’t pure waste. Some of it functions as robustness: multiple pathways that encode similar information so the network can recover from noise or distributional shift. When you prune aggressively, you can shrink the model without touching its benchmark performance on clean test data, while simultaneously making it brittle on anything slightly out of distribution.

A pruned model trained on standard image classification might lose several percentage points of accuracy when you add a small amount of Gaussian noise to the inputs, even if its clean-image accuracy is nearly identical to the original. The safety margin got pruned away along with the “redundant” weights. For production systems handling real-world inputs, that’s not a minor footnote.
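One way to check for this is to measure accuracy with and without input noise, for both the dense and the pruned model. The sketch below assumes a hypothetical `predict` callable that maps a list of features to a label. `magnitude_prune` is a generic smallest-magnitude pruning rule used for illustration, not a specific library's method.

```python
import random


def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(by_magnitude[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def accuracy(predict, inputs, labels, sigma=0.0, seed=0):
    """Accuracy of `predict` with Gaussian noise (std `sigma`) added to every feature."""
    rng = random.Random(seed)   # fixed seed so both models see the same noise
    correct = 0
    for x, y in zip(inputs, labels):
        noisy = [v + rng.gauss(0.0, sigma) for v in x]
        correct += predict(noisy) == y
    return correct / len(inputs)


def robustness_gap(predict, inputs, labels, sigma):
    """Clean accuracy minus noisy accuracy. Larger means more brittle."""
    return accuracy(predict, inputs, labels) - accuracy(predict, inputs, labels, sigma)
```

The number to watch is not either model's clean accuracy but the difference between `robustness_gap` for the dense model and for the pruned one, at the noise levels your production inputs plausibly contain.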

3. Knowledge Distillation Transfers Behavior, Not Understanding

Distillation is the most intellectually interesting compression technique. You train a small student model to mimic the output distribution of a large teacher model, not just its final predictions but the soft probability scores across all classes. The idea is that those soft scores carry information about the teacher’s internal representations, not just which answer is right but how confident and uncertain it is across many possible answers.

This works surprisingly well. But the student model learns to reproduce the teacher’s behavior without necessarily learning the same internal structure. The student might get the right answers for entirely different internal reasons. This matters when the model encounters something genuinely novel. The teacher’s soft outputs don’t transfer the reasoning process; they transfer a compressed behavioral signature. The student ends up with a different kind of pattern matching than the teacher used, and the two can diverge on cases that were never in the training distribution.
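A minimal sketch of a distillation objective shows why. This is the common temperature-softened KL formulation, written in plain Python. The function names and the default temperature are illustrative choices, and real training setups usually mix this term with a standard label loss.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)   # teacher's soft targets
    q = softmax(student_logits, temperature)   # student's soft predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


# Hypothetical logits for a three-class problem.
teacher = [4.0, 1.5, -2.0]
print(distillation_loss([4.0, 1.5, -2.0], teacher))   # identical outputs
print(distillation_loss([3.0, 2.5, -1.0], teacher))   # same top class, different shape
```

Nothing in the loss refers to hidden states or intermediate activations. Any student that matches the teacher's output distribution on the training inputs scores equally well, however it gets there.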

4. Compressed Models Have Different Failure Modes, Not Just More Failures

This is the point most deployment discussions miss. The assumption is that a compressed model is a strictly worse version of the original. Lower accuracy, same failure patterns. Scale down the trust accordingly.

That’s not what happens. Compressed models can fail on inputs where the original model was confident and correct, while matching or even slightly outperforming the original on other subsets of the data. Quantization can, in some cases, act as a form of regularization that improves generalization on certain tasks while degrading others. The failure surface changes shape, not just size.

This is operationally significant. If you validated your safety guardrails against the full-precision model, those validations don’t automatically transfer. You need to characterize the compressed model’s failure modes independently. Running both models against the same test suite and comparing aggregate accuracy numbers won’t catch this. You need to look at disagreement cases, inputs where the two models give different answers, and understand why they diverge.
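A disagreement analysis can be as simple as the following sketch. The predictions and labels are invented for illustration, and `compare_predictions` is a hypothetical helper, not part of any evaluation framework.

```python
from collections import Counter


def compare_predictions(original_preds, compressed_preds, labels):
    """Bucket every example by which model got it right, and list disagreements."""
    buckets = Counter()
    disagreements = []
    for i, (o, c, y) in enumerate(zip(original_preds, compressed_preds, labels)):
        if o == y and c == y:
            buckets["both_correct"] += 1
        elif o == y:
            buckets["regression"] += 1      # original right, compressed wrong
        elif c == y:
            buckets["improvement"] += 1     # compressed right, original wrong
        else:
            buckets["both_wrong"] += 1
        if o != c:
            disagreements.append(i)         # indices worth inspecting by hand
    return buckets, disagreements


# Invented example: both models score 4 out of 6.
labels     = [0, 1, 1, 0, 1, 0]
original   = [0, 1, 1, 0, 0, 1]
compressed = [0, 1, 0, 1, 1, 0]

buckets, disagreements = compare_predictions(original, compressed, labels)
print(dict(buckets))
print("disagreement indices:", disagreements)
```

In this toy case the aggregate accuracy is identical, yet the two models disagree on four of six inputs. The `regression` bucket is the one to read example by example, because those are inputs your earlier validation told you were safe.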

5. The Compression Ratio That Works for One Task Will Betray You on Another

Benchmarks for compressed models typically focus on a primary task. Perplexity on a language benchmark, top-1 accuracy on ImageNet, F1 on a named entity recognition corpus. A compression scheme that preserves performance on the benchmark task can carve away capabilities on adjacent tasks that weren’t evaluated.

Multi-task models are especially vulnerable. A model fine-tuned to do both summarization and translation might compress well on summarization metrics while losing significant translation quality. The compression process has no way to know that translation was important. It only knows which weights are numerically small or activate infrequently on the training distribution it was given.

The practical discipline this demands is uncomfortable: you need to benchmark compressed models against every task you care about, not just the one you optimized for. This costs time and often gets skipped. The cases where it matters are also the cases you won’t see coming until a user finds them.
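In practice this can be a small gate in the release process. The sketch below assumes higher-is-better metrics with positive baselines (a lower-is-better metric such as perplexity would need its sign flipped). The task names, scores, and the 2% threshold are all hypothetical.

```python
def find_regressions(baseline, compressed, max_relative_drop=0.02):
    """Flag tasks that regressed past the threshold or were never evaluated."""
    report = {}
    for task, base_score in baseline.items():
        if task not in compressed:
            report[task] = "not evaluated"          # a silent gap is also a finding
            continue
        drop = (base_score - compressed[task]) / base_score
        if drop > max_relative_drop:
            report[task] = f"{drop:.1%} relative drop"
    return report


# Hypothetical scores for a multi-task model.
baseline_scores = {
    "summarization_rouge_l": 0.40,
    "translation_bleu": 0.30,
    "ner_f1": 0.90,
}
compressed_scores = {
    "summarization_rouge_l": 0.398,
    "translation_bleu": 0.24,
}

report = find_regressions(baseline_scores, compressed_scores)
for task, finding in report.items():
    print(f"{task}: {finding}")
```

Iterating over the baseline's task list rather than the compressed model's is deliberate: a task that was dropped from the evaluation shows up as a finding instead of disappearing from the report.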

6. The Model You Ship Is Not the Model You Evaluated

There’s a gap between offline evaluation and production behavior that compressed models make wider. Full-precision models running in production match their evaluation environment reasonably well. Compressed models often run on different hardware (edge devices, consumer GPUs, specialized inference chips) with different numerical implementations of the quantized operations.

Numerical precision differences between evaluation and deployment hardware are real and documented. An 8-bit quantized model evaluated on one hardware target can produce different outputs on another, because details such as how intermediate results are rounded when they are rescaled back to 8 bits vary by implementation. Two devices running the “same” model can generate different token sequences for the same prompt. These differences are small per step but compound over a long generation.
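A toy illustration of one such source of divergence: two rounding rules applied to the same rescaling step. Which rule a given backend actually uses is not something this sketch claims to know. The accumulator values, the scale, and the function names are hypothetical.

```python
import math


def round_half_even(x):
    """Ties go to the nearest even integer (Python's built-in behavior)."""
    return round(x)


def round_half_away(x):
    """Ties go away from zero."""
    return int(math.copysign(math.floor(abs(x) + 0.5), x))


def requantize(accumulators, scale, rounding):
    """Rescale wide integer accumulators to int8, clamping to [-128, 127]."""
    return [max(-128, min(127, rounding(a * scale))) for a in accumulators]


# Hypothetical int32 accumulators and rescaling factor.
accumulators = [1, 3, 5, -7, 10]
scale = 0.5

device_a = requantize(accumulators, scale, round_half_even)
device_b = requantize(accumulators, scale, round_half_away)

print("half-to-even:     ", device_a)   # [0, 2, 2, -4, 5]
print("half-away-from-0: ", device_b)   # [1, 2, 3, -4, 5]
```

The two results differ by one integer step in two of five positions. In a single layer that is noise; fed through dozens of layers and then into a sampling step, it can be enough to pick a different token.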

This isn’t hypothetical. It’s the class of problem that bites teams who evaluate on cloud GPUs and deploy on mobile. The model they shipped is technically the same weights. It is not the same model. The version of this problem in distributed systems, where timing and ordering assumptions silently break things, has a long and painful history. Compressed model deployment is starting to develop its own version of that history.

Compression is worth doing. The cost and latency savings are real, and for many applications the tradeoffs are entirely acceptable. But the mental model of “smaller and approximately equivalent” is wrong in ways that matter. You’re not getting a deflated version of the original. You’re getting a different artifact that happens to score similarly on a benchmark. Know what you’re actually deploying.