Every developer I know has, at some point, set up the perfect note-taking system. Notion databases with linked references. Obsidian vaults with bidirectional graph views. Roam Research with its block-level transclusion. The tooling is genuinely impressive. And yet, when it comes time to actually remember what they captured, something consistently falls short. The notes are there. The memory is not.

This is not a workflow problem. It is not a tagging problem. It is not something a better folder structure will fix. The gap between digital note-taking and actual memory retention is rooted in neurobiology, and understanding it requires thinking less like a product manager and more like a systems architect examining what happens at the hardware level. If you have ever wondered why tech workers report dramatically better recall with paper notebooks than with digital tools, the answer is not nostalgia. It is encoding depth.

The Encoding Depth Problem

In cognitive science, there is a concept called levels of processing, first described by Craik and Lockhart in 1972. The core idea is that memory retention is not binary. It is a function of how deeply you process incoming information at the moment of capture. Shallow processing, like recognizing a word’s font or hearing its sound, produces weak memory traces. Deep processing, like analyzing what a word means and relating it to something you already know, produces durable ones.

Handwriting forces deep processing almost by default. When you write by hand, your motor cortex, your visual cortex, and your language centers are all firing simultaneously. More critically, the physical constraint of writing speed (roughly 25 words per minute versus 70 or more for typing) forces you to summarize, paraphrase, and prioritize in real time. You cannot transcribe everything. You have to understand it first.
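To put rough numbers on that constraint, here is a back-of-the-envelope sketch. The 25 and 70 words-per-minute figures come from the paragraph above. The 150 words-per-minute speaking rate and the function name `max_capture_fraction` are assumptions made purely for illustration.

```python
def max_capture_fraction(writing_wpm: float, speaking_wpm: float = 150.0) -> float:
    """Upper bound on the share of spoken words you could record verbatim.

    speaking_wpm defaults to 150, an assumed conversational pace
    (not a figure from the article).
    """
    if writing_wpm <= 0 or speaking_wpm <= 0:
        raise ValueError("rates must be positive")
    # You can never capture more than 100% of what was said.
    return min(1.0, writing_wpm / speaking_wpm)


handwriting = max_capture_fraction(25)  # 25 wpm, from the text
typing = max_capture_fraction(70)       # 70 wpm, from the text

print(f"handwriting: at most {handwriting:.0%} of the words")
print(f"typing:      at most {typing:.0%} of the words")
```

Under those assumptions the handwriter can keep roughly one word in six, so choosing what matters is unavoidable. The typist can keep nearly half, and a fast typist considerably more, which is what makes near-verbatim capture tempting.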

Typing removes that constraint. Research by Mueller and Oppenheimer (2014), conducted at Princeton and UCLA, found that students who took notes on laptops tended toward verbatim transcription, capturing more raw text but performing worse on conceptual questions about the material. The laptop users were essentially functioning as high-fidelity audio recorders. The handwriters were functioning as compilers, transforming input into a more processed, abstracted representation before storing it.

Why Apps Cannot Just Simulate Constraint

The obvious product question is: why not just build a slow typing mode? Cap the input rate. Force paraphrasing. Add friction deliberately. Some apps have tried versions of this. They have largely failed to move the needle on retention.

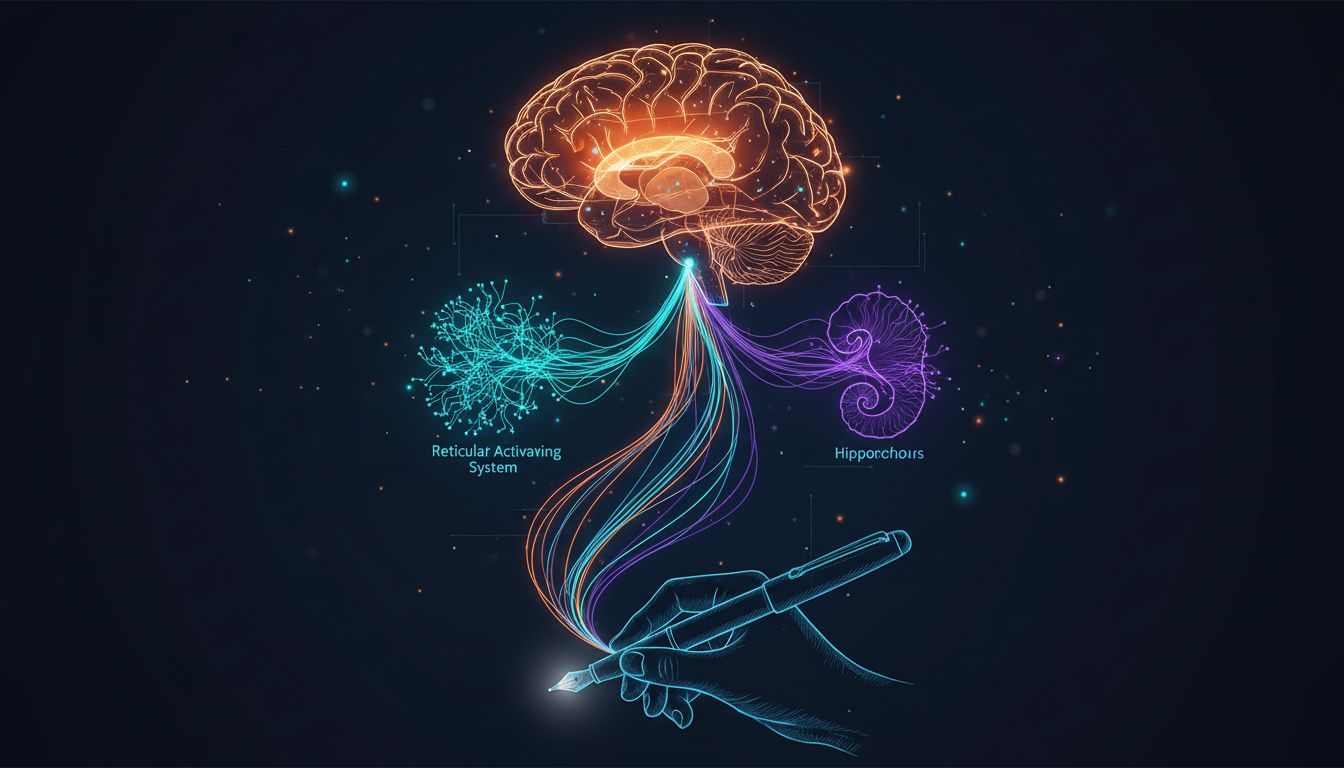

The reason is that cognitive load does not work like a simple dial you can turn up through interface restrictions. The benefit of handwriting is not the slowness per se. It is the specific motor engagement that co-occurs with the slowness. The act of forming letters by hand is often said to engage the reticular activating system (RAS), a network of neurons in the brainstem that regulates arousal and attention and, on this account, acts as a relevance filter for what gets consolidated into long-term memory. Think of it as a biological write-through cache with a priority queue, where physical letter formation is one of the signals that bumps items up in priority.

No keyboard interaction produces that signal. Touchscreen stylus input comes closer, which is why some researchers have found iPads with Apple Pencils narrowing (though not closing) the gap. But even there, the uniform smoothness of glass removes the haptic variability of pen on paper, and that variability matters more than it sounds. Your hand knows the difference between writing on textured paper and writing on glass, and that sensory difference contributes to the distinctiveness of the memory trace.

The Retrieval Architecture That Nobody Talks About

There is a second problem that is arguably more interesting from a systems design perspective. Memory retrieval is context-dependent. You remember things more easily when the conditions at retrieval match the conditions at encoding. Psychologists call this encoding specificity. Developers might think of it as key matching in a lookup table where the key includes environmental metadata, not just the content.

When you write something by hand in a physical notebook, the memory trace carries contextual metadata: where you were sitting, what the ambient noise was like, what the pen felt like, the specific shape of your handwriting that day. When you try to recall it later, any of those contextual cues can serve as a retrieval key. The memory is indexed redundantly across multiple sensory dimensions.

Digital notes stored in Notion or Obsidian exist in a perceptual environment that is nearly identical every time you open the app. Every entry looks roughly the same. The font is consistent. The background is the same shade of white. There is almost no contextual metadata attached to the encoding event because the encoding environment is deliberately, and somewhat ironically, frictionless. The apps are optimized for capture speed, which is exactly the wrong optimization for memory retention.
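The lookup-table analogy can be made concrete with a toy sketch. `CueIndex`, its methods, and the example cues are all hypothetical names invented for illustration. This models the analogy only, not how memory is actually implemented.

```python
from collections import defaultdict


class CueIndex:
    """Toy inverted index: each context cue maps to the notes encoded with it."""

    def __init__(self):
        self._by_cue = defaultdict(set)

    def encode(self, note: str, cues: set[str]) -> None:
        # Store the note under every cue present at encoding time.
        for cue in cues:
            self._by_cue[cue].add(note)

    def recall(self, cue: str) -> set[str]:
        # A single cue returns every note that was encoded alongside it.
        return set(self._by_cue.get(cue, set()))


# Handwritten notes: each one is encoded in a distinctive setting.
paper = CueIndex()
paper.encode("cache invalidation decision", {"cafe", "rain", "blue pen"})
paper.encode("retry policy", {"train", "quiet", "pencil"})

# App notes: every entry shares the same perceptual environment.
app = CueIndex()
app.encode("cache invalidation decision", {"white background", "default font"})
app.encode("retry policy", {"white background", "default font"})

print(paper.recall("rain"))            # distinctive cue narrows to one note
print(app.recall("white background"))  # shared cue matches every note
```

In the paper index any one of three cues leads back to a single note, which is the redundant indexing described above. In the app index the cues exist but discriminate nothing, so they are useless as retrieval keys.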

This is consistent with how productive remote workers actually structure their days: the most effective practitioners deliberately introduce friction into their digital workflows rather than eliminating it. That is counterintuitive, but it fits the neuroscience.

What This Means for How You Actually Work

None of this means you should throw your note-taking app into the trash. Digital tools are genuinely better at certain things: full-text search, cross-referencing, sharing with a team, and long-term storage without physical degradation. The argument is not that handwriting beats typing at everything. It is that handwriting beats typing at one very specific and very important thing, which is getting information from your working memory into durable long-term storage.

A practical synthesis looks something like this. Use digital tools for capture in fast-moving contexts such as meetings and standups, or for anything that needs to be searchable later. Then, for anything you actually need to remember and reason about later, including architectural decisions, design principles, or anything you might be asked to defend in six months, rewrite it by hand afterward. Do not copy it. Rewrite it in your own words, working from memory and notes combined.

This process draws on what psychologists call the generation effect: information you produce yourself is retained better than information you merely read. Combined with the initial capture, it tends to improve retention beyond either method alone, because you are forcing a second encoding pass with deeper processing. It is the cognitive equivalent of writing a unit test that actually exercises the code path you care about rather than just asserting the function exists.

The painful irony is that the note-taking app ecosystem has invested enormous engineering effort into features (backlinks, graph visualization, AI summarization, nested databases) that address the storage and retrieval problem while largely ignoring the encoding problem. It is a bit like notification systems that are optimized to keep you engaged rather than informed. The metric being optimized is not the one that matters to the user.

Until someone builds an input mechanism that genuinely replicates the motor-cognitive engagement of handwriting, the most honest thing the industry could do is acknowledge the tradeoff clearly. Some things are worth capturing fast. Other things are worth learning slowly. Your brain already knows the difference. The apps just have not caught up yet.