The Setup

In 2019, Google Health published research on an AI system trained to detect skin conditions from photographs. The team had access to something most medical AI researchers can only dream about: enormous volumes of real-world clinical images, the kind of messy, varied data that represents how dermatology actually works across diverse patient populations.

The logic was intuitive. Train on more data, cover more variation, build a more robust model. This is the foundational assumption behind most large-scale AI development. More is more.

Except it wasn’t.

When researchers evaluated the model against smaller systems trained on carefully curated, standardized datasets, the results were not what anyone expected. The smaller models, working from far less data, matched or outperformed the larger one on several key diagnostic tasks. The culprit was not the algorithm. It was the data itself.

What Happened

Real-world clinical images come with real-world problems. Lighting varies. Camera quality varies. Some photos are taken by specialists who know what they’re capturing, and some are taken by patients in bathroom mirrors. Metadata is inconsistent. Diagnostic labels, attached by different clinicians at different institutions, reflect different standards and different levels of certainty.

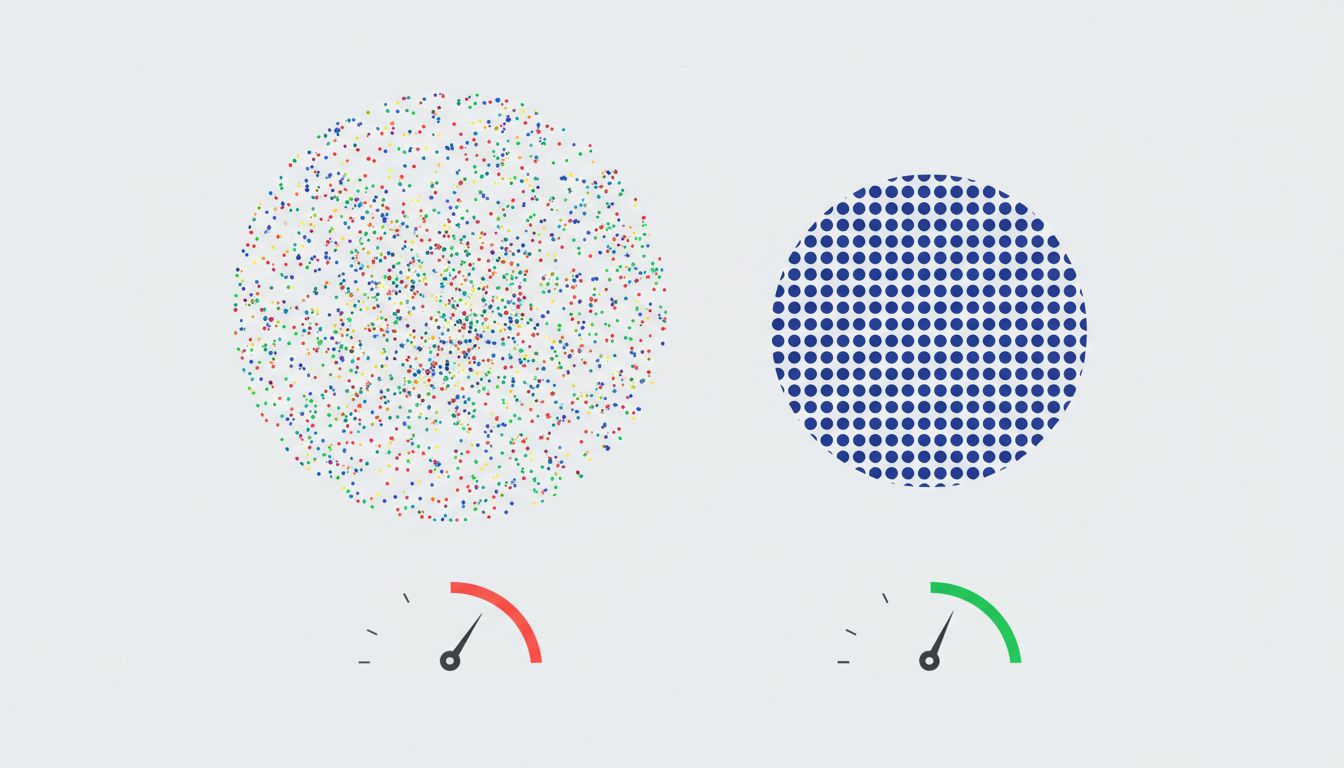

When you train a model on this kind of data at scale, you are not just teaching it to recognize skin conditions. You are teaching it to recognize all the noise that correlates with those conditions in your specific dataset. A model trained primarily on images from certain clinical settings may learn to associate image quality, lighting conditions, or even equipment artifacts with particular diagnoses. It is pattern-matching on the wrong patterns.

This phenomenon has a name: shortcut learning. The model finds the path of least resistance through the training data, which is not always the path that generalizes to the real world.
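To make the idea concrete, here is a minimal toy simulation in Python. It is not drawn from any of the studies discussed here. It assumes two hypothetical binary features: `lesion`, a genuine but imperfect signal that matches the diagnosis 80% of the time, and `lighting`, a confound that tracks the diagnosis closely in the training data but is pure noise at deployment. The "model" is deliberately the simplest possible learner: it keeps whichever single feature best predicts the label in training.

```python
import random

# Toy illustration only: the features, rates, and "model" are hypothetical.

def make_data(n, shortcut_rate, seed):
    """Generate n toy examples with a binary label and two binary features.

    'lesion' is the genuine but imperfect signal: it matches the label 80% of the time.
    'lighting' is a confound: it matches the label with probability shortcut_rate.
    """
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        label = rng.randint(0, 1)
        lesion = label if rng.random() < 0.80 else 1 - label
        lighting = label if rng.random() < shortcut_rate else 1 - label
        rows.append({"lesion": lesion, "lighting": lighting, "label": label})
    return rows

def accuracy(rows, feature):
    """Share of rows where predicting the label as the feature's value is correct."""
    return sum(r[feature] == r["label"] for r in rows) / len(rows)

def fit_stump(rows, features):
    """Simplest possible learner: keep whichever single feature scores best in training."""
    return max(features, key=lambda f: accuracy(rows, f))

train = make_data(5000, shortcut_rate=0.95, seed=0)   # confound tracks the label
deploy = make_data(5000, shortcut_rate=0.50, seed=1)  # confound is now pure noise

chosen = fit_stump(train, ["lesion", "lighting"])
train_acc = accuracy(train, chosen)
deploy_acc = accuracy(deploy, chosen)
print(f"model relies on {chosen!r}")
print(f"training accuracy {train_acc:.2f}, deployment accuracy {deploy_acc:.2f}")
print(f"'lesion' at deployment would have scored {accuracy(deploy, 'lesion'):.2f}")
```

Under these assumptions the learner picks `lighting`, scores around 95% in training, and falls to roughly chance at deployment, even though `lesion` would have held at about 80% in both settings. Real models are far more complex, but the underlying pressure, rewarding whatever happens to correlate with the label in the data at hand, is the same in spirit.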

The curated datasets, despite being smaller, had been cleaned to remove these confounds. Images were standardized. Labels were reviewed. The signal-to-noise ratio was higher. The models trained on them learned something closer to the actual underlying medical signal.

This is not an isolated finding specific to dermatology. Researchers at Stanford and MIT have documented similar dynamics in radiology AI, where models trained on large hospital datasets absorbed institutional biases so thoroughly that they performed significantly worse when deployed at a different hospital that used different imaging equipment. The model had learned the scanner, not the disease.

A particularly striking example emerged from a chest X-ray study where an AI system appeared to achieve impressive pneumonia detection accuracy. Later analysis revealed it had partially learned to flag images that contained a small metal token used by one hospital to mark patient positioning. That token correlated with certain patient populations in the training data. The model was, in part, detecting a piece of metal rather than a disease.

Why It Matters

The conventional narrative around AI scaling goes like this: gather more data, train bigger models, get better results. This narrative is not wrong exactly, but it is dangerously incomplete. It describes what happens when your data quality scales with your data quantity. That condition is rarely met in practice.

For anyone building or buying AI systems, this creates a specific practical problem. Bigger training datasets are expensive to assemble, so organizations that can afford scale have a presumed advantage. If that advantage is illusory, or worse, if it becomes a liability, decisions made on the assumption that more data means better performance will fail quietly and expensively.

The failure mode is subtle because these models do not fail loudly. They produce confident outputs. Their aggregate accuracy metrics may look reasonable. The problems surface in deployment, in the specific cases where the model’s learned shortcuts diverge from reality, which is precisely where reliability matters most.

This connects to something broader about how we evaluate AI systems before releasing them. A model that scores well on a benchmark derived from the same distribution as its training data can still be learning entirely the wrong things. If you want to know what a model actually learned, you need evaluation data that breaks the shortcuts, not data that rewards them. That is much harder to build, and it gets skipped far more often than it should.
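One common way to build such an evaluation set, sketched below with assumed field names, is to sample the same number of examples from every combination of label and suspected confound (here a hypothetical `site` field). In the balanced set the confound carries no information about the label, so a model that leans on it cannot beat chance. Note the limitation: this only neutralizes confounds you already suspect; it does not discover new ones.

```python
import random
from collections import defaultdict

def balanced_eval_set(rows, label_key, confound_key, per_cell, seed=0):
    """Sample per_cell examples from every (label, confound value) combination.

    In the result, every label appears equally often with every confound value,
    so the confound alone carries no information about the label.
    Raises ValueError if any combination has too few examples.
    """
    cells = defaultdict(list)
    for r in rows:
        cells[(r[label_key], r[confound_key])].append(r)
    labels = sorted({lab for lab, _ in cells})
    confounds = sorted({con for _, con in cells})
    rng = random.Random(seed)
    out = []
    for lab in labels:
        for con in confounds:
            candidates = cells.get((lab, con), [])
            if len(candidates) < per_cell:
                raise ValueError(
                    f"only {len(candidates)} examples with {label_key}={lab!r}, {confound_key}={con!r}"
                )
            out.extend(rng.sample(candidates, per_cell))
    rng.shuffle(out)
    return out

# Hypothetical pool in which site "A" holds mostly positive cases.
rng = random.Random(42)
pool = []
for _ in range(2000):
    label = rng.randint(0, 1)
    agrees = rng.random() < 0.9  # site matches the label 90% of the time
    if label == 1:
        site = "A" if agrees else "B"
    else:
        site = "B" if agrees else "A"
    pool.append({"label": label, "site": site})

def site_shortcut(r):
    """A 'model' that ignores the image and predicts from the site alone."""
    return 1 if r["site"] == "A" else 0

def score(rows):
    return sum(site_shortcut(r) == r["label"] for r in rows) / len(rows)

eval_set = balanced_eval_set(pool, "label", "site", per_cell=50)
print(f"shortcut accuracy on the original pool: {score(pool):.2f}")
print(f"shortcut accuracy on the balanced set:  {score(eval_set):.2f}")
```

On the original pool the site-only shortcut looks strong; on the balanced set it scores exactly 50%, because each of the four label/site cells contributes the same number of examples and the shortcut is right on exactly two of them.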

What We Can Learn

If you are making decisions about AI systems, whether you are building them, procuring them, or deploying them, here is what the dermatology research teaches you.

Question the data before you question the algorithm. When a model underperforms expectations, the instinct is usually to change the architecture or tune the hyperparameters. Audit the training data first. Look for spurious correlations. Ask what else changed in the environment when the conditions you care about changed.
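A first-pass audit can be as simple as checking whether any metadata field predicts the label on its own. The sketch below assumes each training record is a dict with hypothetical metadata fields such as `clinic` and `camera` alongside a binary `label`, and flags fields whose positive rate differs across values by more than an arbitrary threshold. A flagged field is not proof of a shortcut, since clinics can genuinely see different case mixes, but it tells you where to look.

```python
from collections import defaultdict

def label_rate_by_value(rows, field, label_key="label", positive=1):
    """Return {field value: share of rows with that value whose label is positive}."""
    counts = defaultdict(lambda: [0, 0])  # value -> [positives, total]
    for r in rows:
        c = counts[r[field]]
        c[0] += r[label_key] == positive
        c[1] += 1
    return {value: pos / total for value, (pos, total) in counts.items()}

def flag_suspicious_fields(rows, fields, max_spread=0.3, **kwargs):
    """Flag metadata fields whose positive rate varies by more than max_spread across values.

    The 0.3 default is an arbitrary illustration, not a recommended threshold.
    """
    flagged = {}
    for field in fields:
        rates = label_rate_by_value(rows, field, **kwargs)
        if max(rates.values()) - min(rates.values()) > max_spread:
            flagged[field] = rates
    return flagged

# Hypothetical training records: metadata fields plus a binary diagnosis label.
rows = [
    {"clinic": "north", "camera": "phone", "label": 1},
    {"clinic": "north", "camera": "dslr", "label": 1},
    {"clinic": "north", "camera": "phone", "label": 1},
    {"clinic": "north", "camera": "dslr", "label": 0},
    {"clinic": "south", "camera": "phone", "label": 0},
    {"clinic": "south", "camera": "dslr", "label": 0},
    {"clinic": "south", "camera": "phone", "label": 0},
    {"clinic": "south", "camera": "dslr", "label": 1},
]
print(flag_suspicious_fields(rows, ["clinic", "camera"]))
# {'clinic': {'north': 0.75, 'south': 0.25}}
```

Here `clinic` is flagged (75% positive at one, 25% at the other) while `camera` is not, which is the cue to ask what else differs between the two clinics.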

Treat data curation as a core engineering task, not a preprocessing step. The teams that built the smaller, better-performing dermatology models did not have less work to do. They had different work to do: reviewing labels, standardizing image capture conditions, removing examples where the ground truth was ambiguous. This work is unglamorous and time-consuming. It is also frequently the difference between a model that works in deployment and one that only performs well on a benchmark.
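Removing ambiguous ground truth can be made an explicit, reviewable step. A minimal sketch, assuming each image has been labeled independently by several reviewers (the ids, labels, and thresholds are all hypothetical): keep an example only if enough reviewers looked at it and a large enough share of them agree, and record what was dropped.

```python
from collections import Counter

def curate_by_agreement(annotations, min_agreement=0.75, min_reviewers=2):
    """Keep only examples whose reviewers largely agree.

    annotations maps an example id to the list of labels assigned by different
    reviewers. Returns (kept, dropped): kept maps id -> consensus label, and
    dropped lists the ids removed for too few reviewers or too little agreement.
    """
    kept, dropped = {}, []
    for example_id, labels in annotations.items():
        if len(labels) < min_reviewers:
            dropped.append(example_id)
            continue
        label, votes = Counter(labels).most_common(1)[0]
        if votes / len(labels) >= min_agreement:
            kept[example_id] = label
        else:
            dropped.append(example_id)
    return kept, dropped

# Hypothetical reviewer labels for four images.
annotations = {
    "img_001": ["melanoma", "melanoma", "melanoma"],
    "img_002": ["eczema", "psoriasis", "eczema"],
    "img_003": ["psoriasis", "psoriasis", "psoriasis", "eczema"],
    "img_004": ["eczema"],
}
kept, dropped = curate_by_agreement(annotations)
print(kept)     # {'img_001': 'melanoma', 'img_003': 'psoriasis'}
print(dropped)  # ['img_002', 'img_004']
```

`img_002` is dropped because only two of three reviewers agree, below the 75% threshold; `img_004` is dropped because a single reviewer cannot show agreement at all. Where to set these thresholds is a judgment call that trades dataset size against label reliability.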

Evaluate on distribution shift deliberately. If your model will be deployed in a different context than where it was trained (and it almost always will be), test it there explicitly before you rely on it. The hospital-to-hospital performance collapse in radiology AI is not a story about bad models. It is a story about models that were never tested outside the environment they learned.
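When data comes from several locations, leave-one-site-out evaluation is one way to do this: train on every site but one, test on the site held out, and repeat for each site. The toy sketch below assumes three hypothetical sites, a real but noisy `finding` feature, and an equipment `marker` that tracks the label at sites A and B but not at C. The "model" is again a single-feature learner standing in for something more complex.

```python
import random

def make_site(site, n, marker_rate, seed):
    """Toy data for one hypothetical site.

    'finding' matches the label 80% of the time everywhere (the real signal).
    'marker' is an equipment artifact that matches the label with probability
    marker_rate, which differs from site to site.
    """
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        label = rng.randint(0, 1)
        finding = label if rng.random() < 0.80 else 1 - label
        marker = label if rng.random() < marker_rate else 1 - label
        rows.append({"site": site, "finding": finding, "marker": marker, "label": label})
    return rows

def fit_stump(rows, features=("finding", "marker")):
    """Toy model: choose the one feature that best matches the label in training."""
    return max(features, key=lambda f: sum(r[f] == r["label"] for r in rows))

def leave_one_site_out(rows, site_key="site"):
    """For each site: train on all other sites, then score on the held-out site."""
    scores = {}
    for held_out in sorted({r[site_key] for r in rows}):
        train = [r for r in rows if r[site_key] != held_out]
        test = [r for r in rows if r[site_key] == held_out]
        feature = fit_stump(train)
        acc = sum(r[feature] == r["label"] for r in test) / len(test)
        scores[held_out] = (feature, acc)
    return scores

rows = (make_site("A", 2000, 0.98, seed=1)
        + make_site("B", 2000, 0.98, seed=2)
        + make_site("C", 2000, 0.50, seed=3))

scores = leave_one_site_out(rows)
for site, (feature, acc) in scores.items():
    print(f"held out {site}: model relies on {feature!r}, accuracy {acc:.2f}")
```

When site C is held out, the model trained on A and B latches onto `marker` and drops to about chance at C. A single random test split pooled across all three sites would mix C's examples in with A's and B's, and the overall score could mask that collapse.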

Recognize that scale is a tool, not a guarantee. Scaling data works when quality scales with quantity. When it doesn’t, you get a larger model that has learned a more elaborate version of the wrong thing. Researchers at Google, Meta, and elsewhere who have documented this increasingly treat data curation, not data volume, as the primary lever.

The practical implication for anyone evaluating an AI system: ask the vendor or the team not just how much data the model was trained on, but how the training data was curated and what steps were taken to identify and remove spurious correlations. If the answer is vague, that tells you something.

More data can absolutely produce better models. But the relationship is conditional, and the condition is quality. Pouring more water into a bucket with a hole in it does not give you more water. It gives you a larger mess to clean up.