In 2015, Spotify launched Discover Weekly and pulled off something that felt almost impossible: a weekly playlist of 30 songs you had never heard, almost all of which you liked. For many users, it was uncanny. For engineers, it was a lesson in what happens when you stop thinking about music as a catalog and start thinking about it as a space.

A core technique behind that experience was the embedding, an idea borrowed almost directly from natural language processing. Specifically, Spotify’s team adapted word2vec, an algorithm published by researchers at Google in 2013 to learn relationships between words from text. The insight was strange but elegant: if you can treat listening sessions the way a linguist treats sentences, then songs become words, and the “meaning” of a song becomes its position in a high-dimensional space.

That phrase, high-dimensional space, is where most explanations lose people. So let’s slow down.

What an Embedding Actually Is

Start with a simpler example. Suppose you want to represent colors mathematically. You could describe any color with three numbers: its red, green, and blue components. Pure red is (255, 0, 0). A muddy olive green is something like (128, 128, 0). The color exists as a point in a three-dimensional space, and colors that look similar to each other are close together in that space.

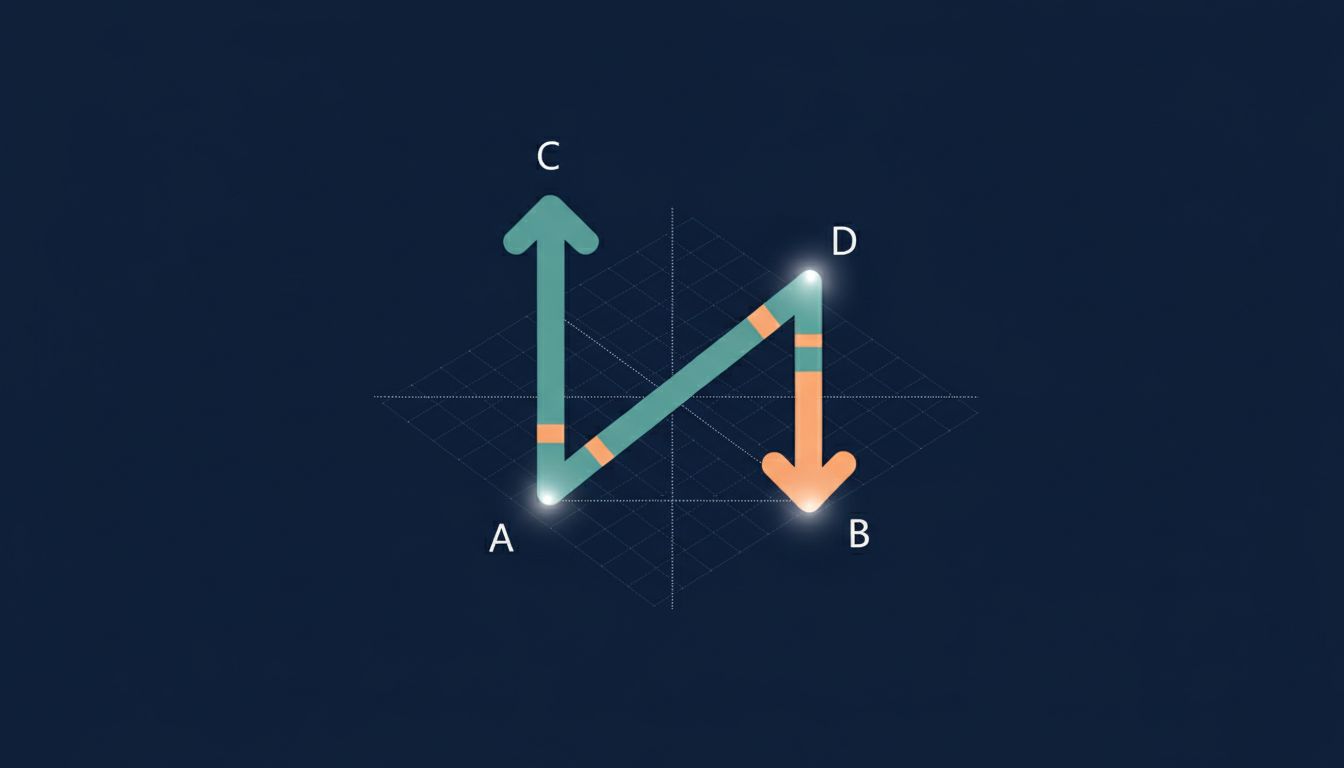
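To make the color example concrete, here is a minimal sketch that treats RGB triples as points and measures how far apart they are. The color names and the `color_distance` helper are illustrative, and straight-line distance in RGB is only a rough stand-in for how similar two colors look to a person.

```python
import math

def color_distance(a, b):
    """Euclidean (straight-line) distance between two RGB triples."""
    return math.dist(a, b)

pure_red = (255, 0, 0)
dark_red = (200, 0, 0)   # illustrative value, chosen to sit near pure red
olive = (128, 128, 0)

print(color_distance(pure_red, dark_red))  # 55.0
print(color_distance(pure_red, olive))     # about 180.3
```

The two reds sit much closer together than red and olive, which matches what the eye reports. An embedding aims for the same property, except that the coordinates are learned rather than given.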

An embedding does exactly this, but for things that don’t have obvious numerical properties, like words, songs, or user preferences. You assign each item a list of numbers (a vector), train a model to position similar items near each other, and then use the resulting geometry to make inferences.

The word2vec insight, which Spotify’s team extended, was that you can learn these positions by watching what appears next to what. In text, words that appear in similar contexts end up with similar vectors. “Dog” and “puppy” cluster together because they appear near words like “leash,” “bark,” and “walk.” Nobody hand-coded that relationship. The model inferred it from patterns in millions of sentences.

Spotify replaced sentences with listening sessions. If a user plays Radiohead, then Portishead, then Massive Attack in a single session, those three artists end up positioned near each other in the model’s learned space. Do that across hundreds of millions of listening sessions, and you get a map of music where proximity means something real: not genre exactly, and not tempo or key, but something more like “the kind of person who listens to one tends to listen to the other.”
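The “sessions as sentences” idea can be sketched in a few lines. The code below is not Spotify’s pipeline; it only shows the first step of a skip-gram-style approach, which is turning each session into (item, neighbor) pairs. The sessions are toy data, and `context_pairs` and the `window` parameter are names invented for this illustration. A real model would then learn vectors that make these pairs predictable, a step omitted here.

```python
from collections import Counter

def context_pairs(session, window=2):
    """Yield (item, neighbor) pairs for items within `window` positions."""
    for i, item in enumerate(session):
        lo = max(0, i - window)
        hi = min(len(session), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                yield item, session[j]

# Toy listening sessions (made up for illustration).
sessions = [
    ["Radiohead", "Portishead", "Massive Attack"],
    ["Portishead", "Massive Attack", "Tricky"],
    ["Radiohead", "Portishead"],
]

# Count how often each pair shares a session window.
pair_counts = Counter(
    pair for session in sessions for pair in context_pairs(session)
)

print(pair_counts[("Radiohead", "Portishead")])  # 2
print(pair_counts[("Radiohead", "Tricky")])      # 0
```

Pairs that show up often are the ones training pulls together in the learned space. Pairs that never co-occur, like the last one here, get no such pull.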

Why Geometry Does the Work

Once you have a good embedding space, you get a lot for free. “Similarity” becomes distance. You can ask: which songs are closest to the ones this user already loves? You can also do arithmetic. The famous word2vec demo showed that the vector for “king” minus “man” plus “woman” points very close to “queen.” Spotify’s music space has analogous structure. If you take an artist’s vector and move in certain directions, you reliably find artists with related energy but different genre, or similar genre but different energy.
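Both operations, similarity lookup and vector arithmetic, fit in a short sketch. The vectors below are hand-made two-dimensional toys, not learned embeddings: one axis loosely stands for “royalty” and the other for “gender,” which is an assumption made purely so the arithmetic is easy to follow. Real embedding axes are learned and rarely this interpretable.

```python
import math

def cosine(a, b):
    """Cosine similarity: 1.0 means same direction, -1.0 means opposite."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return dot / (norm_a * norm_b)

# Hand-made toy vectors (hypothetical): (royalty, gender).
vectors = {
    "king": (1.0, 1.0),
    "queen": (1.0, -1.0),
    "man": (0.0, 1.0),
    "woman": (0.0, -1.0),
    "boy": (-0.5, 1.0),
    "girl": (-0.5, -1.0),
}

def nearest(query, exclude=()):
    """Return the word whose vector points most nearly the same way as `query`."""
    candidates = (w for w in vectors if w not in exclude)
    return max(candidates, key=lambda w: cosine(query, vectors[w]))

# king - man + woman, computed component by component.
query = tuple(
    k - m + w
    for k, m, w in zip(vectors["king"], vectors["man"], vectors["woman"])
)

print(nearest(query, exclude={"king", "man", "woman"}))  # queen
```

Excluding the input words from the search is the usual convention in this demo, since the query often lands closest to one of its own ingredients.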

This is the part that surprises people when they first encounter it: the model was never told what “energy” or “genre” meant. It never saw a taxonomy. It learned structure purely from co-occurrence patterns in human behavior, and that structure turned out to align remarkably well with human intuition.

Discover Weekly’s accuracy wasn’t magic. It was a consequence of training on a very large and very honest dataset. Listening behavior is more truthful than ratings or reviews. People don’t finish songs they don’t like. They skip, they repeat, they queue. That signal is precise in ways that stars and thumbs aren’t.

What the Spotify Case Teaches Engineers

This story matters beyond Spotify because embeddings are now infrastructure. They’re inside search engines, recommendation systems, fraud detection pipelines, customer support tools, and most modern LLM-powered products. If you’re building anything that involves finding “similar” items or understanding meaning at scale, you’re almost certainly working with embeddings, or you should be.

But the Spotify case carries a warning too. It’s easy to treat embeddings as a black box you drop in, let the vendor manage, and call done. That’s a mistake. The embedding space you use encodes the biases and assumptions of whatever data it was trained on. Spotify’s model learned from real listening behavior, which means it also learned from whatever patterns existed in that behavior, including the fact that some genres and artists receive more plays because of algorithmic promotion rather than genuine preference. The geometry of the space reflects reality and the distortions in reality simultaneously.

There’s also the question of dimensionality. Word2vec and most practical embedding models compress meaning into a few hundred to a few thousand dimensions. The pretrained word2vec vectors that Google released have 300 dimensions. Modern text embedding models often use 768 or 1536. These numbers aren’t magic; they represent a tradeoff between expressiveness and computational cost. More dimensions can capture more nuance, but they also require more data to train well and more compute to search at runtime. If you’re thinking about adding a vector database to your stack, the first question to answer is what your embedding model actually encodes. Infrastructure comes second.
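The cost side of that tradeoff is simple arithmetic. The sketch below assumes vectors stored as 32-bit floats and a hypothetical catalog of 10 million items; both numbers are placeholders, and the helper names are invented for this example. It ignores index overhead and approximate-search structures, which change the picture in practice.

```python
def index_size_bytes(num_items, dims, bytes_per_value=4):
    """Raw storage for the vectors alone (float32 by default)."""
    return num_items * dims * bytes_per_value

def brute_force_multiply_adds(num_items, dims):
    """Multiply-add operations for one exhaustive dot-product search."""
    return num_items * dims

NUM_ITEMS = 10_000_000  # hypothetical catalog size

for dims in (300, 768, 1536):
    gib = index_size_bytes(NUM_ITEMS, dims) / 2**30
    ops = brute_force_multiply_adds(NUM_ITEMS, dims)
    print(f"{dims:>5} dims: {gib:5.1f} GiB, {ops:,} multiply-adds per query")
```

Under these assumptions the raw vectors come to roughly 11, 29, and 57 GiB. Both storage and exhaustive search cost grow linearly with dimension, so doubling from 768 to 1536 doubles each.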

The Limits of the Map

Embeddings are representations, not ground truth. Spotify’s model doesn’t know that a song is melancholy or triumphant in any meaningful sense. It knows that users who listen to one set of melancholy songs also tend to listen to others. The emotion is in the listener, not the vector. The model captures a shadow of that emotion through behavior.

This matters when you’re building something that has to be reliable. An embedding-based search might return results that are geometrically close in a way that feels semantically wrong to a domain expert. Medical search is an obvious case: terms that co-occur frequently in clinical notes aren’t necessarily the ones a physician would consider similar. Tailoring embeddings to a specific domain, through fine-tuning on domain-specific data, is often necessary before they’re actually useful.

Spotify understood its domain well. The listening session as a unit of data made intuitive sense for music discovery. That’s not a given in every application. The teams that use embeddings well are the ones that have a clear theory of what co-occurrence means in their specific context, what signal their training data actually captures, and where the geometry will mislead them.

What Spotify built in 2015 is now a textbook example precisely because it made the abstract concrete. The team took a mathematical representation that existed in NLP research, applied it to a domain where the input data was clean and behavioral, and produced a product that felt human. The numbers were never describing music. They were describing people’s relationship to music. That distinction is everything.