In early 2023, Microsoft made a bet so large it reshaped the valuation of an entire industry. The company reportedly committed roughly $10 billion to OpenAI and began integrating GPT-4 across its product surface: Word, Excel, Outlook, Teams, PowerPoint, and Windows itself. The marketing term was Copilot. The promise was that AI would become your collaborative partner across every task in the Microsoft 365 suite.

By most measures of user adoption, the rollout has been uneven at best. Enterprise customers have been slow to enable it. IT administrators have raised consistent concerns about data handling and hallucination risks. Surveys of knowledge workers show persistent skepticism about whether the outputs can be trusted without careful review. The most common complaint is not that Copilot is useless, but that using it responsibly requires so much verification that the time savings disappear.

So why did Microsoft ship it anyway, at this scale, this fast?

The Setup

To understand the decision, you have to think about what Microsoft’s real product was in early 2023. It wasn’t Word. It wasn’t Teams. It was the narrative that Microsoft had stopped being the company that missed the internet, missed mobile, and arrived late to cloud computing.

Microsoft’s stock had already climbed dramatically under Satya Nadella, largely because investors came to believe the company could adapt. The OpenAI partnership and the Copilot rollout were not primarily engineering decisions. They were thesis-confirmation events for an investor class that had been watching Google, Meta, and Amazon all signal their own AI commitments. Microsoft needed to signal loudest and fastest.

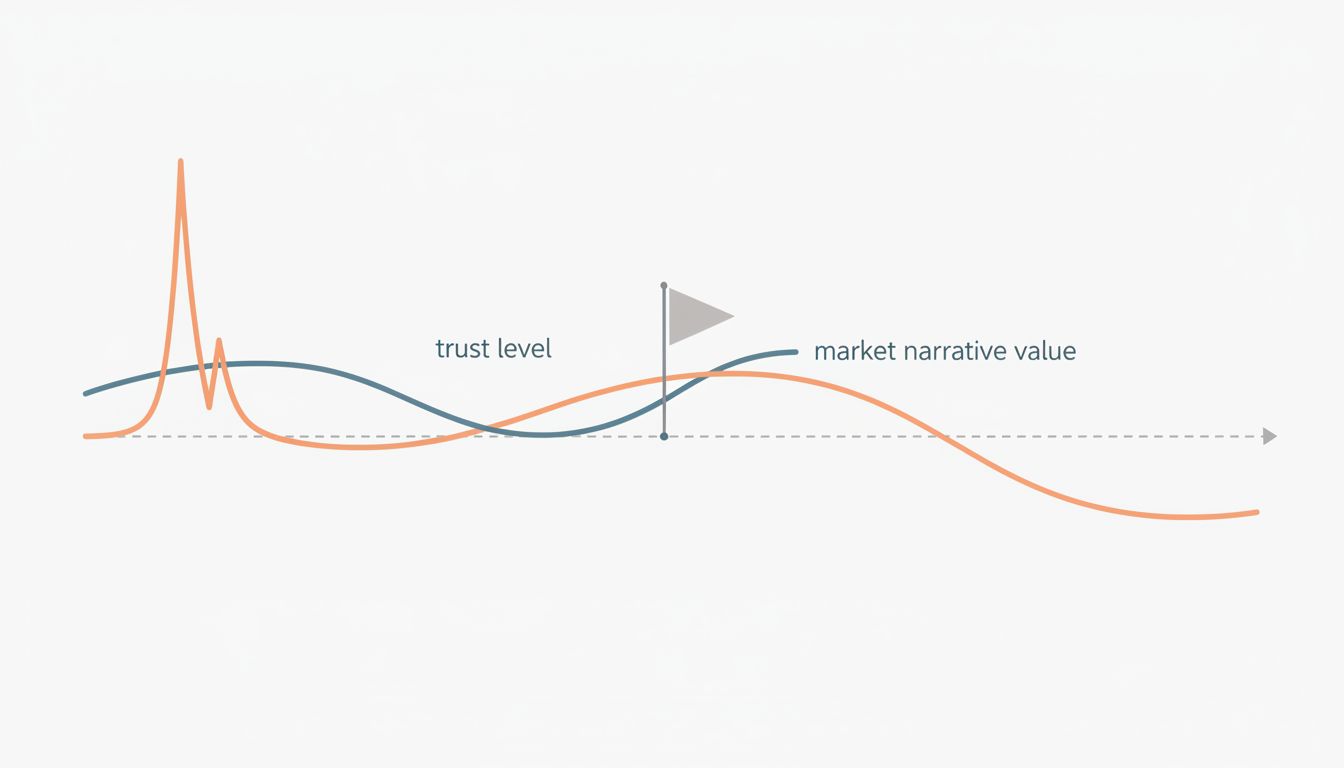

This is the hidden mechanic that most coverage misses: when a company’s market capitalization is built partly on a future-capability story, shipping a product is sometimes less about the product working than about proving the capability exists. The product is evidence. The real output is confidence.

What Happened

Microsoft priced Copilot for Microsoft 365 at $30 per user per month on top of existing subscriptions, an aggressive number for features that many enterprise users found inconsistent. The company began putting Copilot+ branding on new PC hardware, tying AI capability to hardware refresh cycles. It rebranded the UI next to the Windows Start button as Copilot. At one point, it mapped a dedicated Copilot key onto new keyboards, before quietly walking that back as the standalone app strategy evolved.

The pattern here is familiar if you’ve watched Microsoft’s product history: heavy commitment to a brand before the underlying experience fully justifies the brand. Microsoft did this with Surface, with Cortana, with Teams during the pandemic. Some of those bets worked. Cortana is essentially gone. Teams survived because remote work created a forcing function that had nothing to do with the product’s intrinsic quality.

But the Copilot situation is instructive in a specific way that Cortana wasn’t. Microsoft isn’t hiding the trust problem. Its own documentation for enterprise Copilot deployment includes extensive guidance on building “human review workflows” into AI-assisted tasks. The company openly acknowledges that outputs require verification. It ships the feature and the disclaimer simultaneously.

This is not cognitive dissonance. It’s a deliberate posture. The message to enterprise IT is: we know this isn’t fully reliable yet, here’s how to use it responsibly. The message to the market is: we are the company doing this at scale. Both messages are true, and they serve different audiences.

Why It Matters

There’s a useful framing from software architecture called “optimistic UI,” where an interface updates immediately to reflect an action before the server has confirmed it, betting that the confirmation will arrive and rolling back gracefully if it doesn’t. Microsoft’s Copilot strategy is something like optimistic market positioning. Ship the capability, claim the narrative position, and let the product quality catch up to the promise.

The risk in optimistic UI is the rollback. If the server never confirms, users see a jarring correction. The risk in optimistic market positioning is similar: if the product quality doesn’t close the gap, the brand absorbs the damage of the gap.
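For readers who haven’t met the pattern, here is a minimal sketch of optimistic UI in Python. Everything in it is hypothetical and chosen for illustration: the `LikeButton` class, the `send_to_server` callback, and the two stand-in servers are assumptions, not any real framework’s API. A production interface would make the server call asynchronously; the sketch keeps it synchronous so the update-then-rollback order is easy to follow.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LikeButton:
    """Hypothetical UI state for a single 'like' toggle."""
    liked: bool = False
    history: list[str] = field(default_factory=list)

    def click(self, send_to_server: Callable[[bool], bool]) -> None:
        previous = self.liked
        # Optimistic step: show the new state before the server answers.
        self.liked = not previous
        self.history.append(f"shown:{self.liked}")
        try:
            confirmed = send_to_server(self.liked)
        except ConnectionError:
            confirmed = False
        if not confirmed:
            # Rollback: the bet failed, so restore what the user saw before.
            self.liked = previous
            self.history.append(f"rolled_back:{self.liked}")


def server_ok(new_state: bool) -> bool:
    """Stand-in server that always confirms the change."""
    return True


def server_down(new_state: bool) -> bool:
    """Stand-in server that never confirms."""
    raise ConnectionError("no confirmation")


button = LikeButton()
button.click(server_ok)    # confirmed: the flipped state stays
button.click(server_down)  # unconfirmed: the state flips, then reverts
print(button.liked, button.history)
```

The second click is the jarring correction: the user briefly sees a state that the system then takes back. The cost of the rollback is paid by whoever trusted the first display.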

What makes this particularly interesting is the trust dimension. Users don’t distrust Copilot because it’s new. They distrust it because it sometimes produces fluent, confident, wrong answers, the classic large language model failure mode. And that failure mode strikes at the assumption the entire use case rests on: that the output can be used without redoing the work. If you can’t trust a writing assistant’s output without reading it as carefully as you’d read your own draft, the assistant hasn’t saved you work. It’s added a reviewing step to work you could have done yourself.

Microsoft knows this. The engineering teams at OpenAI know this. The research community has been writing about hallucination and calibration in language models for years. The decision to ship broadly anyway reflects a calculation that the strategic value of market position outweighs the product value of waiting until the trust problem is solved.

That calculation might even be correct. Big tech companies have a long history of shaping markets by moving early, even when the early product is rough. And it’s genuinely possible that the hallucination problem improves significantly over the next few years, at which point Microsoft will already own the enterprise workflow integrations and the institutional familiarity.

But there’s a cost that this framing tends to undercount: the users who form their lasting mental model of AI assistants during this early period. Adoption research consistently shows that initial experiences with a category of tool set expectations that are hard to revise upward. A developer who tries Copilot in 2024, finds it unreliable for their specific workflow, and stops using it isn’t just a lost user today. They become the skeptical senior engineer who counsels their team against AI tools in 2026, when the tools might genuinely be worth using.

Default settings and initial experiences carry disproportionate weight in how users relate to products long-term, and the same principle applies to first encounters with entire product categories.

What We Can Learn

The lesson isn’t that Microsoft made a mistake. It’s that the incentive structure of public technology companies creates a systematic pressure to ship AI features for audiences other than users. Investors, analysts, enterprise procurement cycles, board narratives, and competitive benchmarking all create demand for visible AI capability that has almost nothing to do with whether the feature improves someone’s Tuesday afternoon.

This matters for anyone building software right now. If you’re a product manager feeling pressure to add an AI feature because a competitor just announced one, the honest question to ask is: who is this feature actually for? If the answer is “our next funding deck” or “the press release” or “so we can say we have it,” that’s not automatically wrong. But it’s worth being clear about it, because the product decisions that follow from “this is for users” are very different from the product decisions that follow from “this is for positioning.”

For users, the practical takeaway is simpler: when a major tech company ships an AI feature with extensive caveats and trust-building documentation baked in from day one, that documentation is not fine print. It’s the actual instruction manual. The feature is in early access whether or not it’s labeled that way.

Microsoft will probably be fine. The enterprise relationships are deep, the integration surface is enormous, and the underlying model capability is genuinely improving. But the Copilot rollout is a clean example of a dynamic that will repeat across the industry for years: features built for market signals, shipped to users, with trust as a problem to be solved in a future update.