The intuition behind machine learning feels obvious: more data means better models. More examples, more patterns, more signal. Companies have spent billions on this premise, racing to assemble ever-larger datasets. But researchers keep running into a stubborn counterexample. In certain conditions, adding more training data makes models perform measurably worse. Not slightly worse. Significantly worse.

This isn’t a fringe finding. It shows up across domains, from image classifiers to language models to recommendation systems. Understanding why it happens requires abandoning the idea that data is straightforwardly good, and thinking more carefully about what a model is actually doing when it trains.

The Problem Has a Name: Distribution Shift

The most common culprit is distribution shift: the data a model trains on is drawn from a different distribution than the data it meets in deployment. When that happens, the extra data added during training can turn out to be a poor representation of the real world the model needs to operate in.

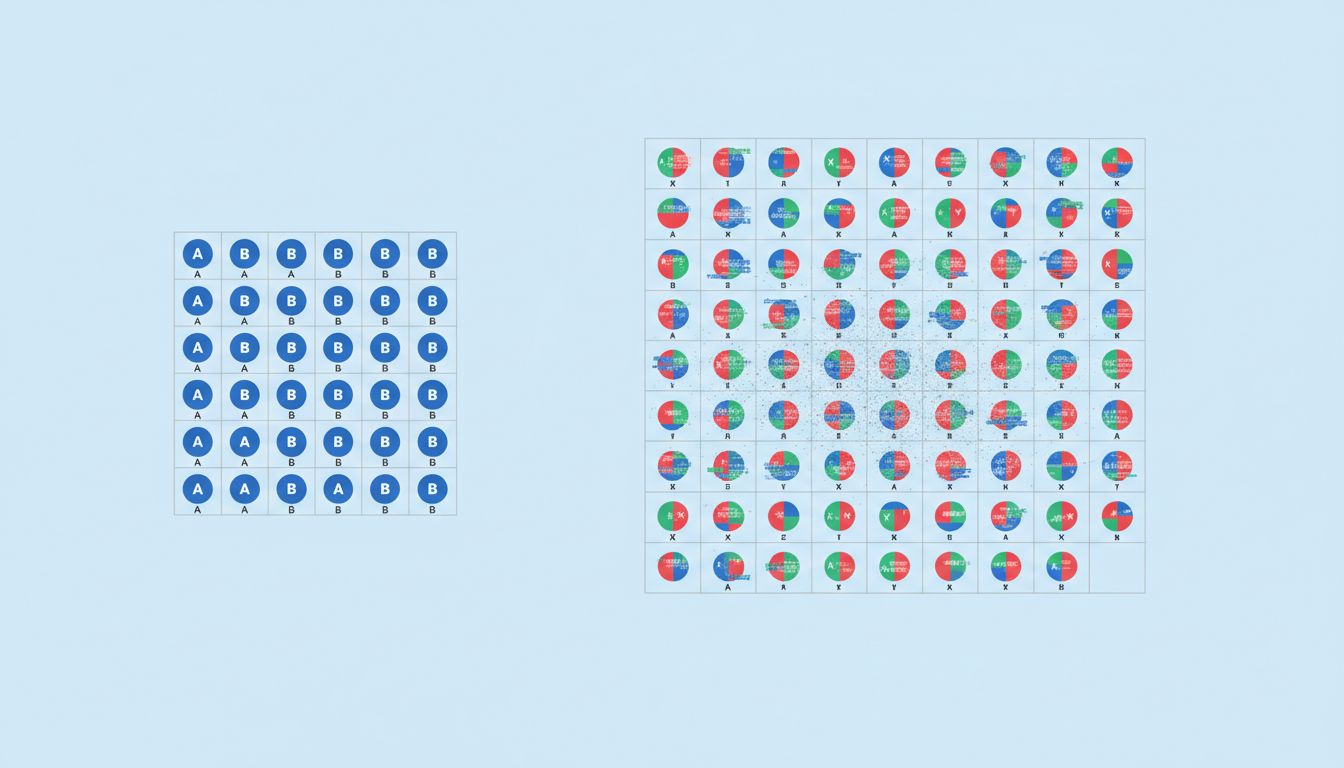

Here’s a concrete version of the problem. Suppose you’re training a medical image classifier on hospital records. You start with a clean, carefully curated dataset from a single institution. The model performs well. Then you add data from dozens more hospitals, collected under different imaging equipment, different protocols, different patient demographics. You’ve increased your dataset size tenfold. But now your model has learned not just the medical patterns you care about but also equipment artifacts, institutional biases, and labeling inconsistencies that don’t generalize. Performance drops.

Radiology AI studies in recent years have reported this pattern. Models trained on diverse multi-site data often underperformed models trained on smaller, carefully controlled single-site datasets when evaluated on held-out samples from a new institution. The additional data added noise that the model couldn’t distinguish from signal.
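To make the mechanism concrete, here is a deliberately tiny sketch in Python. Everything in it is hypothetical: the "scanner offset" of 3, the one-feature threshold classifier, and the assumption that the deployment target looks like the original site. It is a toy, not a reproduction of any radiology study, but it shows how pooling in ten times more data from shifted sources can drag a decision boundary away from where the target needs it.

```python
def fit_threshold(samples):
    """Pick the cutoff t with the fewest training errors for the rule 'predict 1 if x >= t'."""
    best_t, best_errors = None, None
    for t in sorted({x for x, _ in samples}):
        errors = sum((x >= t) != bool(y) for x, y in samples)
        if best_errors is None or errors < best_errors:
            best_t, best_errors = t, errors
    return best_t


def accuracy(t, samples):
    """Fraction of samples the rule 'predict 1 if x >= t' gets right."""
    return sum((x >= t) == bool(y) for x, y in samples) / len(samples)


# Hypothetical original site: a case is positive when its reading is 5 or more.
site_a = [(x, int(x >= 5)) for x in range(10)]

# Hypothetical extra sites: same cases, but the equipment adds an offset of 3
# to every reading, so positives now start at 8 instead of 5.
one_shifted_site = [(x + 3, int(x >= 5)) for x in range(10)]
pooled = site_a + one_shifted_site * 9  # ten times the original data

# Assumed deployment target: unseen readings that follow the original site's rule.
target = [(x + 0.5, int(x + 0.5 >= 5)) for x in range(10)]

t_single = fit_threshold(site_a)
t_pooled = fit_threshold(pooled)
acc_single = accuracy(t_single, target)
acc_pooled = accuracy(t_pooled, target)

print(f"single-site cutoff={t_single}, target accuracy={acc_single:.2f}")
print(f"pooled cutoff={t_pooled}, target accuracy={acc_pooled:.2f}")
```

In this toy, the pooled model fits its own training data almost perfectly, which is exactly why the problem is easy to miss: the cutoff has moved to suit the majority of the pooled data, not the target.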

Overfitting to the Wrong Things

Distribution shift is one mechanism. Overfitting is another, and the relationship between the two is tighter than most people realize.

Overfitting traditionally means a model memorizes the training data too closely and fails to generalize. The classic fix is more data, because more examples force the model to find patterns that are genuinely stable rather than quirks of a small sample. This is why the “more data” intuition exists and why it’s often correct.

But there’s a subtler version. If the additional data you’re adding is low quality, mislabeled, or drawn from a different distribution than your target task, then adding more of it doesn’t reduce overfitting. It shifts what the model overfits to. Instead of memorizing noise in a small clean dataset, the model learns to fit a large noisy one. The training loss goes down. Performance on the thing you actually care about goes down too.

Language models have run into this at scale. Researchers working on the Chinchilla paper at DeepMind found that many large language models had been substantially undertrained relative to their size. The community had been scaling parameters while keeping dataset sizes comparatively fixed. That finding argues for more data, but it comes with a caveat that is equally real: if you’re pulling from lower-quality web data to pad your training corpus, you can actively contaminate a model’s learned representations. The model starts optimizing for statistical patterns that are artifacts of how the internet is written rather than the underlying structure of language or reasoning.

The Label Noise Trap

One of the more underappreciated ways that more data becomes worse data is through label noise. In supervised learning, your model is only as good as the labels it trains on. When datasets are small, teams can often hand-verify annotations. When they scale to millions or billions of examples, that’s no longer possible. Labels get crowdsourced, automated, or inferred, and error rates creep up.

A series of experiments across different benchmark tasks has shown that even moderate label error rates (somewhere in the range of 10-15%) can cause significant performance degradation, particularly for minority classes in imbalanced datasets. The problem gets worse as datasets scale: the absolute number of corrupted labels grows, and the model sees those corruptions repeatedly across training epochs.
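A quick back-of-the-envelope calculation shows why minority classes take the hardest hit. The sketch below assumes a simple noise model that the experiments above may not share: a binary task where every label is flipped independently with the same probability. The function name and the 5% minority rate are illustrative choices, not figures from any study.

```python
def noisy_label_stats(n_examples, minority_rate, flip_rate):
    """Expected label counts under symmetric binary label noise (an assumed noise model)."""
    true_minority = n_examples * minority_rate
    true_majority = n_examples - true_minority
    kept = true_minority * (1 - flip_rate)   # minority examples still labeled minority
    leaked = true_majority * flip_rate       # majority examples mislabeled as minority
    return {
        # Absolute number of wrong labels grows linearly with dataset size.
        "corrupted_labels": n_examples * flip_rate,
        # Share of examples labeled "minority" that really are minority.
        "minority_label_precision": kept / (kept + leaked),
    }


small = noisy_label_stats(10_000, minority_rate=0.05, flip_rate=0.10)
large = noisy_label_stats(1_000_000, minority_rate=0.05, flip_rate=0.10)

for name, stats in (("small", small), ("large", large)):
    print(
        f"{name}: {stats['corrupted_labels']:.0f} corrupted labels, "
        f"minority-label precision {stats['minority_label_precision']:.2f}"
    )
```

Under these assumptions, a 10% flip rate means only about a third of the examples labeled as the minority class actually belong to it, because mislabeled majority examples outnumber the genuine minority ones. Scaling the dataset up does not change that ratio. It only multiplies the number of bad labels.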

The counterintuitive result: a smaller dataset with high label quality often beats a larger one with noisy labels. This is especially true for tasks where the model needs to learn fine-grained distinctions. The additional examples aren’t giving the model more information about the right answer. They’re giving it conflicting information about what the right answer even is.

When the Task Is the Variable, Not the Data

There’s a third mechanism that gets less attention: task mismatch. This comes up sharply in transfer learning and fine-tuning, which are now standard practice for deploying large models in specific applications.

The premise of transfer learning is that a model pre-trained on a broad task (say, predicting the next word in text scraped from the internet) will develop representations useful for narrower downstream tasks (say, classifying customer support tickets). This usually works. But the pre-training data and the downstream task can be in tension.

If you pre-train on a very large, very general corpus, your model may be excellent at tasks that resemble that corpus and poor at tasks that differ from it in meaningful ways. Adding more general pre-training data can actually widen the gap between what the model has learned and what your specific task requires. Fine-tuning helps, but the initialization matters. Several research teams working on scientific and technical language tasks have found that domain-specific models trained on smaller corpora outperform generalist models trained on orders of magnitude more data. In the generalist case, the additional data is not just unhelpful: it actively shapes the model’s representations away from the target domain.

What This Means for How We Build Models

The practical implication isn’t that data is bad or that you should train on less of it. The implication is that data quality, data relevance, and data distribution matter more than raw size, and in many real-world scenarios these properties degrade faster than volume grows.

This is a structural problem for the current moment in AI development. The cheapest path to more data is scraping more of the internet. But the internet’s distribution doesn’t match the distribution of most deployment environments. Medical records, legal documents, scientific literature, manufacturing sensor data, customer support conversations: none of these look like the average web page, and models trained primarily on web data carry that mismatch into every specialized application they’re adapted for.
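One rough way to put a number on that mismatch is to compare a single feature across the two sources. The sketch below computes a two-sample Kolmogorov-Smirnov statistic, the largest gap between two empirical CDFs, using only the standard library. The feature (document length in words) and the sample values are made up for illustration, and a single-feature check like this is a smoke test at best, not a full measure of distribution shift.

```python
from bisect import bisect_right


def ks_statistic(a, b):
    """Largest gap between the empirical CDFs of two samples (0 = identical, 1 = no overlap)."""
    a_sorted, b_sorted = sorted(a), sorted(b)
    gap = 0.0
    # The maximum gap between two step functions is reached at one of the sample points.
    for x in a_sorted + b_sorted:
        cdf_a = bisect_right(a_sorted, x) / len(a_sorted)
        cdf_b = bisect_right(b_sorted, x) / len(b_sorted)
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap


# Hypothetical document lengths (in words) from two sources.
web_pages = [120, 340, 560, 800, 1500, 2300]
support_tickets = [15, 22, 40, 58, 75, 110]

shift = ks_statistic(web_pages, support_tickets)
print(f"KS statistic between sources: {shift:.2f}")
```

A value near 0 suggests the two samples look alike on that feature. A value near 1, as with these invented numbers, suggests the training source and the deployment environment barely overlap on it.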

The smarter approach, which is increasingly what serious ML teams are doing, is to invest in data curation rather than data accumulation. Deduplication, quality filtering, domain stratification, careful label audits. These are unglamorous, expensive, and difficult to automate well. They also tend to produce substantially better models than simply acquiring more raw data.
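As a minimal sketch of what the first two of those steps can look like, the snippet below drops very short records and exact duplicates after light normalization. The function names, the five-word minimum, and the sample records are all assumptions chosen for illustration. Real pipelines typically add near-duplicate detection, learned quality classifiers, and label audits on top of something like this.

```python
import hashlib
import re


def normalize(text):
    """Lowercase and collapse whitespace so trivially different copies hash alike."""
    return re.sub(r"\s+", " ", text).strip().lower()


def curate(records, min_words=5):
    """Keep the first copy of each record that passes a crude length filter."""
    seen = set()
    kept = []
    for text in records:
        norm = normalize(text)
        if len(norm.split()) < min_words:
            continue  # quality filter: too short to be useful (assumed heuristic)
        digest = hashlib.sha256(norm.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # deduplication: an identical normalized record was already kept
        seen.add(digest)
        kept.append(text)
    return kept


raw = [
    "The scanner was recalibrated before each session.",
    "the scanner   was recalibrated before each session.",
    "click here",
    "Labels were audited by two independent reviewers.",
]
clean = curate(raw)
print(f"kept {len(clean)} of {len(raw)} records")
```

Hashing the normalized text rather than storing it keeps memory use predictable on large corpora, at the cost of only catching exact matches.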

The lesson is old and appears in other engineering contexts: garbage in, garbage out. But the scale of modern AI systems has made the garbage harder to see and the consequences harder to trace. When your training dataset has a hundred billion tokens, you don’t get to inspect it. You only find out something went wrong when the model ships and starts behaving oddly in production. Understanding the mechanisms by which more data becomes worse data is the first step toward building pipelines that don’t create that problem in the first place.