The most dangerous idea in AI right now is also the most intuitive one: that more data makes models smarter. It sounds reasonable. It maps onto how humans learn. It justifies enormous infrastructure budgets. It is also frequently wrong, and the field keeps relearning this the hard way.

My position is simple: indiscriminate data scaling is a form of technical debt that gets paid at inference time, and smaller, carefully curated models often outperform their bloated counterparts on real tasks because of their constraints, not despite them.

Scale Introduces Noise That Compounds

When you train a model on hundreds of billions of tokens scraped from the web, you are not feeding it knowledge. You are feeding it a probability distribution over human text, including all the contradictions, errors, SEO-optimized garbage, and confidently stated misinformation that humans produce at industrial scale.

Absent explicit filtering or source weighting, the model has no way to distinguish a careful explanation of quantum mechanics from a Reddit comment that gets the physics backwards but sounds authoritative. Both get weighted. Both influence the gradient updates that shape the model’s behavior. This is not a bug in the training loop that a patch can fix. It is a direct consequence of an objective that rewards predicting the text as written, whatever its accuracy.

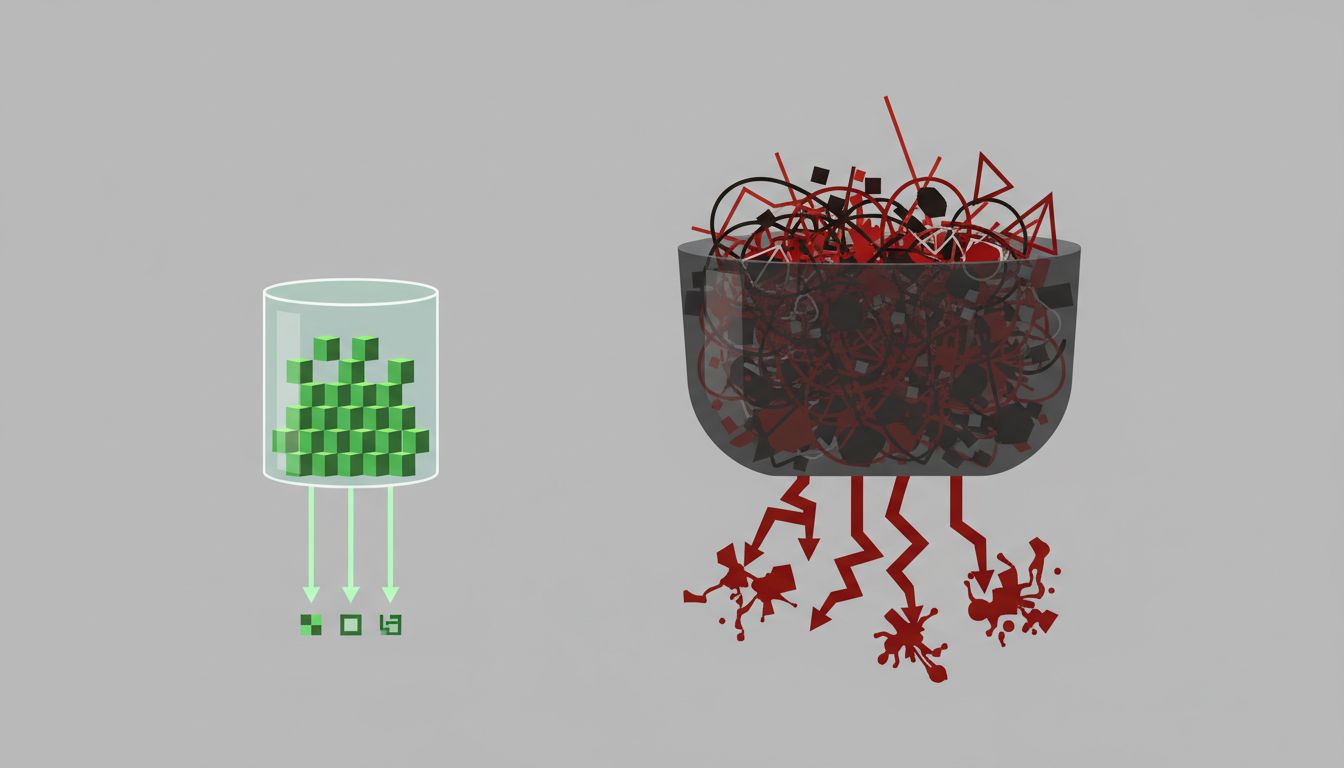

Smaller models trained on curated corpora sidestep much of this. Phi-2, Microsoft’s 2.7 billion parameter model released in December 2023, matched or outperformed models up to 25 times its size on several coding and reasoning benchmarks, according to Microsoft’s reported results. The researchers attributed this explicitly to training data quality, specifically a mix of synthetic “textbook-quality” data and heavily filtered web data, designed to reduce noise and reinforce structured reasoning patterns. The model did better because of what it learned from, not how much.

Larger Models Learn Shortcuts That Generalize Poorly

There is a concept in machine learning called shortcut learning (sometimes called Clever Hans learning after the horse that appeared to solve math problems but was actually reading handler cues). When you give a model enough data, it gets very good at finding statistical regularities that correlate with correct answers in the training distribution but fall apart on anything slightly outside it.

A model trained on massive datasets has more opportunities to learn these shortcuts because the shortcuts become more statistically reliable at scale. The model that has seen ten million examples of a question pattern does not need to understand the underlying concept. It just needs to match the pattern well enough to minimize loss during training.

Smaller models, particularly those trained on deliberately diverse or out-of-distribution examples, are forced to learn more generalizable representations because they cannot rely on brute-force pattern matching. The constraint is the feature.
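
To make the shortcut idea concrete, here is a minimal sketch of a toy learner that simply picks whichever single feature best predicts the label on the training set. Everything in it is hypothetical: the "core" feature that is right 75% of the time everywhere, the "shortcut" feature that is right 95% of the time in training but is a coin flip after a distribution shift, and the sample sizes. It illustrates shortcut learning in miniature, not how any real model is trained.

```python
import random

random.seed(0)  # fixed seed so the sketch is repeatable


def make_example(shortcut_reliability: float) -> tuple[int, int, int]:
    """Return (core_feature, shortcut_feature, label).

    The core feature agrees with the label 75% of the time in every split.
    The shortcut agrees with the label with the given probability.
    Both rates are hypothetical values chosen for illustration.
    """
    label = random.randint(0, 1)
    core = label if random.random() < 0.75 else 1 - label
    shortcut = label if random.random() < shortcut_reliability else 1 - label
    return core, shortcut, label


def accuracy(data, feature_index: int) -> float:
    """Accuracy of the rule 'predict that the label equals this feature'."""
    return sum(row[feature_index] == row[2] for row in data) / len(data)


# In training the shortcut is highly reliable; after the shift it is noise.
train = [make_example(0.95) for _ in range(2000)]
shifted_test = [make_example(0.50) for _ in range(2000)]

# "Training": keep whichever single feature has the lowest training error.
chosen = max((0, 1), key=lambda i: accuracy(train, i))
names = {0: "core", 1: "shortcut"}

print("feature chosen on training data:", names[chosen])
print("train accuracy:", round(accuracy(train, chosen), 3))
print("shifted test accuracy:", round(accuracy(shifted_test, chosen), 3))
print("core feature on shifted test:", round(accuracy(shifted_test, 0), 3))
```

The learner is doing exactly what it was asked to do, minimizing training error, and that is the problem: the feature that wins in training is the one that stops working once the distribution moves.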

Data Pipelines Are Where Assumptions Hide

Here is the practical problem that practitioners rarely talk about publicly: at scale, you lose visibility into what your model is actually learning. When your training corpus is large enough, you cannot audit it. You cannot know which documents are contradicting each other, which sources are overrepresented, or which demographic biases have been baked in through differential data availability.

Small, curated datasets force you to make explicit decisions about what to include and why. Those decisions are auditable. They are arguable. You can run ablations (training the model with and without specific data subsets) to understand what each component contributes. At the scale of CommonCrawl or the web-scraped corpora used for frontier models, that kind of analysis becomes computationally and logistically intractable.
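
A leave-one-subset-out ablation can be sketched in a few lines. This is a toy under stated assumptions: the subset names (`textbook`, `forum`, `qa_site`), their contents, and the `train` function (a lookup table that memorizes the most common label per input) are all invented stand-ins for a real corpus and a real training run.

```python
from collections import Counter, defaultdict

# Hypothetical data subsets of (input, label) pairs, invented for illustration.
SUBSETS = {
    "textbook": [("2+2", "4"), ("3+3", "6"), ("5+1", "6")],
    "forum": [("2+2", "5"), ("2+2", "5"), ("3+3", "6")],  # repeats a wrong answer
    "qa_site": [("5+1", "6"), ("4+4", "8")],
}
EVAL_SET = [("2+2", "4"), ("3+3", "6"), ("5+1", "6"), ("4+4", "8")]


def train(examples):
    """Stand-in for a training run: memorize the most common label per input."""
    votes = defaultdict(Counter)
    for x, y in examples:
        votes[x][y] += 1
    return {x: counts.most_common(1)[0][0] for x, counts in votes.items()}


def evaluate(model, eval_set):
    """Fraction of eval inputs answered correctly (unseen inputs count as wrong)."""
    return sum(model.get(x) == y for x, y in eval_set) / len(eval_set)


def run(subset_names):
    """Train on the named subsets and score the result on the eval set."""
    examples = [ex for name in subset_names for ex in SUBSETS[name]]
    return evaluate(train(examples), EVAL_SET)


baseline = run(list(SUBSETS))

# Score change when each subset is removed. Positive means the model
# improved without that subset, i.e. the subset was hurting.
ablation = {
    name: run([n for n in SUBSETS if n != name]) - baseline
    for name in SUBSETS
}

print("baseline accuracy:", baseline)
for name, delta in ablation.items():
    print(f"without {name}: {delta:+.2f}")
```

With three small subsets this costs four training runs. The number of runs grows with the number of subsets you want to isolate, and each run costs as much as training itself, which is why the approach stops being practical at web scale.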

This is a software engineering problem as much as a machine learning one. A codebase you cannot reason about is a liability, not an asset. The same is true for training data. The assumption that you can compensate for opacity with volume is wishful thinking that the benchmark numbers sometimes expose and sometimes hide.

The Counterargument

The strongest version of the opposing view is that scale is still winning where it counts. Frontier models such as GPT-4, Claude, and Gemini are trained on enormous datasets, and they are genuinely more capable than smaller alternatives on a wide range of tasks. Emergent capabilities (behaviors that appear suddenly at certain scales without being explicitly trained for) suggest there are qualitative shifts that only happen when you push past certain data and parameter thresholds.

This is true. I am not arguing that scale never works. I am arguing that scale is not the mechanism people think it is, and that treating it as the primary lever produces diminishing returns while generating systems that are harder to understand, correct, and specialize.

The emergent capabilities argument also has a troubling empirical track record. Several capabilities once described as emergent have later been shown to be artifacts of how benchmarks were scored, for example all-or-nothing metrics such as exact match that hide gradual improvement, not genuine phase transitions in model ability. The apparent magic of scale sometimes dissolves under more careful measurement.
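
A toy calculation shows how a scoring rule alone can manufacture an apparent jump. This sketch assumes that per-token accuracy improves smoothly from one model size to the next, that token errors are independent, and that the benchmark awards credit only when every token of a 20-token answer is correct. The accuracy values and the answer length are hypothetical, not measurements from any real model.

```python
# Toy illustration: smooth per-token improvement looks like a sudden jump
# when the benchmark only awards credit for exact matches.
# All numbers are hypothetical, not measurements from any real model.


def exact_match_rate(per_token_accuracy: float, answer_length: int) -> float:
    """Probability that every token in the answer is correct,
    assuming (for simplicity) that token errors are independent."""
    return per_token_accuracy ** answer_length


# Hypothetical per-token accuracies for successively larger models.
per_token = [0.50, 0.60, 0.70, 0.80, 0.90, 0.95, 0.99]
ANSWER_LENGTH = 20  # tokens that must all be right to score a point

exact = [exact_match_rate(p, ANSWER_LENGTH) for p in per_token]

for p, em in zip(per_token, exact):
    print(f"per-token accuracy {p:.2f} -> exact match {em:.4f}")
```

The per-token numbers climb steadily, but the exact-match score sits near zero for the first several models and then rises steeply at the end. Plotted against model size, that curve looks like a capability switching on, even though nothing discontinuous happened underneath.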

Frontier labs know this, which is why much of the recent research momentum is not behind “scrape more of the internet” but behind synthetic data generation, Constitutional AI, and instruction tuning on small, high-quality datasets. The scaling laws that dominated thinking from 2020 to 2022 are increasingly read with data quality as a co-equal variable alongside data quantity.

What This Actually Means for Practitioners

If you are building on top of AI models, the implication is not that you should always reach for the smallest model available. It is that “bigger training corpus” is not a reliable proxy for “better for your use case.” On your specific tasks, a model fine-tuned on a few thousand high-quality examples from your domain will often outperform a general-purpose model trained on orders of magnitude more data.

This is not a niche finding. It is reproducible, it is well-documented in the fine-tuning literature, and it is why teams that actually ship AI-powered products tend to invest heavily in data curation rather than data accumulation.

More data is easy. Better data is hard. The field conflated the two for long enough that the assumption got embedded in how we talk about model capability, how we allocate research budgets, and how we evaluate progress. Unpacking that conflation is not a minor correction. It changes what we should actually be building.