The simple version

When you train an AI model on too much data, or the wrong kind of data, the model can actually get worse at the thing you’re trying to make it do. This isn’t a bug in any one system. It’s a structural property of how machine learning works.

Why more data feels like it should always help

The intuition is reasonable. More data means more examples, more examples means the model sees more of the world, and a model that’s seen more of the world should make better predictions. This is roughly how it works in the early stages of training. Feed a model more labeled images of cats, and it gets better at identifying cats. That part is real.

The problem is that this relationship doesn’t hold indefinitely, and in several specific situations it actively reverses. Understanding why requires getting a little bit into how these models actually learn.

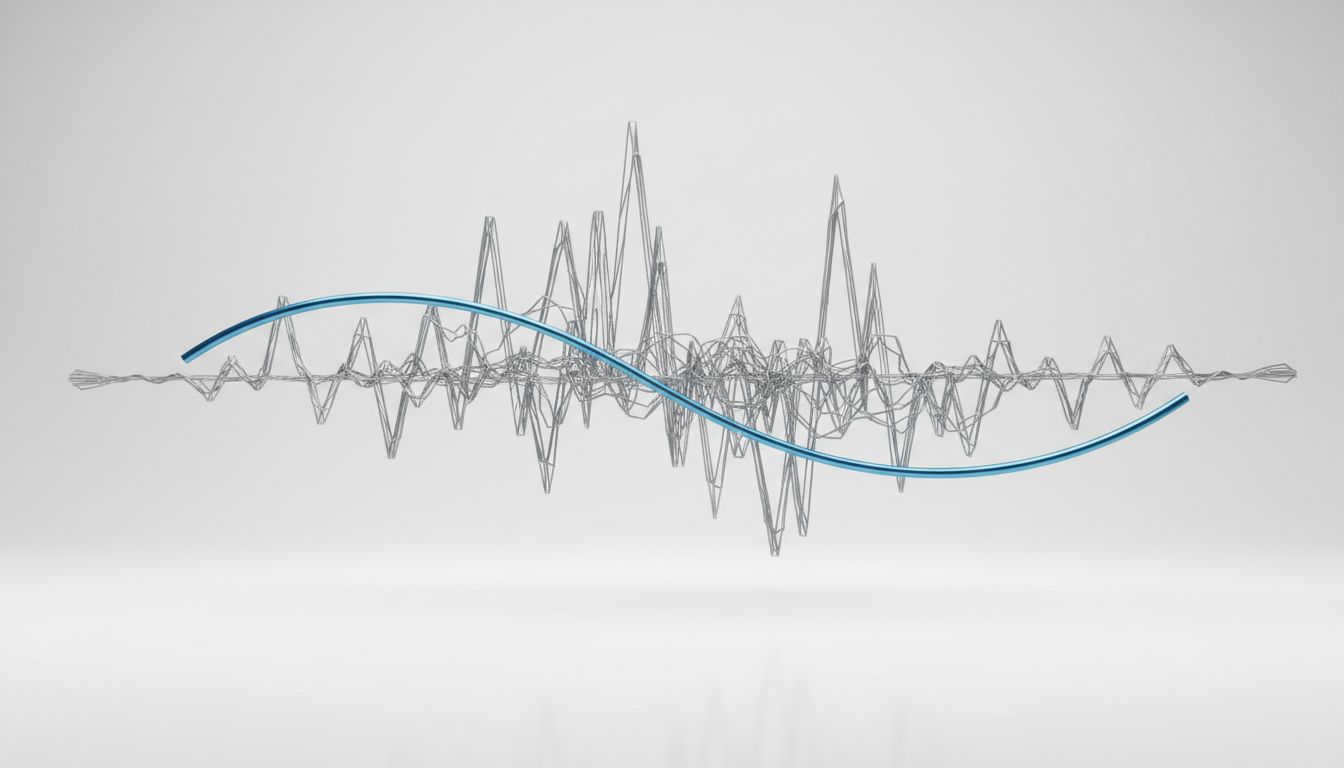

A machine learning model, at its core, is a function that maps inputs to outputs by adjusting millions (or billions) of internal numerical parameters. During training, the model looks at examples, makes a prediction, compares that prediction to the correct answer, and adjusts its parameters to do a little better next time. Repeat this process enough times across enough examples, and the model learns patterns that generalize to new inputs it hasn’t seen before.

The key word there is generalize. The goal isn’t to memorize the training data. It’s to extract the underlying signal from it.

The overfitting problem, explained plainly

Overfitting is when a model learns the training data too well. Instead of learning the general pattern (“cats tend to have pointed ears and whiskers”), it learns the specific quirks of the training examples (“cat images tend to have this particular JPEG compression artifact in the upper-left corner”). The model performs brilliantly on data it has already seen, and poorly on anything new.

More data doesn’t automatically fix overfitting. In fact, if the additional data you’re adding is low-quality, mislabeled, or from a different distribution than the problem you’re solving, it can make things worse. You’re not giving the model more signal. You’re giving it more noise to memorize.

Here’s a concrete example. Say you’re training a model to detect fraudulent financial transactions. Your initial dataset is 100,000 transactions, carefully labeled by domain experts. You then scrape together another 2 million transactions from a different source, labeled automatically by a weaker heuristic system. Your dataset just grew by 20x. But if that new data contains systematically wrong labels, or reflects a different population of transactions (different geography, different fraud patterns, different legitimate spending habits), the model will adapt to that new, messier signal. You may end up with a model that’s more confident and less accurate than your original.

This is called dataset shift or distribution mismatch, and it’s one of the most common ways that well-intentioned data collection efforts backfire in production.

The catastrophic forgetting problem

There’s a second, related phenomenon worth understanding. When you take a model that’s already been trained and continue training it on new data, you risk erasing what it previously learned. This is called catastrophic forgetting, and it’s a genuine unsolved problem in machine learning research.

The mechanism is straightforward once you see it. Training adjusts the model’s parameters to minimize error on the current batch of data. If the new data looks different from the old data, the parameter adjustments that improve performance on the new data will, by necessity, move the parameters away from where they were for the old data. The model’s weights are finite. There’s no separate “memory” for old knowledge and new knowledge. It’s all the same parameters.

This is part of why AI systems that seem to learn continuously can actually lose previously reliable capabilities. A model that was excellent at answering questions about 2020 events might become noticeably worse at that task after being updated with 2023 data, depending on how the fine-tuning was done.

When the data itself is the problem

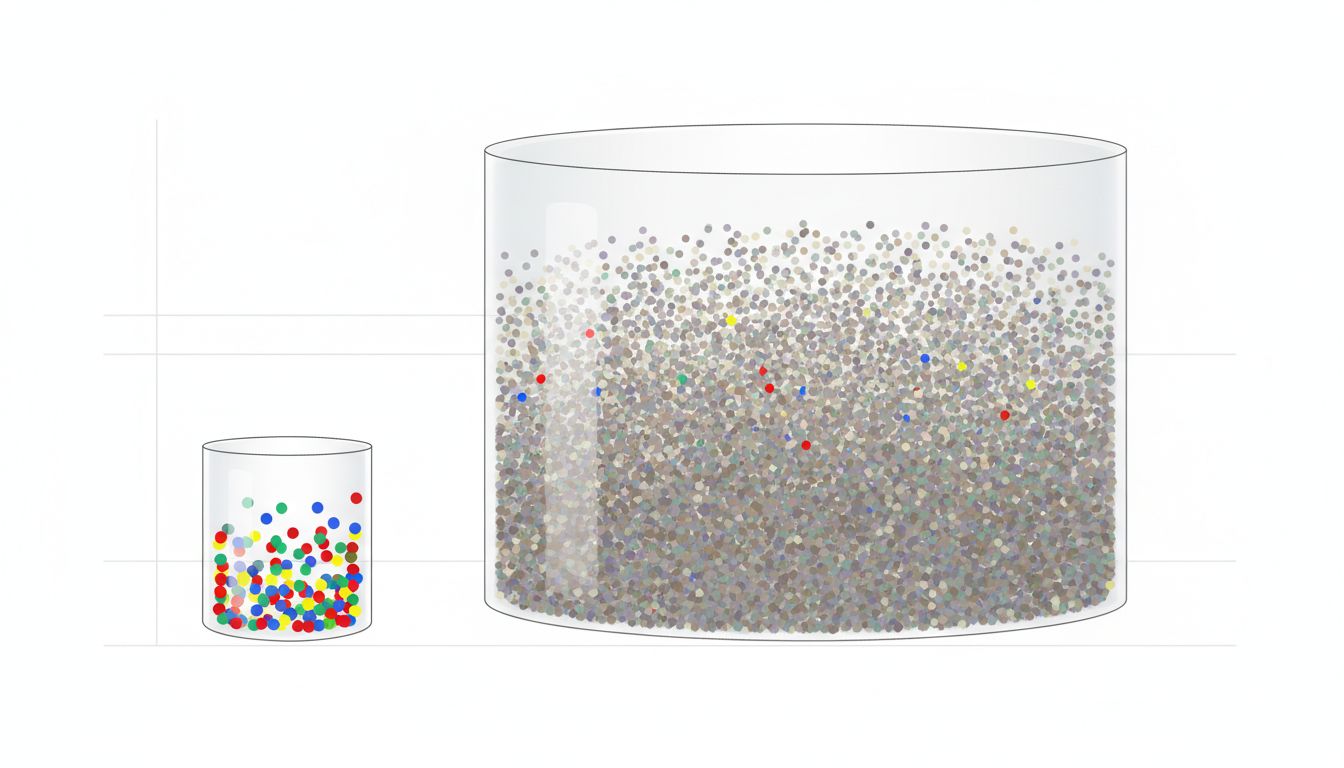

Something that doesn’t get discussed enough is data quality as a compounding issue at scale. At small dataset sizes, a few bad examples don’t move the needle much. At massive scale, systematic biases in data collection become load-bearing structures in the model’s worldview.

Large language models trained on web-scraped text are a good case study. The web is not a neutral sample of human knowledge. It over-represents English speakers, younger demographics, people who have internet access and time to write things online, and communities that congregate around certain platforms. It under-represents domain experts (who mostly publish behind paywalls), non-English speakers, older knowledge encoded in physical formats, and careful reasoning (because careful reasoning takes longer to write and gets fewer clicks than confident assertions).

When you train on more web data, you don’t necessarily get a better model. You sometimes get a model that’s more confidently wrong in patterned ways, because the patterns of confident wrongness are well-represented in the training corpus.

The scaling laws research from groups like DeepMind (particularly the Chinchilla paper from 2022) made this concrete in a surprising way. The researchers found that many large language models at the time were undertrained relative to their size. They were using too many parameters for the amount of data, rather than the other way around. But the more important takeaway was that the composition and quality of data mattered as much as raw volume. A smaller model trained on better-curated data could outperform a larger model trained on more but worse data.

What this means in practice

If you’re building something on top of AI models, or thinking about AI systems for a specific domain, the practical implications are worth sitting with.

First, more data is not a substitute for better data. A team that spends its budget on data cleaning and curation will often outperform a team that spends the same budget on raw data collection. This is counterintuitive because data collection feels like progress in a way that data cleaning doesn’t, but the engineering reality is clear.

Second, when an AI system starts performing worse after an update or after ingesting new information, the first question to ask is about the data that was added, not the model architecture. In my experience, this is almost always the culprit, and it’s almost always the last place people look.

Third, there’s no shortcut around evaluation on held-out data that looks like your actual deployment conditions. A model can show excellent metrics on a test set and still fail badly in production if the test set doesn’t reflect what it will actually encounter. The training data determines what the model knows. The evaluation data determines whether you know that the model knows it. Those are different problems and they need different solutions.

More is not better. More of the right thing, carefully evaluated, is better. That’s a harder problem, but it’s the actual problem.