You get a notification. You check your phone. Nothing important. You put it down. Thirty seconds later, you check again anyway. If you have ever caught yourself doing this and wondered why, you are not dealing with a habit. You are dealing with a system that was explicitly engineered to produce that exact behavior, and it is working precisely as intended.

Tech Companies Engineered Your Brain’s Reward System. Now They’re Quietly Trying to Undo It.

The Skinner Box in Your Pocket

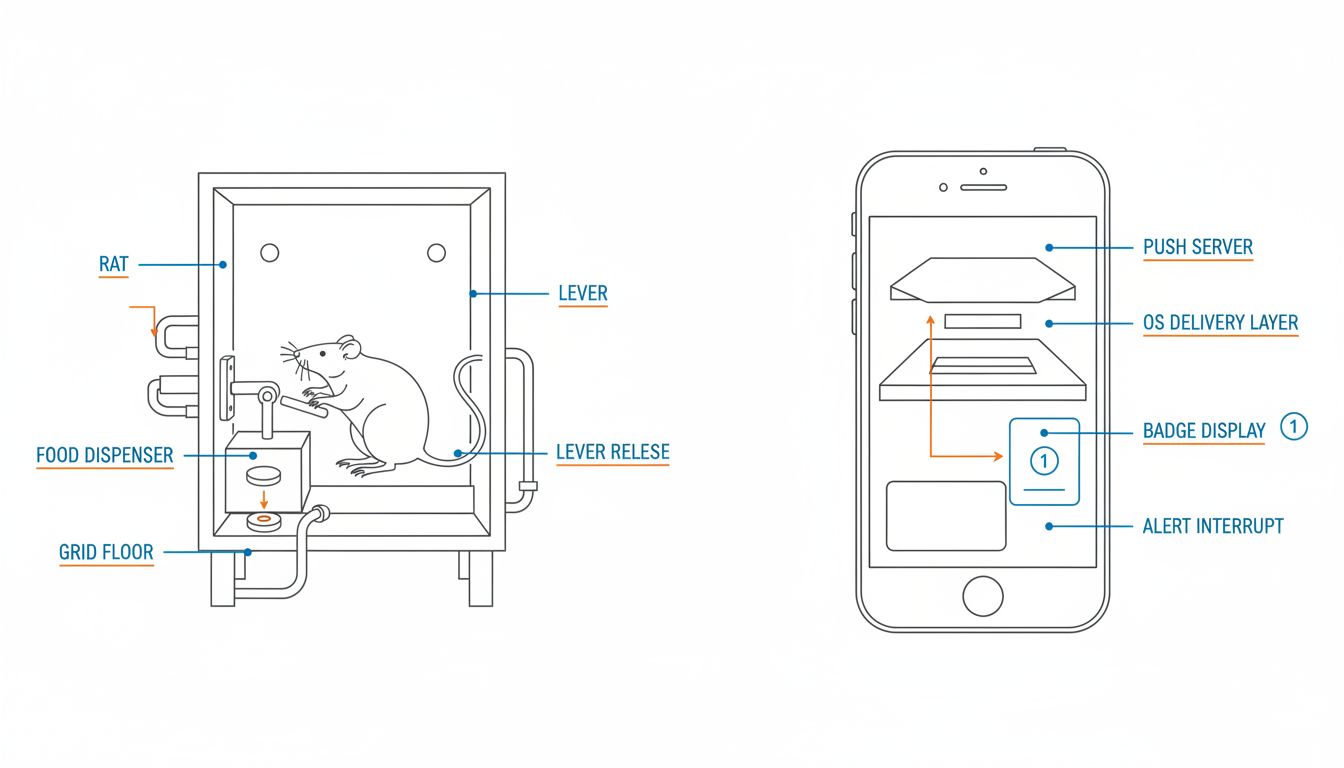

In behavioral psychology, there is a concept called operant conditioning, which is the process of shaping behavior through rewards and punishments. B.F. Skinner demonstrated this famously with rats in boxes, training them to press levers by delivering food pellets on various schedules. The most addictive schedule he found was not the one that always delivered food. It was the one that delivered food unpredictably. Variable ratio reinforcement, as it is called, produces the highest response rate and the most resistance to extinction (meaning the behavior persists even when rewards stop coming).
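To make the difference between the schedules concrete, here is a minimal sketch that compares a fixed-ratio schedule with a variable one. Everything in it is an illustrative assumption: the function names, the seed, and the choice to model "variable ratio" as a fixed probability of reward on each press (sometimes called a random-ratio schedule). It only shows how unpredictable the gaps between rewards become. It does not model response rate or extinction.

```javascript
// Small seeded PRNG (mulberry32) so the simulation is repeatable.
function mulberry32(seed) {
  let a = seed >>> 0;
  return function () {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Fixed ratio: a reward arrives on every n-th press, no surprises.
function fixedRatio(n) {
  let presses = 0;
  return () => {
    presses += 1;
    return presses % n === 0;
  };
}

// Variable ratio (assumed model): each press pays off with probability
// 1 / meanN, so rewards arrive every meanN presses only on average.
function variableRatio(meanN, random) {
  return () => random() < 1 / meanN;
}

// Records how many presses it took to reach each reward.
function gapsBetweenRewards(schedule, totalPresses) {
  const gaps = [];
  let sinceLast = 0;
  for (let i = 0; i < totalPresses; i++) {
    sinceLast += 1;
    if (schedule()) {
      gaps.push(sinceLast);
      sinceLast = 0;
    }
  }
  return gaps;
}

const fixedGaps = gapsBetweenRewards(fixedRatio(5), 1000);
const variableGaps = gapsBetweenRewards(variableRatio(5, mulberry32(42)), 1000);

console.log("fixed schedule, distinct gap lengths:", new Set(fixedGaps).size);
console.log("variable schedule, distinct gap lengths:", new Set(variableGaps).size);
```

On the fixed schedule every gap is the same length, so the next reward is always predictable. On the variable schedule the next press might pay off or might not, which is the property the notification comparison rests on.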

Now look at your notification system. Sometimes a notification is a message from someone you love. Sometimes it is a promotional email. Sometimes it is a reminder you set yourself and forgot about. Sometimes it is nothing you care about at all. The ratio of meaningful to meaningless is variable, unpredictable, and entirely outside your control. That is not a design flaw. That is the design.

The engineers who built these systems understand this deeply. The term they use internally is “engagement,” but what they are actually measuring is conditioned response rate. Every time you pull down the notification shade, you are a rat pressing a lever.

How the Architecture Reinforces the Conditioning

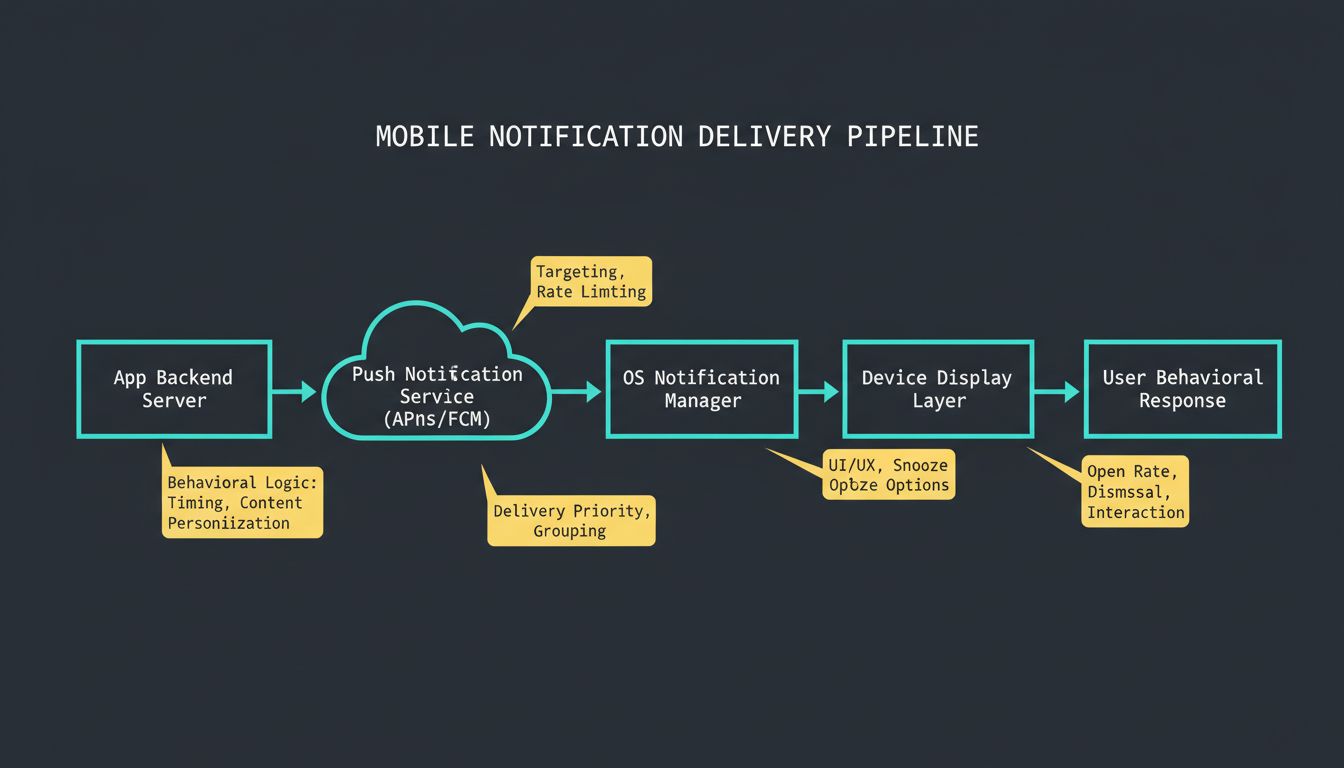

Let us get specific about how notification systems are actually built, because the architecture itself encodes the behavioral design.

Modern mobile operating systems implement a notification delivery pipeline that routes messages from app servers through a centralized push notification service (APNs on iOS, FCM on Android) to the device. Apps register for delivery, specify priority levels, and pass payloads. The OS handles display logic.

Here is what is interesting: the priority system gives apps enormous control over interruption level. The exact names differ by platform, but broadly a notification can be silent (delivered to the notification center with no alert), low (batched into a summary), high (immediate alert, wakes the screen), or time-sensitive (breaks through focus modes). Apps are supposed to use these responsibly. In practice, many apps default to the highest interruption level they can get away with, because interruption drives opens, and opens drive engagement metrics.
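To show how little it takes for an app to ask for a louder interruption, here is a hedged sketch of the payloads involved. The two builder functions are hypothetical helpers invented for this example. The field names follow my reading of the public documentation: APNs accepts an `interruption-level` key inside `aps` (with values `passive`, `active`, `time-sensitive`, and `critical`), and the FCM HTTP v1 API accepts a `priority` of `NORMAL` or `HIGH` under `android`. Check both against the current docs before relying on them.

```javascript
// Values assumed from Apple's documented "interruption-level" payload key.
const APNS_LEVELS = ["passive", "active", "time-sensitive", "critical"];

// Hypothetical helper: builds the JSON body an app server would send to APNs.
function buildApnsPayload({ title, body, interruptionLevel = "active", badge }) {
  if (!APNS_LEVELS.includes(interruptionLevel)) {
    throw new RangeError(`unknown interruption level: ${interruptionLevel}`);
  }
  const aps = {
    alert: { title, body },
    "interruption-level": interruptionLevel,
  };
  if (badge !== undefined) {
    aps.badge = badge; // the red number on the app icon
  }
  return { aps };
}

// Hypothetical helper: builds an FCM HTTP v1 message body for Android.
function buildFcmMessage({ token, title, body, androidPriority = "NORMAL" }) {
  return {
    message: {
      token,
      notification: { title, body },
      android: { priority: androidPriority },
    },
  };
}

// The same marketing push, sent quietly and sent loudly.
const quietPush = buildApnsPayload({
  title: "Weekend sale",
  body: "Up to 50% off",
  interruptionLevel: "passive",
});
const loudPush = buildApnsPayload({
  title: "Weekend sale",
  body: "Up to 50% off",
  interruptionLevel: "time-sensitive",
  badge: 3,
});
const loudAndroidPush = buildFcmMessage({
  token: "device-token-placeholder",
  title: "Weekend sale",
  body: "Up to 50% off",
  androidPriority: "HIGH",
});

console.log(JSON.stringify(loudPush, null, 2));
```

The quiet and loud versions differ by a single string. On the server side, the incentive to pick the louder one is the only thing that separates them.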

This is not an accident of implementation. As we have explored before in “Simple Apps Aren’t Simple. Here’s What’s Actually Going On Behind the Scenes,” the apparent simplicity of a notification badge conceals layers of deliberately optimized infrastructure whose purpose is behavioral throughput, not user utility.

The badge count (that red number on an app icon) is a separate psychological mechanism. It exploits what psychologists call the Zeigarnik effect, which is the tendency for incomplete tasks to occupy mental attention more than completed ones. A badge creates an open loop in your brain. The only way to close it is to open the app.

// What the app developer sees as a success metric
const engagementScore = (notificationsSent / totalUsers) * openRate * sessionDuration;

// What the user experiences
const cognitiveLoad = interruptions * contextSwitchCost;

The developer optimizes the first equation. The user absorbs the cost of the second. These interests are structurally opposed.

Why “Just Disable Notifications” Misses the Point

The common advice is to turn off notifications. This advice is correct but incomplete, because it treats the symptom without addressing the mechanism.

The conditioning does not disappear when you disable notifications. It shifts. People who disable app notifications often find themselves compulsively opening apps to check manually, sometimes more frequently than they would have checked if notifications were on. The checking behavior has been reinforced so thoroughly that it now runs without the external trigger. You have internalized the lever-pressing.

This is why the most effective approach, as detailed in “Why the Most Productive Remote Workers Deliberately Create Digital Friction in Their Workflow,” is not just disabling notifications but restructuring the entire environment to make compulsive checking costly rather than effortless. Physical distance from devices, app deletion, grayscale mode, and scheduled check-in windows are all forms of environmental design that work against the conditioning rather than just muting one of its triggers.

There is also something worth naming about asymmetry of intent. The teams building notification systems are not careless or naive. They employ behavioral scientists, and they have A/B testing frameworks and long-horizon engagement data that most users will never see. The sophistication of the machine you are up against is genuinely remarkable, and treating it as a neutral tool that simply needs configuration is a category error.

What Responsible Notification Design Would Actually Look Like

It is worth asking what a notification system optimized for user benefit rather than engagement would look like, because the answer reveals how far current systems are from that goal.

First, it would implement strict urgency classification enforced at the OS level, not self-reported by apps. A message from a contact you have communicated with in the last 24 hours might qualify as time-sensitive. A marketing push from an app you have not opened in two weeks would not.
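As a rough sketch of what OS-enforced classification could look like, the function below decides urgency from records the OS itself holds and ignores whatever level the app asked for. The function name, the record shapes, and the three output labels are all hypothetical. The 24-hour and two-week thresholds come from the examples above, and mapping a stale app's marketing push to `silent` is my own assumption.

```javascript
const DAY_MS = 24 * 60 * 60 * 1000;

// Hypothetical OS-side classifier. The app supplies the notification,
// but urgency is derived from the OS's own records, not from the app's
// self-reported requestedLevel.
function classifyUrgency(notification, osRecords, now) {
  const lastContact = osRecords.lastContactAt[notification.senderId];
  const appLastOpened = osRecords.appLastOpenedAt[notification.appId];

  // A message from someone the user talked to within the last 24 hours.
  const fromRecentContact =
    notification.kind === "message" &&
    lastContact !== undefined &&
    now - lastContact <= DAY_MS;
  if (fromRecentContact) return "time-sensitive";

  // Marketing from an app the user has not opened in two weeks (assumed rule).
  const appIsStale =
    appLastOpened === undefined || now - appLastOpened >= 14 * DAY_MS;
  if (notification.kind === "marketing" && appIsStale) return "silent";

  // Everything else waits for the next scheduled batch.
  return "batched";
}

const now = 30 * DAY_MS;
const osRecords = {
  lastContactAt: { alice: now - 2 * 60 * 60 * 1000 },
  appLastOpenedAt: { chat: now - DAY_MS, shop: now - 15 * DAY_MS },
};

const fromAlice = classifyUrgency(
  { appId: "chat", kind: "message", senderId: "alice", requestedLevel: "active" },
  osRecords,
  now
);
const shopPromo = classifyUrgency(
  { appId: "shop", kind: "marketing", senderId: "shop-promo", requestedLevel: "time-sensitive" },
  osRecords,
  now
);

console.log(fromAlice, shopPromo);
```

The shop app asked for `time-sensitive` and did not get it. That is the whole point of moving the decision out of the app's hands.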

Second, it would aggregate notifications by type and deliver them in scheduled batches by default, similar to how email digest modes work. The cognitive cost of 40 separate interruptions is not simply 40 times the cost of one. It is plausibly far higher, because each interruption triggers a full context switch (the mental equivalent of a CPU saving its state and flushing its pipeline to handle an interrupt), and the next interruption often lands before you have recovered from the last.
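Batching by type is simple enough to sketch in a few lines. The version below is a minimal illustration, not a description of how any real OS does it. The function name, the notification shape, and the one-hour delivery window are all assumptions.

```javascript
// Groups notifications by type and by delivery window, so that each
// (window, type) pair becomes one digest instead of many interruptions.
function batchNotifications(notifications, windowMs) {
  const batches = new Map();
  for (const n of notifications) {
    // Deliver at the end of the window in which the notification arrived.
    const deliverAt = (Math.floor(n.receivedAt / windowMs) + 1) * windowMs;
    const key = `${deliverAt}:${n.type}`;
    if (!batches.has(key)) {
      batches.set(key, { deliverAt, type: n.type, items: [] });
    }
    batches.get(key).items.push(n);
  }
  return [...batches.values()].sort(
    (a, b) => a.deliverAt - b.deliverAt || a.type.localeCompare(b.type)
  );
}

// 40 notifications arriving one minute apart, alternating between two types.
const HOUR_MS = 60 * 60 * 1000;
const incoming = Array.from({ length: 40 }, (_, i) => ({
  type: i % 2 === 0 ? "social" : "promo",
  receivedAt: i * 60 * 1000,
}));

const digests = batchNotifications(incoming, HOUR_MS);
console.log(`${incoming.length} interruptions become ${digests.length} digests`);
```

With a one-hour window, those 40 arrivals collapse into two digests delivered together at the top of the hour. Nothing is lost except the interruptions.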

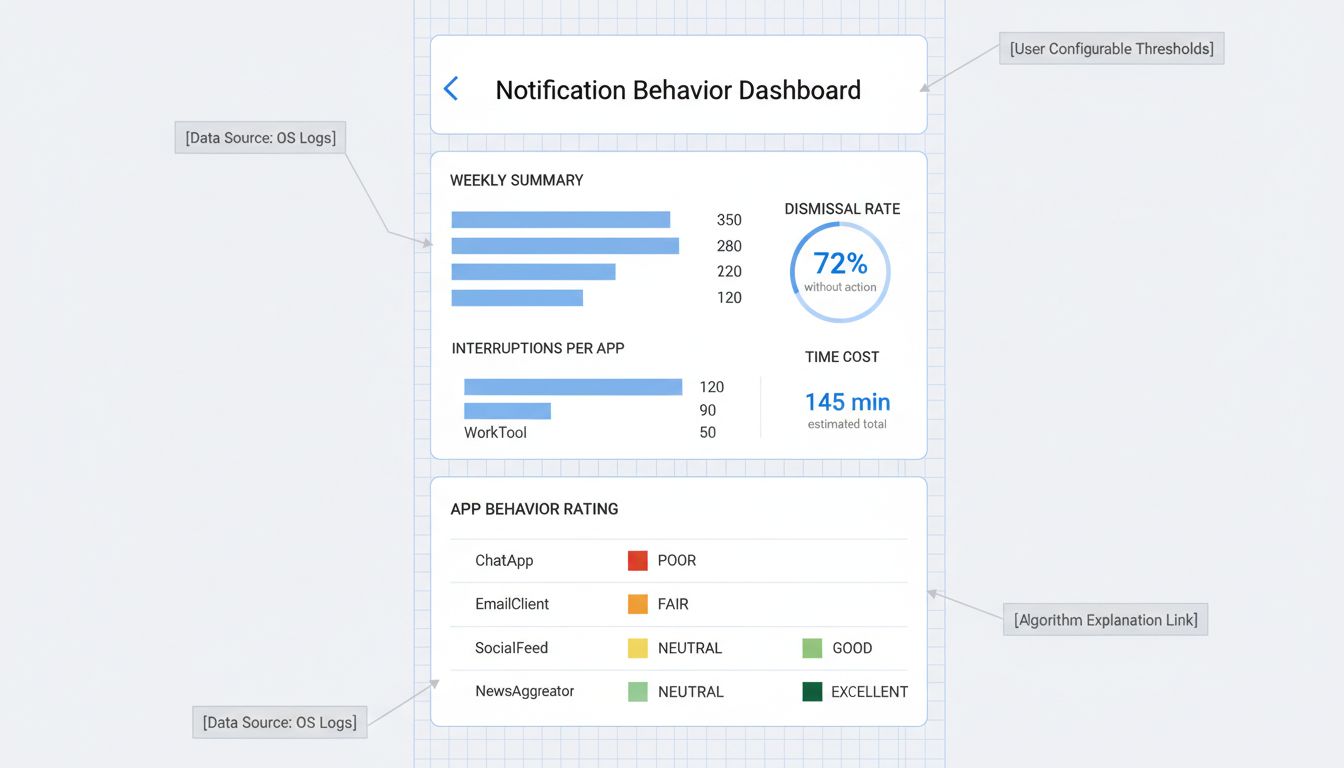

Third, it would make the behavioral data visible to users. If you could see a dashboard showing “this app generated 87 interruptions this week that you dismissed without acting on,” you would have the information needed to make a rational decision. Opacity is not a neutral design choice.
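The data needed for such a dashboard is not exotic. Assuming the OS kept a simple log of what happened to each notification (the event shape and outcome labels below are hypothetical), the summary is a single pass over that log:

```javascript
// Counts, per app, the notifications the user dismissed without acting on,
// and returns one dashboard line per app, noisiest first.
function summarizeIgnoredInterruptions(events) {
  const dismissedByApp = new Map();
  for (const event of events) {
    if (event.outcome !== "dismissed") continue;
    dismissedByApp.set(event.app, (dismissedByApp.get(event.app) || 0) + 1);
  }
  return [...dismissedByApp.entries()]
    .sort((a, b) => b[1] - a[1])
    .map(([app, count]) => ({
      app,
      count,
      line: `${app} generated ${count} interruptions this week that you dismissed without acting on`,
    }));
}

// A tiny hypothetical week of notification outcomes.
const weekLog = [
  { app: "ShopApp", outcome: "dismissed" },
  { app: "ShopApp", outcome: "dismissed" },
  { app: "ShopApp", outcome: "dismissed" },
  { app: "Chat", outcome: "opened" },
  { app: "Chat", outcome: "dismissed" },
  { app: "Calendar", outcome: "opened" },
];

const dashboard = summarizeIgnoredInterruptions(weekLog);
for (const row of dashboard) {
  console.log(row.line);
}
```

Apps whose notifications were always acted on never appear in the list, which makes the summary a ranking of who is wasting your attention.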

Some of this is beginning to happen, partly because regulators are paying attention and partly because the most sophisticated users are opting out entirely, creating product pressure to compete on something other than compulsion. Tech companies are beginning to recognize that the engagement-at-all-costs model has a user retention ceiling, and that attention burned too aggressively today is attention unavailable tomorrow.

The Code Is Doing What It Was Asked to Do

Here is the thing that sits uncomfortably once you see it clearly. The engineers who implemented these systems mostly wrote clean, correct code. The notification pipeline works exactly as specified. The A/B tests ran honestly. The metrics reflected reality. The problem is not a bug. It is the specification itself.

The specification was written to maximize engagement, a proxy metric for revenue, because that is what the product roadmap asked for. At every level, people were solving the problem they were given. The behavioral conditioning was not a side effect. It was the output of a system optimized end-to-end for a goal that was never quite aligned with yours.

Understanding this does not make you immune to it. But it does change the nature of the problem. You are not fighting your own weakness or your lack of discipline. You are navigating a system that was engineered by very smart people with considerable resources to produce the behavior you are trying to change. That framing matters, because it means the solution is also architectural, not just motivational.

The lever is still there. Whether you press it is at least partly up to you, if you are deliberate enough about it.