The Hype Obscures Something True

Prompt engineering got rebranded as a discipline somewhere around 2022, and with that came a small industry of courses, certifications, and job postings. The framing implies you need to learn something fundamentally new. You mostly don’t, if you’ve spent time writing good technical documentation.

The core skill in both cases is the same: communicate intent to a system that cannot ask clarifying questions. A README doesn’t interrupt you to ask what you meant. Neither does a well-structured API contract. An LLM can ask clarifying questions in a conversational session, but in practice, especially in production pipelines where you’re calling the API programmatically, your prompt is a one-shot specification. You write it, the model executes against it, and the quality of the output is largely determined by the quality of that specification.

If you’ve been writing software for more than a few years, you’ve already internalized most of what makes a prompt effective. The vocabulary is different. The mechanism is alien. But the underlying discipline is one you’ve been practicing every time you wrote a function signature, a docstring, or a ticket description that someone else could actually act on.

What Documentation and Prompts Are Both Actually Doing

Good documentation solves a context problem. The person reading your docs doesn’t have your assumptions, your codebase familiarity, or your mental model of the problem. Your job is to transfer enough context that they can proceed without you. This is why senior engineers write better docs than junior engineers, usually. Not because they write more, but because they’ve learned which assumptions are invisible and need to be stated.

Prompts solve the same problem, against a different audience. A large language model has broad world knowledge but zero context about your specific situation unless you provide it. When you write a prompt like “summarize this,” you’re leaving the model to guess at format, length, audience, level of technical detail, and what “this” even is in relation to what you want. That’s the equivalent of writing a function called process() that takes a generic object and hoping whoever calls it figures it out.

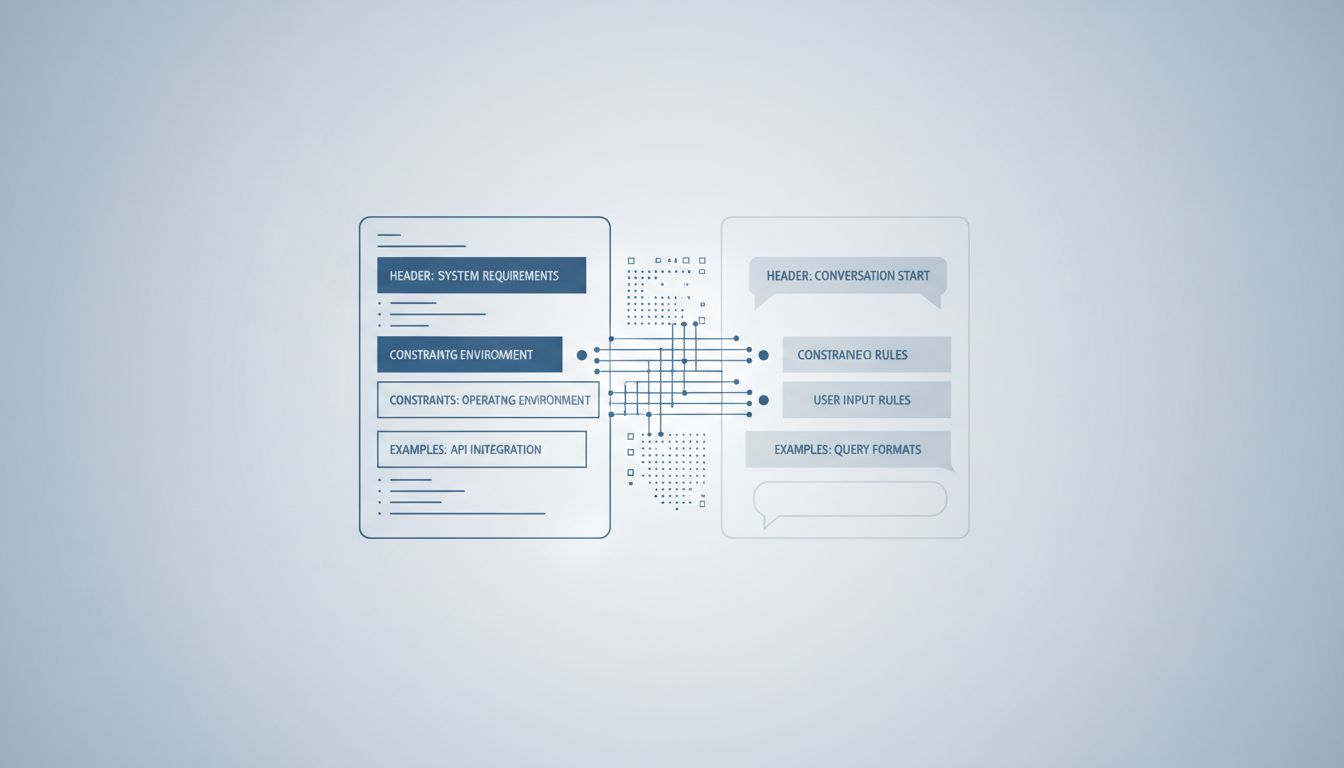

The documentation parallel runs deep. Consider the structure of a good API reference: it specifies the input format, the expected output, the constraints, the edge cases, and often an example. A well-constructed prompt does all of these things. “You are a technical writer. Given a raw git commit message, rewrite it as a one-sentence changelog entry suitable for non-technical users. Do not include branch names or ticket numbers. Example input: ‘fix: null ptr in payment handler when card_type is missing’. Example output: ‘Fixed a rare crash when processing certain payment methods.’” That’s not magic. That’s a function signature with a docstring and a test case.

The Specific Techniques Map Directly

If you’ve written documentation professionally, you already use these specific practices, and they transfer:

Role assignment is just audience targeting. When you write docs, you think about who’s reading. A getting-started guide for a new user reads differently than an internal architecture doc for a senior engineer. When you tell an LLM “you are a security engineer reviewing this code for vulnerabilities,” you’re doing the same thing. You’re constraining the model’s lens, giving it a perspective to evaluate from. The model doesn’t “become” a security engineer in any meaningful cognitive sense, but framing shifts which patterns in its training it weights more heavily.

Format constraints are your output schema. Technical writers know that unstructured output is hard to use. You specify whether something should be a list, a table, a numbered procedure, or prose, because the format carries meaning and affects usability. In a prompt, “respond in JSON with keys ‘severity’, ‘description’, and ‘suggested_fix’” is just schema definition. You’re specifying the return type. Any developer who’s written a protobuf definition or a TypeScript interface has done this already.

Worked examples are unit tests. The technique the research community calls “few-shot prompting” is giving the model two or three examples of input and desired output before presenting the real input. This is exactly what you do when you write example code in a README. You’re not explaining the logic, you’re demonstrating the contract. Show the input, show the expected output, and let the reader (or model) pattern-match from there. Anthropic, OpenAI, and others have all documented that including examples in prompts measurably improves output consistency, especially for tasks with non-obvious output formats.

Constraints prevent scope creep. Good documentation tells you what something does, but also what it doesn’t do. This is the “non-goals” section in a design doc. In prompts, explicit constraints do the same work: “do not suggest changes outside the function I’ve shown you,” “do not add features that weren’t in the original specification.” Without constraints, LLMs will often helpfully extrapolate in directions you didn’t want. This is the model equivalent of a new engineer who solves the stated problem and three adjacent problems you weren’t ready to address yet.

Where the Analogy Gets Complicated

The documentation framing is useful but not complete. There are ways prompting differs from documentation that matter, and ignoring them produces the wrong mental model.

The biggest difference is that documentation has a stable reader. You write for a specific version of a product, a specific audience, a specific context. LLMs are not stable. GPT-4 and GPT-4o have different default behaviors. The same prompt can produce different outputs across model versions, and providers update models without always changing the version name you’re calling. This means your prompts have a maintenance burden that traditional documentation doesn’t. A README written for v2 of your library can sit unchanged for years. A production prompt that worked well in March might behave differently in October, not because your prompt changed, but because the model did.

This connects to a broader point about what LLMs are actually doing. The model isn’t parsing your prompt like a compiler parses syntax. It’s producing a probability distribution over possible outputs, shaped by your prompt and by everything in its training. You can’t inspect that process. Documentation has a human reader you can at least imagine. A prompt has an audience you cannot fully model, which is a strange inversion of the usual situation.

The other complication is instruction-following vs. documentation-reading. A human reading your docs can catch a contradiction and flag it. An LLM will often try to satisfy conflicting instructions simultaneously, producing output that technically addresses both constraints but serves neither. You need to be more internally consistent in a prompt than you do in documentation, because there’s no reader who will push back before acting.

Chain-of-Thought Is Just Rubber Duck Debugging

One technique that sounds mysterious until you name it for what it is: chain-of-thought prompting. The idea is to ask the model to reason through its answer step by step before giving a final response. Research from Wei et al. at Google Brain (published 2022) showed that prompting models to show their reasoning improved performance on multi-step math and logic problems substantially, even compared to models that were larger by parameter count.

But developers have been doing this with humans for decades. Rubber duck debugging, the practice of explaining your problem out loud to an inanimate object, works because the act of articulation forces you to slow down and catch implicit assumptions. When you ask an LLM to “think through this step by step,” you’re doing the same thing. You’re asking the model to externalize its intermediate states, which both surfaces errors and produces a more reliable final answer.

The same logic applies to asking a model to identify potential problems with its own output before finalizing it. That’s a code review pass, structurally. You’re not trusting the first draft. You’re building in a review loop. Developers who’ve worked in environments with strong review culture find this intuitive immediately.

The Real Barrier Isn’t Technical

Here’s what I think is actually going on when developers struggle with prompting: the problem isn’t that prompting is hard. It’s that good documentation is hard, and most people aren’t as good at it as they think they are.

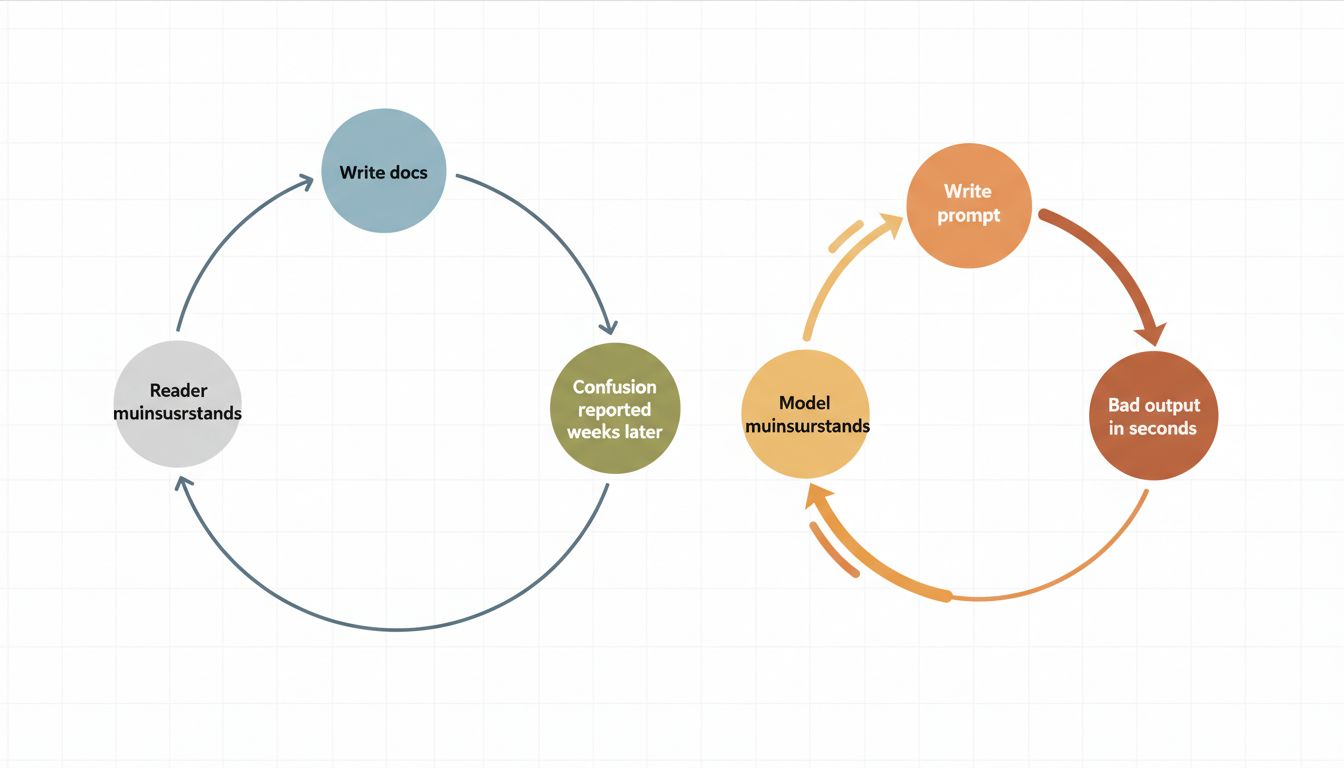

Writing a clear, unambiguous specification for a system that can’t ask questions is genuinely difficult. It requires knowing what you actually want (harder than it sounds), anticipating misinterpretation, and being precise without being brittle. Most developers write mediocre documentation most of the time, not because they lack skill, but because there’s rarely urgent feedback. Bad docs produce frustration later. Bad prompts produce bad output immediately.

That tight feedback loop is actually an advantage for learning. The iteration cycle on a prompt is seconds. You can try ten variations of a specification, see which one produces reliable output, and develop real intuition about what clarity looks like in practice. This is faster than learning documentation discipline the traditional way, which involves waiting months to see which of your docs actually helped someone and which ones everyone silently ignored.

The developers I’ve seen get good at prompting quickly are almost always the same ones who write careful commit messages, maintain their own READMEs fastidiously, and treat a ticket description as a real communication artifact. That’s not a coincidence. It’s the same muscle.

What This Means

The reframe matters because it changes what you invest in. If prompt engineering is a new mystical skill, you take a course and hope for transferable techniques. If it’s applied specification writing, you invest in getting better at expressing intent clearly, which improves your documentation, your ticket writing, your code comments, and your prompts simultaneously.

Concretely: before reaching for prompting tutorials, look at a prompt that isn’t working and ask yourself if you’d accept that as a function specification. Does it define input format? Output format? Constraints? Audience? Error handling? If a junior engineer handed you that spec and said “implement this,” would you send it back for more clarity? If yes, fix the prompt the same way you’d fix the spec.

The model is a very fast, very capable, somewhat unpredictable collaborator that will do exactly what you asked for, which is often not quite what you wanted. Every senior developer has worked with humans who operate this way. You already know how to write for them.