The Simple Version

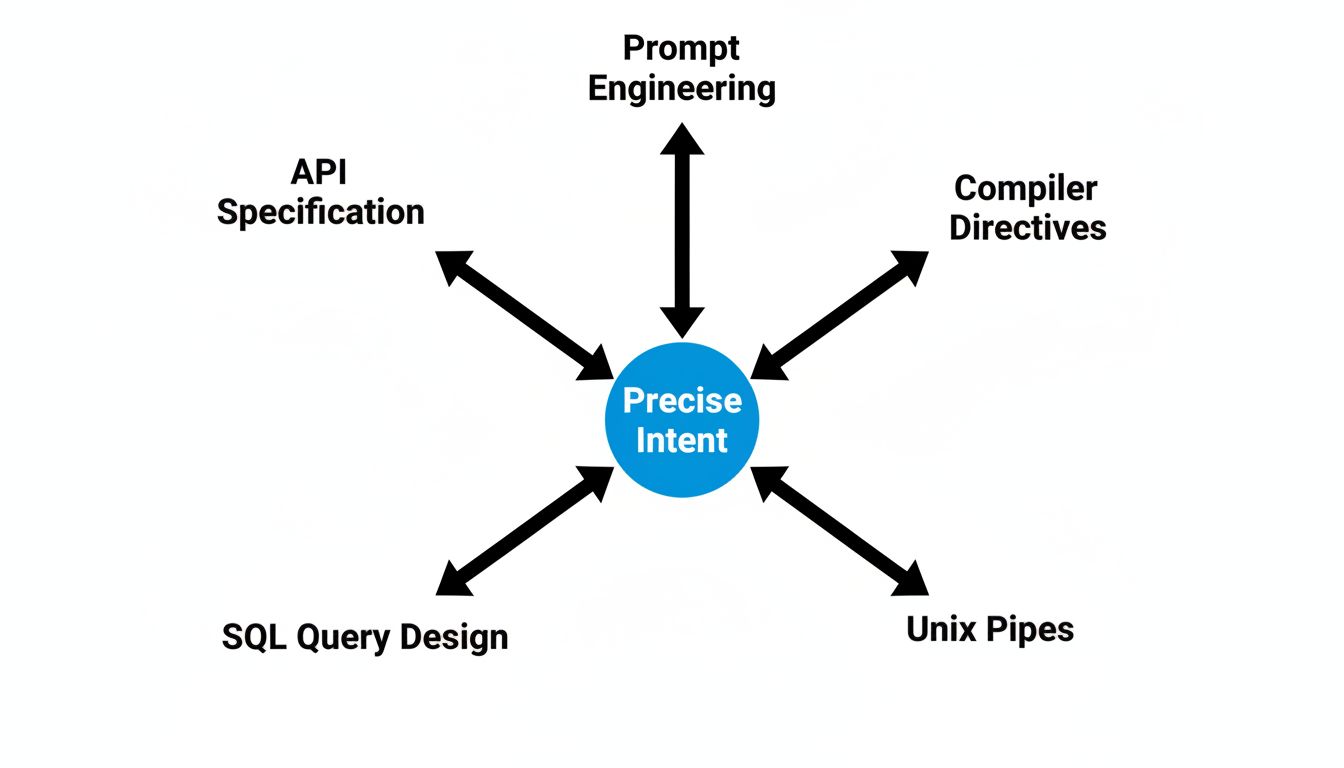

Prompt engineering is the practice of writing precise instructions to get reliable output from an AI model. It sounds novel, but it’s the same discipline engineers have applied to APIs, compilers, and query languages for fifty years.

Why It Feels Like a New Skill

When a technology is new enough to be mysterious, people treat adjacent skills as mysterious too. SQL was once considered a specialized craft. Writing a Makefile well was an art. Knowing how to structure a Unix pipe to get exactly the output you needed was a mark of expertise. Now these things are just table stakes.

Prompt engineering is at that same early stage where the skill is real but the mystique is inflated. The gap between a prompt that works and one that doesn’t can be the difference between output you can use and output you have to throw away. A vague instruction to a language model produces a vague result, just like a vague ticket to a developer produces a vague feature. But the underlying skill, specifying intent precisely for a system that acts on what you wrote rather than what you meant, is not new.

What’s new is the interface. Natural language is a looser, more ambiguous medium than Python or SQL, which means the engineering discipline has to compensate. You’re not fighting a compiler’s type system. You’re fighting a probabilistic system that will confidently fill in gaps you didn’t know you left.

What Engineers Already Know How to Do

Consider what good prompt engineering actually involves. You define the task clearly. You constrain the output format. You provide examples of what success looks like. You test against edge cases. You iterate when the output drifts from your intent.

That’s a spec. That’s a test suite. That’s debugging. The vocabulary is different but the loop is identical to what engineers do when writing documentation for an API, configuring a complex system, or specifying behavior for a compiler. The article “Prompt Engineering Is Just Documentation, and You Already Know How to Do It” makes a related point: the skills transfer directly, they just arrive wearing unfamiliar clothes.
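To make the comparison with a spec concrete, here is a minimal sketch of a prompt assembled from the parts listed above: a task definition, an output constraint, and a few examples. The `PromptSpec` class, its field names, and the sentiment task are hypothetical and chosen only for illustration; no particular model or library is assumed.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the parts of a prompt; not a library type."""

    task: str
    output_format: str
    examples: list[tuple[str, str]] = field(default_factory=list)

    def render(self, user_input: str) -> str:
        """Assemble the sections in a fixed order so every prompt has the same shape."""
        parts = [f"Task: {self.task}", f"Output format: {self.output_format}"]
        for example_input, example_output in self.examples:
            parts.append(f"Input: {example_input}\nOutput: {example_output}")
        # The final block leaves "Output:" open for the model to complete.
        parts.append(f"Input: {user_input}\nOutput:")
        return "\n\n".join(parts)


# Illustrative task and examples (assumptions, not from any real dataset).
spec = PromptSpec(
    task="Classify the sentiment of a product review.",
    output_format="Exactly one word: positive, negative, or neutral.",
    examples=[
        ("Arrived broken and support never replied.", "negative"),
        ("Does what it says. Nothing special.", "neutral"),
    ],
)

prompt = spec.render("Best purchase I've made this year.")
print(prompt)
```

Keeping the parts in a structure rather than a hand-edited string means each one can be changed and retested on its own, the same way you would revise one clause of a spec.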

The specific techniques that prompt engineers use also map cleanly to established practices. Chain-of-thought prompting (asking the model to reason step-by-step before answering) is essentially forcing the system to show its work, which is what any good code review process demands. Few-shot examples play the role of training data at micro scale, although they are supplied in the prompt rather than learned into the model’s weights. Structured output constraints are schema validation. None of these are inventions. They’re adaptations.
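The schema-validation analogy can be taken literally. The sketch below checks a raw model response against a small hand-written schema using only the standard library. The function name `parse_model_output`, the `label` and `confidence` fields, and the allowed labels are assumptions made for this example, not part of any model’s API.

```python
import json

# Hypothetical label set for an illustrative sentiment task.
ALLOWED_LABELS = {"positive", "negative", "neutral"}


def parse_model_output(raw: str) -> dict:
    """Validate a raw model response against a small hand-written schema.

    Raises ValueError on any violation so the caller can retry or log it.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc

    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if set(data) != {"label", "confidence"}:
        raise ValueError(f"unexpected keys: {sorted(data)}")

    label = data["label"]
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        raise ValueError(f"unknown label: {label!r}")

    confidence = data["confidence"]
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError("confidence must be a number")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return data


result = parse_model_output('{"label": "positive", "confidence": 0.9}')
print(result["label"])
```

Rejecting anything outside the schema, including a well-formed answer wrapped in extra prose, turns a subtle downstream bug into an immediate, loggable failure.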

Where the Craft Is Actually Hard

Saying that prompt engineering isn’t new doesn’t mean it’s easy. There are genuinely hard problems here.

The most significant is that the system you’re specifying behavior for is not deterministic. When you write a SQL query, the database does exactly what you asked. When you write a prompt, the model does something in the neighborhood of what you asked, weighted by patterns in its training data, and the same prompt can produce different output from one run to the next. That introduces a class of failure modes that traditional engineering disciplines don’t prepare you well for.
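One way to adapt testing to a non-deterministic system is to measure a pass rate over repeated runs instead of asserting on a single response. The sketch below does this against `fake_model`, a stand-in that answers correctly about 90% of the time. Both `fake_model` and `pass_rate` are hypothetical names for this example; with a real model you would replace the stand-in with an actual call.

```python
import random
from typing import Callable


def fake_model(prompt: str, rng: random.Random) -> str:
    """Stand-in for a real model call: returns the right answer about 90% of the time."""
    return "4" if rng.random() < 0.9 else "5"


def pass_rate(
    model: Callable[[str, random.Random], str],
    prompt: str,
    check: Callable[[str], bool],
    runs: int,
    seed: int = 0,
) -> float:
    """Run the same prompt `runs` times and return the fraction of outputs passing `check`."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    rng = random.Random(seed)  # seeded so the measurement itself is repeatable
    passed = sum(1 for _ in range(runs) if check(model(prompt, rng)))
    return passed / runs


rate = pass_rate(
    fake_model,
    "What is 2 + 2? Answer with a number only.",
    check=lambda output: output.strip() == "4",
    runs=200,
)
print(f"pass rate: {rate:.2f}")
```

The test then becomes a threshold ("at least 85% of runs pass") rather than an equality check, and a drop in the rate after a prompt change is the regression signal.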

You also can’t fully inspect the system you’re instructing. A compiler will tell you exactly where your syntax broke. A language model will give you a confident, coherent response that’s subtly wrong in ways that are hard to catch programmatically. And as “The Prompt You Wrote Is Not the Prompt the Model Reads” explains, there’s a real gap between what you write and what the model actually processes, because tokenization, context windows, and system-level formatting all intervene before your instruction reaches anything resembling the model’s attention.

These are hard problems. They reward careful thinking, systematic testing, and precision with language. They’re just not uniquely hard in the way the current discourse implies.

The Marketing Problem

The inflation of prompt engineering as a discipline serves specific interests. Consultants can charge more for a novel skill than a familiar one. Bootcamps need something to sell. AI companies benefit when their tools appear to require specialized expertise, because that expertise creates lock-in and justifies adoption.

None of this is a conspiracy. It’s just how new technology gets positioned. The same thing happened with “data science” in the early 2010s, a real skill that absorbed genuine statistical rigor, some programming competence, and domain knowledge, then got repackaged as something rarer and more exotic than it was. Many data scientists were doing work that, in another era, would have been called statistics or business analysis.

Prompt engineering is following the same arc. The skill is real. The premium attached to its novelty is not.

What This Actually Means for You

If you’re an engineer, technical writer, or product manager wondering whether you need to learn something entirely new, the answer is mostly no. You need to extend what you already know into a new context.

The things that make you good at writing clear specifications, debugging ambiguous systems, and thinking about what a downstream consumer of your output actually needs are the things that make you good at prompt engineering. The specific mechanics of few-shot prompting, output formatting, and temperature settings can be picked up in an afternoon. The underlying judgment takes years, and you may already have it.

The legitimate new skill is understanding how language models fail. They’re confidently wrong in specific, learnable ways. They’re sensitive to framing in ways that traditional systems aren’t. They degrade in ways that are probabilistic rather than deterministic, which means your testing and validation approaches need to adjust. That part is worth studying seriously.

But “understanding how a new system fails” is just engineering. We’ve always done that. We just used to call it something less marketable.