In 2013, Salesforce gave a product demo at Dreamforce, its annual user conference, that went exactly as planned. Transitions were smooth. Data loaded instantly. The presenter moved through the interface with the confidence of someone who had rehearsed the exact path they were walking. The crowd responded warmly. Several attendees later said it was the most impressive demo they’d seen all year.

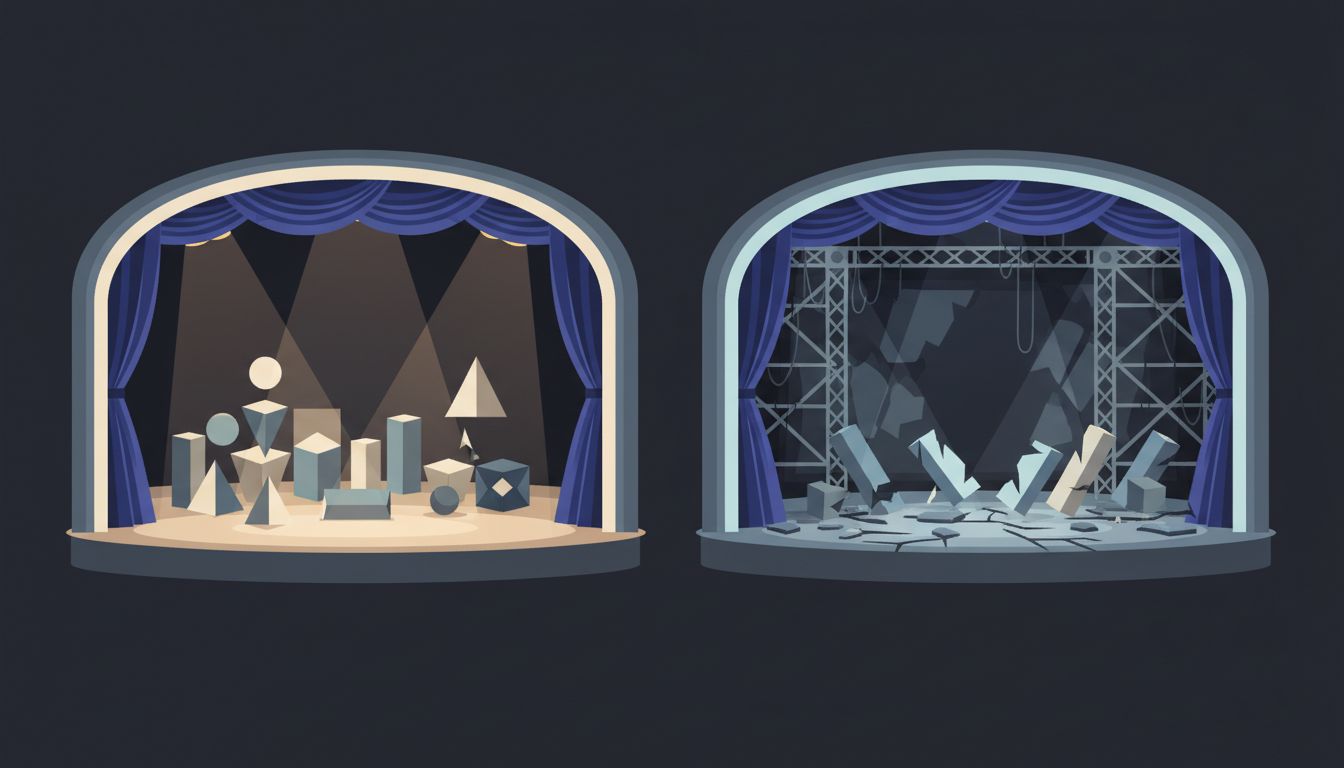

What those attendees didn’t know, and what nobody on stage mentioned, is that the demo ran on a purpose-built environment, separate from the production system that actual customers used. This isn’t unique to Salesforce. It’s standard practice across the industry, and understanding why it happens reveals something important about how software products fail.

The Setup

Salesforce, by 2013, was already the dominant CRM platform. It had real customers with real data and real complaints. Its status page regularly logged incidents. Customer forums were full of threads about slow load times, broken integrations, and features that behaved differently depending on which browser you used.

None of that existed in the demo environment.

Demo environments are engineered for a specific kind of success. The data is clean, minimal, and pre-loaded to look good. There are no edge cases, because nobody put edge cases in. The network conditions are controlled. The feature flags are set to show the best version of everything. In some cases, specific bugs that exist in production are patched in the demo environment and nowhere else, because fixing them in production would take too long and the conference was in three weeks.

The people building these demo environments aren’t doing anything dishonest in a legal sense. They’re doing exactly what they’re asked to do: make the product look compelling to an audience that needs to be convinced.

What Happened

The consequences of this practice showed up clearly in Salesforce’s enterprise rollouts throughout that era. Companies would sign six-figure contracts based on what they saw at Dreamforce or in a sales call. Then their IT teams would spend months trying to reproduce the behavior they’d been shown.

The integrations that snapped together in the demo required weeks of configuration in production. The reporting interface that rendered clean dashboards instantly on stage turned sluggish once it was connected to a company’s actual database, with five years of messy records. Features that the sales team had demonstrated confidently were, in production, still in limited availability or had known issues that appeared only at scale.

This pattern, repeated across customer after customer, contributed to what enterprise software analysts started calling the “implementation gap.” It’s the span between what a customer expects based on the sales process and what they actually experience once the contract is signed. Independent research on enterprise software consistently finds that a large majority of CRM implementations take longer and cost more than buyers expected, and satisfaction rates drop sharply in the first year of use.

Salesforce is a convenient example because it’s large and well-documented, but the same dynamic plays out at companies of every size. Startup demos at Y Combinator Demo Day are frequently polished fictions. Enterprise vendors at trade shows run similarly controlled environments. Even internal demos to executive stakeholders get the same treatment: a developer spends two days hardcoding the happy path so the VP doesn’t see what happens when the system gets unexpected input.

Why the Gap Exists

There are three structural reasons this happens, and they compound each other.

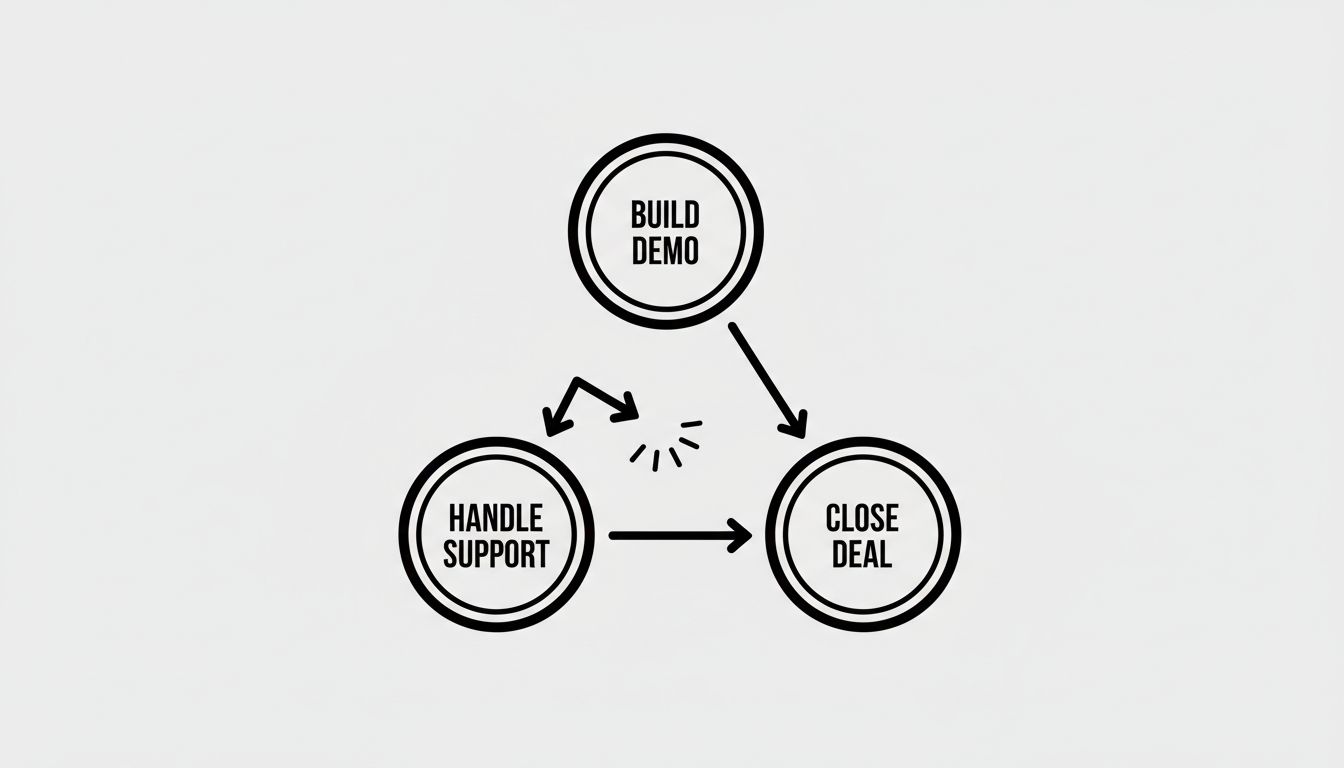

First, demos are evaluated on different criteria than products. A demo needs to be compelling in twenty minutes. A product needs to be reliable over years. These are fundamentally different engineering problems. When a team is preparing a demo, they optimize for the twenty-minute version. They can’t help it. That’s the problem they’ve been asked to solve.

Second, the people who build demos often aren’t the people who deal with production failures. A sales engineer or a developer advocate builds the demo environment. A different team handles the support tickets when customers can’t replicate the behavior they were shown. These two groups rarely sit in the same room and compare notes. The feedback loop that might fix this is broken by organizational structure.

Third, the sales cycle creates perverse incentives. A salesperson who shows a flawless demo closes the deal. A salesperson who shows a realistic demo, with the lag and the occasional error message and the feature that only half-works, loses the deal to a competitor who showed a flawless demo. The incentive structure actively punishes honesty.

The result is that every stakeholder in the process behaves rationally, and the collective outcome is a system that consistently misleads buyers. Nobody is lying, exactly. Everyone is just solving their immediate problem.

What Buyers Can Actually Do

If you’re evaluating software for your organization, the single most useful thing you can do is refuse the vendor’s demo environment. Ask to see the product running against a sample of your own data, in your own infrastructure, on a normal afternoon when nobody is watching. You will likely see a very different product.

Specifically, ask the sales team to show you:

The error states. What does the system look like when something goes wrong? A product that handles errors gracefully is a product that was built by people who expected errors. A product that crashes or shows a blank screen or throws a raw stack trace is a product where error handling was an afterthought.

The feature at scale. The demo dataset probably has forty records. Your real use case has forty thousand. Ask what happens. If they can’t show you, that’s important information.

The integration in a real environment. If the vendor claims their product integrates with your existing tools, ask for that integration to be demonstrated against a staging version of your actual system, not their generic connector demo.

If the vendor resists these requests, that resistance is itself diagnostic. A team that’s proud of what they’ve built will generally welcome scrutiny. A team that’s hiding the gap between the demo and the product will find reasons to keep you in the controlled environment.

For teams building software, the lesson runs in the other direction. Your demo environment is a preview of what your product could be, and the gap between the demo and the shipping product is a backlog. Treating that gap as technical debt worth tracking is one of the more honest things a development team can do. If you can make it work in the demo, you know what you’re aiming for. The question is whether you have the organizational will to actually get there.

The companies that close this gap fastest tend to be the ones that make the same person responsible for building the demo and answering the support tickets. When the engineer who demos the product on Tuesday also hears about the production failure on Thursday, the feedback loop that organizational structure broke gets repaired by personal accountability.

Salesforce, to its credit, has improved substantially over the years. Its uptime and reliability metrics are genuinely strong now, and its implementation ecosystem has matured. But the demo environment still exists. It always will, because the incentive to show your best self hasn’t changed. The gap just got smaller because someone, at some point, decided to treat it as a gap worth closing.