Vector databases are having a moment, and with that comes a lot of hand-wavy explanations about how they “understand” your data. They don’t. What they do is considerably more interesting, and considerably stranger, than the marketing copy suggests. Here’s what’s actually happening.

1. “Meaning” Gets Converted to Coordinates

Before a vector database can do anything useful, your text (or image, or audio) has to become a list of numbers. This is what an embedding model does. You feed it the sentence “the cat sat on the mat” and it returns something like a list of 1,536 floating-point numbers. That list is a point in a 1,536-dimensional space.

The remarkable thing is that this conversion preserves semantic relationships as geometric ones. Words and phrases that mean similar things end up near each other in that high-dimensional space, not because anyone hand-coded that proximity, but because the model learned it from patterns across enormous amounts of text. The database itself has no idea what “cat” means. It only knows coordinates. As “Embeddings Are Doing More Work in Your Stack Than You Realize” covers, these vectors quietly do a lot of heavy lifting.

2. Similarity Is Distance, and Distance Is Geometry

When you query a vector database, you’re not asking “find things that mean the same thing.” You’re asking “find the points closest to this point.” The most common ways to measure that closeness are cosine similarity (the angle between two vectors) and Euclidean distance (the straight-line distance between two points in space).

Cosine similarity is particularly useful for text because it ignores magnitude and only cares about direction. A short tweet and a long essay about the same topic can end up pointing in nearly the same direction even if their raw vectors have very different scales. This is not a metaphor. The math works out this way literally: cosine similarity is the dot product of the two vectors divided by the product of their lengths, so any difference in scale cancels out.
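To make the difference between the two measures concrete, here is a minimal sketch using only the Python standard library. The three vectors are made-up toy values, not real embeddings; `long_doc` is simply `short_doc` scaled by 10, so the two point in exactly the same direction.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    return math.sqrt(dot(a, a))

def cosine_similarity(a, b):
    # Dividing by both lengths cancels magnitude; only direction remains.
    return dot(a, b) / (norm(a) * norm(b))

def euclidean_distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Toy vectors (assumed values for illustration only).
short_doc = [1.0, 2.0, 3.0]
long_doc = [10.0, 20.0, 30.0]   # same direction, 10x the magnitude
other_doc = [3.0, -1.0, 0.5]    # a different direction

print("cosine(short, long):   ", cosine_similarity(short_doc, long_doc))
print("euclidean(short, long):", euclidean_distance(short_doc, long_doc))
print("cosine(short, other):  ", cosine_similarity(short_doc, other_doc))
```

Cosine similarity treats the first pair as essentially identical, while Euclidean distance reports them as far apart. If vectors are normalized to unit length before storage, the two measures rank neighbors the same way.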

3. High-Dimensional Space Behaves Nothing Like 3D Space

Here’s where your intuitions will betray you. In three dimensions, a sphere inscribed in a cube fills a predictable fraction of the cube’s volume, about 52%. In 1,000 dimensions, that fraction is effectively zero: almost all the volume of a hypercube sits in the corners, not near the center. This is one face of the curse of dimensionality, and it’s not just a textbook concept. It has real consequences for search.
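The sphere-versus-cube claim can be checked with the standard formula for the volume of a d-dimensional ball. The sketch below assumes a ball of radius 1 inscribed in a cube of side 2, and works in logarithms because the raw fraction underflows a float long before 1,000 dimensions. The function name is just an illustrative choice.

```python
import math

def log10_ball_fraction(dim):
    """log10 of (volume of inscribed unit ball) / (volume of side-2 cube)."""
    # Ball volume: pi^(d/2) / Gamma(d/2 + 1). Cube volume: 2^d.
    log_fraction = (
        (dim / 2) * math.log(math.pi)
        - math.lgamma(dim / 2 + 1)
        - dim * math.log(2)
    )
    return log_fraction / math.log(10)

for dim in (2, 3, 10, 100, 1000):
    print(f"d={dim:>4}: fraction = 10^{log10_ball_fraction(dim):.1f}")
```

The exponent falls off faster than linearly with the dimension, which is the sense in which the volume "moves to the corners."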

In very high dimensions, the distances between random points concentrate: the gap between the nearest and the farthest point shrinks relative to the distances themselves, so everything becomes roughly equidistant from everything else. Nearest-neighbor search starts to lose meaning because “nearest” stops being meaningfully different from “farthest.” This is why embedding models are carefully designed to pack useful information into fewer dimensions, and why the choice of dimensionality involves genuine engineering tradeoffs, not just picking a bigger number.
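A small experiment shows the concentration effect. This sketch draws uniformly random points (an assumption; real embeddings are far from uniform, which is part of why search still works on them) and reports the ratio of the nearest distance to the farthest distance from a random query. A ratio near 1 means "nearest" and "farthest" are almost the same.

```python
import math
import random

def nearest_to_farthest_ratio(dim, n_points=200, seed=0):
    """Ratio of min to max distance from one random query to n random points."""
    rng = random.Random(seed)
    query = [rng.random() for _ in range(dim)]
    distances = []
    for _ in range(n_points):
        point = [rng.random() for _ in range(dim)]
        distances.append(math.dist(query, point))
    return min(distances) / max(distances)

for dim in (2, 10, 100, 1000):
    print(f"d={dim:>4}: nearest/farthest = {nearest_to_farthest_ratio(dim):.3f}")
```

The ratio climbs toward 1 as the dimension grows, even though the number of points stays fixed.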

4. Exact Search Is Computationally Impractical at Scale, So Databases Approximate

If you have 100 million vectors and you want the 10 closest to your query vector, the brute-force approach is to compute the distance to all 100 million vectors and sort them. That’s both mathematically exact and completely impractical. So vector databases use approximate nearest neighbor (ANN) algorithms instead.
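For reference, the brute-force baseline looks roughly like the sketch below. It is exact, and its cost is one distance computation per stored vector. At 100 million vectors of 1,536 dimensions, that is on the order of 1.5 × 10^11 multiply-adds per query. The dataset here is small random data chosen only so the example runs quickly.

```python
import heapq
import math
import random

def brute_force_top_k(query, vectors, k):
    """Exact k nearest neighbors: returns [(distance, index), ...] closest first."""
    # One distance per stored vector: O(n * d) work for every single query.
    scored = ((math.dist(query, v), i) for i, v in enumerate(vectors))
    # nsmallest keeps only k candidates, so no full sort is needed.
    return heapq.nsmallest(k, scored)

rng = random.Random(0)
vectors = [[rng.random() for _ in range(32)] for _ in range(10_000)]
query = [rng.random() for _ in range(32)]

for distance, index in brute_force_top_k(query, vectors, k=10):
    print(index, round(distance, 4))
```

Keeping only the top k with a heap avoids the full sort, but it does not avoid touching every vector, and that linear scan is what ANN indexes exist to skip.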

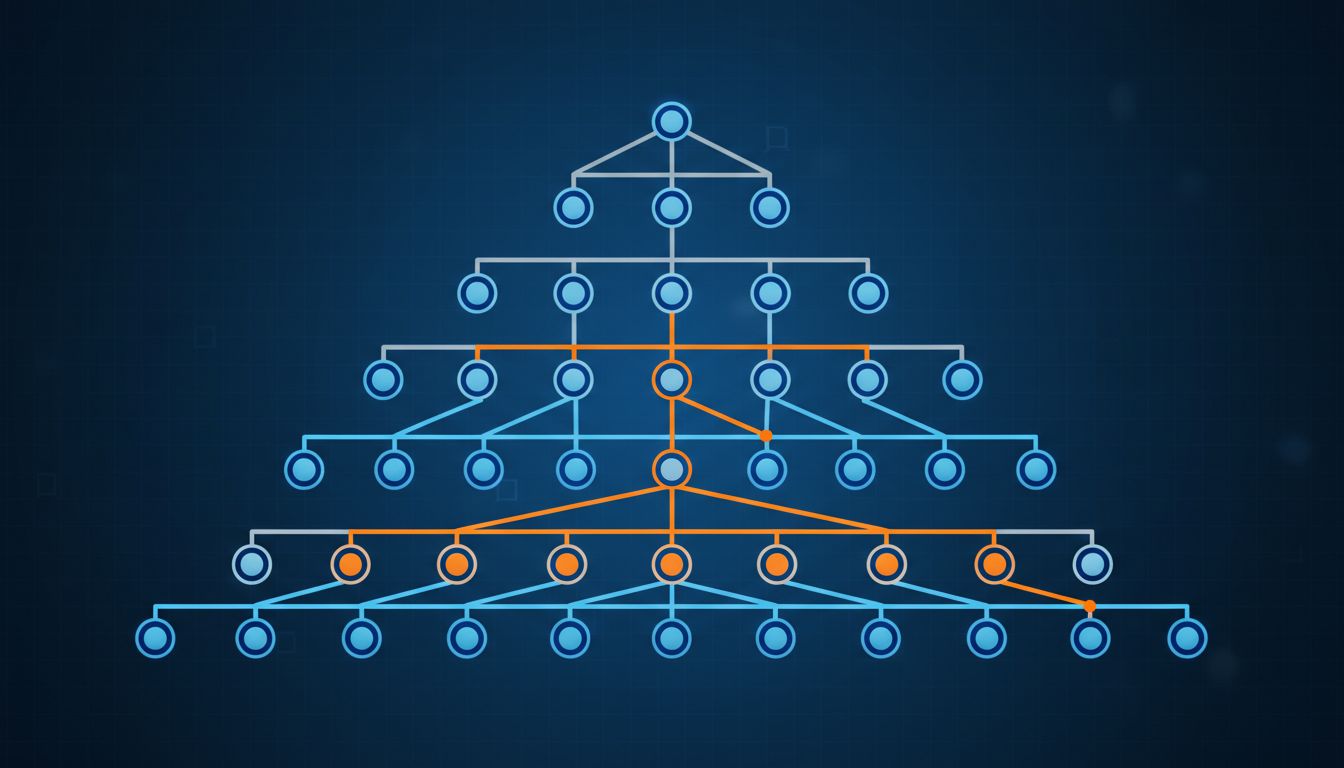

The most widely used approach is HNSW (Hierarchical Navigable Small World graphs), which builds a multi-layer graph where each higher layer is a progressively sparser view of the data. At query time, you enter at the top layer (few nodes, fast navigation), find approximate neighbors, then descend to lower layers for refinement. It’s structurally similar to a skip list. You get results that are almost always in the true top-10, and you get them in milliseconds instead of seconds. The tradeoff is a recall rate that tops out below 100%, which is a real engineering decision you have to make consciously. Weaviate and Qdrant both default to HNSW indexes for this reason, and many other engines offer it as an option.
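The core move inside each HNSW layer is a best-first walk over a neighbor graph. The sketch below is a deliberately simplified, single-layer version and not HNSW itself: it has no hierarchy, and it builds the graph by brute force, which a real index never does. The names `build_knn_graph`, `greedy_search`, `m`, and `ef` are illustrative choices, though `m` and `ef` loosely mirror the parameter names HNSW implementations tend to expose.

```python
import heapq
import math
import random

def build_knn_graph(vectors, m):
    """Link each vector to its m nearest neighbors, in both directions.
    Built by brute force purely for illustration."""
    graph = {i: set() for i in range(len(vectors))}
    for i, v in enumerate(vectors):
        nearest = heapq.nsmallest(
            m + 1, range(len(vectors)), key=lambda j: math.dist(v, vectors[j])
        )
        for j in nearest:
            if j != i:
                graph[i].add(j)
                graph[j].add(i)
    return graph

def greedy_search(query, vectors, graph, entry, k, ef):
    """Best-first graph walk keeping the ef closest nodes seen so far.
    Returns (top-k as [(distance, index)], number of distances computed)."""
    d0 = math.dist(query, vectors[entry])
    visited = {entry}
    candidates = [(d0, entry)]   # min-heap: closest unexplored node first
    best = [(-d0, entry)]        # max-heap via negation: worst kept result on top
    while candidates:
        d, node = heapq.heappop(candidates)
        if len(best) >= ef and d > -best[0][0]:
            break  # closest candidate is worse than everything we already hold
        for nb in graph[node]:
            if nb in visited:
                continue
            visited.add(nb)
            d_nb = math.dist(query, vectors[nb])
            if len(best) < ef or d_nb < -best[0][0]:
                heapq.heappush(candidates, (d_nb, nb))
                heapq.heappush(best, (-d_nb, nb))
                if len(best) > ef:
                    heapq.heappop(best)  # drop the current worst
    results = sorted((-neg_d, i) for neg_d, i in best)
    return results[:k], len(visited)

rng = random.Random(0)
vectors = [[rng.random() for _ in range(8)] for _ in range(500)]
query = [rng.random() for _ in range(8)]
graph = build_knn_graph(vectors, m=8)

approx, n_visited = greedy_search(query, vectors, graph, entry=0, k=5, ef=32)
exact = heapq.nsmallest(5, ((math.dist(query, v), i) for i, v in enumerate(vectors)))
recall = len({i for _, i in approx} & {i for _, i in exact}) / 5
print(f"recall@5 = {recall:.2f}, distances computed: {n_visited} of {len(vectors)}")
```

Raising `ef` widens the search and generally trades more distance computations for higher recall, which is the knob the recall-versus-latency decision usually comes down to.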

5. The Index Is Not the Data, and That Distinction Matters for Updates

In a traditional relational database, adding a row is cheap. In a vector database, adding a vector means updating the index structure that makes fast search possible, and that’s significantly more expensive. With HNSW specifically, inserting new vectors requires connecting them into the graph, which involves finding their neighbors, which involves traversing the graph. This is why most production vector databases expose tunable parameters around index building versus query performance, and why some batch their inserts.

This also means deletes are awkward. Marking a vector as deleted without rebuilding the index is common, but it leaves “tombstoned” entries that still occupy space in the graph and can affect search quality over time. Many systems require periodic re-indexing to stay healthy, which has real operational cost. If you’ve thought carefully about “Deleting Data Is One of the Hardest Problems in Software Engineering,” this will feel familiar.
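The tombstone pattern can be sketched in a few lines. `TombstoneIndex` below is a hypothetical toy class, not the API of any real vector database, and it uses a flat list rather than a graph to keep the example short. The shape of the tradeoff is the same: deletes are cheap marks, and reclaiming the space is a separate, expensive rebuild.

```python
import math

class TombstoneIndex:
    """Hypothetical flat index illustrating soft deletes."""

    def __init__(self):
        self.vectors = []      # slot i holds the vector with internal id i
        self.deleted = set()   # tombstoned ids: still stored, hidden from results

    def add(self, vector):
        self.vectors.append(list(vector))
        return len(self.vectors) - 1

    def delete(self, idx):
        # Cheap: nothing is rebuilt, the slot is only marked.
        self.deleted.add(idx)

    def search(self, query, k):
        # Tombstoned slots are still scanned, then filtered out.
        scored = sorted(
            (math.dist(query, v), i)
            for i, v in enumerate(self.vectors)
            if i not in self.deleted
        )
        return scored[:k]

    def tombstone_ratio(self):
        return len(self.deleted) / len(self.vectors) if self.vectors else 0.0

    def compact(self):
        # Expensive: rebuild storage without tombstoned entries.
        # Internal ids shift, so callers would need to remap them.
        self.vectors = [
            v for i, v in enumerate(self.vectors) if i not in self.deleted
        ]
        self.deleted.clear()

index = TombstoneIndex()
for vec in ([0.0, 0.0], [0.1, 0.1], [1.0, 1.0], [2.0, 2.0]):
    index.add(vec)
index.delete(1)
print(index.search([0.0, 0.0], k=2), index.tombstone_ratio())
index.compact()
print(len(index.vectors), index.tombstone_ratio())
```

A reasonable operational policy, under these assumptions, is to watch something like `tombstone_ratio()` and schedule the rebuild once it crosses a threshold.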

6. The Model That Created Your Vectors and the Query That Searches Them Must Match

This one trips people up in production. Vector databases don’t care how your numbers were generated. They’ll happily store vectors from any model. But similarity search only makes sense if your stored vectors and your query vectors live in the same geometric space, which means they must be produced by the same embedding model.

If you embed your documents with OpenAI’s text-embedding-3-large and then query with a different model (or even a different version of the same model), the coordinates won’t correspond to the same spatial structure. Your search results will be garbage, and they’ll be confident-looking garbage, which is the worst kind. This is why model versioning for embedding pipelines is a genuine operational concern, not a theoretical one. When embedding models get updated, the responsible path is to re-embed your entire corpus, which for large datasets means planning ahead and budgeting for it.
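One inexpensive safeguard is to record the embedding model alongside the collection and refuse any write or query that names a different one. The `Collection` class below is a hypothetical sketch of that idea, not a feature of any particular database, and the model identifiers and dimension of 3 are placeholder values.

```python
import math

class Collection:
    """Hypothetical wrapper that pins a collection to one embedding model."""

    def __init__(self, model_id, dim):
        self.model_id = model_id
        self.dim = dim
        self.items = []  # list of (doc_id, vector)

    def _check(self, vector, model_id):
        if model_id != self.model_id:
            raise ValueError(
                f"vector came from {model_id!r}, collection uses {self.model_id!r}"
            )
        if len(vector) != self.dim:
            raise ValueError(f"expected {self.dim} dimensions, got {len(vector)}")

    def add(self, doc_id, vector, model_id):
        self._check(vector, model_id)
        self.items.append((doc_id, list(vector)))

    def search(self, vector, model_id, k=3):
        self._check(vector, model_id)
        scored = sorted((math.dist(vector, v), doc_id) for doc_id, v in self.items)
        return scored[:k]

docs = Collection(model_id="embed-model-v1", dim=3)  # placeholder model name
docs.add("a", [0.1, 0.2, 0.3], model_id="embed-model-v1")
docs.add("b", [0.9, 0.8, 0.7], model_id="embed-model-v1")

print(docs.search([0.1, 0.2, 0.25], model_id="embed-model-v1", k=1))
try:
    docs.search([0.1, 0.2, 0.25], model_id="embed-model-v2")
except ValueError as err:
    print("rejected:", err)
```

The dimension check alone is not enough, because two different models can emit vectors of the same length. The explicit model identifier is what turns silent garbage into a loud error.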

Vector databases are a genuinely clever solution to a hard problem: making high-dimensional geometric search fast enough to be useful. The abstraction of “semantic search” is convenient, but it obscures what’s actually happening. Under the hood, it’s coordinates, distance functions, approximate graph traversal, and careful engineering around the places where the math gets uncomfortable. That’s worth understanding before you build on top of it.