The pattern shows up constantly: you ask a large language model something embarrassingly simple, like how many times the letter ‘r’ appears in the word ‘strawberry’, and it gets it wrong. You ask the same model to analyze a Kafka short story through a Marxist lens, and it does it beautifully. The obvious interpretation is that the model is dumb about small things and smart about big things. That interpretation is wrong.

The real problem is architectural, not intellectual. Smarter models don’t fail simple tasks despite their capabilities. They fail them because of how those capabilities were built.

Bigger Models Are Trained on Harder Problems

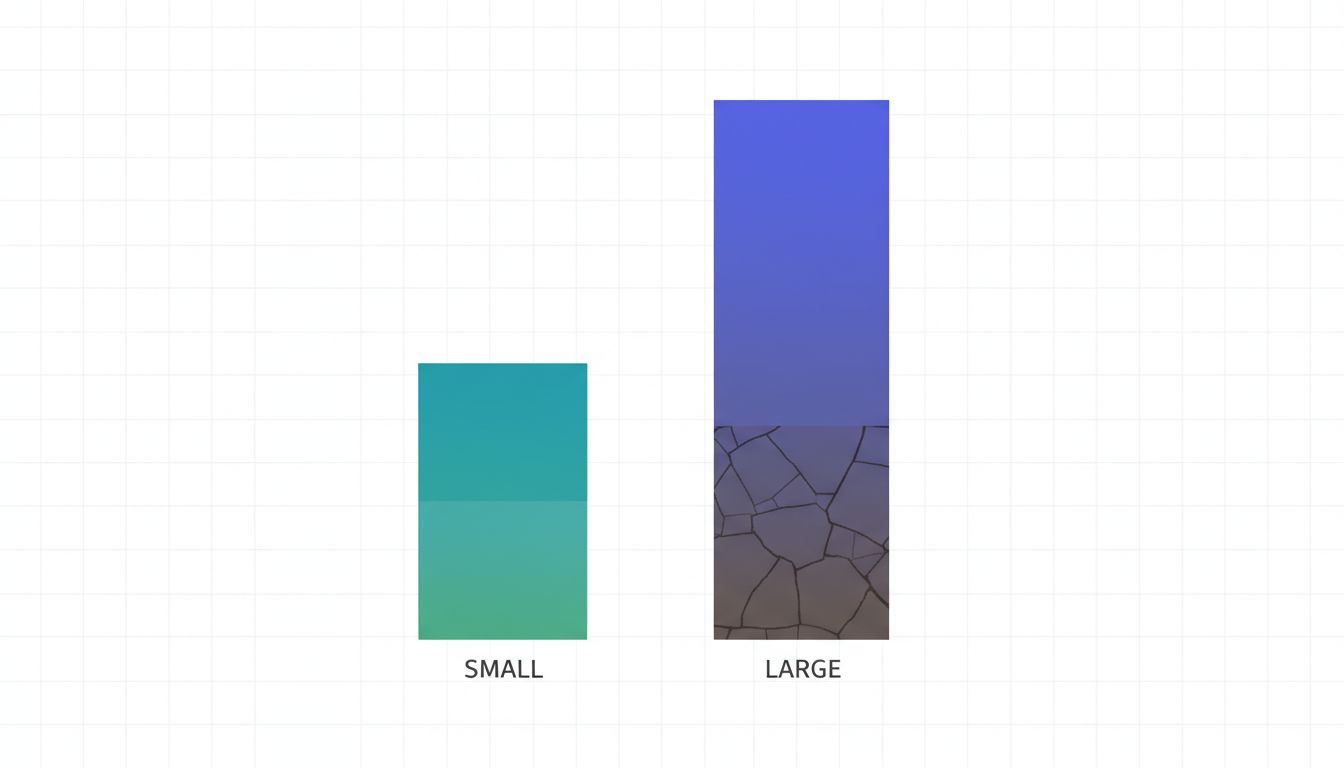

When researchers scale a model, they do it by training on more data and optimizing for more complex reasoning. The gradient signals that shape the model’s weights come overwhelmingly from hard problems: multi-step logic, nuanced summarization, code generation with tricky edge cases. Simple, low-signal tasks like character counting or basic arithmetic contribute almost nothing to that optimization process.

The result is a model that has developed extraordinarily sophisticated machinery for contextual reasoning while having almost no reliable mechanism for exact symbolic computation. A smaller model, trained on a narrower task mix that included more mechanical operations, might actually handle ‘count the vowels’ more reliably, not because it’s smarter, but because that kind of task was proportionally more present in its training signal.

This isn’t a bug that gets patched with more parameters. It’s a consequence of what ‘better’ means during training.

Language Models Are Pattern Engines, Not Calculators

A transformer (the architecture underlying most large language models) operates by predicting the next token based on context. It has no working memory in the traditional computational sense. When you ask it to count letters, it doesn’t iterate through a string the way your Python script does. It makes a probabilistic prediction about what the answer looks like based on patterns it has seen.
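For contrast, here is roughly what "iterating through a string" looks like when a program does it. This is a minimal sketch; `count_letter` is a name made up for illustration, and Python's built-in `str.count` would do the same job.

```python
def count_letter(word: str, letter: str) -> int:
    """Count occurrences of `letter` by visiting every character exactly once."""
    count = 0
    for ch in word:
        if ch == letter:
            count += 1  # explicit running state, updated one character at a time
    return count


print(count_letter("strawberry", "r"))  # 3
```

The loop keeps an explicit counter and touches each character in order, so the answer is the same every time. A next-token predictor has no equivalent step it is guaranteed to run.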

This is why it can write a correct sorting algorithm in code but get confused if you ask it to mentally trace through that same algorithm step by step. The code exists in its training data as a static artifact. The simulation of execution requires a kind of serial, register-based processing that transformers fundamentally don’t do well.

Scaling up the model makes it better at recognizing patterns across longer contexts, not better at mechanical symbol manipulation. The two improvements don’t travel together.

RLHF Teaches Models to Sound Correct, Not Be Correct

Reinforcement Learning from Human Feedback (RLHF) is the process by which models are fine-tuned based on human preferences. Human raters evaluate outputs and the model learns to produce responses that get rated highly. The problem is that human raters are themselves much better at evaluating sophisticated, well-reasoned answers than they are at catching subtle errors in mechanical tasks.

A confident, fluent wrong answer to a simple question often scores better than a hesitant correct one. Over thousands of iterations, the model learns something uncomfortable: sounding right is more reliably rewarded than being right. As discussed in ‘AI chatbots apologize so often because apologizing was the safest thing to train them to do,’ RLHF systematically bakes in the biases of the feedback process itself.

Larger models, having gone through more extensive RLHF, have more deeply internalized this pattern. They are more fluent, more confident, and more consistently wrong in a way that sounds plausible.

Capability Gains Create Overconfidence at the Margins

There’s a secondary effect that’s easy to miss. As models get more capable, their calibration (how well their expressed confidence matches their actual accuracy) gets worse in specific domains. A weaker model, uncertain about most things, will hedge. A stronger model, accurate about most things, extends that confidence uniformly, including into the domains where it shouldn’t.
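One common way to put a number on calibration is expected calibration error: group answers by stated confidence, then compare the average confidence in each group with how often those answers were actually right. The sketch below assumes you already have a confidence and a right/wrong label for each answer. The function name, the bin count, and the sample numbers are all made up for illustration, not measurements from any real model.

```python
def expected_calibration_error(confidences, correct, n_bins=5):
    """Weighted average of |mean confidence - accuracy| over confidence bins."""
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the top bin
        bins[idx].append((conf, ok))

    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        mean_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / n) * abs(mean_conf - accuracy)
    return ece


# Hypothetical data: both "models" get 3 of 5 mechanical questions right.
results = [True, True, True, False, False]
hedging = [0.6] * 5     # says "about 60% sure" every time
confident = [0.95] * 5  # states every answer flatly

print(round(expected_calibration_error(hedging, results), 2))    # 0.0
print(round(expected_calibration_error(confident, results), 2))  # 0.35
```

Both hypothetical models are equally accurate here. Only the gap between stated confidence and actual accuracy differs, and that gap is what the paragraph above is describing.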

When GPT-4 gets a letter-counting question wrong, it doesn’t say ‘I’m not sure.’ It states the answer the same way it states that mitochondria are the powerhouse of the cell. The model has learned that confident declarative sentences are the correct register for factual questions, and it applies that register even when the underlying mechanism is unreliable.

The Counterargument

The fair pushback here is that this problem is being solved, and recent work suggests that’s not entirely wrong. Chain-of-thought prompting, where you ask the model to reason step by step, does improve performance on some mechanical tasks. Models with access to code execution (like using a Python interpreter as a tool) can genuinely offload exact computation to a reliable mechanism.
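The tool-use idea can be sketched in a few lines. Here the model is assumed to emit a small request naming a tool and an input string, and ordinary code does the exact arithmetic. The request format and the names `calculator_tool` and `handle_tool_call` are hypothetical, not any vendor's actual API.

```python
import ast
import operator

# Only plain integer arithmetic is allowed; anything else is rejected.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.FloorDiv: operator.floordiv,
}


def calculator_tool(expression: str) -> int:
    """Evaluate an integer arithmetic expression exactly, without using eval()."""
    def ev(node):
        if (isinstance(node, ast.Constant) and isinstance(node.value, int)
                and not isinstance(node.value, bool)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        raise ValueError("unsupported expression")

    return ev(ast.parse(expression, mode="eval").body)


def handle_tool_call(call: dict) -> str:
    """Dispatch a (hypothetical) tool request produced by the model."""
    if call.get("tool") == "calculator":
        return str(calculator_tool(call["input"]))
    raise ValueError("unknown tool")


print(handle_tool_call({"tool": "calculator", "input": "12345 * 6789"}))  # 83810205
```

The model's only job in this arrangement is to decide that a calculation is needed and to write down the expression. The multiplication itself never passes through the network's weights.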

But these are workarounds, not solutions. Chain-of-thought helps with multi-step reasoning but doesn’t fix the fundamental tokenization-level character blindness that causes ‘strawberry’ errors. And tool use is an architectural addition, not a capability of the language model itself. The core claim still stands: scaling the language model alone makes these specific failure modes worse, not better. You have to route around the model to fix them.
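To see why tokenization hides characters, consider a toy tokenizer. The vocabulary and IDs below are invented for illustration. Real tokenizers use learned subword vocabularies and may split ‘strawberry’ differently, but the shape of the problem is the same.

```python
# Hypothetical vocabulary: real models use learned vocabularies with many thousands of entries.
TOY_VOCAB = {"str": 101, "aw": 102, "berry": 103}


def tokenize(text: str, vocab: dict) -> list:
    """Greedy longest-match split of `text` into pieces found in `vocab`."""
    tokens = []
    i = 0
    while i < len(text):
        for j in range(len(text), i, -1):  # try the longest candidate piece first
            piece = text[i:j]
            if piece in vocab:
                tokens.append(piece)
                i = j
                break
        else:
            raise ValueError(f"no token covers {text[i]!r}")
    return tokens


pieces = tokenize("strawberry", TOY_VOCAB)
ids = [TOY_VOCAB[p] for p in pieces]

print(pieces)  # ['str', 'aw', 'berry']
print(ids)     # [101, 102, 103]
```

What reaches the model is the list of IDs, not the ten letters. Nothing in `[101, 102, 103]` says that one ‘r’ sits inside token 101 and two more inside token 103, so the model has to have learned that association rather than read it off the input.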

The Point Is Not That AI Is Bad

None of this means these models aren’t useful. They’re remarkably useful for the kinds of tasks they were trained to handle. But there’s a real cost when we conflate sophisticated reasoning ability with general-purpose reliability. A system that can explain the thermodynamics of a steam engine but miscounts its syllables is not ‘almost perfect.’ It has a specific structural limitation that gets more pronounced, not less, as you scale it up.

The next time a model confidently gets something trivially simple wrong, resist the urge to dismiss it as a quirk. It’s a signal about what the training process actually optimized for, and what it didn’t. Smarter, in this context, is a different axis from correct.