You have seen it happen. A vendor walks into a conference room, opens a laptop, and proceeds to demo software that runs like a dream. Buttons respond instantly. Data loads cleanly. Every edge case resolves itself with quiet elegance. Three months later, the same software is deployed in your environment and it crashes when two people log in simultaneously. This is not a coincidence, and it is not incompetence. There is a specific, structural reason why demos work and production doesn’t, and once you understand it, you will never watch a software demo the same way again.

This pattern connects to a broader truth about how software companies decide what to show, what to hide, and what to ship. As we’ve explored before, software companies release buggy products on purpose, and the business logic behind that choice is airtight. The demo environment is just the upstream version of that same logic.

The Demo Is a Different Piece of Software

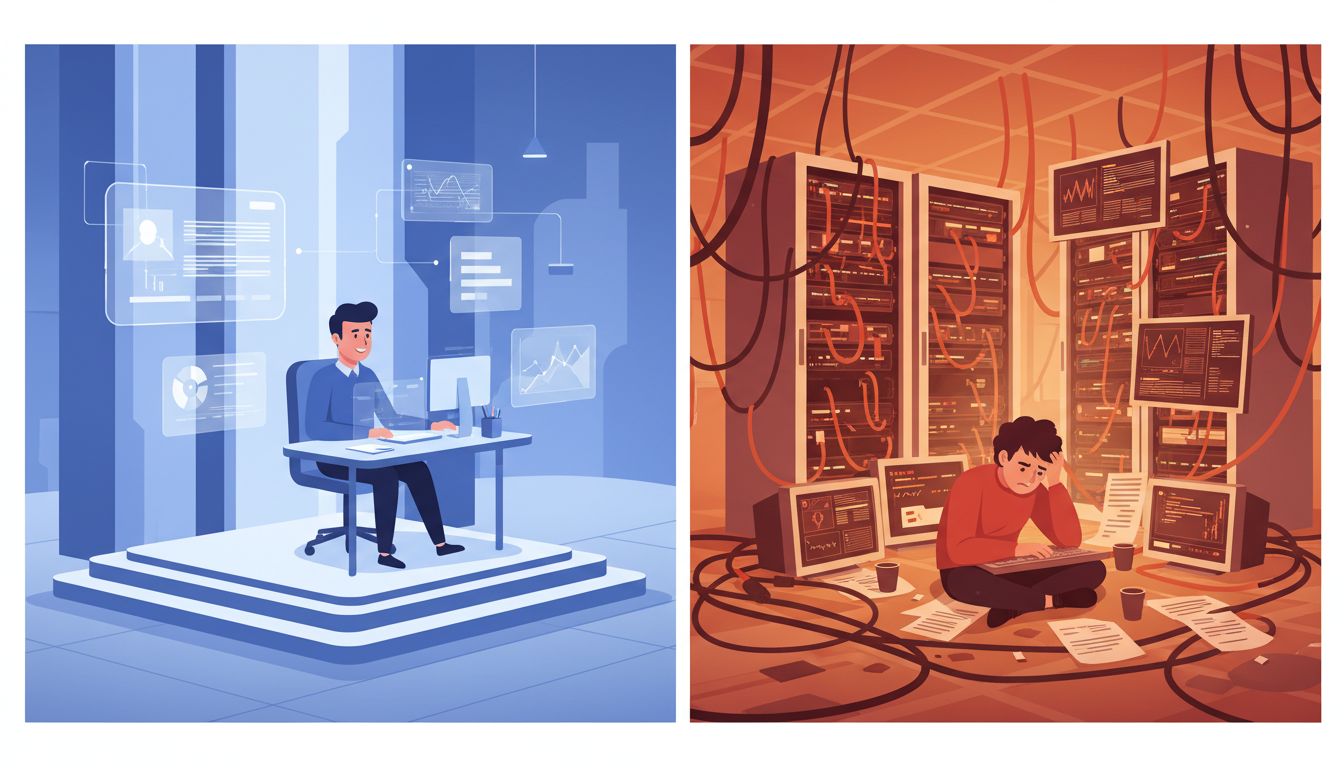

Here is the uncomfortable truth that most vendors will not say out loud: a demo environment is not a scaled-down version of production. It is a purpose-built theatrical set.

Demo environments typically run on local hardware or a tightly controlled cloud instance with no real network latency, no concurrent users, no legacy data, and no integrations with external systems. The database contains maybe 200 carefully curated rows, all formatted perfectly, with no nulls, no duplicate entries, and no weird edge cases from five years of real-world data entry.

In production, you have 800,000 rows, six of which have corrupted timestamps from a migration in 2019, forty users hitting the system at once, and an ERP integration that sometimes returns an HTTP 200 with an error message in the body instead of a proper error code. The software was never tested against that reality during the demo because the demo was never meant to simulate it.
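The "HTTP 200 with an error in the body" case is worth making concrete, because a client that only checks the status code will treat the failure as a success. Here is a minimal sketch of a stricter check. It assumes the integration returns JSON and reports failures in an `error` field; the function name, the exception class, and that field are all hypothetical, since real ERP systems each have their own conventions.

```python
import json


class IntegrationError(Exception):
    """Raised when the upstream response signals a failure."""


def parse_erp_response(status_code: int, body: str) -> dict:
    """Return the payload, or raise if the response is an error in disguise."""
    if status_code != 200:
        raise IntegrationError(f"HTTP {status_code}")
    try:
        payload = json.loads(body)
    except json.JSONDecodeError as exc:
        raise IntegrationError("response body is not valid JSON") from exc
    if not isinstance(payload, dict):
        raise IntegrationError("unexpected payload shape")
    # Assumption: failures are reported in an "error" field of the body.
    if payload.get("error"):
        raise IntegrationError(str(payload["error"]))
    return payload
```

A demo never exercises the last branch, because the mocked integration never misbehaves.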

This is not fraud, exactly. But it is a structured omission.

The Happy Path Problem

Every piece of software has a “happy path,” which is the sequence of steps a user takes when everything goes right. The vendor clicks through the exact same happy path in every demo, often rehearsed dozens of times. They know which features to highlight and, more importantly, which screens to skip.

This creates a systematic blind spot. The demo never shows what happens when a user uploads a file in the wrong format, or when the API they depend on returns a timeout, or when two users try to edit the same record at the same time. Those scenarios exist in every production deployment. They just don’t exist on demo day.
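The two-users-one-record case is a good question to put to a vendor, because there are well-known ways to handle it and a demo will not tell you whether any of them are in place. One common approach is optimistic concurrency: each record carries a version number, and a write is rejected if the version changed since the writer last read it. The sketch below is an in-memory illustration only, with hypothetical class names; a real system would do the check and the write atomically in the database.

```python
class StaleWriteError(Exception):
    """Raised when a record changed after the caller read it."""


class RecordStore:
    def __init__(self):
        self._rows = {}  # record_id -> (version, data)

    def create(self, record_id, data):
        self._rows[record_id] = (1, dict(data))

    def read(self, record_id):
        version, data = self._rows[record_id]
        return version, dict(data)

    def update(self, record_id, expected_version, data):
        current_version, _ = self._rows[record_id]
        if current_version != expected_version:
            # Someone else saved first; refuse to overwrite their change.
            raise StaleWriteError(
                f"{record_id}: expected v{expected_version}, found v{current_version}"
            )
        new_version = current_version + 1
        self._rows[record_id] = (new_version, dict(data))
        return new_version
```

If two users read version 1 and both try to save, the first save succeeds and the second raises `StaleWriteError` instead of silently discarding the first user's work.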

This relates to a deeper issue with how software is scoped and built. Successful startups deliberately make their first product worse than it could be, focusing on the happy path because that’s what gets the sale or the funding. The problem is that the happy path is only a minority of real usage. Studies of enterprise software adoption consistently find that error handling, edge cases, and integration failures account for 60 to 70 percent of actual support tickets after deployment.

Why Production Environments Are Fundamentally Hostile

Production is not just a bigger version of the demo. It is a different category of environment, and the differences matter in ways that compound on each other.

First, there is scale. Software that handles ten concurrent users doesn’t always handle a hundred. Race conditions, database lock contention, and memory leaks that are invisible at small scale become catastrophic at real scale. Many of these bugs cannot appear in a demo environment because they need the load profile of actual users to trigger.
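You do not need a full load-testing tool to get a first signal on concurrency. The sketch below is a minimal smoke test that calls one action from many threads at once and collects whatever exceptions come back. The `hammer` helper and the `Counter` class are hypothetical names for illustration, and a thread-based test like this only approximates real user load.

```python
import threading


def hammer(action, workers=40, calls_per_worker=50):
    """Call `action` from many threads at once; return the exceptions raised."""
    errors = []
    errors_lock = threading.Lock()
    start = threading.Barrier(workers)

    def worker():
        start.wait()  # release every thread together to maximize overlap
        for _ in range(calls_per_worker):
            try:
                action()
            except Exception as exc:
                with errors_lock:
                    errors.append(exc)

    threads = [threading.Thread(target=worker) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return errors


class Counter:
    """A shared counter whose increment is protected by a lock."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        with self._lock:
            self.value += 1
```

Running `hammer(counter.increment)` against a lock-protected counter should return no errors and leave the count at exactly `workers * calls_per_worker`. Pointing the same harness at a vendor's API during evaluation is a cheap way to see whether forty simultaneous users were ever part of the test plan.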

Second, there is integration complexity. Modern enterprise software doesn’t run in isolation. It talks to identity providers, payment processors, data warehouses, legacy systems, and third-party APIs. Every one of those connections is a potential failure point. The demo runs with mocked integrations or none at all. Production runs with real ones that have rate limits, authentication token expiration, and undocumented behavior. It doesn’t help that tech companies sometimes deliberately make their APIs difficult to use, with the real business logic hiding in the implementation details.
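Rate limits and expiring tokens are routine in production, so the client code has to expect them. As a rough sketch, assuming the integration layer raises distinct exceptions for the two cases (the exception classes and the `call_with_recovery` helper here are hypothetical), recovery might look like this: refresh the token and retry immediately, or back off exponentially when rate limited.

```python
import time


class RateLimited(Exception):
    """The upstream service asked us to slow down."""


class TokenExpired(Exception):
    """The upstream service rejected our credentials as expired."""


def call_with_recovery(request, refresh_token, max_attempts=4,
                       base_delay=0.5, sleep=time.sleep):
    """Run `request`, recovering from expired tokens and rate limits."""
    for attempt in range(max_attempts):
        try:
            return request()
        except TokenExpired:
            refresh_token()  # get new credentials, then retry right away
        except RateLimited:
            sleep(base_delay * 2 ** attempt)  # wait longer on each attempt
    raise RuntimeError(f"gave up after {max_attempts} attempts")
```

The `sleep` parameter is injectable so the behavior can be tested without actually waiting. A mocked demo integration never raises either exception, which is exactly why this code path tends to be missing or untested.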

Third, there is data entropy. Real production data is messy. It has been entered by humans who made typos, by imports that dropped certain fields, and by historical migrations that made tradeoffs nobody remembers anymore. Software that works perfectly on clean demo data can surface dozens of unhandled exceptions when it encounters real-world data for the first time.
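One way to take data entropy seriously is to audit incoming rows and quarantine the ones that cannot be trusted, rather than letting the first bad value raise an unhandled exception. The sketch below does this for a timestamp column. The accepted formats and the `created_at` field name are assumptions for illustration; your own data will have its own list.

```python
from datetime import datetime

# Assumption: these are the formats the real data is known to contain.
KNOWN_FORMATS = ("%Y-%m-%dT%H:%M:%S", "%Y-%m-%d %H:%M:%S", "%m/%d/%Y")


def parse_timestamp(raw):
    """Return a datetime, or None when the value cannot be parsed."""
    if raw is None:
        return None
    text = str(raw).strip()
    if not text:
        return None
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(text, fmt)
        except ValueError:
            continue
    return None


def audit_rows(rows, field="created_at"):
    """Split rows into (clean, quarantined) instead of crashing on bad data."""
    clean, quarantined = [], []
    for row in rows:
        parsed = parse_timestamp(row.get(field))
        if parsed is None:
            quarantined.append(row)
        else:
            clean.append({**row, field: parsed})
    return clean, quarantined
```

Running an audit like this against a real export before the contract is signed tells you how many rows the new system would have to cope with on day one.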

The Organizational Gap That Makes It Worse

The technical issues are real, but there is also a human dimension that amplifies the problem significantly. The people who build and demo the software are almost never the people who deploy and maintain it.

Sales engineers who run demos are experts at the product’s strengths. They are not infrastructure engineers, and they are not familiar with your specific environment, your network topology, or your internal security policies. The people who do the actual deployment are often your own IT team, who may be seeing the software for the first time after the contract is signed.

This gap creates what’s sometimes called the “knowledge cliff,” where deep vendor expertise stops at the point of sale and shallow buyer expertise has to take over. Good documentation can bridge this gap, which is why the best developers treat documentation like source code, and it shows in both their output and their earning potential. But most enterprise software documentation covers only the happy path, for the same reasons the demos do.

What You Can Do Before You Buy

The gap between demo and production is not inevitable. You can close it significantly with a few deliberate steps during the evaluation process.

Demand a proof-of-concept in your environment, not theirs. Any vendor worth working with will agree to this, even if it takes longer. Insist on connecting to your actual data sources, including the messy ones. Ask the vendor to demonstrate what happens when things go wrong, not just when they go right. What does the error message look like? How does the system recover? How does it log failures for debugging?

Ask to speak with a customer who is two years into their deployment, not one who just went live. The first six months of any software deployment have a honeymoon quality. The real experience shows up in year two, when the edge cases have accumulated and the initial implementation team has moved on.

Finally, build in a pilot phase with real production load before you commit fully. A controlled rollout to a subset of users will surface the production-specific issues while you still have time and leverage to address them.
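If you control the rollout yourself, one simple way to pick the pilot subset is to hash each user ID into a stable bucket, so the same users stay in the pilot from one session to the next and the group grows predictably as you raise the percentage. This is a minimal sketch of that idea; the function name and the salt value are hypothetical.

```python
import hashlib


def in_pilot(user_id: str, percent: int, salt: str = "pilot-v1") -> bool:
    """Return True if this user falls inside the pilot percentage."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable value in 0..99
    return bucket < percent
```

Because the bucket depends only on the salt and the user ID, anyone included at 10 percent is still included at 50 percent, which keeps the pilot group's experience continuous as the rollout widens.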

The demo is a promise. Production is a test of whether that promise was made in good faith. The companies that pass that test are the ones worth keeping.