Some engineers write code that only they can understand, and they do it on purpose. Not because they’re bad at their jobs. Not because they ran out of time. Because making yourself the only person who can maintain a system is one of the most reliable career strategies available in software development, and the incentive structures that produce it are almost never addressed.

This isn’t a popular thing to say. The official story is that illegible code is always accidental, a product of deadline pressure, insufficient skill, or organizational dysfunction. But having worked alongside developers across many teams and company sizes, I’m confident that a meaningful subset of hard-to-read code is deliberate. It’s job security, written in Python or Go or whatever the stack happens to be.

Complexity Is Power

Knowledge asymmetry is leverage. If you are the only person who understands how the authentication service works, you cannot be fired without serious disruption. You get pulled into every critical meeting. Your estimates are accepted without scrutiny because no one can check them. Your opinion carries weight in architecture discussions because you have established yourself as the expert in at least one load-bearing system.

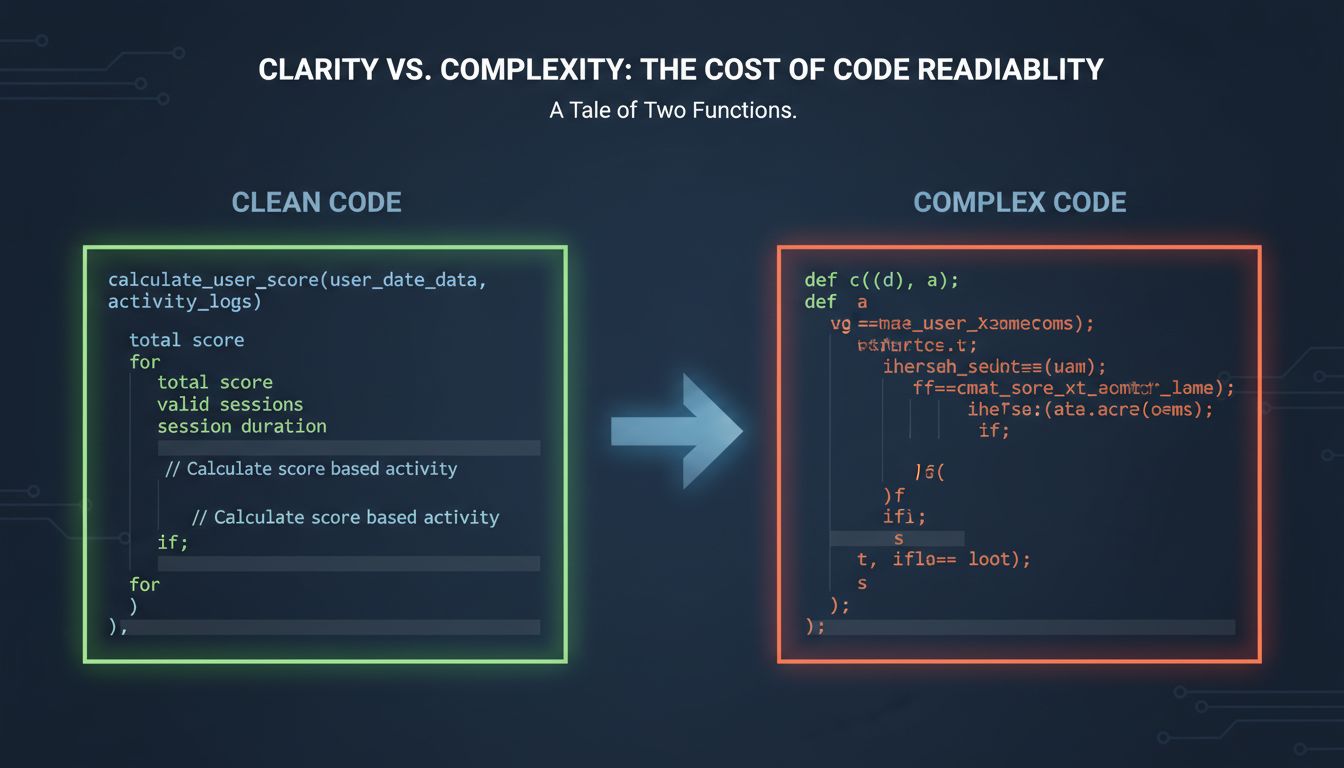

This is not a personality flaw unique to bad engineers. It’s a rational response to environments where job security is uncertain and individual visibility is how you survive. The behavior emerges from the incentive structure, not from malice. A variable named x where authorizationTokenExpiryTimestamp would have been clearer is sometimes just laziness. But a sprawling 600-line function with no comments, deeply nested conditionals, and side effects scattered across three abstraction layers is often a construction. Someone built that. It didn’t grow there.

Obfuscation Has a Specific Texture

Random sloppiness and deliberate obfuscation look different if you know what to look for. Sloppy code tends to be consistently sloppy across a codebase. Deliberate obfuscation tends to be selective. The engineer who writes impenetrable logic in the core billing module often writes perfectly readable utility functions in the shared libraries everyone uses. The opacity is concentrated where it matters most for their position.

Another tell: documentation voids. Well-intentioned but rushed code usually lacks documentation because the developer ran out of time. Deliberately obscured code tends to actively resist documentation. Commit messages are vague. Pull request descriptions explain what changed without explaining why. The function does something important and the name doesn’t tell you what. These aren’t oversights. They’re features of the design.

Consider a function signature like processData(obj, flag, callback), where the parameter names have been kept generic even though the function performs a very specific operation involving payment state transitions. That specificity isn’t hidden by accident.
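To make the contrast concrete, here is a minimal sketch in Python of the same behavior written two ways. Everything in it is hypothetical and exists only for illustration: the Payment and PaymentState types, the settle_payment name, and the assumption that the flag chooses between a refund and a capture. The generic signature is rendered in Python naming as process_data.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class PaymentState(Enum):
    # Hypothetical states, invented for this illustration.
    PENDING = "pending"
    CAPTURED = "captured"
    REFUNDED = "refunded"


@dataclass
class Payment:
    payment_id: str
    state: PaymentState


# Opaque version: nothing in the signature says this touches payment state.
def process_data(obj, flag, callback):
    if flag:
        obj.state = PaymentState.REFUNDED
    else:
        obj.state = PaymentState.CAPTURED
    callback(obj)


# Legible version: identical behavior, but the names carry the domain meaning.
def settle_payment(
    payment: Payment,
    *,
    refund: bool,
    on_state_changed: Callable[[Payment], None],
) -> None:
    """Move a payment to REFUNDED or CAPTURED, then notify the caller."""
    payment.state = PaymentState.REFUNDED if refund else PaymentState.CAPTURED
    on_state_changed(payment)
```

A reviewer reading a call site like settle_payment(payment, refund=True, on_state_changed=notify) learns what is at stake without opening the function body. A call like process_data(p, True, cb) tells them nothing, which is the point.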

The Organizational Conditions That Make This Possible

Deliberate obfuscation requires organizational cover to work. It thrives in a few specific conditions: large codebases where no one person can review everything, teams with high turnover where institutional knowledge is genuinely scarce, and review cultures where the reviewer’s job is to check for bugs rather than to understand and challenge design decisions.

Code review, as practiced in most teams, does almost nothing to prevent this. Reviewers are checking correctness, not comprehensibility. They’re asking “does this work,” not “could someone other than the author maintain this in eighteen months.” Most teams don’t have a shared standard for what readable code even looks like, which means there’s no baseline against which opacity becomes visible as a problem.

This connects to something worth naming: many companies treat code readability as optional polish, something you do after the real work is done. In that environment, writing opaque code carries no real cost. There’s no mechanism that makes you pay for it.

The Long-Term Tax

The engineer who builds their little fortress of inscrutability does impose real costs. Every hour another developer spends reverse-engineering what a system does is an hour not spent building something new. Every time a bug fix requires the original author because no one else can safely modify the code, the team absorbs a scheduling dependency it didn’t need. At scale, with many engineers doing this across many systems, you get the kind of codebase that takes years off the lives of the people who inherit it.

The irony is that this strategy tends to work until it doesn’t, and when it stops working it stops badly. When the engineer who holds all the knowledge leaves, the company faces a knowledge gap that can take years to close. The leverage evaporates the moment they’re not there to exercise it.

The Counterargument

The strongest objection to this thesis is that I’m attributing deliberate intent to what is often just the gradual accumulation of shortcuts under pressure. Code gets complex because requirements change, because technical debt compounds, because the engineer who wrote it was working on six things at once and didn’t have time to refactor. Assuming bad faith where exhaustion explains it is unfair.

This is a real and important point. Most hard-to-read code is not a conspiracy. It’s the product of teams that never scheduled time for the kind of careful, readable work that good code requires. The structural pressure toward unreadable codebases is real and worth taking seriously on its own terms.

But “most” isn’t “all.” The structural explanation doesn’t fully account for the selective nature of the opacity, the specific places where documentation goes missing, the way certain engineers consistently produce code that only they can explain. Some of that is a pattern. And patterns have causes.

What Actually Fixes This

The solution isn’t to accuse engineers of bad faith. It’s to change the conditions that make this strategy rational. Teams that rotate ownership of critical systems make knowledge hoarding structurally pointless. Organizations that evaluate engineers partly on how well they transfer knowledge change the incentive calculation. Code review standards that explicitly ask “would a new team member understand this in six months” make opacity visible as a deficiency rather than a neutral style choice.
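One rough way to see where rotation would matter most is to measure how concentrated authorship is per file. The sketch below is an assumption-laden illustration, not an established metric: it takes a list of (path, author) pairs, which you would have to extract from your own version control history, and reports the top author's share of commits for each path. The sample paths and author names are invented.

```python
from collections import Counter, defaultdict


def ownership_concentration(commits):
    """Map each path to (top_author, share of that path's commits by them)."""
    authors_by_path = defaultdict(Counter)
    for path, author in commits:
        authors_by_path[path][author] += 1

    result = {}
    for path, counts in authors_by_path.items():
        top_author, top_count = counts.most_common(1)[0]
        result[path] = (top_author, top_count / sum(counts.values()))
    return result


# Hypothetical commit history: (path, author) pairs.
sample_commits = [
    ("billing/core.py", "alice"),
    ("billing/core.py", "alice"),
    ("billing/core.py", "alice"),
    ("billing/core.py", "alice"),
    ("shared/utils.py", "alice"),
    ("shared/utils.py", "bob"),
    ("shared/utils.py", "carol"),
    ("shared/utils.py", "bob"),
]

for path, (author, share) in sorted(ownership_concentration(sample_commits).items()):
    print(f"{path}: {author} wrote {share:.0%} of commits")
```

A high share on a load-bearing file is not evidence of bad faith. It only marks the places where a single departure would hurt most, and so where rotating ownership pays off first.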

The engineers who write obfuscated code aren’t villains. They’re people responding to environments that reward individual indispensability and punish the kind of collaborative, legible work that actually scales. Fix the environment and most of the behavior goes away. Leave the environment as it is and you’ll keep getting exactly the code you incentivize.