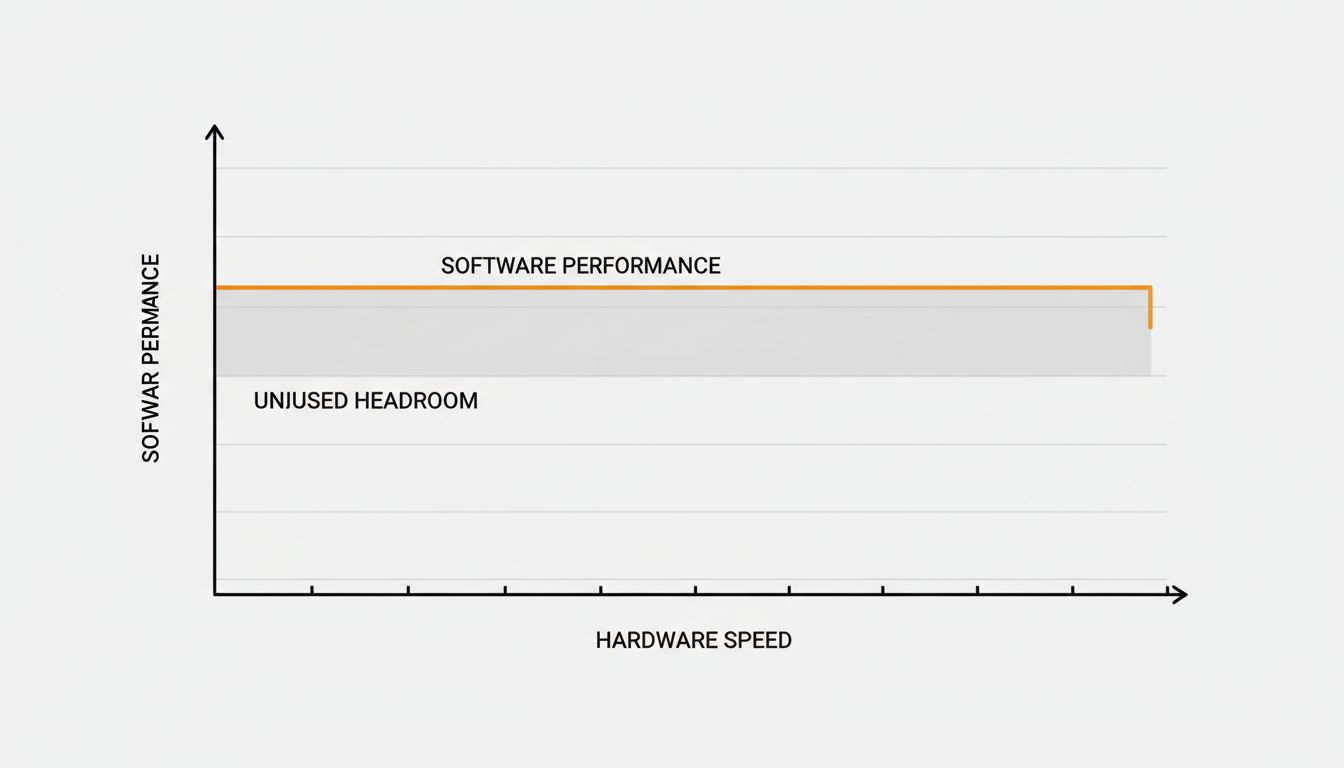

The conventional wisdom is that software and hardware improve together, a rising tide of capability that lifts all boats. The reality, if you spend time profiling real applications on modern machines, is more uncomfortable: software frequently gets slower as the hardware it runs on gets faster. Not in every case, not uniformly, but often enough that it should embarrass us as an industry.

The reason isn’t mysterious. It’s a predictable consequence of incentives, and those incentives are structural.

Abundant Resources Are an Invitation to Stop Thinking

When a developer in the late 1980s wrote code for a machine with 640KB of usable RAM, every allocation was a negotiation. You thought hard about data structures because you had to. The constraint imposed discipline.

Modern developers work on machines with 32GB of RAM and processors that can execute billions of instructions per second. The constraint is gone, and with it, a significant chunk of the pressure to be thoughtful. Why write a careful O(n log n) sort when the naive O(n²) version returns in 40 milliseconds instead of 2? The user won’t notice. Ship it.
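
The gap between those two choices is not fixed, either. It widens as the input grows. Here is a minimal sketch that counts comparisons instead of measuring wall-clock time, so the result doesn't depend on the machine. The function names are mine, and insertion sort and merge sort are simply stand-ins for "the naive version" and "the careful version".

```python
import random


def insertion_sort(items):
    """Naive O(n^2) sort. Returns (sorted_list, comparison_count)."""
    out = list(items)
    comparisons = 0
    for i in range(1, len(out)):
        j = i
        while j > 0:
            comparisons += 1
            if out[j - 1] <= out[j]:
                break
            out[j - 1], out[j] = out[j], out[j - 1]
            j -= 1
    return out, comparisons


def merge_sort(items):
    """O(n log n) sort. Returns (sorted_list, comparison_count)."""
    if len(items) <= 1:
        return list(items), 0
    mid = len(items) // 2
    left, left_count = merge_sort(items[:mid])
    right, right_count = merge_sort(items[mid:])
    merged = []
    comparisons = left_count + right_count
    i = j = 0
    while i < len(left) and j < len(right):
        comparisons += 1
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged, comparisons


def comparison_ratio(n, seed=0):
    """How many times more comparisons the naive sort needs on n random values."""
    rng = random.Random(seed)
    data = [rng.random() for _ in range(n)]
    _, quadratic = insertion_sort(data)
    _, linearithmic = merge_sort(data)
    return quadratic / linearithmic


if __name__ == "__main__":
    for n in (100, 1000):
        print(n, round(comparison_ratio(n), 1))
```

Running it shows the ratio climbing as `n` grows. The naive version that was "fine" on the test data is the one that falls over when a real user shows up with ten times as many rows.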

This isn’t laziness in the pejorative sense. It’s rational behavior under different constraints. The problem is that “the user won’t notice” compounds. Every layer of a modern application stack is making that same calculation independently. The database ORM issues an extra join because the query planner will handle it. The frontend framework re-renders the whole component tree because reconciliation is fast enough. The build tool loads dependencies into memory without checking whether they’re needed. Each decision is locally defensible. Collectively they produce software that requires a $2,000 machine to run a to-do list application.
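
To put rough numbers on the compounding, here is a back-of-the-envelope sketch. It assumes each layer multiplies the cost of the work beneath it, which is a simplification, and the 20% figures are made up for illustration, not measurements of any real stack.

```python
def compounded_slowdown(layer_overheads):
    """Combine per-layer fractional overheads (0.2 means 20%) multiplicatively."""
    factor = 1.0
    for overhead in layer_overheads:
        factor *= 1.0 + overhead
    return factor


if __name__ == "__main__":
    # Hypothetical stack: six layers, each one "only" 20% slower than it could be.
    print(round(compounded_slowdown([0.2] * 6), 2))  # roughly 2.99x overall
```

No single layer in that hypothetical stack did anything unreasonable, and the application is still about three times slower than it needed to be.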

The Abstraction Tax Is Real and Nobody Is Accounting for It

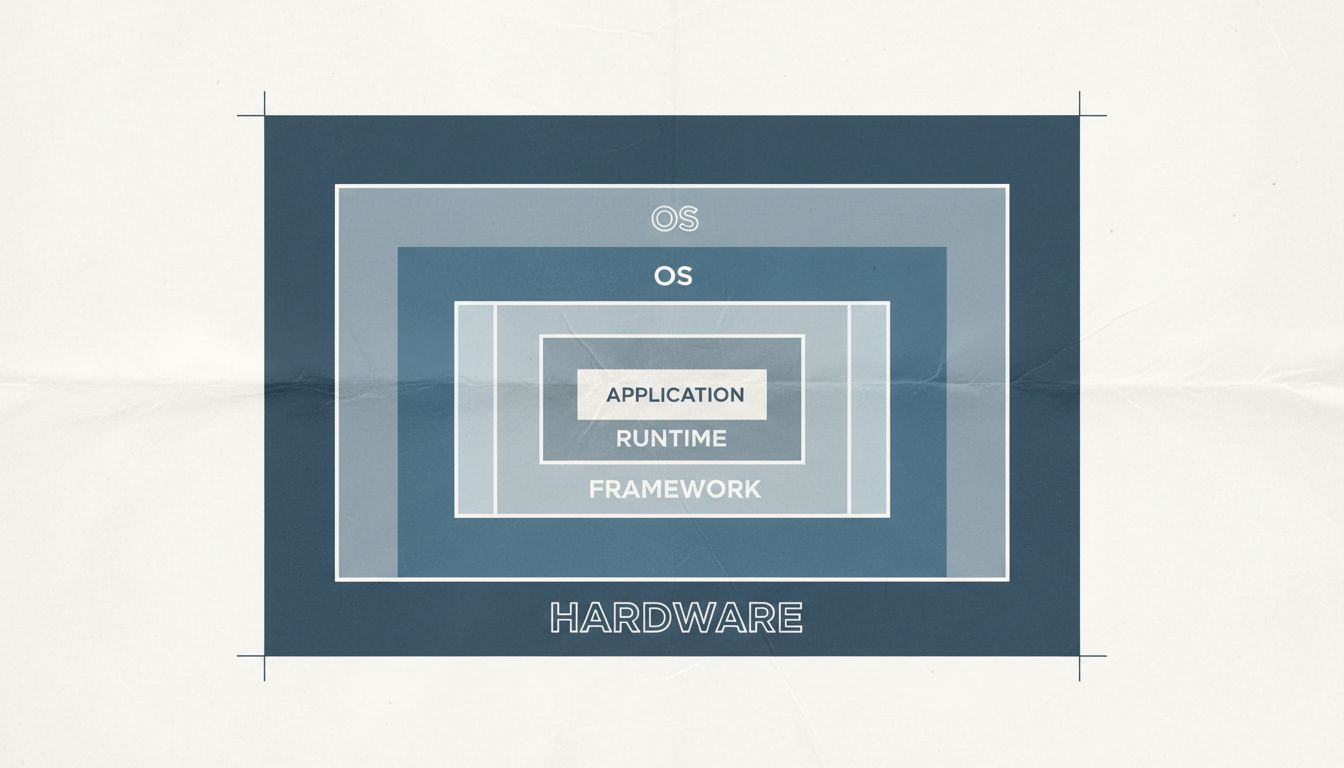

Every abstraction layer has a cost. This is not a controversial statement among people who profile code for a living. The controversy is in how much that cost matters.

Consider what happens when you write a web application in a modern JavaScript framework. Your code runs in a JavaScript engine (V8, SpiderMonkey, take your pick), which is itself a program running on an operating system, which is arbitrating access to physical hardware. Before your business logic executes a single instruction, you’ve paid the cost of the browser process, the JavaScript runtime’s garbage collector, the virtual DOM diffing algorithm, and any polyfills covering browser inconsistencies. Each abstraction layer was added to solve a real problem. Each one also costs CPU cycles and memory that could have gone to user-visible work.

The problem is that abstractions are adopted wholesale, not selectively. A framework that adds 200ms of startup latency might be perfectly appropriate for a complex application with thousands of interactive components. It is spectacularly inappropriate for a static documentation site. But developers reach for the same toolchain regardless, because the toolchain is familiar and well-documented, and because the hardware is fast enough that the startup penalty doesn’t feel catastrophic during development on a MacBook Pro with 16 cores.

It feels catastrophic on the user’s older machine, or on a mobile device with throttled CPU performance, or at the tail end of a performance distribution where you’re serving users in regions with lower-spec hardware. The abstraction tax is regressive: it hits users with worse hardware hardest.

The Development Environment Is a Lie

Here’s something I’ve watched happen on multiple teams: developers benchmark on their own machines, declare performance acceptable, and ship. The CI pipeline doesn’t run performance regression tests because those are hard to write and maintain. Production is running on beefy servers or modern client hardware, so no alerts fire. Everyone moves on.

The problem is that the development environment is systematically different from the environments where software actually runs. Developer machines are fast, unloaded, and have SSD-backed file systems. Production has different characteristics: higher load, more memory pressure, more cache misses. And end-user machines span a huge range of capability.

This is why the “reboot fixes it” phenomenon exists in its current form. Memory bloat and resource leaks that are invisible on a freshly started developer machine with 32GB of RAM become obvious over time on a user’s machine, where the application is competing for resources with everything else they’re running. The developer never saw the degraded state because they restart their environment constantly.
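
You don't have to wait for a user's machine to surface that kind of slow growth. Here is a minimal sketch, using Python's standard `tracemalloc` module, that runs an operation many times and reports how much memory is still held afterward. `memory_growth`, `leaky_step`, and `clean_step` are hypothetical names for illustration, and the "leak" is a deliberately silly cache that never evicts anything.

```python
import tracemalloc


def memory_growth(step, iterations=1000):
    """Return net bytes still allocated after calling step() `iterations` times."""
    tracemalloc.start()
    step()  # warm-up call so one-time allocations are not counted as growth
    before, _peak = tracemalloc.get_traced_memory()
    for _ in range(iterations):
        step()
    after, _peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return after - before


_cache = []


def leaky_step():
    # Hypothetical leak: every call parks 1 KiB in a module-level list forever.
    _cache.append(bytearray(1024))


def clean_step():
    # The buffer is released as soon as the function returns.
    buf = bytearray(1024)
    return len(buf)


if __name__ == "__main__":
    print("leaky:", memory_growth(leaky_step), "bytes retained")
    print("clean:", memory_growth(clean_step), "bytes retained")
```

The point is the shape of the check, not the tool. Run the operation long enough to simulate a session that never restarts, and look at what's left over.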

The Counterargument

The reasonable pushback here is that software has gotten dramatically more capable, not just slower. Word processors now have real-time collaboration, version history, AI-assisted grammar checking, and cross-device sync. Comparing their performance to WordPerfect 5.1 is unfair because they’re not doing the same thing.

This is true, and I don’t want to romanticize the era of extremely constrained computing. Writing to fit in 640KB was not fun. Some of the apparent slowdown is genuine capability being paid for in compute.

But this defense doesn’t cover everything. Many applications have accreted features that most users never touch, and those features carry performance costs that everyone pays. The telemetry pipelines. The A/B testing frameworks. The feature flag evaluation that happens on every request. These aren’t user-visible capabilities; they’re organizational infrastructure that got embedded in the product. Users are subsidizing product teams’ instrumentation with their CPU cycles.

The Position Is Simple: Faster Hardware Is Not a Budget for Sloppiness

Software runs slower on faster hardware because the industry treats hardware improvements as an opportunity to defer optimization indefinitely. This is a choice, not an inevitability. The developers who built SQLite, which runs reliably on devices with only a few hundred kilobytes of available memory, made different choices, and nothing forced them to: they were under no more pressure than anyone else. The engineers who keep Nginx’s performance profile stable across versions are making active decisions about what discipline looks like.

Faster hardware is genuinely useful. It should allow software to do more things well. Instead, it too often allows software to do the same things badly and get away with it. The accountability mechanism that resource scarcity provided has been removed, and we haven’t replaced it with anything.

Profile your code. Test on low-end hardware. Treat a performance regression as a bug. These are not exotic practices. They’re just the habits that disappeared when the hardware got fast enough to make them feel optional.
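
To show how little machinery "treat a performance regression as a bug" needs, here is a minimal sketch of a performance budget check that could run in CI. The names `median_runtime` and `check_budget` are hypothetical, and the budget is something a team would have to choose for itself, ideally from measurements on the slowest hardware it intends to support.

```python
import statistics
import time


def median_runtime(fn, repeats=7):
    """Median wall-clock seconds over several runs, to dampen one-off noise."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def check_budget(fn, budget_seconds, repeats=7):
    """Raise AssertionError if fn's median runtime exceeds the budget."""
    observed = median_runtime(fn, repeats)
    if observed > budget_seconds:
        raise AssertionError(
            f"performance regression: median {observed:.4f}s "
            f"exceeds budget {budget_seconds:.4f}s"
        )
    return observed


if __name__ == "__main__":
    # Hypothetical workload standing in for a real code path.
    check_budget(lambda: sorted(range(100_000), reverse=True), budget_seconds=0.5)
    print("within budget")
```

Wall-clock checks like this are noisy on shared CI runners, so the budget needs headroom. Even a loose one catches the change that makes something ten times slower.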