The Feature Nobody Asked For Is Performing Perfectly

You’ve probably dismissed a dozen AI features in the last six months. The automated summary you closed immediately. The smart reply you never used. The AI assistant button that appeared in your toolbar overnight, uninvited. Your instinct may be that these are half-baked products, rushed out by teams that didn’t think hard enough about what users actually want.

That instinct is wrong, or at least incomplete. Many of these features aren’t failed attempts at building something useful. They’re successful executions of a strategy that has almost nothing to do with you.

The reasons companies keep shipping AI tools that users routinely ignore, distrust, or actively resent reveal something important about how tech product decisions actually get made. They should also change how you interpret the AI announcements that keep landing in your inbox.

The Investor Signaling Problem

When a publicly traded tech company ships an AI feature, the primary audience often isn’t the user base. It’s the analyst community and institutional investors who will be on the earnings call in three months asking, “What is your AI strategy?”

This isn’t cynical speculation. Microsoft’s rollout of Copilot across its product suite is a useful case study. Many of the features arrived in products where users hadn’t asked for them, worked inconsistently, and required significant behavior change to use at all. But the market’s treatment of Microsoft’s stock during that period reflected investors’ appetite for any company that could demonstrate AI integration. The features’ job was partly to answer a question analysts were asking, not one users were asking.

You see this pattern repeatedly. A company ships an AI feature, mentions it prominently in earnings commentary, and the feature quietly fades from active development six months later while the company moves on to the next announcement cycle. The feature wasn’t abandoned because it failed. It completed its actual mission.

The Positioning Tax You Pay Without Knowing It

There’s a second force at work, less tied to quarterly earnings and more about long-term competitive positioning. Tech companies are terrified of being categorized as AI laggards the way companies in the 2010s were terrified of being called “not mobile-first.”

That fear is rational. Perception shapes hiring (good engineers want to work on cutting-edge problems), it shapes partnership discussions, and it shapes how enterprise customers think about vendor longevity. A product suite that can’t point to any AI integration starts looking legacy, regardless of whether adding that integration would actually improve anything.

So companies ship features not because they’ve figured out how to make those features trustworthy, but because presence in the category matters more than quality of execution right now. You could call this a positioning tax: the cost of staying visible in a narrative that’s moving faster than the underlying technology.

The uncomfortable implication is that the features you’re dismissing as low-quality are sometimes intentionally under-baked. Shipping something imperfect keeps a company in the conversation. Waiting until it has something genuinely trustworthy means arriving late to a conversation that has already moved on.

Why Trust Is Actually Hard to Build at Scale

Here’s the part that’s worth sitting with, because it’s not entirely cynical. Building AI features that users genuinely trust is a legitimately hard problem, and most companies are not being fully dishonest when they claim they’re working toward it.

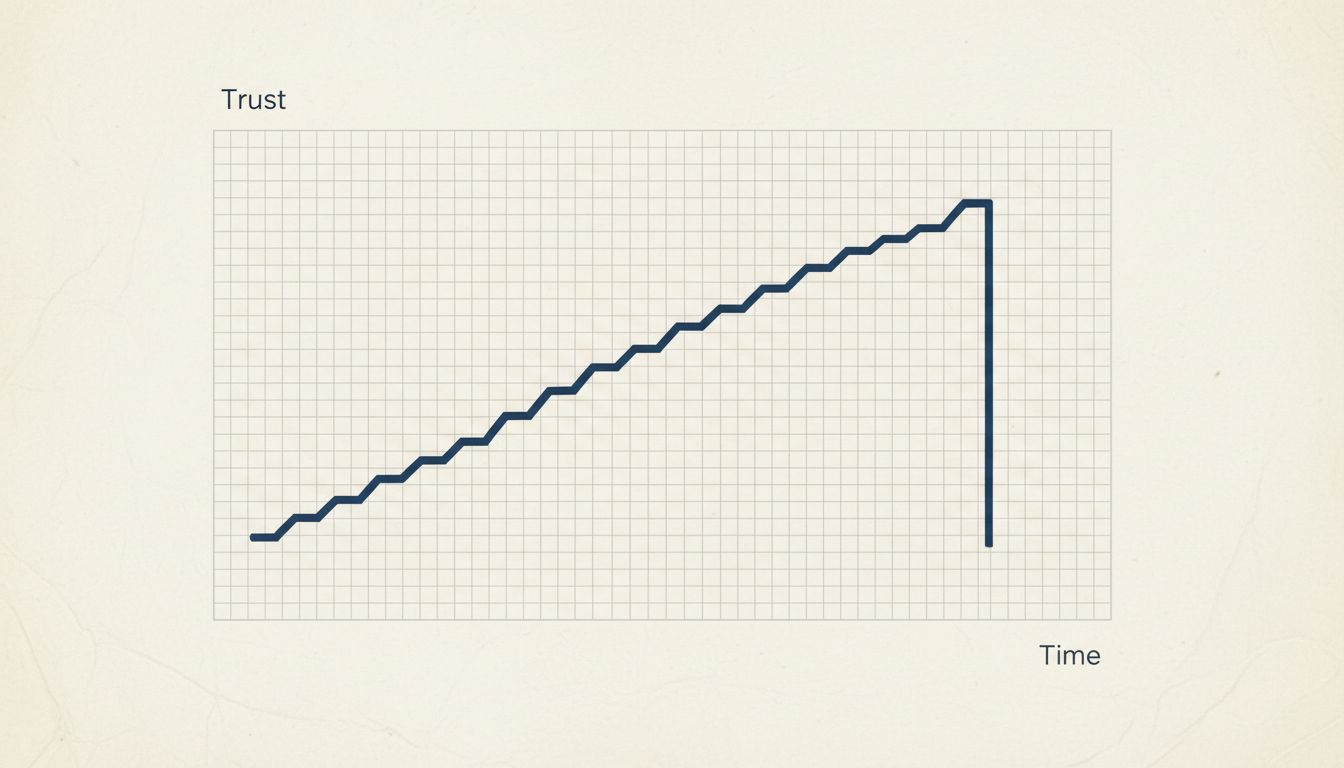

Trust in software usually builds through repetition. You use a feature, it works, you use it again. Over hundreds of interactions the feature earns a place in your workflow. AI features break this model in a specific way: they fail unpredictably. A calculator that gives you the wrong answer once stops being a calculator you trust. An AI summarizer that occasionally hallucinates is worse, because a fabricated summary reads just as fluently as an accurate one, and you can’t predict which documents will trigger the failure. So a single hallucination doesn’t just fail on that document. It contaminates your trust in every document the tool has ever summarized for you.

This is the core design tension that companies are trying to work around, and most haven’t solved it yet. The way many AI assistants hedge and express uncertainty is partly a response to exactly this problem: hedged outputs are less useful, but they are also less likely to be confidently and catastrophically wrong.

The result is a product category in an awkward adolescence: mature enough to ship widely, not mature enough to earn consistent trust, but with competitive and financial pressures that make waiting impossible. That’s not an excuse for the proliferation of mediocre AI features, but it is an explanation.

What You Can Actually Do With This

If you’re a user, the practical takeaway is calibration. When a new AI feature lands in your tools, the useful question isn’t “why didn’t they build this better?” It’s “who was this feature’s primary audience?” That reframe helps you spend less energy being frustrated by features that were never optimized for your satisfaction in the first place.

When a feature genuinely seems designed with your workflow in mind, that’s a meaningful signal. It usually means a product team had enough internal cover to prioritize user value over announcement value, which is harder to achieve than it sounds.

If you’re building products, the calculus is more specific. There is real competitive cost to sitting out AI integration entirely. There is also real cost to shipping features that actively erode user trust, because trust is the thing that’s hardest to rebuild once lost. The companies getting this right are generally the ones treating their first AI features as trust-building exercises rather than positioning exercises: small scope, high accuracy, low stakes. They’re willing to ship something narrow that works rather than something broad that mostly works.

The companies getting it wrong are shipping to the press release and hoping the product catches up. Some of the time, it does. Most of the time, you end up with another AI button you’ve trained yourself to ignore.

That button isn’t an accident. It’s the outcome of a set of incentives that were never really pointed at making your day better. Knowing that doesn’t fix anything, but it does mean you can stop taking it personally.