The Settings Menu Is a Product Decision, Not a Utility

Most developers think of settings menus as the boring part of the job. You build the feature, then you wire up the toggles. The UX team handles the layout. Ship it and move on. This framing misses something important: for product managers optimizing engagement metrics or data collection rates, the settings menu is prime real estate.

The term for the designs that exploit it is “dark patterns,” coined by UX designer Harry Brignull in 2010. The definition has evolved, but the core idea is consistent: interface designs that work against the user’s interests while appearing to serve them. Dark patterns in settings menus are a specific subspecies. They don’t trick you into clicking a button you didn’t mean to click. They work more slowly, by making the choices you’d actually want harder to find, harder to understand, and harder to complete than the choices that benefit the company.

This isn’t a fringe concern. In 2018 the Norwegian Consumer Council published a detailed analysis called “Deceived by Design” (later updated) examining Facebook, Google, and Windows 10. It found systematic use of design techniques that steered users toward privacy-invasive options through interface choices, not just persuasive copy. European regulators have since cited the same kinds of design asymmetry in enforcement actions over consent. This is documented, studied behavior, not conspiracy theory.

How Friction Gets Weaponized

The most reliable dark pattern in settings menus is asymmetric friction. The option the company wants you to choose is one click. The option you might want is buried three menus deep, requires reading a paragraph of legalese, and resets itself after an app update.

Consider how cookie consent banners actually work. The “Accept All” button is large, prominently colored, and positioned for the natural reading path. “Manage Preferences” leads to a page where every vendor is opted in by default, the toggle controls are small, and disabling everything requires individual action on potentially dozens of entries. Some implementations require you to scroll past a long list before you can save your choices. Behavioral economists call the way options are arranged and presented “choice architecture,” and here the architecture is built to wear you down. You’re not being lied to. You’re being exhausted into compliance.
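The asymmetry in a consent flow can be made measurable. What follows is a minimal sketch, not an established audit method: `ConsentPath`, `friction_score`, and the weights inside it are all hypothetical, and the click and word counts are invented to resemble the banner described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConsentPath:
    """Hypothetical description of one route through a consent flow."""
    name: str
    clicks: int         # interactions needed to finish this path
    screens: int        # distinct screens or dialogs the user passes through
    words_to_read: int  # copy the user must get through before completing


def friction_score(path: ConsentPath) -> float:
    # Illustrative weights only; a real audit would calibrate them
    # against observed completion times or drop-off rates.
    return path.clicks + 2 * path.screens + path.words_to_read / 50


def friction_ratio(accept: ConsentPath, reject: ConsentPath) -> float:
    """How many times more effort rejecting takes than accepting."""
    return friction_score(reject) / friction_score(accept)


# Invented numbers for a banner with one-click accept and per-vendor reject.
accept_all = ConsentPath("accept_all", clicks=1, screens=1, words_to_read=25)
reject_all = ConsentPath("reject_all", clicks=14, screens=3, words_to_read=400)

ratio = friction_ratio(accept_all, reject_all)
print(f"Rejecting takes about {ratio:.1f}x the effort of accepting")
```

With these made-up inputs the ratio comes out to 8.0. The exact number matters less than the habit of tracking it: a ratio near 1 means the two paths cost about the same, and anything well above 1 is the asymmetry made visible.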

Another pattern is what researchers call “confirmshaming,” where the decline option is written to make you feel foolish for choosing it. “No thanks, I don’t want to save money” as the opt-out label is a well-known example. In settings menus, this manifests as labeling the privacy-protective option with something like “Limited Experience” rather than “Private Mode.” The choice itself is neutral. The framing loads the dice.

Default states are the most powerful lever of all. Behavioral economists have studied default effects for decades, and the conclusion is unambiguous: most people don’t change defaults. When a platform ships with “Share data to improve products” pre-checked, a substantial majority of users will never uncheck it, not because they actively want to share data, but because inaction is the path of least resistance. The company frames this as user preference. It’s closer to manufactured consent.
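One way to keep defaults honest is to make them reviewable in code. The sketch below assumes a hypothetical `Setting` record with a `shares_user_data` flag that someone on the team has to set deliberately; the setting names are invented. It flags any data-sharing setting that is switched on before the user has done anything.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Setting:
    """Hypothetical settings-schema entry."""
    key: str
    default_on: bool
    shares_user_data: bool  # True if enabling it sends user data to the company


# Invented example schema.
SETTINGS = [
    Setting("dark_mode", default_on=False, shares_user_data=False),
    Setting("share_usage_data", default_on=True, shares_user_data=True),
    Setting("personalized_ads", default_on=True, shares_user_data=True),
    Setting("crash_reports", default_on=False, shares_user_data=True),
]


def preselected_sharing(settings: list[Setting]) -> list[str]:
    """Return keys of data-sharing settings that are on before the user acts."""
    return [s.key for s in settings if s.shares_user_data and s.default_on]


flagged = preselected_sharing(SETTINGS)
print(flagged)  # ['share_usage_data', 'personalized_ads']
```

A check like this could run in CI, so that flipping a data-sharing default to "on" requires someone to explicitly approve it rather than slipping through in a layout change.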

A/B Testing Made This Worse

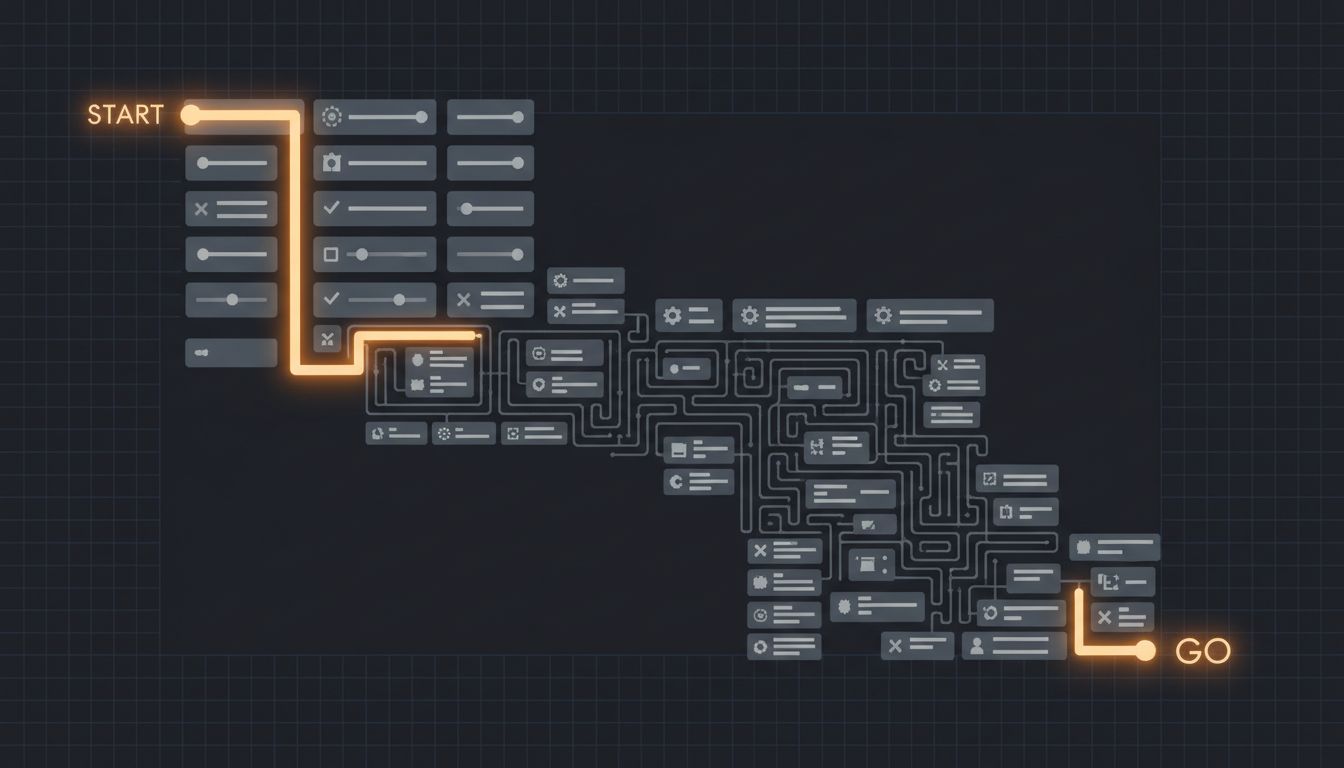

Here’s where the engineering process becomes complicit. Dark patterns don’t usually emerge from a single bad decision by a cynical product manager. They emerge from optimization loops. A team runs an A/B test comparing two versions of a consent flow. Version B, which uses smaller text and buries the opt-out, produces 12% higher opt-in rates. Version B ships. Nobody in that meeting made a decision to deceive users. They made a decision to ship the higher-performing variant, which happened to be the more deceptive one.

This is a structural problem. When your success metric is opt-in rate and not user trust, the optimization process will find manipulative patterns on its own. You don’t need intent. You need misaligned incentives and enough test cycles, and the friction will self-assemble around whatever choice benefits the business.
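The difference between the two success metrics can be shown in a few lines. This is a sketch under assumptions: `Variant`, `pick_by_opt_in`, and `pick_with_guardrail` are hypothetical names, click count stands in for friction, and the rates are invented (variant B's 0.56 is a 12% relative lift over A's 0.50, which is one reading of the figure above).

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Variant:
    """Hypothetical A/B test result for one consent-flow design."""
    name: str
    opt_in_rate: float  # fraction of test users who opted in
    accept_clicks: int  # interactions needed to opt in
    reject_clicks: int  # interactions needed to opt out


def pick_by_opt_in(variants: Sequence[Variant]) -> Variant:
    """The naive loop: ship whatever maximizes opt-in rate."""
    return max(variants, key=lambda v: v.opt_in_rate)


def pick_with_guardrail(
    variants: Sequence[Variant], max_extra_clicks: int = 0
) -> Optional[Variant]:
    """Only consider variants where declining costs at most
    `max_extra_clicks` more interactions than accepting."""
    eligible = [
        v for v in variants
        if v.reject_clicks - v.accept_clicks <= max_extra_clicks
    ]
    if not eligible:
        return None  # no variant passes; nothing should ship on this metric
    return max(eligible, key=lambda v: v.opt_in_rate)


variant_a = Variant("A", opt_in_rate=0.50, accept_clicks=1, reject_clicks=1)
variant_b = Variant("B", opt_in_rate=0.56, accept_clicks=1, reject_clicks=4)

print(pick_by_opt_in([variant_a, variant_b]).name)       # B
print(pick_with_guardrail([variant_a, variant_b]).name)  # A
```

The guardrail does not require anyone to argue about intent. It changes which variants are allowed to compete, so the optimization loop can no longer win by burying the opt-out.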

The pattern also compounds across products. Once one major platform ships a dark-pattern consent flow that survives legal review, competitors have both a template and a competitive justification. Diverging from the pattern means accepting lower opt-in rates than your competitors, which is a hard argument to win in a planning meeting.

What Regulators Are Actually Doing About It

Regulation has been slow but is becoming more specific. The GDPR requires that consent be “freely given, specific, informed and unambiguous,” which sounds comprehensive until you realize that enforcement is inconsistent and national data protection authorities have very different levels of resourcing. In decisions announced in January 2022, the French CNIL fined Google €150 million and Facebook €60 million for making it harder to reject cookies than to accept them, citing exactly the asymmetric friction pattern described above. The CNIL acted under France’s cookie rules, which rely on the GDPR’s definition of consent. That’s a meaningful precedent.

California’s CPRA, which amended the CCPA, includes provisions specifically targeting dark patterns in privacy choices. It defines a dark pattern as “a user interface designed or manipulated with the substantial effect of subverting or impairing user autonomy, decisionmaking, or choice,” and it states that agreement obtained through dark patterns does not constitute consent. Whether enforcement matches the statute remains an open question, but the legal framework now has vocabulary for the problem.

The FTC has also been more aggressive. Its 2022 staff report on dark patterns, “Bringing Dark Patterns to Light,” explicitly named settings-related manipulation and signaled potential rulemaking. Companies paying attention to regulatory risk have started auditing their own flows, which is a different motivation from user welfare but produces some of the same outputs.

What You Can Actually Do as a Developer

If you build products, you will eventually be asked to implement something in this category. It might be framed as “optimizing the onboarding flow” or “improving feature adoption,” but if the proposed change makes the company’s preferred option easier and the user’s preferred option harder, you’re looking at a dark pattern.

The useful question to ask in those design reviews is: if we reversed the defaults and symmetrized the friction, what would opt-in rates look like? If the honest answer is “much lower,” that tells you something important about whether the current design is actually serving users or just exploiting inertia. That’s not always enough to change a decision, but naming the dynamic clearly is the first step.
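The reversed-defaults question can be turned into back-of-the-envelope arithmetic. The toy model below is an assumption, not a measurement: it supposes some share of users make a deliberate choice regardless of the default, everyone else keeps whatever was pre-selected, and friction is the same in both directions. The function name and all input values are hypothetical.

```python
def opt_in_rate(active_share: float, prefers_sharing: float, default_on: bool) -> float:
    """Toy model: `active_share` of users choose deliberately, and
    `prefers_sharing` of those opt in. Everyone else keeps the default."""
    deliberate_opt_ins = active_share * prefers_sharing
    passive_opt_ins = (1 - active_share) if default_on else 0.0
    return deliberate_opt_ins + passive_opt_ins


# Hypothetical inputs, chosen only to make the arithmetic visible.
shipped = opt_in_rate(active_share=0.2, prefers_sharing=0.3, default_on=True)
reversed_default = opt_in_rate(active_share=0.2, prefers_sharing=0.3, default_on=False)

print(f"default on:  {shipped:.0%}")
print(f"default off: {reversed_default:.0%}")
print(f"attributable to inertia: {shipped - reversed_default:.0%}")
```

In this model the gap between the two rates is exactly the share of users who never touched the setting. If a team reports the default-on figure as evidence of user preference, the default-off figure is the one that actually measures it.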

For users, the practical advice is tedious but real: treat default settings as hostile until proven otherwise, especially for anything involving data sharing, location access, or notification permissions. These defaults were almost certainly not chosen with your preferences in mind. They were chosen based on what the company needed and what a test population failed to change.

The settings menu feels like an afterthought. For the teams optimizing it, it’s anything but.