The Beta Label Is Doing a Lot of Work

When Google kept Gmail in “beta” for five years after launch, most observers assumed it was corporate caution, legal cover, or a quirky inside joke. It was none of those things. The beta label was a permission structure, a way to collect behavioral data at massive scale while managing user expectations about quality. The software worked well enough to be useful. It just wasn’t finished, and Google knew exactly what it still needed to learn.

That logic hasn’t changed. What has changed is how explicit companies have become about it. The modern beta release, especially in AI and consumer software, is less a development milestone than a research instrument. The brokenness isn’t incidental. It’s the point.

What Internal Testing Actually Cannot Do

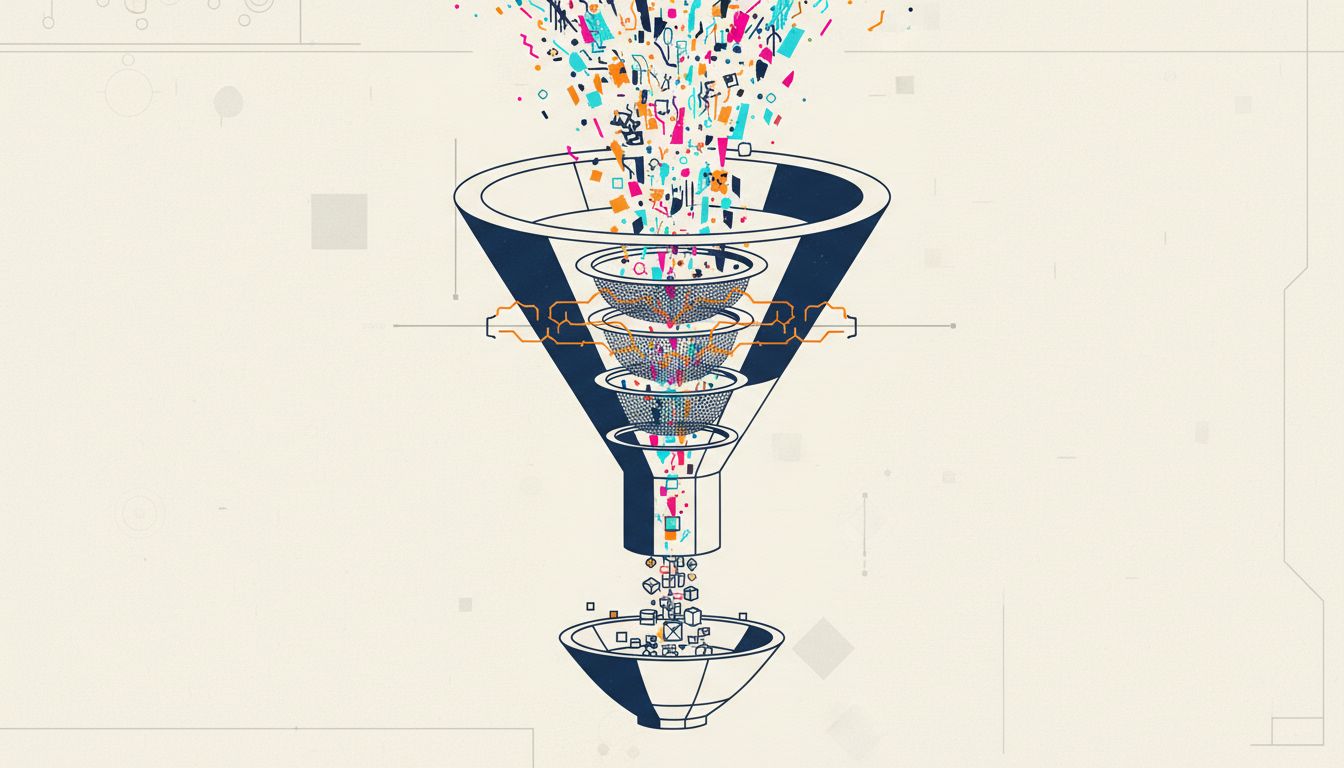

The standard explanation for releasing buggy software is that you can’t anticipate every use case in-house. That’s true, but it undersells the actual problem. Internal testing is structurally limited in ways that no amount of engineering rigor can fix.

Test environments are controlled. Real users are not. They run software on machines with conflicting drivers, unusual regional character sets, accessibility tools, corporate proxies, and browser extensions that interact with your code in ways you’d never think to simulate. They try to accomplish goals your product team never imagined. They make mistakes that reveal how your error-handling actually behaves under pressure, not how it behaves when a QA engineer deliberately triggers a known edge case.
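To make the scale of that problem concrete, here is a rough back-of-the-envelope sketch. Every number in it is a made-up assumption for illustration, not a measurement, and the dimension names are hypothetical. It multiplies out a handful of environment variables and shows how small a slice of the result a test lab could plausibly cover.

```python
from math import prod

# Hypothetical counts of distinct values per environment dimension.
# These figures are illustrative assumptions, not real-world data.
ENVIRONMENT_DIMENSIONS = {
    "driver_versions": 40,
    "locales_and_character_sets": 30,
    "accessibility_tools": 8,
    "proxy_configurations": 12,
    "browser_extension_sets": 200,
}


def total_configurations(dimensions: dict) -> int:
    """Number of distinct environments if every dimension varies independently."""
    return prod(dimensions.values())


def coverage_fraction(dimensions: dict, configs_tested: int) -> float:
    """Share of all possible environments covered by a given number of test setups."""
    total = total_configurations(dimensions)
    return min(configs_tested, total) / total


if __name__ == "__main__":
    total = total_configurations(ENVIRONMENT_DIMENSIONS)
    # Assume an in-house lab that maintains 500 distinct test setups.
    lab = coverage_fraction(ENVIRONMENT_DIMENSIONS, 500)
    print(f"possible environments: {total:,}")
    print(f"covered by 500 lab setups: {lab:.6%}")
```

The independence assumption overstates things, since many combinations never occur in practice, but the direction of the result holds: the space grows multiplicatively while a test lab grows linearly.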

More importantly, internal testers know what the software is supposed to do. Real users only know what they’re trying to do. That gap produces failure modes that stay invisible until large numbers of people are using something in production.

The result is that a beta release to even a modest user base generates failure data that would take years to synthesize internally. For AI products specifically, the gap is even wider. AI models can give different answers to the same question, and the input space is so vast that internal red-teaming can only ever sample a tiny fraction of it. Real users probe the edges constantly, and not because they’re trying to break anything.

The Economics of Crowdsourced QA

There’s a harder-edged version of this story that doesn’t get discussed enough: beta programs are largely free labor.

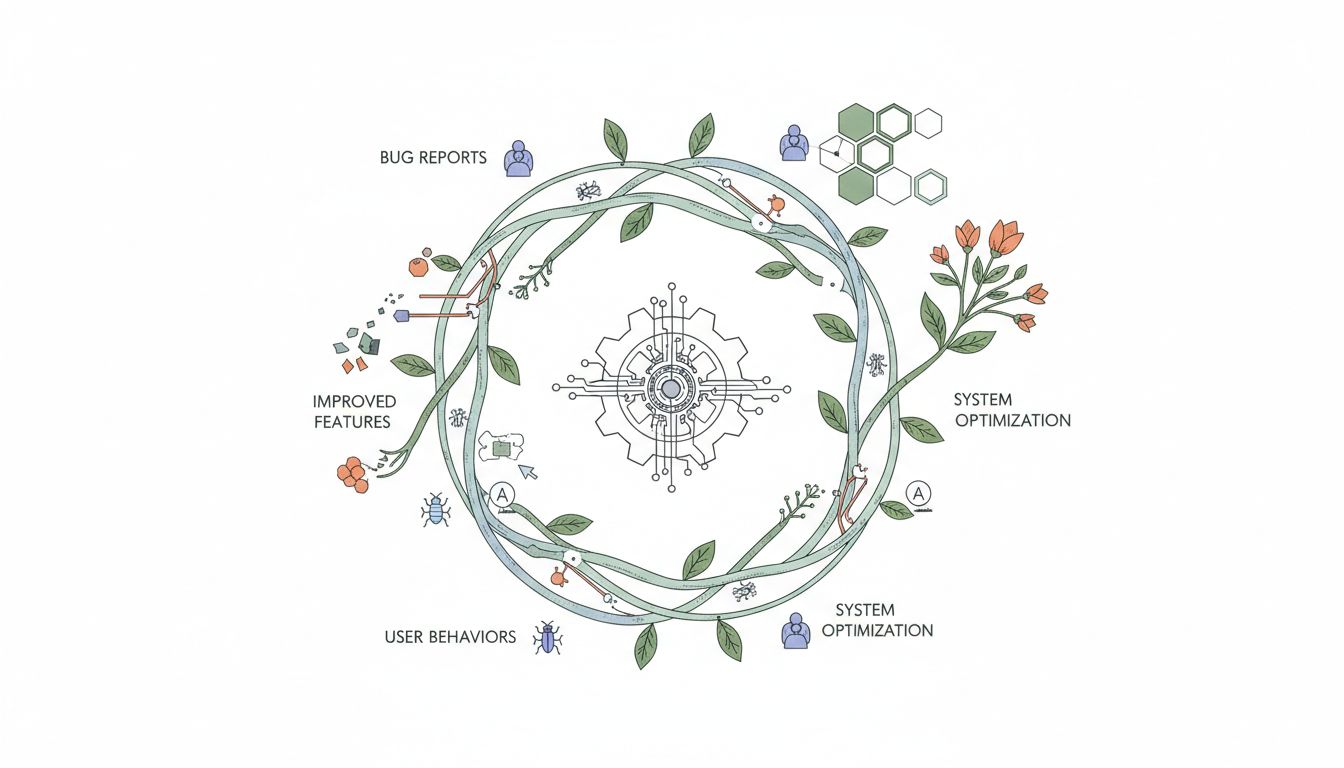

A traditional QA cycle is expensive. You need skilled testers, reproducible environments, detailed bug reporting workflows, and time. Lots of time. A public beta outsources most of that cost to people who are, in many cases, eager to participate. Early adopters want access to new features. Enthusiasts want to be first. Enterprises want to evaluate fit before committing budget. All of them generate bug reports, usage telemetry, and behavioral data that feeds directly back into the development cycle.

The companies that run these programs understand the asymmetry clearly. The cost is some reputational risk and a support burden for a product that isn’t finished. The benefit is a testing corpus that would be impossible to buy at any reasonable price. When Microsoft releases a Windows Insider build with known issues documented in the release notes, they’re not apologizing for the bugs. They’re describing the research agenda.

This dynamic is especially pronounced in AI, where the feedback loop is particularly valuable. When OpenAI released early versions of ChatGPT, the volume and variety of user interactions gave the company a stream of feedback it could use to improve later versions of the model. Users weren’t just stress-testing the infrastructure. They were, in aggregate, helping shape what the model learned to do well. The broken parts were features in the sense that they revealed what needed fixing faster than any internal process could.

The Expectation-Setting Function Nobody Talks About

There’s a third reason that’s more psychological than technical: broken betas calibrate expectations in ways that help companies later.

If you release software that works perfectly from day one, users establish a baseline of perfection. Any subsequent bug, any performance regression, any moment of friction gets measured against that standard. You’ve given yourself no room to fail.

But if you release a beta that users know is rough, something interesting happens. When things break, users attribute it to the beta status rather than to fundamental product quality. When things work well, users are pleasantly surprised. You get credit for improvement rather than blame for imperfection. And by the time you hit general availability, you’ve already enrolled your most engaged users in a narrative where they participated in making the product better. That psychological investment is worth something real.

This isn’t manipulation exactly, though it’s close enough to be worth examining honestly. It’s better described as expectation management used as a product strategy. The companies doing it most effectively, the ones whose public betas generate genuine community investment rather than frustration, tend to be transparent about what’s broken and why. They treat beta users as collaborators rather than as unpaid testers who don’t know what they signed up for. The distinction matters for retention, and most product teams know it.

When It Becomes a Problem

None of this means releasing broken software is always justified. The calculus changes significantly depending on what’s broken and who’s using it.

Security vulnerabilities in beta software affect real systems. AI models that confidently produce harmful outputs during a public beta cause real harm. Enterprise customers who adopt beta features into production workflows and then get burned by breaking changes have legitimate grievances. The “it’s beta” disclaimer only provides so much cover, and companies that lean on it too hard in genuinely high-stakes contexts are making a different kind of bet, one about how much trust they can spend before users stop giving them the benefit of the doubt.

The deeper issue is that “beta” has been so thoroughly used as a liability shield that it’s lost most of its informational content. When a product has been in beta for two years, serving millions of active users, processing payments, storing sensitive data, and being marketed to enterprises as a serious solution, the label is no longer describing a development stage. It’s just a disclaimer.

What distinguishes legitimate beta strategy from abuse of the label is specificity and honesty. Responsible beta releases tell you what’s incomplete, what data is being collected, and what threshold will trigger a production release. They treat the relationship with beta users as a genuine exchange rather than a one-way extraction. The companies that do this well build products that are genuinely better for the process. The ones that don’t are just shipping broken software and hoping the label is enough.
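One way to picture that kind of specificity is as a published manifest with explicit exit criteria. The sketch below is purely illustrative: the `BetaManifest` class, its field names, the metric names, and the thresholds are all hypothetical, not any company's actual practice.

```python
from dataclasses import dataclass, field


@dataclass
class BetaManifest:
    """Hypothetical public declaration of what a beta is and when it ends."""

    known_incomplete: list  # features users should expect to be rough
    data_collected: list    # telemetry the program gathers
    # Metric name -> maximum acceptable value before general availability.
    exit_thresholds: dict = field(default_factory=dict)

    def unmet_criteria(self, observed: dict) -> list:
        """Return the metrics that still exceed their release threshold.

        A metric with no observed value counts as unmet.
        """
        return [
            name
            for name, limit in self.exit_thresholds.items()
            if observed.get(name, float("inf")) > limit
        ]

    def ready_for_release(self, observed: dict) -> bool:
        return not self.unmet_criteria(observed)


if __name__ == "__main__":
    manifest = BetaManifest(
        known_incomplete=["offline mode", "bulk export"],
        data_collected=["crash reports", "feature usage counts"],
        exit_thresholds={"crash_rate_percent": 0.5, "open_severe_bugs": 0},
    )
    observed = {"crash_rate_percent": 0.8, "open_severe_bugs": 0}
    print("still blocking release:", manifest.unmet_criteria(observed))
    print("ready:", manifest.ready_for_release(observed))
```

The point of writing it down this way is that the label stops being open-ended: either the stated thresholds are met or they are not, and users can see which.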