In 2016, a team at Google Brain published a paper that quietly unsettled a lot of assumptions about how machine learning should work. They had trained image recognition models on datasets where a meaningful fraction of the labels were intentionally corrupted. Wrong labels. Deliberately. The finding was not that the models broke down. The finding was that, in many cases, they still generalized well. The models had learned something real about the world even though they had been fed a diet of partially wrong information.

That result planted a seed that has grown into one of the stranger truths in applied AI: the organizations building the most capable models are often well aware that chunks of their training data are incorrect, and they train on it anyway. This is not a confession of carelessness. It is, in many cases, a considered engineering decision. Understanding why requires stepping back from the intuition that better data always means better models.

The Setup: What We Mean by “Wrong” Data

When engineers talk about wrong data in a training set, they mean a few different things. There are mislabeled examples, where a photo of a cat is tagged as a dog. There are outdated records, where a product description reflects a version that no longer exists. There are contradictory annotations, where two human reviewers labeled the same piece of text with different sentiment scores. And there are adversarial or noisy examples introduced deliberately during data augmentation.
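Contradictory annotations are the easiest of these categories to measure, because the disagreement is visible in the data itself. The following is a minimal sketch, assuming each item carries the list of labels its reviewers assigned. The function name `disagreement_report` and the input format are hypothetical, chosen only for illustration.

```python
from collections import Counter


def disagreement_report(annotations):
    """Summarize label conflicts per item.

    `annotations` maps an item id to the non-empty list of labels that
    different reviewers assigned to it (an assumed input format).
    """
    report = {}
    for item_id, labels in annotations.items():
        counts = Counter(labels)
        # On a tie, most_common returns the label that was seen first.
        majority_label, majority_votes = counts.most_common(1)[0]
        report[item_id] = {
            "majority": majority_label,
            "agreement": majority_votes / len(labels),
            "contested": len(counts) > 1,
        }
    return report


annotations = {
    "review-1": ["positive", "positive", "positive"],
    "review-2": ["positive", "negative"],
    "review-3": ["neutral", "negative", "negative"],
}
report = disagreement_report(annotations)
contested = [item for item, row in report.items() if row["contested"]]
print(contested)
```

A report like this does not say which label is right. It only shows where the annotators themselves were unsure, which is useful input when deciding how much cleaning is worth paying for.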

The instinct is to clean all of this before training. Spend weeks on data hygiene. Write validation pipelines. Hire more annotators. And for narrow, high-stakes applications (medical imaging, fraud detection) that instinct is correct. But for large language models and general-purpose classifiers, the calculus is more complicated.

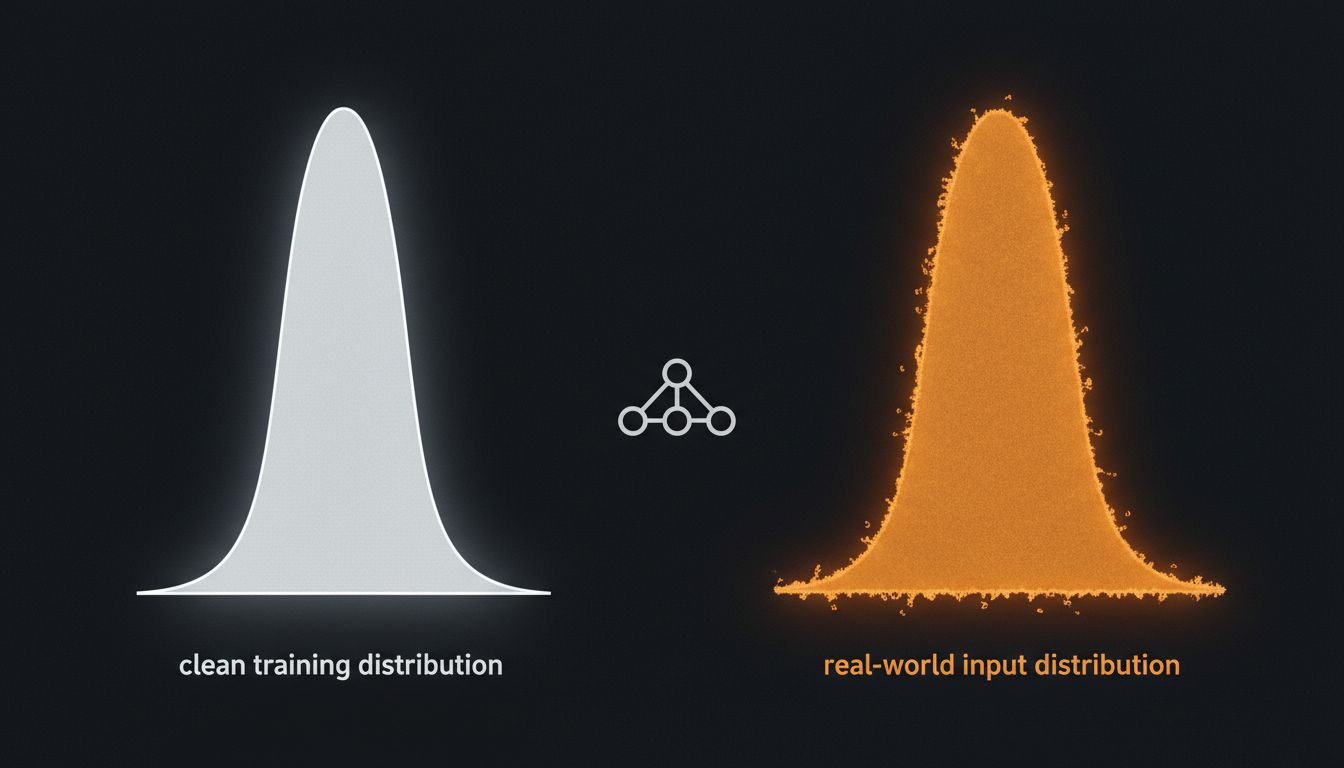

Here is the core tension: a perfectly clean dataset is also a perfectly curated one, and curation introduces its own distortions. You end up training on data that reflects what your annotators thought was canonical rather than what the real distribution of inputs looks like. Users are not canonical. They misspell things, contradict themselves, and ask ambiguous questions. A model trained on perfectly labeled formal prose will struggle with the actual population of prompts it receives.

What Happened: The ImageNet Lesson, Applied to Language

ImageNet, the benchmark dataset that effectively started the deep learning era, is famously imperfect. Researchers have found systematic labeling errors across many categories. A 2021 study led by MIT researchers found that even widely used benchmark test sets, ImageNet's validation set among them, had label error rates high enough to meaningfully affect how we compare models against each other. The models trained on ImageNet still became the foundation for essentially every computer vision application built in the subsequent decade.

The lesson absorbed by the field was not “fix ImageNet.” It was something more nuanced: a modest amount of noise in training data can act as a regularizer. Regularization refers to techniques for preventing overfitting, which is what happens when a model memorizes its training data so thoroughly that it performs well on training examples but fails on anything new. When some of the labels are wrong, fitting every training label exactly stops being a reliable route to the right answer. The model is pushed instead toward underlying patterns robust enough to survive the noise. That is, in a meaningful sense, closer to how you want it to behave in production.
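Whether noise helps or hurts a particular model is an empirical question. One way to probe it is to corrupt a known fraction of labels and compare held-out accuracy across noise rates. The sketch below covers only the corruption step, assuming symmetric noise, where each flipped label moves to a uniformly chosen different class. `inject_label_noise` and its parameters are illustrative names, not part of any library.

```python
import random


def inject_label_noise(labels, classes, rate, seed=0):
    """Return a copy of `labels` with a fraction `rate` flipped to another class."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    if len(classes) < 2:
        raise ValueError("need at least two classes to flip a label")
    rng = random.Random(seed)  # seeded so the experiment is repeatable
    noisy = list(labels)       # never mutate the caller's clean labels
    n_flip = round(rate * len(noisy))
    for i in rng.sample(range(len(noisy)), n_flip):
        alternatives = [c for c in classes if c != noisy[i]]
        noisy[i] = rng.choice(alternatives)
    return noisy


clean = ["cat", "dog"] * 50
noisy = inject_label_noise(clean, classes=["cat", "dog", "bird"], rate=0.1)
flipped = sum(a != b for a, b in zip(clean, noisy))
print(f"{flipped} of {len(clean)} labels flipped")
```

Training the same model on `clean` and on several `noisy` variants, then scoring each on an untouched test set, gives a rough picture of how much label noise a given setup tolerates. Real-world label noise is rarely symmetric, so this is a starting point rather than a faithful simulation.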

This insight scaled forward into the large language model era. When OpenAI, Anthropic, Google DeepMind, and others assembled the massive text corpora used to pre-train their models, they made choices about filtering that are more nuanced than “remove all the bad stuff.” Common Crawl, one of the largest sources of web text used in training, is famously dirty. It contains spam, misinformation, poorly written content, and text in languages the scrapers did not intend to collect. Teams do filter it, but they do not filter it to clinical purity. They filter it to a level they believe produces useful generalization, then stop.
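The idea of filtering to a chosen level and then stopping can be sketched as a score plus a threshold. The scoring heuristic here is a deliberately crude stand-in, not the method any of the labs named above is known to use. `quality_score`, `filter_corpus`, and the weights are assumptions made for illustration.

```python
def quality_score(text):
    """Toy quality heuristic in [0, 1]; production pipelines use richer signals."""
    words = text.split()
    if not words:
        return 0.0
    # Share of tokens that are purely alphabetic (penalizes symbol-heavy junk).
    alpha_ratio = sum(w.isalpha() for w in words) / len(words)
    # Share of distinct tokens (penalizes repetitive spam).
    unique_ratio = len({w.lower() for w in words}) / len(words)
    return 0.5 * alpha_ratio + 0.5 * unique_ratio


def filter_corpus(docs, threshold):
    """Keep documents whose score meets the threshold."""
    return [d for d in docs if quality_score(d) >= threshold]


docs = [
    "The model learns patterns from examples",
    "buy buy buy buy buy buy",
    "thx 4 the help , works now",
    "",
]
moderate = filter_corpus(docs, threshold=0.7)
strict = filter_corpus(docs, threshold=0.9)
print(len(moderate), len(strict))
```

At the moderate threshold the spam and the empty document are dropped while the informal message survives. At the strict threshold the informal message is dropped too, even though it is exactly the kind of text real users write. The threshold is where the trade-off lives.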

The “stop” is doing real work there. Further cleaning costs annotator time and compute. It introduces the risk of removing informative edge cases. And past a certain threshold, cleaner data may actually hurt performance on the messy real-world prompts the model will encounter. This connects to a broader pattern in how models can confidently fail at tasks they were never really trained for, even when they appear capable on benchmarks. As we’ve written before, smarter AI models fail simple tasks because they were never actually trained to do them.

Why It Matters: The Accuracy-Usefulness Gap

The philosophical shift here is worth naming clearly. Traditional software has a fairly clean relationship with correctness. A function either returns the right value or it does not. A database query either retrieves the right rows or it does not. The measure of quality is fidelity to a specification.

Neural networks, especially large ones, operate under a different optimization target. You are not optimizing for correctness on individual examples. You are optimizing for useful behavior across a distribution of inputs. That distribution, in practice, includes noise, ambiguity, and error. A model trained only on pristine data is not more correct in any useful sense. It is correct in a narrower sense, which is not the same thing.

This is why “accuracy” as a single metric is insufficient for evaluating production AI systems. A model can achieve high accuracy on a clean benchmark while failing on the distribution of inputs it will actually receive. Teams that understand this build evaluation suites that include adversarial examples, out-of-distribution inputs, and deliberately noisy prompts. The goal is to measure robustness, not just performance on the nicest version of the problem.
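A robustness check of this kind can be as simple as scoring the same examples twice, once clean and once perturbed. The sketch below assumes a classifier exposed as a plain function from text to label and uses adjacent-character swaps as a stand-in for real user typos. `add_typos`, `robustness_report`, and the toy `keyword_model` are hypothetical names for illustration.

```python
import random


def add_typos(text, rate, rng):
    """Swap adjacent characters, attempting a swap with probability `rate`."""
    chars = list(text)
    i = 0
    while i < len(chars) - 1:
        if rng.random() < rate:
            chars[i], chars[i + 1] = chars[i + 1], chars[i]
            i += 2  # skip past the swapped pair
        else:
            i += 1
    return "".join(chars)


def accuracy(predict, examples):
    """Fraction of (text, label) pairs the classifier gets right."""
    correct = sum(predict(text) == label for text, label in examples)
    return correct / len(examples)


def robustness_report(predict, examples, rate=0.2, seed=0):
    """Compare accuracy on clean inputs with accuracy on perturbed copies."""
    rng = random.Random(seed)
    noisy = [(add_typos(text, rate, rng), label) for text, label in examples]
    clean_acc = accuracy(predict, examples)
    noisy_acc = accuracy(predict, noisy)
    return {"clean": clean_acc, "noisy": noisy_acc, "gap": clean_acc - noisy_acc}


def keyword_model(text):
    """Toy classifier that only recognizes the exact word 'refund'."""
    return "refund" if "refund" in text.lower() else "other"


examples = [
    ("I want a refund for my order", "refund"),
    ("Please refund me", "refund"),
    ("Where is my package", "other"),
    ("How do I reset my password", "other"),
]
report = robustness_report(keyword_model, examples)
print(report)
```

The number to watch is `gap`. A model with perfect clean accuracy and a large gap is strong only on the nicest version of the problem.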

What We Can Learn

The companies doing this well are not being cynical. They are being precise about what they are actually optimizing for. A few things follow from that.

First, data quality is not a binary property. “Clean” and “dirty” are shorthand for a spectrum, and the right point on that spectrum depends on what your model needs to do. A customer support classifier probably wants some noise in its training data because support tickets are noisy. A radiology tool does not get that luxury.

Second, the decision to train on imperfect data should be explicit and documented, not accidental. One team knowingly accepts some label noise because it improves generalization. Another ships messy data because cleaning it felt tedious. The resulting datasets may look similar, but the difference in engineering rigor is real. This mirrors a broader problem in software quality: a system can appear to pass all its checks while quietly harboring the conditions for failure. Your CI pipeline passes. Your software is still broken.

Third, and most importantly: the usefulness frame changes how you should think about AI failures. When a model gets something wrong, the first question is not always “how do we remove this type of error from training data?” Sometimes the question is “is this error type actually informative about the real distribution of inputs, and would removing it make the model more brittle on inputs we care about?” That is a harder question to ask and a harder one to answer, but it is the right question.

The instinct to treat data quality as a precondition for good models is understandable. Clean inputs, clean outputs. But the empirical record from the last decade of deep learning keeps returning the same uncomfortable lesson: the models that generalize best are often the ones that have seen the mess. Not because mess is good, but because the world is messy, and you have to train for the world you are deploying into.