The Consent Flow Is a User Experience Problem, Solved Against You

When a company wants to expand what data it can collect, it has a few options. It can ask clearly and risk a ‘no.’ It can hide the change in a terms-of-service update and hope nobody reads it. Or it can do something more sophisticated: present the change in a way that’s technically transparent but cognitively overwhelming, so that your brain processes it as noise rather than a decision worth making.

The third option is what I’d call strategic confusion. It’s not deception in the classic sense. The information is usually present. The choice is technically available. But the experience is engineered so that the path of least resistance leads you exactly where the company wants you to go.

This is worth unpacking mechanically, because the techniques are precise and deliberate, and once you can name them you’ll see them everywhere.

Cognitive Load as a Design Primitive

Your capacity to make careful decisions is finite. Psychologists call the demand placed on that capacity cognitive load, and it’s not a metaphor. It’s a measurable constraint. When you’re asked to process too many things at once, you start making decisions based on pattern matching and defaults rather than actual evaluation.

Product teams know this. The field is well-documented enough that ‘dark patterns’ has its own Wikipedia page, its own academic literature, and now its own regulatory attention from bodies like the FTC and the EU’s data protection authorities.

The specific technique for privacy changes works like this: you present the user with a modal dialog, usually at a moment of task interruption (you’re trying to do something else), that contains multiple sections, layered options, and a primary button that says something like ‘Continue’ or ‘Accept and Proceed.’ The alternative, if it exists, is a smaller link that says ‘Manage Preferences’ and leads to another screen with 12 toggle switches, each pre-set to the company’s preferred configuration.

At each step, the interface is trading on your desire to get back to whatever you were doing. The cost of declining is friction. The cost of accepting is abstract and future-dated. Your brain correctly identifies which cost is immediate and acts accordingly.

The Vocabulary Is Doing Work

Language choices in these flows are not accidental. Legal teams review them. UX writers test them. The specific words are selected through A/B testing for which versions produce the highest acceptance rates.

Consider the difference between these two framings of the same thing:

‘We will share your location history with advertising partners.’

vs.

‘Enable personalized experiences by allowing us to use your activity to improve recommendations across our partner network.’

The second version is technically accurate. Location history is a form of activity. Advertising partners are a partner network. But the first version triggers evaluation. The second version triggers something closer to agreement, because it frames data collection as a service being provided to you.

This mirrors a well-understood pattern in interface design. The consent architecture built into most major platforms relies on this kind of language laundering: turning a transaction that benefits the company into something that sounds like a feature you’re enabling for yourself.

The word ‘personalized’ is doing enormous lifting in most of these dialogs. It has positive connotations (relevant, tailored, attentive) without specifying mechanism. You’re not told that personalization requires a detailed behavioral profile. The mechanism stays abstract.

Timing and Context Are Weaponized

The when of these prompts matters as much as the what.

Consider the pattern used by mobile apps: they request permissions and surface policy changes at the moment of first use or at moments of high engagement. You just opened the app for a specific reason. You’re already invested. The interruption cost of stopping to carefully read a privacy change is higher than it would be if the same dialog appeared at a neutral moment.

This is related to a well-documented phenomenon in cognitive psychology: task-switching costs. When you’re mid-task, the cognitive cost of fully evaluating a new prompt is higher because you have to hold your original task in working memory while processing the new one. Product teams know this empirically. Apps that push notifications and permission requests during active sessions see higher acceptance rates than those that surface them cold.

There’s also the update pattern. Apps push a version update, and buried in the update is a revised privacy policy that now includes new data-sharing provisions. The update prompt is framed around bug fixes or new features. The privacy change is present in the release notes if you know to look, but the primary CTA (call to action, the button you’re being nudged to press) is ‘Update Now.’

You think you’re updating for improved stability. You’re also consenting to expanded data collection. Both things are true. The interface only made one of them salient.

The Asymmetry of Opt-Out

Let’s talk about the mechanics of the opt-out option, when it exists.

A well-designed opt-out, from a user-rights perspective, would be: same visual prominence as opt-in, same number of steps to complete, and equally final (meaning it actually sticks without requiring periodic re-confirmation).
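Those three criteria are concrete enough to check mechanically. The following is a minimal sketch, assuming a hypothetical `ConsentPath` description of each path through a dialog; the field names, the `auditConsentSymmetry` function, and the example numbers are all made up for illustration and do not come from any real audit tool.

```typescript
// Hypothetical description of one path through a consent dialog.
// Field names are illustrative, not taken from any real framework.
interface ConsentPath {
  buttonAreaPx: number;             // rendered area of the control that starts the path
  clicksToComplete: number;         // interactions needed to finish the path
  repromptAfterDays: number | null; // null: the choice is never asked again
}

interface SymmetryReport {
  equalProminence: boolean;
  equalSteps: boolean;
  equallyFinal: boolean;
  symmetric: boolean;
}

function auditConsentSymmetry(accept: ConsentPath, decline: ConsentPath): SymmetryReport {
  // Declining must be at least as visible and at most as laborious as accepting.
  const equalProminence = decline.buttonAreaPx >= accept.buttonAreaPx;
  const equalSteps = decline.clicksToComplete <= accept.clicksToComplete;

  // Declining is "equally final" if it is not re-prompted sooner than accepting.
  let equallyFinal: boolean;
  if (decline.repromptAfterDays === null) {
    equallyFinal = true;
  } else if (accept.repromptAfterDays === null) {
    equallyFinal = false;
  } else {
    equallyFinal = decline.repromptAfterDays >= accept.repromptAfterDays;
  }

  return {
    equalProminence,
    equalSteps,
    equallyFinal,
    symmetric: equalProminence && equalSteps && equallyFinal
  };
}

// Made-up numbers: a large one-click accept button versus a small link that
// leads to 12 toggles plus a save button, with the refusal re-asked every 30 days.
const typicalFlow = auditConsentSymmetry(
  { buttonAreaPx: 160 * 44, clicksToComplete: 1, repromptAfterDays: null },
  { buttonAreaPx: 90 * 16, clicksToComplete: 14, repromptAfterDays: 30 }
);
```

With these assumed inputs, `typicalFlow` fails all three checks. The thresholds are a judgment call: a stricter audit might also compare color contrast or position on screen.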

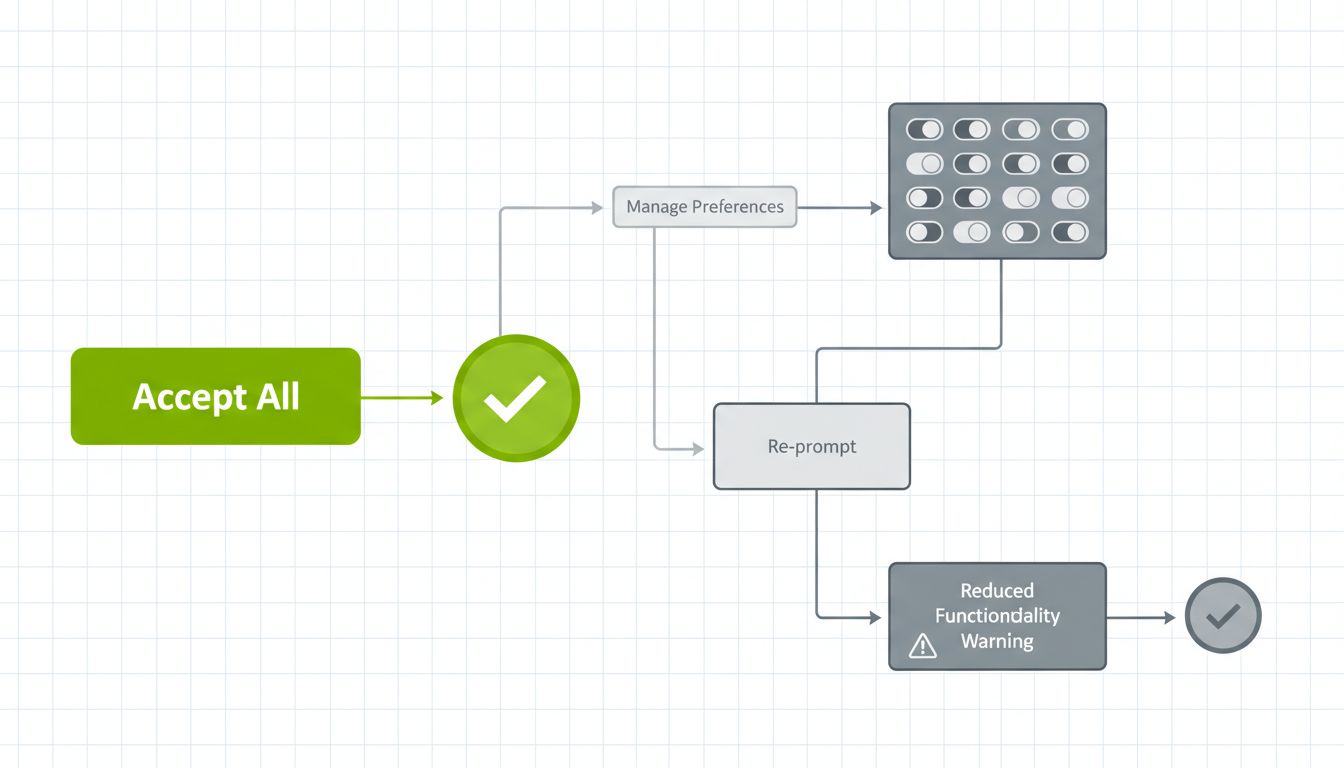

What companies actually build is different. The ‘Accept All’ button is large, primary-colored, and present on the first screen. The ‘Decline’ or ‘Manage’ option is smaller, in a secondary color or plain text, and links to a preferences panel where each category of data collection has its own toggle. Those toggles are set to ‘on’ by default. Turning them off requires interacting with each one individually.

If you make it through that process and actually decline everything, some services will then tell you that certain features require the data collection you just declined, and offer you a re-prompt to reconsider. The ‘no’ you expressed is treated as the beginning of a negotiation rather than a final answer.

From an engineering standpoint, this asymmetry is trivially easy to avoid. Setting defaults to ‘off’ is one line of state initialization code. Making the ‘decline’ button the same size as ‘accept’ is a CSS change that takes seconds. The asymmetry is not a technical constraint. It’s a product decision.
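To make the ‘one line’ claim concrete, here is a minimal sketch of consent state that starts with everything off. The `Purpose` categories and function names are hypothetical, chosen only for illustration; a real product would have its own list.

```typescript
// Hypothetical purpose categories; a real product defines its own.
type Purpose = "analytics" | "advertising" | "personalization" | "partnerSharing";
type ConsentState = Record<Purpose, boolean>;

// The state initialization in question: every purpose starts as false.
function initialConsentState(): ConsentState {
  return { analytics: false, advertising: false, personalization: false, partnerSharing: false };
}

// Accepting everything and rejecting everything go through the same helper,
// so neither path needs more code (or more clicks) than the other.
function setAll(granted: boolean): ConsentState {
  return { analytics: granted, advertising: granted, personalization: granted, partnerSharing: granted };
}

function acceptAll(): ConsentState {
  return setAll(true);
}

function rejectAll(): ConsentState {
  return setAll(false);
}

// Opting in to a single purpose returns a new state and leaves the input untouched.
function grant(state: ConsentState, purpose: Purpose): ConsentState {
  const next: ConsentState = { ...state };
  next[purpose] = true;
  return next;
}

const fresh = initialConsentState();
const withAnalytics = grant(fresh, "analytics");
```

In this sketch a user who does nothing has consented to nothing, and `rejectAll` is exactly as cheap to wire to a button as `acceptAll`.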

Why Regulators Are Catching Up Slowly

GDPR, which has applied since May 2018, requires that consent be ‘freely given, specific, informed, and unambiguous.’ It also requires that withdrawing consent be as easy as giving it. These are clear standards.

In practice, enforcement has been uneven. The Irish Data Protection Commission, which handles complaints against many large tech companies because they have their European headquarters in Ireland, has been criticized for slow investigation timelines. A ruling that a company’s consent flow violates GDPR can take years, during which the company continues running the same flow and collecting data.

The California Consumer Privacy Act (CCPA) and the California Privacy Rights Act (CPRA), which amended and expanded it, take a similar approach. The requirements are clear on paper. Enforcement actions happen, but they happen slowly relative to the pace at which companies can iterate on their consent flows.

The result is a regulatory environment where companies have strong incentive to push the boundaries of acceptable design, because the downside risk is a fine that arrives years later and often amounts to less than the value of the data collected in the meantime.

The Arms Race Between Awareness and Interface Design

Here’s what I find genuinely interesting about this problem from a technical angle: it’s essentially an adversarial system, and the two sides are iterating against each other.

Browser extensions like Privacy Badger and uBlock Origin block trackers outright, and more recent tools that auto-reject cookie consent banners (Consent-O-Matic is a good example) automate the decline path that companies make so difficult manually. The latter are essentially scripts that navigate the dark-pattern maze faster than you can, clicking all the ‘reject’ options before you even see the screen.

Companies respond by making their consent flows harder to automate: using non-standard HTML structures, dynamically generated IDs, and visual-only interactions (like slider controls) that are harder to script against. It’s the same cat-and-mouse dynamic you see in web scraping and bot detection.
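A toy version of this contest can be sketched in a few lines. The code below is a simplified label-matching heuristic, not how Consent-O-Matic or any other real extension is implemented; the `Control` type, the pattern list, and the example dialogs are all assumptions made up for illustration.

```typescript
// Hypothetical flattened view of the interactive controls in a consent dialog.
interface Control {
  id: string;    // may be randomly generated on every page load
  label: string; // visible text, empty for purely visual controls
  kind: "button" | "link" | "toggle" | "slider";
}

// Assumed list of phrases that usually mark the decline path.
const REJECT_PATTERNS: readonly RegExp[] = [
  /\breject\b/i,
  /\bdecline\b/i,
  /\bdeny\b/i,
  /\brefuse\b/i,
  /necessary only/i
];

// Return the first clickable control whose visible label looks like a refusal.
// Matching on labels rather than IDs makes randomized IDs irrelevant.
function findRejectControl(controls: readonly Control[]): Control | undefined {
  for (let i = 0; i < controls.length; i++) {
    const control = controls[i];
    if (control.kind !== "button" && control.kind !== "link") continue;
    for (let j = 0; j < REJECT_PATTERNS.length; j++) {
      if (REJECT_PATTERNS[j].test(control.label)) return control;
    }
  }
  return undefined;
}

// A dialog with randomized IDs but an honest label is easy to handle.
const easyDialog: Control[] = [
  { id: "btn-8f3a", label: "Accept All", kind: "button" },
  { id: "lnk-19c2", label: "Reject all", kind: "link" }
];

// A dialog with no refusal label and an unlabeled slider defeats the heuristic.
const hardDialog: Control[] = [
  { id: "btn-77d0", label: "Accept and Proceed", kind: "button" },
  { id: "lnk-02ab", label: "Manage Preferences", kind: "link" },
  { id: "sld-4e91", label: "", kind: "slider" }
];

const easyMatch = findRejectControl(easyDialog);
const hardMatch = findRejectControl(hardDialog);
```

Here `easyMatch` is the ‘Reject all’ link, while `hardMatch` is `undefined`: once the refusal lives behind a second screen or an unlabeled slider, a generic heuristic has nothing to match, and the tool’s maintainers have to write and maintain site-specific logic instead.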

The underlying problem is that the system is asymmetric in resources. A company can employ full-time teams to design and optimize consent flows. A browser extension is maintained by volunteers or small non-profits. The side with more resources tends to win iterative arms races.

What This Means

Strategic confusion in privacy consent is not a conspiracy. It’s a rational response to incentives. Companies are optimizing for data collection because data collection has value, and the regulatory cost of aggressive consent design has historically been low. The tools being used (cognitive load exploitation, language framing, asymmetric UX design, and adversarial timing) are all well-understood techniques applied with precision.

As a user, knowing the pattern helps. When you see a consent modal with a large ‘Accept’ button and a small ‘Manage Preferences’ link, you’re looking at a deliberate design decision. The link that’s hard to find is the one they don’t want you to click.

As an engineer, there’s a direct question worth sitting with: if you’re building these flows, you have the technical knowledge to understand exactly what you’re building. Setting defaults to ‘off’ is trivially easy. Making the decline path equal in prominence to the accept path costs nothing in engineering hours. The complexity is in the politics of the product decision, not in the implementation.

Regulatory solutions exist but move slowly. The more durable fix is probably cultural: the same way accessibility has become a professional norm among good engineers, so might honest consent design. Not because the law requires it, but because designing a system specifically to exploit users’ cognitive limits is exactly the kind of thing a senior developer should refuse to ship.