In the summer of 2012, a small team at a financial data company was losing sleep over a bug that didn’t exist — at least, not reliably. Their high-frequency data ingestion service would occasionally produce corrupted records. Not always. Not on any predictable schedule. Maybe twice a week, maybe not at all for ten days, then three times in one afternoon. The records were wrong in ways that were hard to characterize: timestamps off by arbitrary amounts, fields from one record bleeding into another, totals that simply didn’t add up.

When they ran the same code in staging, nothing. When they wrote unit tests targeting every edge case they could imagine, nothing. When they added logging and watched the service closely, it behaved perfectly for two weeks, then corrupted three records on a Tuesday at 2:47 AM.

The temptation to close the ticket as “intermittent, low priority” was real. They resisted it.

The Setup

The service was written in C, which matters. It was processing market data feeds from multiple sources concurrently, using shared memory buffers to avoid the overhead of copying data between threads. The logic had been running for about eight months without obvious problems. A new engineer had refactored the buffer management code four months before the corruption started appearing, but the timing seemed coincidental. The refactor had passed code review and all existing tests.

The engineers added more logging. They added checksums on write and verified them on read (a practice that, once you’ve seen what it catches, you never skip again). The checksums confirmed corruption was happening but told them nothing about when or why. The corrupted records appeared to come from different threads, different data sources, different times of day.
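As a rough illustration of checksum-on-write, verify-on-read, here is a minimal sketch in C. The record layout, the `PAYLOAD_MAX` size, the function names, and the choice of a 32-bit FNV-1a hash are all assumptions made for the example; the source does not say which checksum the team used or what their records looked like.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define PAYLOAD_MAX 64  /* hypothetical fixed payload capacity */

/* Hypothetical record layout: payload plus a checksum stored at write time. */
typedef struct {
    uint32_t len;
    uint8_t  payload[PAYLOAD_MAX];
    uint32_t checksum;
} record_t;

/* 32-bit FNV-1a hash; a stand-in for whatever checksum a real service uses. */
uint32_t fnv1a32(const uint8_t *data, size_t n)
{
    uint32_t h = 2166136261u;          /* FNV offset basis */
    for (size_t i = 0; i < n; i++) {
        h ^= data[i];
        h *= 16777619u;                /* FNV prime */
    }
    return h;
}

/* Writer side: copy the payload in and seal it with a checksum. */
void record_seal(record_t *r, const void *src, uint32_t len)
{
    assert(len <= PAYLOAD_MAX);
    r->len = len;
    memcpy(r->payload, src, len);
    r->checksum = fnv1a32(r->payload, len);
}

/* Reader side: returns 1 if the payload still matches its checksum, else 0. */
int record_verify(const record_t *r)
{
    if (r->len > PAYLOAD_MAX)
        return 0;                      /* the length field itself is corrupt */
    return fnv1a32(r->payload, r->len) == r->checksum;
}
```

A writer would call `record_seal` just before publishing a record and a reader would call `record_verify` just after consuming it. As in the story, a failed check only proves that corruption happened somewhere between those two points; it says nothing about who caused it.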

They were looking at noise. Or what looked like noise.

What Actually Happened

The refactored buffer code had introduced a subtle race condition. The original code had used a lock around the entire write-then-signal operation. The refactored version, trying to reduce lock contention, split the operation: write the data, release the lock, then signal waiting readers. This is a reasonable optimization in many contexts. In this context, it was wrong.

Between the lock release and the signal, a second writer (call it thread B) could acquire the lock and begin writing to the same buffer region that the first writer (thread A) had just filled. If B was preempted mid-write when A’s delayed signal finally fired, a reader would wake up and consume the buffer in that half-written state. The record it read was part A’s data and part B’s.

This only manifested under specific combinations of thread scheduling, data arrival timing, and system load. In staging, the load was lower and the timing windows narrower. In production, with real market data arriving in bursts, the race condition had room to breathe.

Finding it required rebuilding the whole mental model of what the refactored code was actually doing, not what the engineer intended it to do. Once they had that model, they could construct a synthetic load test that reproduced the corruption on demand. From there, the fix was straightforward: move the signal back inside the lock. The optimization was abandoned. The corruption stopped.
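The shape of the fix, plus a synthetic load harness of the general kind described above, can be sketched with POSIX threads. This is not the team's code: the single-slot buffer, the `FIELDS` count, and every name here are hypothetical. Each writer fills all fields of a record with one value, so a torn record is easy to detect, and it signals the reader while still holding the lock. With the locking intact the harness should report zero torn records. It is a detector, not a reproduction of the original bug.

```c
#include <assert.h>
#include <pthread.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

#define FIELDS 16   /* words per record; every word carries the same value */

/* Hypothetical single-slot buffer shared by several writers and one reader. */
typedef struct {
    pthread_mutex_t lock;
    pthread_cond_t  not_empty;   /* signalled when a record is ready */
    pthread_cond_t  not_full;    /* signalled when the slot is free again */
    int             full;        /* predicate; only touched under the lock */
    uint64_t        fields[FIELDS];
} slot_t;

typedef struct {
    slot_t  *slot;
    uint64_t id;
    int      count;
} writer_arg_t;

static void *writer_main(void *p)
{
    writer_arg_t *a = p;
    slot_t *s = a->slot;
    for (int i = 0; i < a->count; i++) {
        uint64_t v = (a->id << 32) | (uint64_t)i;
        pthread_mutex_lock(&s->lock);
        while (s->full)                       /* re-check after every wakeup */
            pthread_cond_wait(&s->not_full, &s->lock);
        for (int f = 0; f < FIELDS; f++)
            s->fields[f] = v;
        s->full = 1;
        pthread_cond_signal(&s->not_empty);   /* signal before releasing the lock */
        pthread_mutex_unlock(&s->lock);
    }
    return NULL;
}

/* Runs the load and returns how many torn records the reader observed. */
long run_stress(int writers, int records_each)
{
    assert(writers > 0 && records_each > 0);

    slot_t s;
    s.full = 0;
    pthread_mutex_init(&s.lock, NULL);
    pthread_cond_init(&s.not_empty, NULL);
    pthread_cond_init(&s.not_full, NULL);

    pthread_t    *tids = malloc((size_t)writers * sizeof *tids);
    writer_arg_t *args = malloc((size_t)writers * sizeof *args);
    if (!tids || !args)
        abort();

    for (int w = 0; w < writers; w++) {
        args[w] = (writer_arg_t){ &s, (uint64_t)w + 1, records_each };
        if (pthread_create(&tids[w], NULL, writer_main, &args[w]) != 0)
            abort();
    }

    long torn = 0;
    long total = (long)writers * records_each;
    for (long n = 0; n < total; n++) {        /* the caller acts as the reader */
        uint64_t copy[FIELDS];
        pthread_mutex_lock(&s.lock);
        while (!s.full)
            pthread_cond_wait(&s.not_empty, &s.lock);
        memcpy(copy, s.fields, sizeof copy);  /* consume under the lock */
        s.full = 0;
        pthread_cond_signal(&s.not_full);
        pthread_mutex_unlock(&s.lock);

        for (int f = 1; f < FIELDS; f++) {
            if (copy[f] != copy[0]) { torn++; break; }
        }
    }

    for (int w = 0; w < writers; w++)
        pthread_join(tids[w], NULL);
    pthread_cond_destroy(&s.not_empty);
    pthread_cond_destroy(&s.not_full);
    pthread_mutex_destroy(&s.lock);
    free(args);
    free(tids);
    return torn;
}
```

Calling `run_stress(4, 20000)` from a small `main` and building with `cc -pthread` is one way to try it. A harness like this earns its keep when it is pointed at the real buffer code, ideally with bursty input and more threads than cores, since those are the conditions the story says staging lacked.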

Why This Matters

This story is not unusual. What makes it instructive is the pattern it represents.

Non-reproducible bugs have a reputation as flukes, as ghost stories. Engineers often treat them as something to monitor rather than something to solve. This is almost always wrong. A bug that appears intermittently in production but never in testing is not random. It has specific causes. The randomness is in your ability to observe those causes, not in whether they exist.

Race conditions are the canonical example, but the category is broader. Memory corruption from buffer overflows that only trigger with specific input sizes. Floating-point errors that accumulate differently under different compiler optimization levels. Network timeout handling that only exercises the error path when latency hits a narrow window. These bugs don’t come and go. They’re always there, waiting for the conditions that expose them.

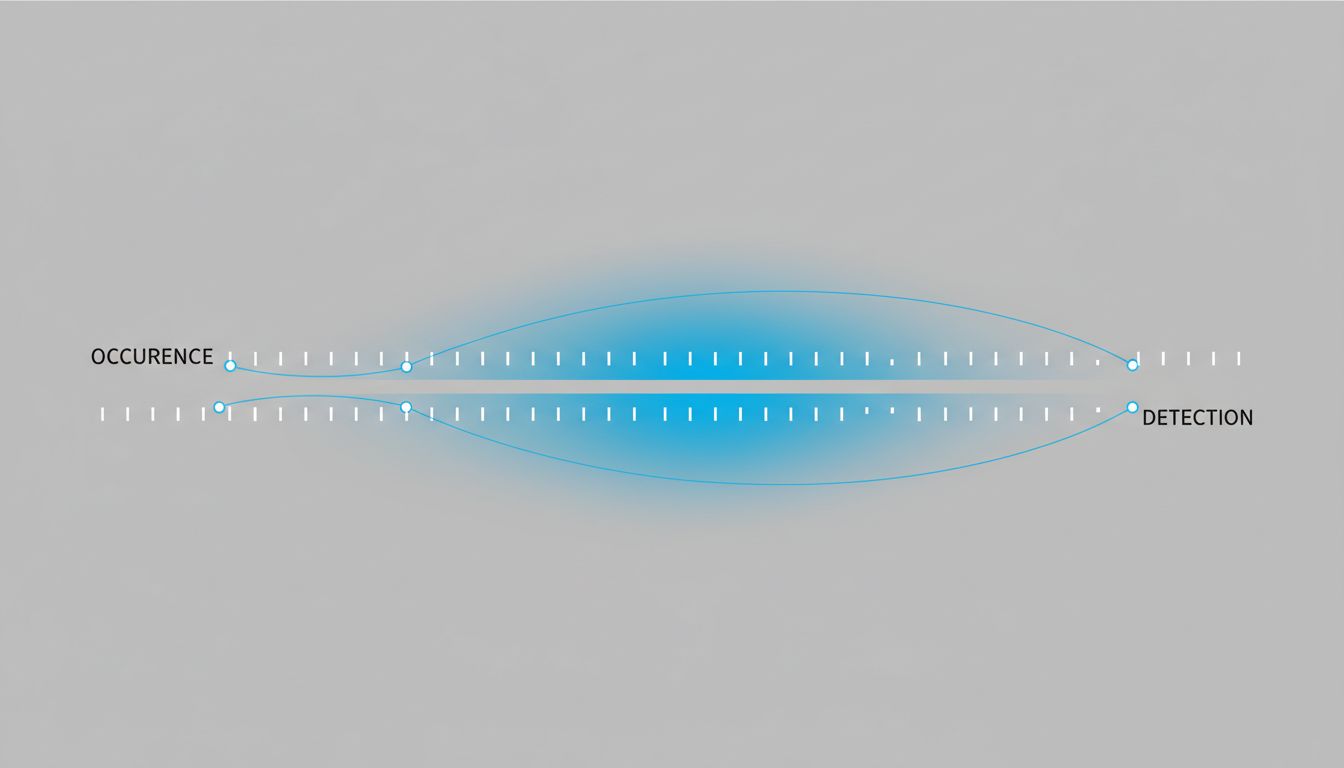

The financial data team’s bug had been present in the code for four months before it caused visible corruption. Every day during those four months, the race condition existed. The threads just didn’t collide often enough, under the right circumstances, to produce a corrupted record that anyone noticed. The bug was not intermittent. The detection was intermittent.

This distinction changes how you approach the problem. If the bug is random, you can’t do much except hope it becomes less frequent. If the detection is intermittent, you can improve your detection.

What We Can Learn

The team eventually established a set of practices that they applied to any bug that appeared in production but not in testing. I think they generalize well.

Build observability into the thing being observed. The checksums were the right instinct. When you can’t reproduce a failure, add structure around the failure site that tells you more about the state of the world when it happens. Logs matter less than structured state snapshots. You want to know not just that something went wrong, but what the system looked like a few hundred milliseconds before it went wrong. Core dumps, detailed crash reports, and compiler sanitizers (AddressSanitizer for memory errors and ThreadSanitizer for data races in the C/C++ world, for instance) serve this purpose.
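One common way to capture "what the system looked like just before" is a small in-memory ring of recent events that is dumped only when a check fails. The sketch below is an assumption-laden illustration, not something the source describes: the event fields, the `FR_CAPACITY` of 8, and the `fr_` names are all hypothetical, and a single mutex is used purely to keep the example short.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <pthread.h>
#include <stddef.h>
#include <stdint.h>
#include <time.h>

#define FR_CAPACITY 8   /* tiny for illustration; a real recorder keeps far more */

typedef struct {
    uint64_t t_ns;      /* monotonic timestamp in nanoseconds */
    uint32_t code;      /* hypothetical event code, e.g. 1 = write begin */
    uint64_t value;     /* event-specific detail: offset, sequence number, ... */
} fr_event_t;

static fr_event_t      fr_ring[FR_CAPACITY];
static uint64_t        fr_next;   /* total number of events ever recorded */
static pthread_mutex_t fr_lock = PTHREAD_MUTEX_INITIALIZER;

/* Append one event, overwriting the oldest entry once the ring is full. */
void fr_record(uint32_t code, uint64_t value)
{
    struct timespec ts;
    clock_gettime(CLOCK_MONOTONIC, &ts);

    pthread_mutex_lock(&fr_lock);
    fr_event_t *e = &fr_ring[fr_next % FR_CAPACITY];
    e->t_ns  = (uint64_t)ts.tv_sec * 1000000000ULL + (uint64_t)ts.tv_nsec;
    e->code  = code;
    e->value = value;
    fr_next++;
    pthread_mutex_unlock(&fr_lock);
}

/* Copy up to max of the newest events into out, oldest first.
 * Returns the number of events copied. */
size_t fr_snapshot(fr_event_t *out, size_t max)
{
    assert(out != NULL);

    pthread_mutex_lock(&fr_lock);
    uint64_t have  = fr_next < FR_CAPACITY ? fr_next : FR_CAPACITY;
    uint64_t n     = have < max ? have : max;
    uint64_t first = fr_next - n;             /* index of the oldest event kept */
    for (uint64_t i = 0; i < n; i++)
        out[i] = fr_ring[(first + i) % FR_CAPACITY];
    pthread_mutex_unlock(&fr_lock);
    return (size_t)n;
}
```

The idea is to call `fr_record` at interesting points (buffer acquired, write started, signal sent) and to call `fr_snapshot` and persist the result at the moment a checksum fails. One caveat worth stating: any instrumentation that takes a lock changes thread timing, so a recorder like this can make a race rarer. Per-thread rings or atomics may be a better fit on a hot path.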

Treat production as a different system from staging, because it is. Staging will always differ from production in ways that seem minor until they aren’t: load patterns, connection counts, data distributions, hardware timing characteristics. Staging passes while production catches fire precisely because these differences are easy to underestimate. The financial data team’s race condition needed real market data volumes to manifest. Staging, with its synthetic and lower-volume test data, couldn’t generate the thread contention required.

Don’t let “can’t reproduce” become “won’t pursue.” The team in this story spent the better part of two weeks finding the bug’s root cause. During that time, the correct stance was: this is real, it has a cause, and finding the cause is worth the cost. The alternative was shipping corrupted financial records with no understanding of why or how often. That’s not a tradeoff worth making to avoid a hard debugging session.

Understand changes in terms of their behavioral guarantees, not their intentions. The refactored buffer code was intended to reduce lock contention. That intention was reasonable. But the change also altered the atomicity guarantees of the write-signal sequence, and nobody caught that in code review because everyone was thinking about what the code was supposed to do rather than what it actually guaranteed. Concurrency bugs in particular almost always come from this gap between intention and guarantee.

The deepest lesson from this case isn’t technical. It’s epistemic. The bug you can’t reproduce isn’t the bug that doesn’t exist. It’s the bug you haven’t yet understood well enough to reconstruct. That’s a solvable problem. It just requires treating the gap in your understanding as the actual thing you’re working on, not a side effect of the thing you’re working on.

The financial data team closed the ticket eleven days after opening it. The fix was four lines. The understanding that produced the fix was everything.