The Simple Version

When you write code and run it, you might assume the computer executes roughly what you wrote. It doesn’t. The compiler, the program that translates your source code into machine instructions, routinely rewrites your program in ways that would surprise most working developers.

What a Compiler Actually Does

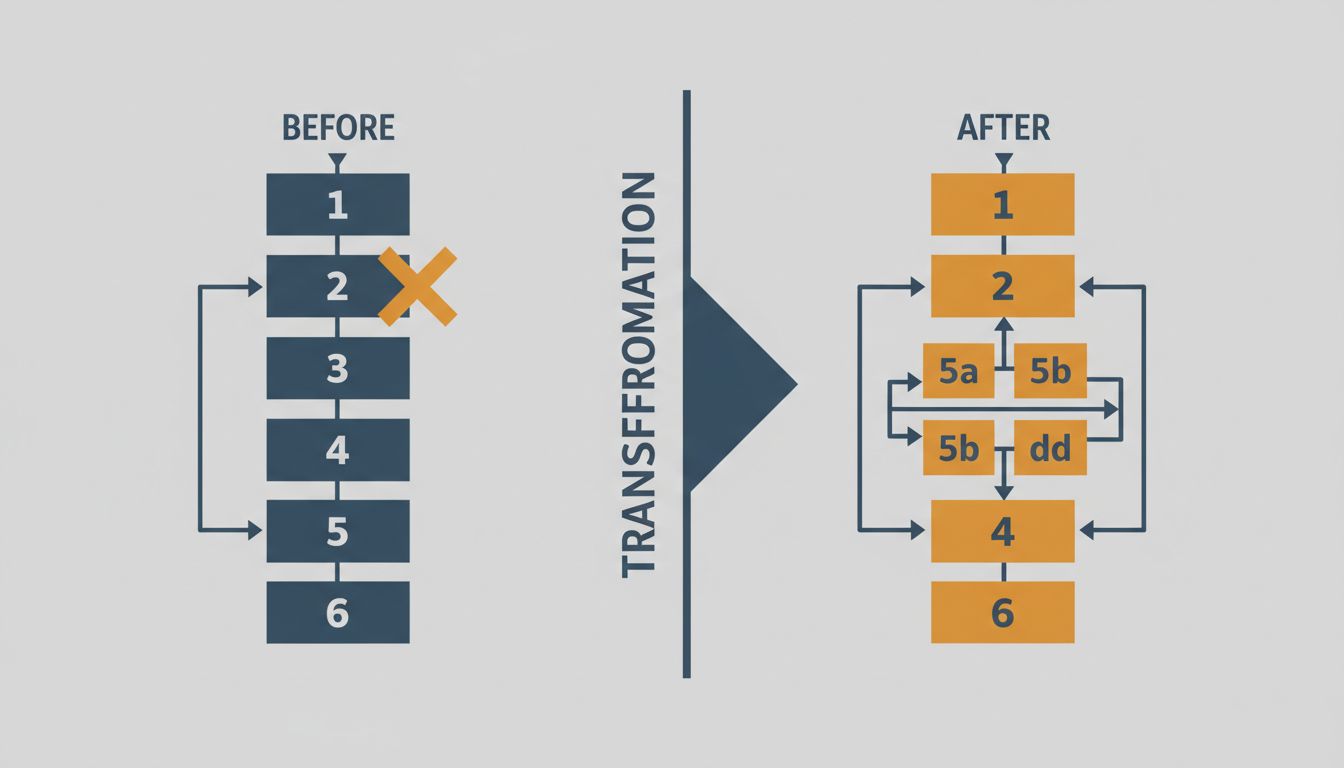

The naive model of compilation goes like this: you write instructions, the compiler converts them to machine code, the processor runs them. Tidy, linear, respectful of your intent.

The real model is messier and more interesting. Modern compilers, particularly mature ones like GCC and LLVM (which powers Clang, Rust’s compiler, and Swift’s compiler), treat your source code as a description of a desired outcome, not a script to be followed. The compiler’s job is to produce machine code that achieves the same observable result. What happens in between is the compiler’s business.

This means the compiler may reorder your operations, eliminate code it can prove will never affect output, duplicate loops to avoid branches, merge separate variables into a single memory location, or replace a function call with the function’s body at the call site. When you compile with optimization enabled, the assembly output can be unrecognizable compared to the source that produced it.

The Specific Ways It Rewrites Your Program

Constant folding is the simple case. If you write x = 60 * 60 * 24, the compiler calculates 86400 at compile time and replaces the expression with a literal. Your arithmetic never runs.
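
A minimal sketch of this in C, using hypothetical names (`SECONDS_PER_DAY`, `seconds_per_day`) that are not from any real codebase. The static assertion is checked during compilation, so it only passes if the compiler has already worked out the value of the expression:

```c
/* Hypothetical names, for illustration only. */
enum { SECONDS_PER_DAY = 60 * 60 * 24 };

/* Checked at compile time: the compiler must already know the value. */
_Static_assert(SECONDS_PER_DAY == 86400, "expression folded at compile time");

/* Written as arithmetic for readability; a compiler would typically
   emit the literal 86400 here rather than two multiplications. */
int seconds_per_day(void) {
    return 60 * 60 * 24;
}
```

Writing the arithmetic out costs nothing at run time and documents where the number comes from.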

Dead code elimination goes further. If the compiler can prove a branch is unreachable, or a variable is set but never read, it removes that code entirely. This sounds like helpful cleanup, but it creates a genuine hazard: security-sensitive code that clears a buffer of secret data can be silently deleted if the buffer is never read afterward. This is not theoretical. A memset call that zeroes a buffer just before it goes out of scope is a dead store as far as the optimizer is concerned, removing it is legal under the C standard, and real cryptographic implementations have been affected this way. The fixes, such as memset_s from C11’s optional Annex K, explicit_bzero on glibc and the BSDs, memset_explicit in C23, or compiler-specific memory barriers, exist precisely because developers learned this the hard way.
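
The hazard can be sketched as follows. Both `secure_zero` and `use_secret` are hypothetical helpers written for this example, not standard functions. The wipe goes through a `volatile` pointer, which mainstream compilers treat as stores they must not remove; where the platform provides `memset_s` or `explicit_bzero`, those are the safer choice.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical helper: zero a buffer through a volatile pointer so the
   stores are treated as observable and are not eliminated as dead. */
void secure_zero(void *buf, size_t len) {
    volatile unsigned char *p = buf;
    while (len--) {
        *p++ = 0;
    }
}

/* Hypothetical routine that holds a secret in a local buffer. */
int use_secret(const char *secret) {
    char key[32];
    strncpy(key, secret, sizeof key - 1);
    key[sizeof key - 1] = '\0';

    int length = (int)strlen(key);  /* stand-in for real work with the key */

    /* A plain memset(key, 0, sizeof key) here would be a dead store:
       key is never read again, so an optimizer may delete the call. */
    secure_zero(key, sizeof key);
    return length;
}
```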

Loop optimizations are where things get counterintuitive. Compilers perform a transformation called loop unrolling, where a loop that executes four times might be rewritten as four consecutive copies of the loop body, eliminating the overhead of checking the loop condition. More aggressively, compilers can vectorize loops, converting sequential operations into SIMD (Single Instruction, Multiple Data) instructions that process multiple data elements simultaneously. A loop you wrote to process an array one element at a time may execute four or eight elements at once in the compiled output.
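
To make unrolling concrete, here is a hand-written approximation in C. The function names are hypothetical, and the second function is only a sketch of the shape an optimizer might produce from the first; real compilers choose the unroll factor themselves and may emit SIMD instructions instead.

```c
#include <stddef.h>

/* As written: one element per iteration, one loop check per element. */
void add_arrays(float *restrict out, const float *restrict a,
                const float *restrict b, size_t n) {
    for (size_t i = 0; i < n; i++) {
        out[i] = a[i] + b[i];
    }
}

/* Roughly what unrolling by four looks like: one loop check per four
   elements, plus a cleanup loop for the leftover elements. */
void add_arrays_unrolled(float *restrict out, const float *restrict a,
                         const float *restrict b, size_t n) {
    size_t i = 0;
    for (; i + 4 <= n; i += 4) {
        out[i]     = a[i]     + b[i];
        out[i + 1] = a[i + 1] + b[i + 1];
        out[i + 2] = a[i + 2] + b[i + 2];
        out[i + 3] = a[i + 3] + b[i + 3];
    }
    for (; i < n; i++) {
        out[i] = a[i] + b[i];
    }
}
```

The four independent additions in the unrolled body are also what makes vectorization possible: they can be replaced by a single instruction that adds four floats at once. The `restrict` qualifiers tell the compiler the arrays do not overlap, which is often what it needs to know before it will vectorize.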

Inlining is perhaps the most dramatic transformation. A function you defined separately, called in ten places, might not exist as a function at all in the compiled binary. The compiler may copy the function’s body into each call site, accepting a larger binary in exchange for removing the overhead of each call. In a related transformation, a recursive function whose recursive call is the last thing it does might be rewritten as a loop.
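
Both transformations can be illustrated in C. All names here are hypothetical, and the comments describe what an optimizer is permitted to do, not what any particular compiler is guaranteed to do.

```c
/* A small helper that is a natural candidate for inlining. */
static int square(int x) {
    return x * x;
}

/* After inlining, this may compile as if it were written
   "return a * a + b * b;" with no call to square at all. */
int sum_of_squares(int a, int b) {
    return square(a) + square(b);
}

/* Tail-recursive: the recursive call is the last thing the function does. */
unsigned long sum_to(unsigned n, unsigned long acc) {
    if (n == 0) {
        return acc;
    }
    return sum_to(n - 1, acc + n);
}

/* The loop an optimizer may generate from sum_to(n, 0). */
unsigned long sum_to_loop(unsigned n) {
    unsigned long acc = 0;
    while (n != 0) {
        acc += n;
        n--;
    }
    return acc;
}
```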

Why This Matters Beyond Curiosity

The implications are practical, not just academic.

Performance intuitions are frequently wrong. Developers routinely optimize the wrong things because they’re reasoning about source code, not compiled output. A loop that looks slow may already be vectorized. A function call that looks expensive may have been inlined and optimized away entirely. A variable that looks like it occupies memory may live in a register. Profiling tools measure what actually runs; source-level reasoning does not. This is one reason senior engineers reach for profilers before optimizing, and why advice like “avoid function calls, they’re expensive” often leads developers to write worse code for no gain.

Concurrency bugs are harder to reason about than they appear. The compiler’s freedom to reorder operations interacts badly with multithreaded code. Two operations that appear sequential in your source may be reordered by the compiler because, from a single-threaded perspective, the reordering is safe. In a multithreaded program, another thread might observe the intermediate state. This is why languages like Java and C++ have formal memory models, and why concurrency primitives like atomics and mutexes exist at the language level rather than just the OS level. The memory model constrains what the compiler is allowed to optimize.
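
A minimal sketch of how the memory model is used in practice, with C11 atomics. The names `payload`, `ready`, `publish`, and `try_consume` are hypothetical, and the example assumes `publish` runs on one thread while `try_consume` runs on another. With a plain `bool` flag, the write to `payload` and the write to the flag could be reordered; the release and acquire orderings forbid that.

```c
#include <stdatomic.h>
#include <stdbool.h>

static int payload;        /* ordinary, non-atomic data */
static atomic_bool ready;  /* static storage: starts out false */

/* Writer: the release store keeps the payload write from being
   moved after the flag write. */
void publish(int value) {
    payload = value;
    atomic_store_explicit(&ready, true, memory_order_release);
}

/* Reader: the acquire load keeps the payload read from being
   moved before the flag read. */
bool try_consume(int *out) {
    if (!atomic_load_explicit(&ready, memory_order_acquire)) {
        return false;
    }
    *out = payload;
    return true;
}
```

A reader that sees `ready` as true is guaranteed to see the payload written before it, which is exactly the guarantee that source order alone does not provide.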

Debugging optimized builds is genuinely difficult for the same reason: the binary no longer mirrors the source. Variables don’t exist where you expect them. Breakpoints land on unexpected lines. Stack traces may omit inlined functions. The program you’re debugging is not the program you wrote.

The Compiler’s Contract

None of this is reckless. The compiler operates under a strict contract called the “as-if” rule: the compiled program must behave as if the original source were executed faithfully, as far as any legal observer can tell. The compiler cannot change the observable behavior of a correct program.

The catch is that word “correct.” Undefined behavior in C and C++ is a well-known minefield, but the depth of it surprises even experienced programmers. If your code contains undefined behavior, the compiler’s contract no longer applies. It may do anything, and aggressively optimizing compilers will exploit undefined behavior in ways that appear to delete logic or change control flow. Signed integer overflow in C is undefined behavior. Dereferencing a null pointer is undefined behavior. Reading from memory you’ve freed is undefined behavior. When these occur, the compiler is not required to produce a sensible result, and often won’t.
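
Signed overflow gives a compact illustration. The function names below are hypothetical. The first check looks reasonable but depends on overflow wrapping around, so an optimizer is entitled to assume `x + 1 < x` is never true and reduce the function to `return false`. The second check does the same job without ever performing an overflowing operation.

```c
#include <limits.h>
#include <stdbool.h>

/* Broken: if x is INT_MAX, x + 1 is undefined behavior, so the
   compiler may assume the comparison is always false. */
bool increment_overflows_broken(int x) {
    return x + 1 < x;
}

/* Correct: compares against the limits before doing any arithmetic
   that could overflow. */
bool add_would_overflow(int a, int b) {
    if (b > 0) {
        return a > INT_MAX - b;
    }
    return a < INT_MIN - b;
}
```

Compiler Explorer is a convenient place to compare the optimized output of the two functions.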

This is a sharp edge. Compilers have become more aggressive about undefined behavior optimizations over time, and code that worked for years on older compiler versions has broken on newer ones when an optimization pass found a new way to exploit the undefined case. The popular OpenSSL project has run into this. So has the Linux kernel, which is built with GCC flags such as -fno-strict-aliasing and -fno-delete-null-pointer-checks to disable specific optimizations the kernel cannot tolerate.

What Smart Developers Do Differently

Understanding the compiler as a collaborator rather than a transcription service changes how experienced engineers write code. They write for correctness first and trust the compiler to handle performance details. They use the compiler’s own tools, like __builtin_expect in GCC or [[likely]] in C++20, to provide hints rather than trying to outmaneuver the optimizer. They read assembly output when something doesn’t perform as expected, using tools like Compiler Explorer (godbolt.org), which lets you see the compiled output for any snippet in real time.
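
A small sketch of a branch hint in C. `__builtin_expect` is a GCC and Clang builtin; the `UNLIKELY` macro and the `sum_nonnegative` function are hypothetical names defined here for illustration, and the macro falls back to a plain expression on other compilers.

```c
#include <stddef.h>

#if defined(__GNUC__) || defined(__clang__)
#define UNLIKELY(x) __builtin_expect(!!(x), 0)
#else
#define UNLIKELY(x) (x)  /* no hint available: behavior is unchanged */
#endif

/* Sums the values, returning -1 if any value is negative.
   The hint says the error path is rare; it never changes the result. */
long sum_nonnegative(const int *values, size_t n) {
    long total = 0;
    for (size_t i = 0; i < n; i++) {
        if (UNLIKELY(values[i] < 0)) {
            return -1;
        }
        total += values[i];
    }
    return total;
}
```

The hint only influences how the compiler lays out the code, so a wrong guess costs some speed but never correctness. It is worth measuring before and after, since the optimizer’s own guess is often already good.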

They also take undefined behavior seriously as a correctness issue, not just a theoretical concern. A program with undefined behavior isn’t merely slow or buggy. It’s wrong, and the compiler is under no obligation to make it appear otherwise.

The compiler’s job is to be smarter than you about machine code, and it generally succeeds. The developer’s job is to be precise about intent and correct about invariants. When both sides hold up their end, the result is code that runs faster than either party could have produced alone.