The Interrupt Handler Your Brain Never Asked For

There’s a concept in operating systems called an interrupt. When a hardware device needs the CPU’s attention, it sends an interrupt signal that causes the processor to pause whatever it’s currently doing, save its state, and jump to a handler function. The handler runs, then the CPU restores the saved state and picks up where it left off. This works well for processors because restoring state is cheap and deterministic: reload the registers, restore the instruction pointer, continue.
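To make the save, handle, restore cycle concrete, here is a toy sketch in Python. It is not how any real CPU or kernel implements interrupts; `ToyCpu`, its four registers, and the `interrupt` method are names invented for illustration. The point is only that the restore step is an exact copy back, no matter what the handler did in between.

```python
class ToyCpu:
    """A made-up CPU with an instruction pointer and four registers."""

    def __init__(self):
        self.instruction_pointer = 0
        self.registers = [0, 0, 0, 0]

    def interrupt(self, handler):
        # Save: snapshot everything the interrupted work depends on.
        saved = (self.instruction_pointer, list(self.registers))
        # Handle: the handler is free to clobber the live state.
        handler(self)
        # Restore: put back exactly what was saved, then carry on.
        self.instruction_pointer, self.registers = saved


def noisy_handler(cpu):
    # Hypothetical handler that overwrites the live state.
    cpu.instruction_pointer = 9000
    cpu.registers[0] = -1


cpu = ToyCpu()
cpu.instruction_pointer = 42
cpu.registers = [1, 2, 3, 4]
cpu.interrupt(noisy_handler)
```

After `interrupt` returns, the toy CPU’s state is identical to what it was before the handler ran. Nothing has to be rebuilt.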

Your brain is not a processor. And the cost of context-switching in a human cognitive system is neither cheap nor deterministic.

This is the part that most writing about notifications gets wrong. It focuses on the time you spend looking at your phone or reading the message. That’s measurable and obvious. The more important cost is what happens in your brain between the moment a signal arrives and the moment you’ve fully returned to the work you were doing. Those are two different events, and the gap between them is where the real damage lives.

Anticipatory Attention Is a Real Cognitive Load

When you hear a notification sound or feel a vibration, your attentional system doesn’t wait for you to consciously decide what to do. It fires automatically. This is sometimes called the “attentional capture” effect, and it’s been documented in cognitive psychology for decades. Your brain allocates processing resources to evaluate the potential significance of the signal before you’ve made any deliberate choice to do so.

What’s less obvious is what happens when you decide not to look. You’ve consciously chosen to stay on task. But a part of your working memory is now holding a thread open: something arrived, I don’t know what it is, I should probably check eventually. That open thread consumes working memory capacity. Working memory (the cognitive system that holds information you’re actively using) is limited, and it doesn’t multitask gracefully. Any resource devoted to holding that unresolved notification is a resource not available to the problem you’re trying to solve.

Researchers at Florida State University published work showing that even receiving a notification you don’t act on produces task performance decrements comparable to actually responding to it. The phone doesn’t need to be in your hand. The signal alone is sufficient to fragment attention.

This is why “just ignore it” is not a real solution. You can train yourself to delay your response. You cannot easily train your attentional system to stop registering the signal as potentially significant. That system evolved to detect change in the environment as a survival mechanism. An app notification and a rustling in tall grass activate the same basic machinery.

The Recovery Time Is Not What You Think

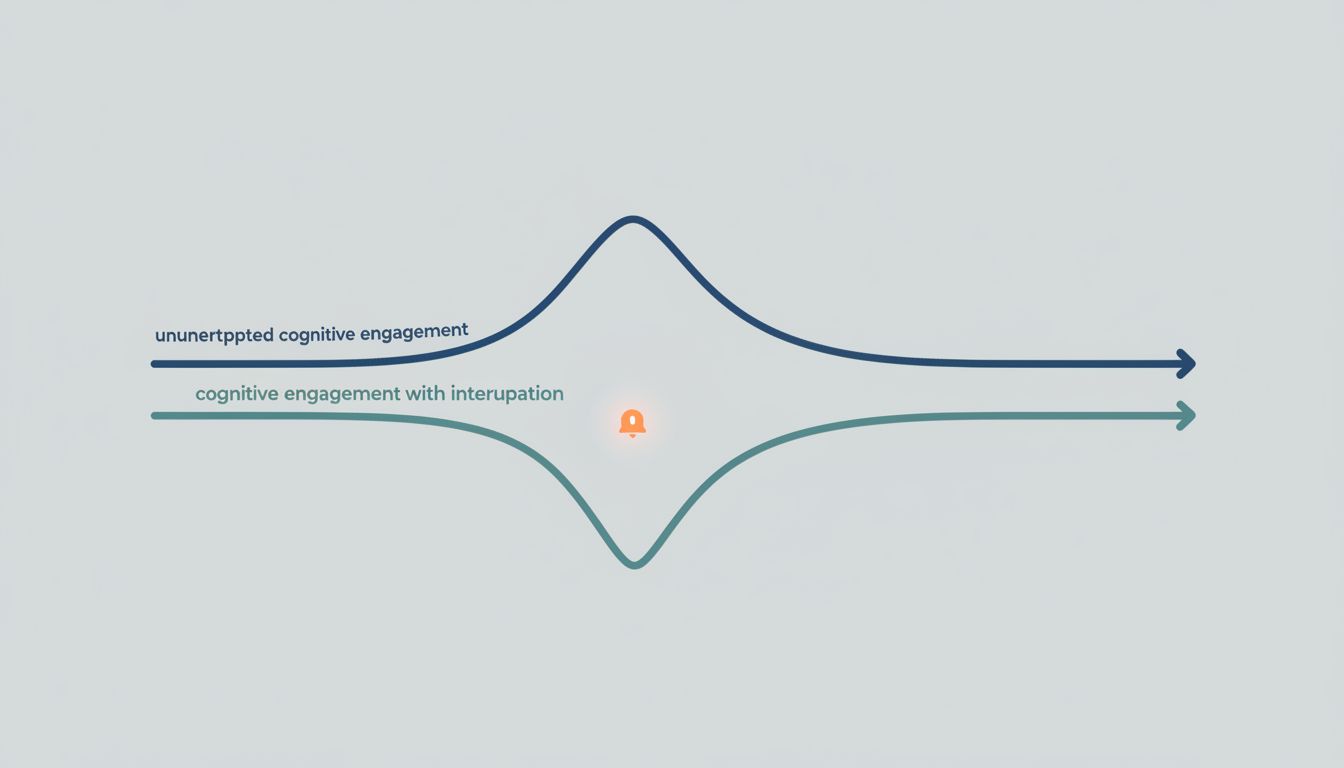

The most-cited figure in this space comes from Gloria Mark’s research at UC Irvine: on average, it takes 23 minutes and 15 seconds to fully return to a task after an interruption. That number gets repeated constantly, often skeptically, and it’s worth being precise about what it means. It doesn’t mean every interruption costs exactly 23 minutes. It means that when people return to a complex task after being interrupted, the full resumption of that task takes substantially longer than people assume.

The reason has to do with how cognitive engagement works on complex problems. When you’re deep in a difficult task, you’re not just thinking about the immediate next step. You’re holding a mental model of the problem: constraints you’ve already ruled out, promising directions you’re exploring, the particular framing you’ve chosen to approach the problem with. That model isn’t stored anywhere explicit. It’s an emergent property of active, ongoing cognitive engagement. Interrupt that engagement, and the model degrades. You have to rebuild it.

Think of it like an in-memory cache. When you’re working on something hard, you’ve loaded a lot of state into fast, volatile storage. An interruption doesn’t corrupt that state immediately. But attention is what keeps it warm. The longer you’re away from the task, the more that cache cools, and the more expensive it becomes to reload. Some of what you had is simply gone and has to be reconstructed from slower storage, which means re-reading notes, re-reading code, re-thinking conclusions you’d already reached.
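The cache analogy can be sketched as a toy model. Everything here is an assumption made for illustration: the exponential decay, the 10-minute half-life, and the `resumption_cost` function itself are invented, not measured. The sketch shows only the shape of the argument, that the cost to rebuild grows with time away and is already nonzero after a short absence.

```python
def resumption_cost(minutes_away: float,
                    loaded_state: float = 1.0,
                    half_life_minutes: float = 10.0) -> float:
    """Return how much of the loaded mental state must be rebuilt.

    Assumes (purely for illustration) that retained state halves
    every `half_life_minutes` spent away from the task.
    """
    retained = loaded_state * 0.5 ** (minutes_away / half_life_minutes)
    return loaded_state - retained


# Compare a quick glance, a short chat, and a long detour.
costs = {minutes: resumption_cost(minutes) for minutes in (1, 10, 30)}
```

Under these made-up parameters, a 10-minute detour means rebuilding half of what you had loaded, and the curve flattens only because there is eventually nothing left to lose.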

What “Do Not Disturb” Actually Solves (and What It Doesn’t)

The mechanism that matters here is not notification management. It’s signal elimination. There’s a meaningful difference.

Notification management means things like grouping notifications, batching them, or setting priority filters. These reduce the volume of signals but preserve the underlying architecture: your device can still interrupt you, it just does so more selectively. This is better than nothing, but it doesn’t remove the core problem. If your phone can still vibrate during a focused work session, you still carry the cognitive overhead of knowing it might.

Signal elimination means removing the possibility of interruption from the environment entirely during defined periods. Phone in another room: not merely on silent, but physically absent. Notifications turned off at the OS level, not just suppressed by an app. This sounds extreme, and people resist it because it feels like being unavailable. But “available” and “interruptible at any moment” are not the same thing, even though modern communication culture has collapsed them into synonyms.

The argument against this is always some version of “what if something urgent comes up.” This is worth examining honestly. Most people, when they audit their actual notification history, find that a very small fraction of interruptions were genuinely time-sensitive in a way that required sub-hour response. The rest were emails, Slack messages, and app updates that could have waited. The urgency is often manufactured by the medium itself, not inherent to the content.
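If you want to run that audit yourself, a minimal sketch might look like the following. It assumes you’ve gone through your own notification history and tagged each item by hand with how soon it really needed a response. The `Notification` record, its field names, the 60-minute threshold, and the sample entries are all hypothetical placeholders, not real data.

```python
from dataclasses import dataclass


@dataclass
class Notification:
    source: str
    # Your own after-the-fact judgment of how soon a reply was needed.
    needed_reply_within_minutes: float


def urgent_fraction(log, threshold_minutes=60):
    """Fraction of notifications that needed a reply within the threshold."""
    if not log:
        return 0.0
    urgent = sum(
        1 for n in log if n.needed_reply_within_minutes < threshold_minutes
    )
    return urgent / len(log)


# Hypothetical hand-tagged entries; replace with your own history.
sample_log = [
    Notification("email", 24 * 60),
    Notification("chat", 4 * 60),
    Notification("app update", 7 * 24 * 60),
    Notification("pager", 15),
]
fraction = urgent_fraction(sample_log)
```

The number that comes out matters less than the act of tagging: deciding, item by item, whether an hour’s delay would have changed anything.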

Designing Your Environment Around the Actual Cost

The practical implication is that notification hygiene is fundamentally an environment design problem, not a willpower problem. You shouldn’t need discipline to resist checking your phone every few minutes. You should be operating in an environment where the friction of checking is high enough that your default behavior is staying on task.

This is an idea that good software engineers already apply to their systems. You don’t rely on developers remembering to write tests. You build a CI pipeline that makes deploying without tests painful. You don’t rely on people remembering to handle errors. You make the type system enforce it. Well-designed software optimizes for specific behaviors through defaults and friction, not good intentions.

Apply the same logic to your working environment. Default state: signal-free during focused work blocks. Make interruptions opt-in, not opt-out. Set explicit windows where you process messages, and make those windows known to the people who regularly contact you. This is the equivalent of pinning your dependencies in a committed lockfile instead of resolving them fresh on every install. You’re making the environment deterministic so that cognitive resources go to the work, not to managing the environment itself.
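As a sketch of what “opt-in, not opt-out” means as a policy, consider the toy model below. It does not talk to any real notification API; `FocusPolicy`, its methods, and the example window times are invented for illustration. The default is to queue everything, and delivery happens only inside windows you declared in advance.

```python
from dataclasses import dataclass, field
from datetime import time


@dataclass
class FocusPolicy:
    # Each window is a (start, end) pair of datetime.time values.
    message_windows: list
    queued: list = field(default_factory=list)

    def in_window(self, now: time) -> bool:
        return any(start <= now < end for start, end in self.message_windows)

    def receive(self, message: str, now: time) -> bool:
        """Deliver only inside a window; otherwise queue silently."""
        if self.in_window(now):
            return True
        self.queued.append(message)
        return False

    def drain(self, now: time) -> list:
        """Hand over queued messages, but only during a window."""
        if not self.in_window(now):
            return []
        pending, self.queued = self.queued, []
        return pending


# Hypothetical schedule: two half-hour message windows per day.
policy = FocusPolicy([(time(11, 30), time(12, 0)), (time(16, 0), time(16, 30))])
delivered_now = policy.receive("review request", time(9, 15))
```

A message that arrives at 9:15 is held rather than delivered, and it surfaces only when you call `drain` during one of the windows. The design choice is that silence is the default and delivery is the exception you schedule.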

The notifications are not the problem, exactly. The problem is a system designed to make you interruptible by default, in every context, at all times. That’s not a feature. It’s a bug in how most knowledge workers have configured their professional lives. And like most bugs, it’s easier to fix at the architecture level than to paper over with workarounds.