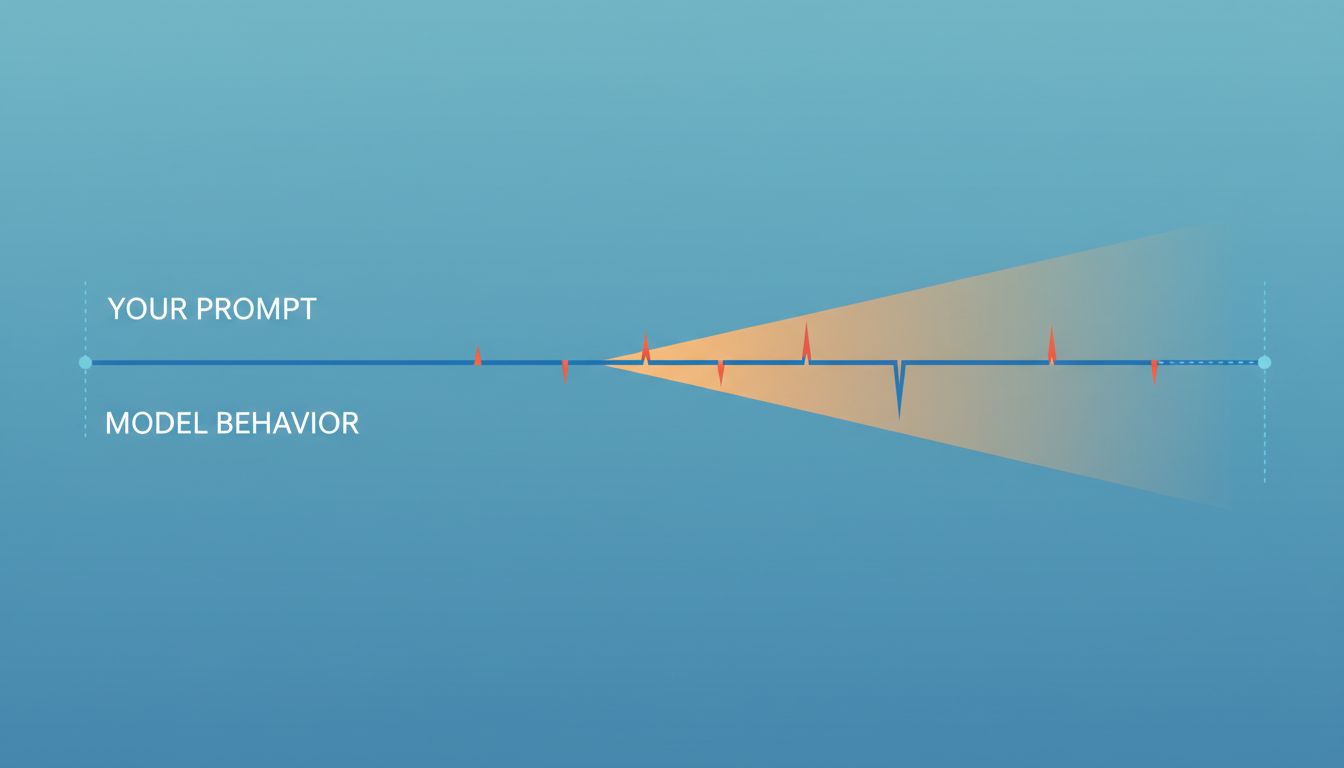

You spent an afternoon getting a prompt exactly right. The output was clean, the format was perfect, the tone matched what you needed. You saved it to a doc, maybe a Notion page, maybe a shared folder labeled something like “prompts that work.” Three months later, you ran it again and got something noticeably different. You assumed you’d changed something. You probably hadn’t.

This is the quiet failure mode of building workflows on top of language models. The model changes. Your prompt doesn’t. And unlike a broken API that throws an error, a degraded prompt just gives you worse output while looking like it’s still working.

1. Models Update Without Telling You

OpenAI, Anthropic, Google, and every other major model provider iterate on their models continuously. Sometimes those updates are announced; often they’re not. When OpenAI updated GPT-4 Turbo’s training cutoff and adjusted its system behavior in early 2024, many developers noticed their prompts produced different outputs without any breaking change in the API response format. No error. No warning. Just drift.

This matters more than most people realize because prompts are tuned against a specific model’s behavior, not against some abstract platonic ideal of a language model. When you write “respond only in bullet points” and it works, it works because of how that particular model version weighted that instruction relative to everything else in its training. Change the training, and the instruction carries different weight.

2. Specificity Is Both the Solution and the Trap

The natural response to unreliable prompts is to make them more specific. Add more constraints. Specify the format in more detail. Include examples. This works, up to a point, and it’s generally good advice. But extreme specificity creates its own fragility.

A prompt that depends on a model reproducing a very precise output structure (say, a JSON object with a specific nesting pattern and exact field names) will break harder when model behavior shifts than a prompt that asks for something more flexible. You’ve optimized for one model’s quirks, and those quirks will change. The most robust prompts tend to be clear about the goal rather than prescriptive about every step of how to achieve it. There’s a meaningful difference between “return a JSON object with fields X, Y, and Z” and “format your response so that my downstream parser, which expects keys named X, Y, and Z, can read it without modification.”

3. Temperature and System Prompt Defaults Shift

Most developers know they can set temperature explicitly. Fewer pay attention to what happens when they don’t, or when the model’s interpretation of a given temperature value changes between versions. A temperature of 0 feels like it should mean “deterministic,” but in practice it means “as deterministic as this model allows,” and that ceiling has changed across versions of the same model family.

System prompt behavior is even murkier. How strongly a model follows system instructions versus user instructions, how it handles conflicts between them, and how it interprets ambiguous system-level constraints are all parameters that get tuned during model updates. If your workflow depends on a system prompt overriding something a user might say, you should be testing that assumption regularly, not assuming it’s stable.

4. The Context Window Changes What “Good” Looks Like

As models get larger context windows, their behavior in shorter contexts sometimes changes. This is counterintuitive but documented: models trained on very long context tasks sometimes behave differently when given short inputs because the training distribution shifts. The model has learned to expect more context and may handle sparse inputs differently than an earlier version did.

Practically, this means a prompt that worked well with 500 tokens of context might behave differently once the same model has been fine-tuned on 100k-token documents. The model’s sense of “what comes next” has changed, and your prompt is operating in a different statistical environment than it was designed for.

5. Fine-Tuned and Base Models Get Confused in the Wild

Many teams use the same prompt templates across different model variants without tracking which variant each template was designed for. A prompt optimized for a base model often doesn’t perform the same way on an instruction-tuned version of that model, and vice versa. Instruction-tuned models are trained to be helpful and follow explicit directions; base models are trying to complete text. The same words mean different things in those two contexts.

This problem compounds when providers release new fine-tuned variants (as both OpenAI and Anthropic do regularly) without clearly documenting how they differ from prior versions in terms of instruction-following behavior. You end up with prompts that were tuned against one training objective running against a model with a different one, and the gap shows up as subtle quality degradation that’s easy to attribute to something else.

6. Your Evaluation Is Probably Manual, Which Means It’s Slow

The root problem with all of this is that most teams don’t have automated evaluation for their prompts. They notice something is wrong when a user complains, or when a developer happens to run the prompt and notices the output looks off. By then, the degradation may have been happening for weeks.

The answer isn’t complicated, even if it takes some upfront work: build a small test set of inputs and expected outputs for any prompt that matters to your product. Run it on a schedule. Compare results across model versions. This is exactly what you’d do with any other piece of software that has external dependencies, and a third-party model is an external dependency in every meaningful sense. The fact that most teams skip this step explains why “the AI got worse” is such a common complaint with such fuzzy timelines.

7. Versioning Your Prompts Is Not Optional

If you’re using prompts in production, they need to be versioned the same way your code is. This sounds obvious and most teams don’t do it. A prompt stored in a Google Doc or a Notion page has no history, no rollback, and no way to correlate a change in output quality with a change in the prompt itself.

Store prompts in version control. Tag them with the model version they were designed for. When you update a prompt, treat it like a code change: review it, test it against your eval set, and document why you changed it. This isn’t bureaucracy; it’s the minimum infrastructure needed to debug a system where your dependencies update silently and your outputs degrade gradually. The teams building reliably on top of language models treat prompt management as an engineering problem. Everyone else is just hoping nothing changes.