Picture this: you’re scrolling through Y Combinator’s latest batch, and half the companies have “AI-powered” in their tagline. Six months later, you check back and most of their websites return 404 errors. If you’ve been in tech long enough, this pattern feels eerily familiar—like the mobile app gold rush of 2012 or the blockchain bonanza of 2018.

But here’s what’s different about the AI startup graveyard: most of these companies didn’t fail because their technology was bad. They failed because they fundamentally misunderstood what they were building. After watching dozens of AI startups rise and fall, I’ve identified the core patterns that separate the survivors from the casualties. Spoiler alert: it has very little to do with having the fanciest neural networks. Just as successful tech giants understand the psychology behind their design choices, successful AI companies understand that technology is only half the equation.

The Model-First Fallacy

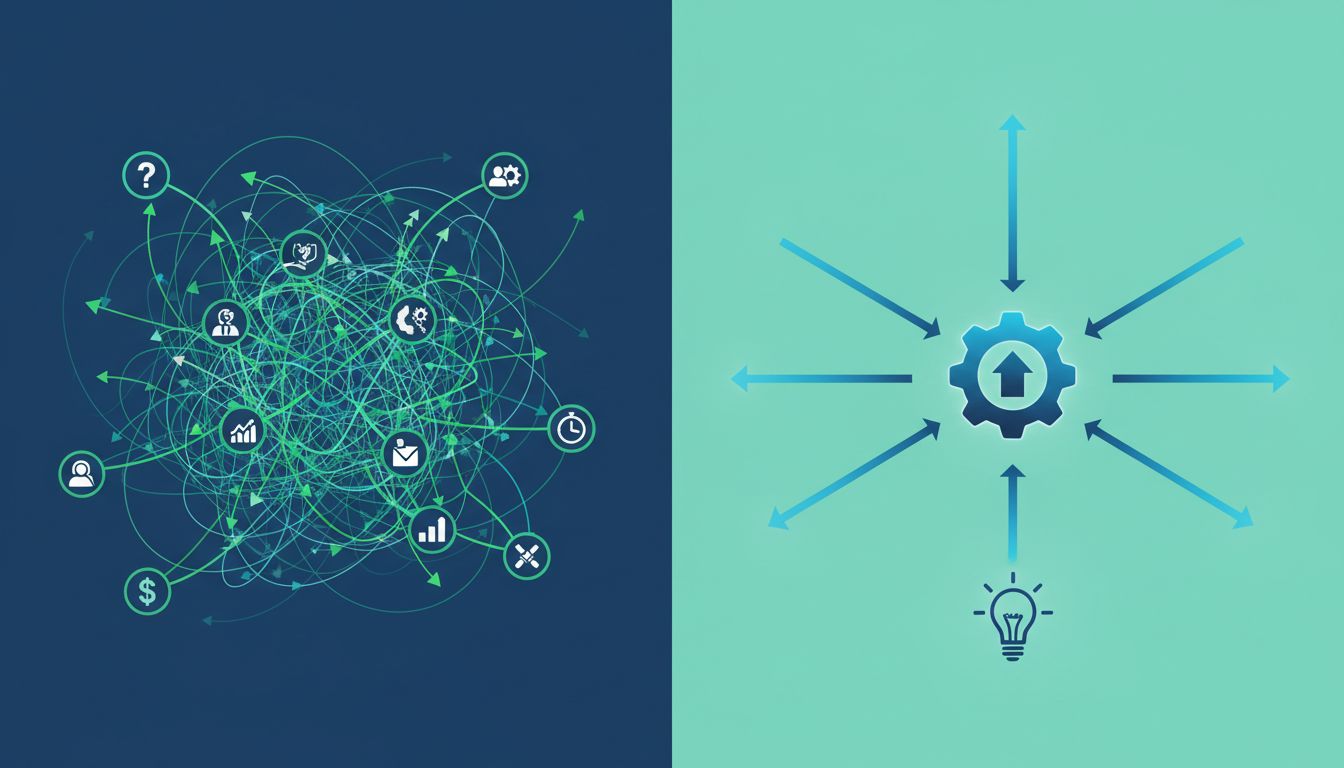

Most AI startups begin their journey by falling in love with a model. They read a paper about transformer architectures, fine-tune a large language model, and immediately start hunting for problems to solve. This is like building a Ferrari engine and then trying to figure out whether you’re making a race car or a lawnmower.

I call this the “model-first fallacy,” and it’s everywhere. A startup will spend six months perfecting their computer vision pipeline, achieving 94% accuracy on their test dataset, only to discover that their target market can’t actually use anything less than 99.8% accuracy. Or they’ll build a natural language processing system that works beautifully in English but completely falls apart when their first enterprise client needs multilingual support.
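To see why the gap between 94% and 99.8% is bigger than it looks, it helps to count errors instead of accuracy points. The sketch below is plain arithmetic on the two figures above; the batch size of 10,000 items and the function name are assumptions chosen only for illustration.

```python
def errors_per_batch(accuracy: float, n_items: int = 10_000) -> int:
    """Expected number of wrong predictions in a batch of n_items."""
    return round((1.0 - accuracy) * n_items)


# The batch size of 10,000 is an illustrative assumption.
prototype_errors = errors_per_batch(0.94)   # 600 mistakes per 10,000 items
required_errors = errors_per_batch(0.998)   # 20 mistakes per 10,000 items

# How many times fewer errors the market needs compared with the prototype.
error_reduction = prototype_errors / required_errors  # 30.0

print(prototype_errors, required_errors, error_reduction)
```

Framed this way, the startup isn’t 5.8 points short of its target. It needs to eliminate roughly 29 out of every 30 mistakes its model currently makes.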

The survivors do it backwards. They identify a specific, painful problem first, then work backward to figure out which AI techniques (if any) can actually solve it. Sometimes the answer isn’t even AI—sometimes it’s better data pipelines, smarter APIs, or just good old-fashioned software engineering.

The Infrastructure Reality Check

Here’s something they don’t teach you in machine learning courses: the hardest part of an AI startup isn’t training your models—it’s keeping them running in production. I’ve seen companies burn through their entire Series A just trying to scale their inference pipeline from 100 to 10,000 requests per second.
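A back-of-the-envelope capacity estimate shows how that kind of jump eats a budget. Everything numeric below is a made-up assumption for illustration (50 requests per second per GPU replica, a 70% utilization target, $2 per replica-hour, 730 hours per month); swap in your own measurements before drawing conclusions.

```python
import math


def replicas_needed(target_rps: float, rps_per_replica: float,
                    target_utilization: float = 0.7) -> int:
    """Replicas required to serve target_rps without exceeding the utilization target."""
    usable_rps = rps_per_replica * target_utilization
    return math.ceil(target_rps / usable_rps)


def monthly_cost(replicas: int, hourly_rate: float, hours_per_month: int = 730) -> float:
    """Cost of running the replicas around the clock for one month."""
    return replicas * hourly_rate * hours_per_month


# Hypothetical figures: 50 rps per replica, $2.00 per replica-hour.
for rps in (100, 10_000):
    n = replicas_needed(rps, rps_per_replica=50)
    print(f"{rps:>6} rps -> {n:>3} replicas, ${monthly_cost(n, 2.0):,.0f}/month")
```

Under these assumed numbers the fleet grows from 3 replicas to 286, and the monthly bill from about $4,400 to about $418,000, before counting load balancing, redundancy, or the engineers needed to operate it.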

Consider the infrastructure stack for a typical AI startup. You need data ingestion pipelines, model training infrastructure (probably distributed across multiple GPUs), model versioning and experiment tracking, inference servers that can handle real-time requests, monitoring systems to detect model drift, and rollback mechanisms for when things go sideways. Oh, and all of this needs to be secure, compliant, and cost-effective.

Most founders vastly underestimate this complexity. They prototype on a single machine with a clean dataset, then get blindsided when they need to handle real-world data that’s messy, inconsistent, and arrives at unpredictable intervals. It’s like the difference between writing a hello-world script and building a distributed system that processes millions of transactions. The principles are the same, but every added component brings new failure modes, so the execution complexity grows far faster than the request count.

There’s a reason why companies like Google and Meta still rely on programming languages and architectures from decades ago—stability and predictability matter more than cutting-edge features when you’re running systems at scale.

The Data Quality Death Spiral

Every AI startup eventually faces the same brutal truth: garbage in, garbage out. But here’s the twist—most don’t realize their data is garbage until they’re already in production.

The pattern goes like this: a startup trains their model on carefully curated datasets, achieves impressive benchmark scores, and launches with great fanfare. Then real users start feeding the system real-world data, and performance plummets. Customer complaints roll in. The team scrambles to retrain the model, but the new data is inconsistent with their original training set. Model performance becomes erratic and unpredictable.

I’ve watched companies spend months building sophisticated data cleaning pipelines, only to discover that their core problem wasn’t dirty data—it was data that didn’t actually represent their problem space. For example, a startup building a medical diagnosis tool might train on pristine hospital data, but their actual users are uploading blurry smartphone photos taken in poor lighting conditions.
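One way to catch this kind of mismatch early is to compare the distribution of a simple input feature between training data and live traffic. The sketch below uses the Population Stability Index (PSI), a common drift metric; it is one option among several, not a method the startups above necessarily used. The feature (image brightness scaled to 0..1), the sample values, and the 0.25 alert threshold (a widely quoted rule of thumb) are all assumptions.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a reference sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def fractions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Clamp so out-of-range live values land in the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical brightness values: evenly lit hospital scans vs. dark phone photos.
training_brightness = [i / 100 for i in range(100)]
production_brightness = [i / 400 for i in range(100)]

score = psi(training_brightness, production_brightness)
if score > 0.25:  # rule-of-thumb threshold, treated here as an assumption
    print(f"PSI={score:.2f}: production inputs no longer resemble the training data")
```

A check like this won’t fix unrepresentative data, but it turns “performance plummets and nobody knows why” into an alert that fires before customers start complaining.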

The successful AI startups I know spend at least 60% of their time on data strategy: how to collect it, how to label it, how to validate it, and how to keep it relevant as their product evolves. They build data pipelines like infrastructure—boring, reliable, and thoroughly tested.
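In practice, “boring, reliable, and thoroughly tested” often starts with rejecting bad records at the door. Here is a minimal sketch of that idea; the field names, types, and ranges in `SCHEMA` are hypothetical and would come from your own data contract.

```python
from typing import Any

# Hypothetical schema: field name -> (expected type, min value, max value).
SCHEMA = {
    "patient_age": (int, 0, 120),
    "image_width": (int, 224, 8192),
}


def validate_record(record: dict[str, Any]) -> list[str]:
    """Return a list of human-readable problems; an empty list means the record is valid."""
    problems = []
    for field, (ftype, lo, hi) in SCHEMA.items():
        if field not in record:
            problems.append(f"missing field: {field}")
            continue
        value = record[field]
        # bool is a subclass of int in Python, so exclude it explicitly.
        if not isinstance(value, ftype) or isinstance(value, bool):
            problems.append(f"{field}: expected {ftype.__name__}, got {type(value).__name__}")
        elif not lo <= value <= hi:
            problems.append(f"{field}: {value} outside [{lo}, {hi}]")
    return problems


print(validate_record({"patient_age": 40, "image_width": 1024}))  # no problems
print(validate_record({"patient_age": 300}))                      # two problems
```

Because the validator is a pure function, it is easy to cover with unit tests and to run both at ingestion time and before every retraining job.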

The Talent Trap

The AI talent market is absolutely bonkers right now. Fresh PhDs with machine learning backgrounds are commanding $300K+ starting salaries, and experienced AI engineers can write their own tickets. But here’s the counterintuitive part: hiring the most impressive AI talent often accelerates startup failure.

Why? Because top-tier AI researchers are trained to push the boundaries of what’s possible, not to build stable, maintainable systems that solve mundane business problems. They want to publish papers and attend conferences, not debug data pipelines and optimize inference costs. It’s like hiring a Formula 1 driver to deliver pizza—technically they’re overqualified, but it’s not a good fit for anyone involved.

The startups that survive this phase figure out how to balance research talent with engineering pragmatism. They hire one brilliant AI researcher to guide the technical vision, then surround them with experienced software engineers who know how to build reliable systems. The magic happens at the intersection of cutting-edge research and boring-but-effective engineering.

The Product-Market Fit Mirage

Traditional startups can iterate their way to product-market fit relatively quickly. Build a feature, measure user response, adjust, repeat. AI startups face a much more complex feedback loop because changing the product often means retraining models, which can take weeks or months.
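The cost of that slower loop is easy to put a number on. The arithmetic below assumes a 52-week runway, a one-week cycle for a conventional product, and a six-week cycle when each change needs a retrain; all three figures are illustrative, not measurements.

```python
def iterations_in_runway(runway_weeks: int, cycle_weeks: int) -> int:
    """Number of complete build-measure-adjust cycles that fit in the runway."""
    return runway_weeks // cycle_weeks


# Illustrative assumptions: one year of runway, weekly vs. six-week cycles.
web_app_iterations = iterations_in_runway(52, 1)     # 52
ai_product_iterations = iterations_in_runway(52, 6)  # 8

print(web_app_iterations, ai_product_iterations)
```

With these assumptions, the AI startup gets 8 chances to learn from customers in the time a conventional startup gets 52, so each wrong bet costs proportionally more.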

This creates what I call the “product-market fit mirage.” Early customers get excited about the potential of AI technology and provide encouraging feedback, even when the product doesn’t quite solve their problem yet. Founders interpret this as validation and double down on their current approach, not realizing that excitement about potential isn’t the same as willingness to pay for a solution.

By the time they discover the mismatch, they’ve burned through months of runway and built technical debt that makes pivoting far harder. Unlike a traditional web app where you can refactor the frontend in a weekend, changing an AI product’s core functionality might require rebuilding your entire training pipeline.

The survivors solve this by obsessing over one specific use case until they nail it completely. They resist the temptation to build a general-purpose AI platform and instead become the absolute best solution for one narrow problem. Only then do they think about expanding to adjacent use cases.

Building for the Long Game

The AI startups that make it past their second birthday share one crucial characteristic: they treat AI as a means to an end, not the end itself. They understand that sustainable businesses are built on solving real problems consistently, not on having the most sophisticated technology.

They also embrace the boring fundamentals that make software companies successful: understanding their customers deeply, building reliable systems, managing cash flow carefully, and creating repeatable sales processes. Just as established tech companies balance innovation with operational excellence, successful AI startups learn to balance cutting-edge research with practical business needs.

If you’re building an AI startup, remember this: your users don’t care how clever your algorithms are. They care whether your product solves their problem better than the alternatives. Focus on that, and the technical challenges become much more manageable. Ignore it, and even the most brilliant AI won’t save you from joining the 85% that don’t make it.