AI coding assistants have gotten genuinely impressive, and they are making the average developer measurably faster at shipping code. That part is real. What is also real, and talked about much less, is what you quietly give up every time you accept a suggestion without fully understanding it.

The core problem is not that the tools are bad. It is that fluency and understanding are not the same thing, and autocomplete is very good at producing fluency.

Autocomplete Rewards Acceptance, Not Comprehension

Every time you press Tab to accept a suggestion, you are making a small bet that the generated code is correct, idiomatic, and appropriate for your specific context. Sometimes that bet pays off. But the more capable the tool becomes, the more convincing the suggestions look, and the less you feel the need to verify them.

This is not a flaw in the tool. It is a flaw in how humans respond to fluent output. Research on what psychologists call “automation bias” is consistent here: when a system presents confident-looking output, people defer to it even when they have the knowledge to catch errors. The suggestion looks right. The syntax is valid. It compiles. You move on.

The problem surfaces later, when the code you accepted turns out to be subtly wrong for your use case, or when you need to debug something you never really understood in the first place.
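To make that concrete, here is a small hypothetical sketch in Python; it is not taken from any particular tool. The function names are invented for illustration, and the assumption is that the caller needs duplicates removed while keeping items in first-seen order. The first version is the kind of completion that looks right and runs without error. The second is what that use case actually requires.

```python
def dedupe_suggested(items):
    # A plausible-looking completion: valid, concise, and it does remove duplicates.
    # Under this sketch's assumption (first-seen order matters), it is subtly wrong,
    # because a set makes no promise about iteration order.
    return list(set(items))


def dedupe_ordered(items):
    # dict preserves insertion order (guaranteed since Python 3.7),
    # so each item keeps the position of its first appearance.
    return list(dict.fromkeys(items))


if __name__ == "__main__":
    events = ["b", "a", "b", "c", "a"]
    print(dedupe_suggested(events))  # same elements, order not guaranteed
    print(dedupe_ordered(events))
```

Both functions return the same elements, so a quick test that only checks for duplicates would pass either one. The difference only shows up if you know that order matters in your context, which is exactly the knowledge pressing Tab lets you skip.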

You Learn by Struggling, and You Are Struggling Less

There is a well-documented phenomenon in skill acquisition called “desirable difficulty.” Struggling through a problem, making mistakes, and correcting them is how durable learning happens. Making things easier in the short term often undermines retention and capability in the long run.

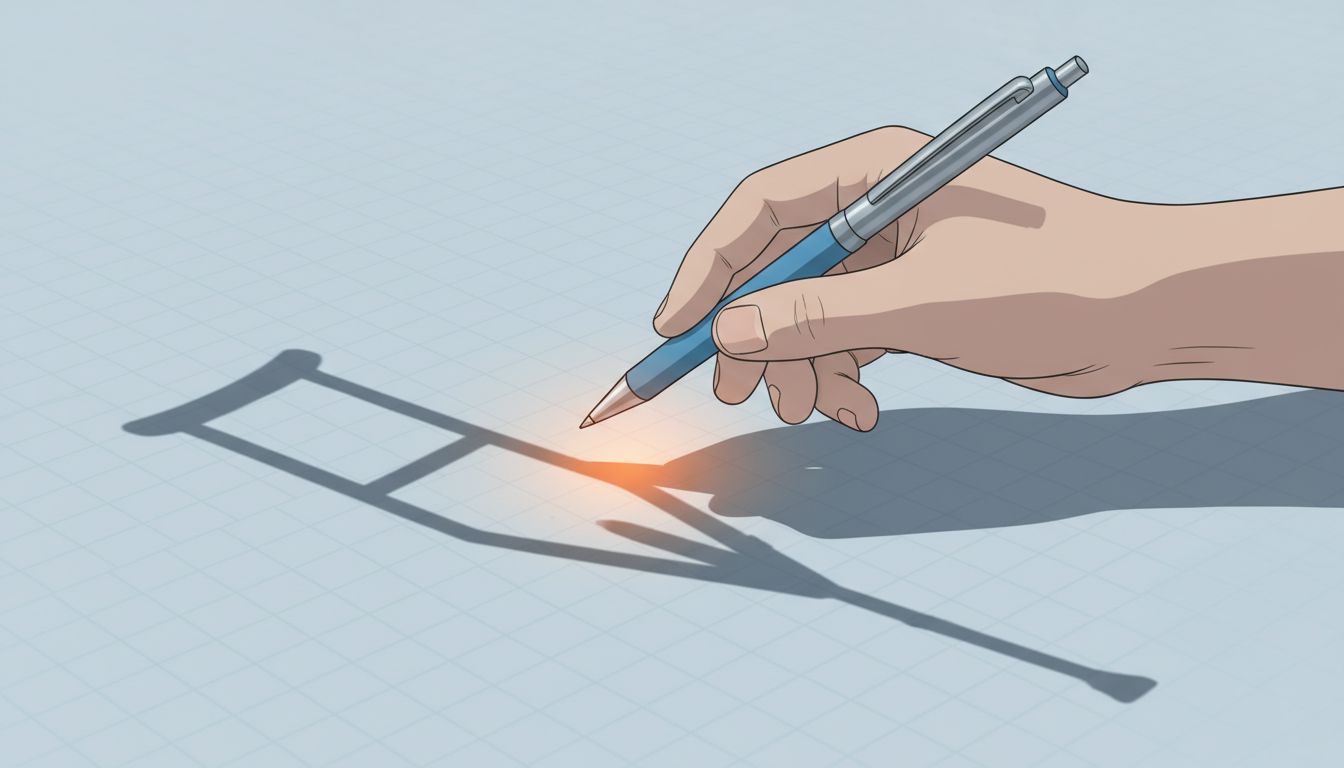

Writing code from scratch is full of desirable difficulty. You look things up. You make wrong guesses and see why they fail. You build a mental model of how the pieces fit together. Autocomplete short-circuits a lot of that. You stop at the moment of uncertainty, a suggestion appears, and you never have to sit with the discomfort long enough to actually learn.

Junior developers adopting these tools early are the most exposed to this effect. They are building their mental models right now. If those models are scaffolded primarily by accepted autocomplete suggestions rather than genuine problem-solving, they are going to find themselves dangerously dependent on the tools when the tools get something wrong in a domain they have never actually understood.

The Code Review Problem Gets Worse, Not Better

Good code review catches logic errors, architectural problems, and decisions that will hurt you in six months. It works because the reviewer brings independent understanding to the code.

When both the author and the reviewer are heavy AI tool users, you lose that independence. The reviewer is looking at code that was generated by a model and is evaluating it with pattern-matching intuitions that were themselves shaped by similar models. Studies on groupthink in decision-making show that shared information sources reduce the chance that any individual will catch what others miss. The same dynamic applies here.

There is also a more mundane problem: AI-generated code tends toward verbosity and a kind of averaged-out stylistic correctness that is easy to skim and hard to read deeply. It looks reviewed even when it has not been. The staging environment problem is already a classic example of a safety theater trap in software development. Review of AI-generated code is heading toward a similar dynamic.

The Counterargument

The reasonable pushback here is that every generation of tooling has raised the same alarm, and every generation of developers has adapted. Compilers replaced hand-written assembly. IDEs with syntax highlighting replaced plain text editors. Stack Overflow normalized looking things up. None of these killed the profession.

That is a fair point, and I do not think AI coding tools are going to produce a generation of useless engineers. But there is a meaningful difference between tools that help you do what you already understand and tools that replace the act of understanding itself. A compiler takes your logic and translates it. Autocomplete supplies the logic. That is a different kind of assistance.

The engineers who will thrive with these tools are the ones who use them to move faster through the parts they already understand, not the ones who use them to skip building that understanding in the first place. The most expensive engineers are expensive precisely because they catch what the tools miss.

What You Can Actually Do About This

None of this means you should stop using the tools. It means you should use them deliberately.

First, hold yourself to a rule: before accepting any suggestion longer than a line or two, you should be able to explain what it does and why it is correct for your context. If you cannot, write it yourself or look it up until you can. Second, protect some of your work time for problems where you do not reach for autocomplete at all. Solve some things slowly. Third, when you review code, actively ask whether you understand the logic or just recognize the pattern. Those are not the same thing.

The tools are going to keep getting better. The suggestions are going to keep getting more convincing. Your ability to evaluate them critically depends entirely on the understanding you build while the suggestions still make you a little uncertain. Protect that uncertainty. It is doing more for you than the acceptance rate ever will.