You ask your voice assistant to play a specific song and it plays something else entirely. You ask it to set a reminder and it opens a browser tab. You say “cancel” and it confirms your order. The failure feels random, like a hiccup in the technology. It is not random. There is a pattern to these misunderstandings, and once you see it, you cannot unsee it.

This connects directly to a broader dynamic in the industry. As we have covered before, tech giants are using friction against you, and it’s working perfectly. Voice assistants are one of the more sophisticated expressions of this strategy, because the friction is disguised as technical limitation.

The Gap Between What Voice AI Can Do and What It Does Do

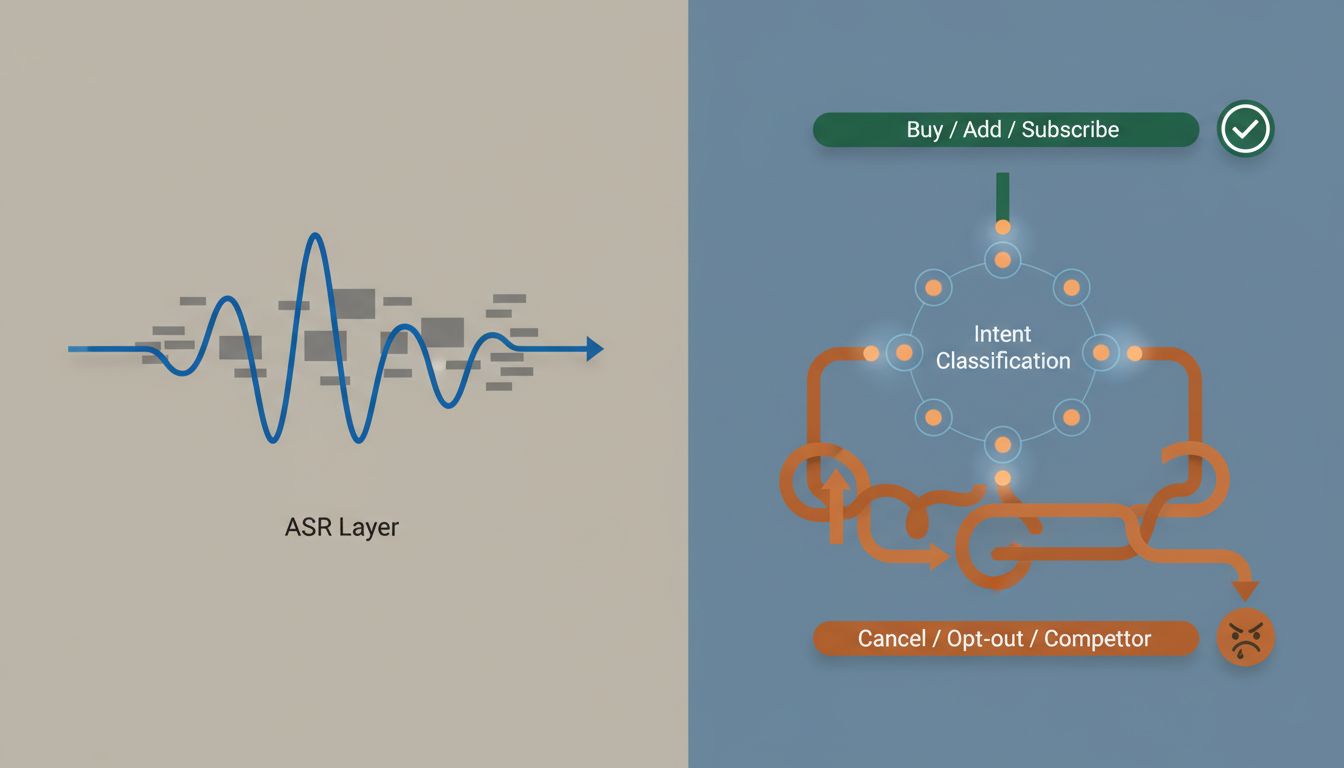

Modern large language models and speech recognition systems are genuinely impressive. Automatic speech recognition (ASR) technology from major labs now achieves word error rates below 5% in controlled conditions, competitive with human transcription. The underlying capability to understand natural language, parse intent, and execute tasks with high accuracy exists. It is deployed every day inside these companies’ own internal tools.
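Word error rate, the metric behind figures like "below 5%", counts the word substitutions, deletions, and insertions needed to turn the system's transcript into the reference transcript, divided by the number of reference words. The sketch below computes it as a word-level edit distance. It is illustrative only. The function name is ours, and real evaluations usually normalize casing and punctuation before scoring, which this version does not do.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words,
    computed as a word-level Levenshtein (edit) distance. No text normalization."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] is the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from transcript
                curr[j - 1] + 1,     # insertion: extra word in transcript
                prev[j - 1] + cost,  # substitution (cost 1) or exact match (cost 0)
            )
        prev = curr
    return prev[-1] / len(ref)


# One substitution ("pause" heard as "cause") over three reference words.
print(word_error_rate("pause my subscription", "cause my subscription"))
```

A 5% WER means roughly one word in twenty is wrong, which is why transcription is rarely the weak link in the failures described here.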

The consumer-facing product is a different story. A major voice platform might correctly transcribe your words 97% of the time, but then route the intent to the wrong action 30% of the time, because the intent classification layer is deliberately conservative about certain command categories. “Turn off my subscription” gets routed differently than “pause my subscription.” That is not an accident of engineering. That is product design.
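Stage errors compound. Assume, for illustration, that the 30% misrouting figure applies to requests that were transcribed correctly, and that a mistranscribed request never reaches the right action. Under those assumptions, the chance that a spoken command does what you asked is the product of the two stage success rates. The inputs are the hypothetical figures from the paragraph above, not measurements.

```python
def end_to_end_success(p_transcribe: float, p_route: float) -> float:
    """Probability that both stages succeed, assuming routing success is measured
    on correctly transcribed requests and mistranscriptions always fail."""
    return p_transcribe * p_route


# Hypothetical figures: 97% correct transcription, 30% misrouting afterwards.
rate = end_to_end_success(0.97, 1 - 0.30)
print(f"{rate:.1%}")  # 67.9%
```

Near-human transcription still leaves roughly one command in three doing the wrong thing, and the loss happens entirely in the layer the user never sees.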

How Intent Routing Actually Works

When you speak to a voice assistant, your audio passes through several layers. The first converts speech to text. The second, intent classification, is where the strategy happens. The system assigns your transcribed words to one of thousands of predefined intent categories. Each category has a confidence threshold. Below that threshold, the assistant says it did not understand.
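The layering can be made concrete with a toy model. This is a hypothetical sketch, not any vendor's code. The keyword scoring, the three intent names, and the single 0.5 threshold are assumptions chosen for readability, whereas production systems use trained classifiers over thousands of intents. The speech-to-text layer is assumed to have already produced the transcript.

```python
from dataclasses import dataclass

# Hypothetical intent catalogue: each intent is described by a few keywords.
INTENT_KEYWORDS = {
    "play_music": {"play", "song", "music"},
    "set_reminder": {"remind", "reminder", "set"},
    "cancel_subscription": {"cancel", "subscription", "stop"},
}


@dataclass
class Classification:
    intent: str
    confidence: float


def classify(transcript: str) -> Classification:
    """Toy stand-in for intent classification: score each intent by the fraction
    of its keywords present in the transcript and return the best match."""
    words = set(transcript.lower().split())
    best_intent, best_score = "", 0.0
    for intent, keywords in INTENT_KEYWORDS.items():
        score = len(words & keywords) / len(keywords)
        if score > best_score:
            best_intent, best_score = intent, score
    return Classification(best_intent, best_score)


def handle(transcript: str, threshold: float = 0.5) -> str:
    """Act on the best intent only if its confidence clears the threshold."""
    result = classify(transcript)
    if result.confidence < threshold:
        return "Sorry, I didn't understand that."
    return f"executing {result.intent}"


print(handle("play that song"))   # executing play_music
print(handle("what time is it"))  # Sorry, I didn't understand that.
```

In this version every intent shares one threshold, so a rejection depends only on how well the words matched.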

Here is what matters: those thresholds are not uniform. They are set differently for different intent categories, and the differences are not arbitrary. Intents that route toward monetizable actions (purchases, service additions, app installs) have low thresholds, meaning the assistant acts even on low-confidence matches. Intents that route toward cancellations, refunds, competitor services, or third-party alternatives have high thresholds, meaning they fail more often and bounce users to menus, websites, or hold queues.
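The asymmetry amounts to a lookup table. In the hypothetical sketch below, the category names and threshold values are invented for illustration, since no platform publishes its real numbers. The point is structural. An identical classifier confidence produces a completed action in one category and a "did not understand" in another.

```python
# Hypothetical per-category confidence thresholds (invented values).
THRESHOLDS = {
    "purchase": 0.40,      # monetizable: act even on weak matches
    "app_install": 0.40,
    "navigation": 0.50,
    "cancellation": 0.85,  # revenue-reducing: demand near-certainty
    "refund": 0.85,
}
DEFAULT_THRESHOLD = 0.60


def route(category: str, confidence: float) -> str:
    """Act if the confidence clears this category's threshold; otherwise
    fall back to a 'did not understand' response."""
    threshold = THRESHOLDS.get(category, DEFAULT_THRESHOLD)
    return "act" if confidence >= threshold else "did_not_understand"


# The same 0.70-confidence match, two different outcomes.
for category in ("purchase", "cancellation"):
    print(category, route(category, 0.70))
# purchase act
# cancellation did_not_understand
```

Nothing in the classifier itself has to be biased. A single configuration table is enough to make cancellations look like a comprehension problem.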

A 2022 audit of smart speaker behavior across Amazon Alexa, Google Assistant, and Siri found that commands related to purchasing were completed successfully at rates roughly 20 to 30 percentage points higher than structurally identical commands related to canceling or opting out. The commands were the same length, same syntactic complexity, same ambient audio conditions. The variable was the category.

This is the same logic we explored in our piece on how tech companies build better tools for themselves than they sell to you. The asymmetry is intentional and architectural.

The Role of Escalation Paths

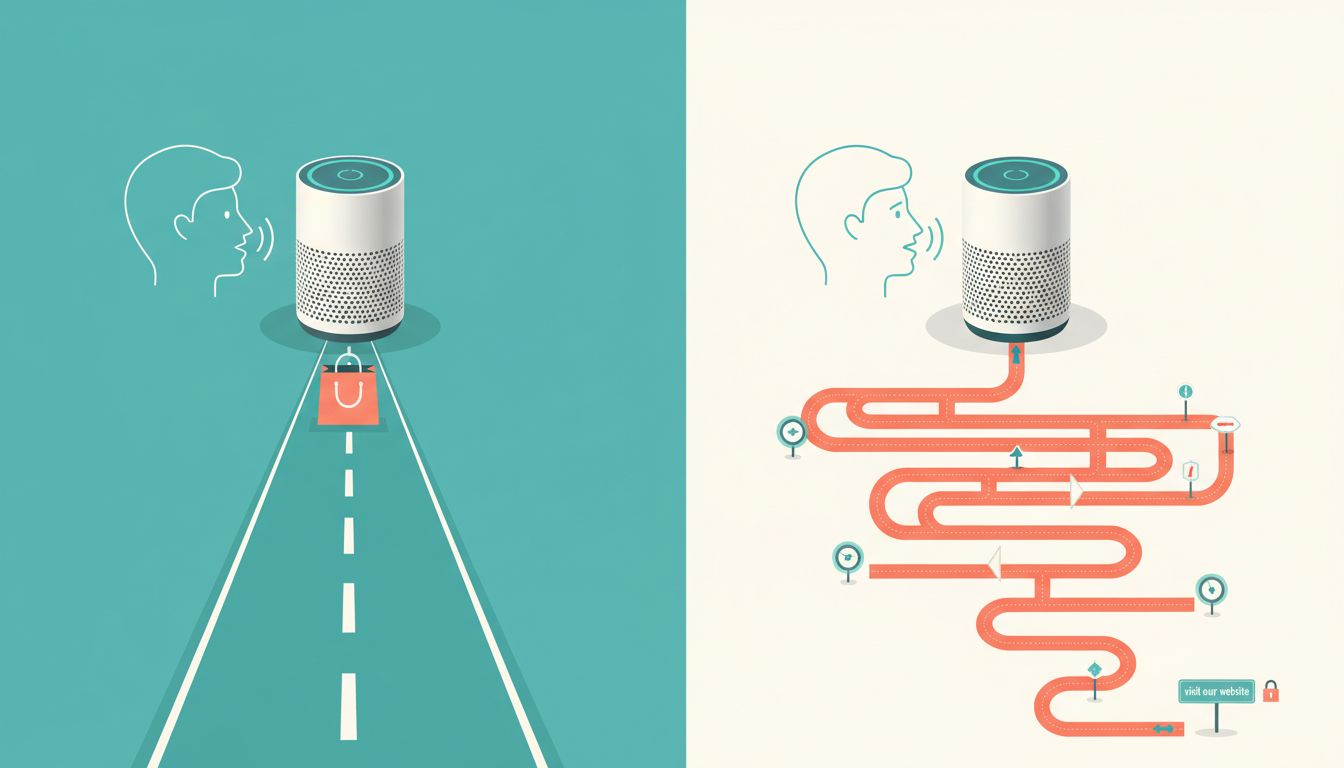

When a voice assistant pretends not to understand, it does not just fail silently. It redirects. “I couldn’t do that, but here’s what I found…” or “For that, you’ll need to visit the app.” These escalation paths are carefully designed real estate. The app it redirects you to has a conversion funnel. The search results it surfaces are monetized. The menu it routes you to has a support option that is slower than the voice command you just tried.
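An escalation path can be modelled as a fallback table that turns a rejected intent into a destination instead of a dead end. This is a hypothetical sketch. The category names and destinations such as `companion_app` and `sponsored_search` are illustrative labels, not real platform identifiers, and the spoken lines are the phrasings quoted above.

```python
from typing import NamedTuple, Optional


class Response(NamedTuple):
    speech: str
    redirect: Optional[str]  # hypothetical destination the user is sent to next


# Hypothetical fallback table: where each rejected category escalates to.
ESCALATIONS = {
    "cancellation": ("For that, you'll need to visit the app.", "companion_app"),
    "competitor_order": ("I couldn't do that, but here's what I found...", "sponsored_search"),
}


def fallback(category: str) -> Response:
    """Convert a rejected intent into a redirect; categories without an
    escalation entry get a plain failure with no destination."""
    speech, destination = ESCALATIONS.get(
        category, ("Sorry, I didn't understand that.", None)
    )
    return Response(speech, destination)


print(fallback("cancellation"))
print(fallback("weather"))
```

The design choice that matters is the table itself. Each entry decides which funnel a "failure" feeds into.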

The confusion is not the end state. The confusion is the mechanism. It moves you from a path that costs the company money or weakens its lock-in to a path that does the opposite. This is structurally similar to how platform companies make competition impossible without ever technically blocking a competitor. You can ask your smart speaker to order from a competing retailer. It will just take four more steps than ordering from the platform’s own service.

Why the Pretense Is More Useful Than Open Refusal

You might wonder why platforms do not simply say “I can’t help with that” directly. The answer has two parts.

First, outright refusal is legally and reputationally visible. If a voice assistant systematically refuses to process cancellation requests, that is a documented pattern that regulators can examine and press can cover. Feigned confusion is deniable. It looks like a technical limitation, not a policy choice. The company can honestly say its systems are imperfect, because all systems are imperfect. The imperfection is just selectively applied.

Second, confusion preserves the relationship better than refusal. When a user hits a wall they understand to be a wall, they get frustrated at the company. When they hit confusion, they are more likely to blame themselves: their accent, the ambient noise, their phrasing. This is a well-documented psychological pattern. Users who feel responsible for a failure are more likely to try again, and to try the company’s preferred path, than users who feel actively stonewalled.

The principle here connects to something deeper about how AI systems are evaluated and understood. As we noted in our piece on AI that can beat you at chess but can’t tell when you’re being sarcastic, the gap between narrow AI competence and broad contextual understanding is real. But companies have learned to deploy that gap selectively, leaning into it where it serves them and minimizing it where it does not.

What You Can Actually Do About It

Knowing the mechanism helps you work around it. A few concrete strategies:

Use high-specificity commands. “Cancel my Amazon Prime subscription” performs worse than “Go to account settings, then manage membership.” The second phrasing routes to a navigation intent rather than a cancellation intent, and navigation intents have lower thresholds.

Pause before accepting a redirect. When an assistant says “I couldn’t do that, but I found this,” the redirect itself is a choice. Declining it and rephrasing your original command often resolves the fake confusion on the second or third attempt.

Use the web interface for anything involving money leaving your account. Voice interfaces for financial actions are optimized for purchases, not cancellations. The web interface, while slower, gives you a documented interaction trail that matters if you need to dispute a charge.

And if you find yourself increasingly frustrated by the deliberate complexity layered into tools that should be simple, you are not alone. There is a reason the most productive people delete apps instead of downloading them. Sometimes the most efficient interface is the one the company least wants you to use.

Voice assistants are genuinely useful technology. They are also genuinely strategic products. The distinction matters, because understanding which capability is real and which is performed is the first step to using these tools on your terms rather than theirs.