The simple version

Your app is probably slow because of how it’s structured, not what language it’s written in. Rewriting it in a faster language copies those structural problems into a new codebase while adding months of new bugs.

Where the intuition comes from

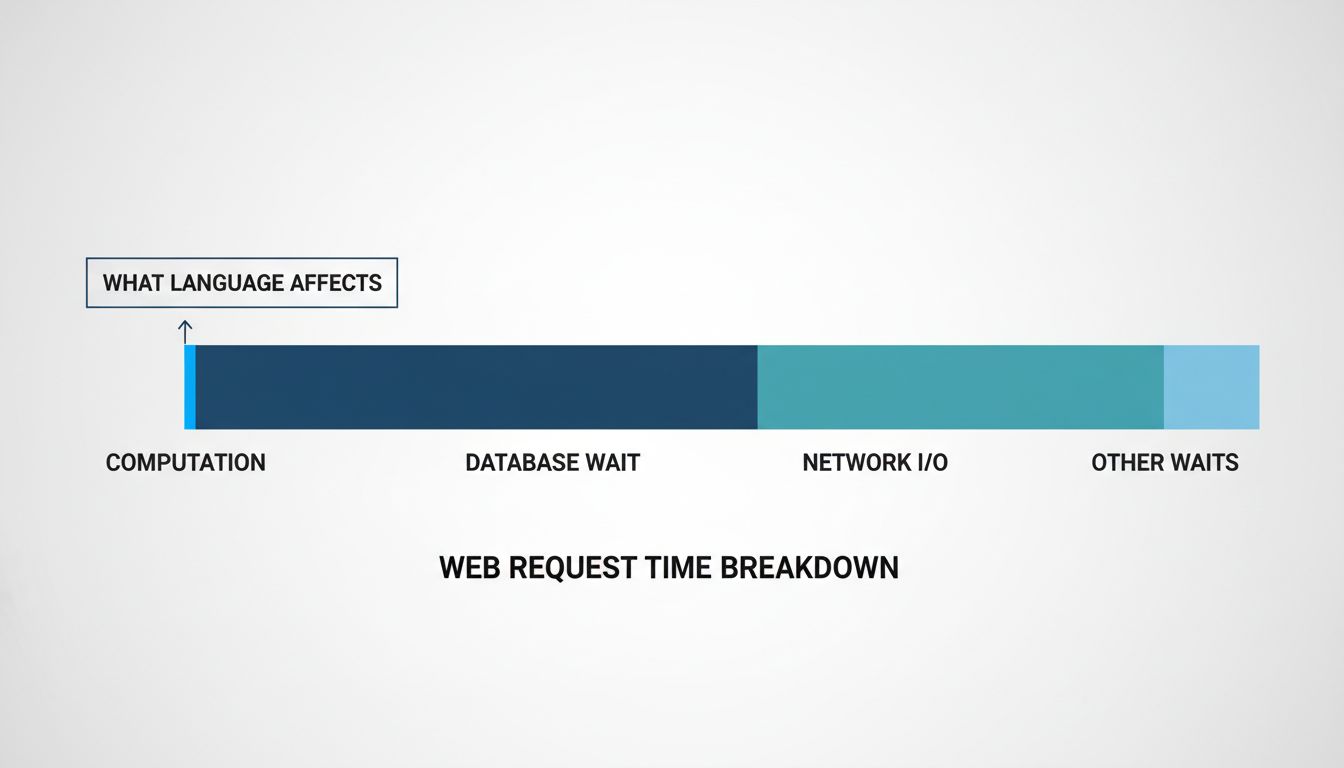

The intuition isn’t crazy. Rust and C++ execute instructions faster than Python or Ruby. Benchmarks show this clearly. If you’re doing heavy numerical computation, the language absolutely matters. But most applications don’t spend most of their time executing instructions. They spend time waiting.

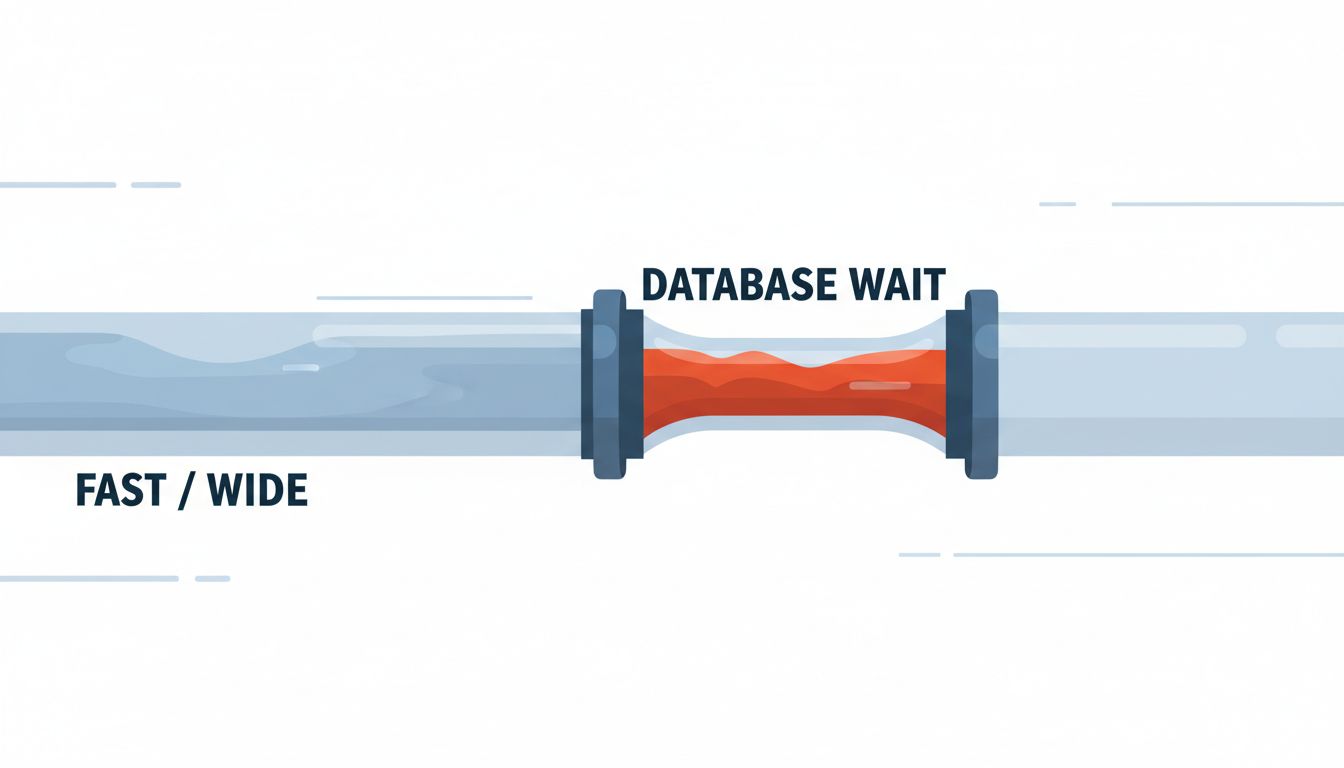

Waiting for a database query to return. Waiting for a network response. Waiting for a disk read. Waiting for a lock to release. These pauses swallow the speed advantages of any language. Rewriting your Python service in Go can make the 2% of time spent on pure computation ten times faster, while leaving the 98% of time spent waiting completely unchanged.

This is Amdahl’s Law in action. The speedup you get from improving part of a system is limited by how much of the total time that part actually takes. If your endpoint spends 5ms computing and 200ms waiting on a database, a 10x faster language cuts the compute to 0.5ms and saves 4.5ms. The request goes from 205ms to 200.5ms, about 2% faster. That’s not nothing, but it’s not the fix you were picturing.
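
A few lines of Python can check that arithmetic. This is a minimal sketch. `request_latency_ms` and `overall_speedup` are hypothetical helper names, and the 5ms/200ms split is the example’s assumption, not a measurement.

```python
def request_latency_ms(compute_ms: float, wait_ms: float, compute_speedup: float) -> float:
    """Latency after making only the compute portion `compute_speedup` times faster."""
    # Time spent waiting on the database, network, or disk is unchanged by the language.
    return compute_ms / compute_speedup + wait_ms


def overall_speedup(compute_ms: float, wait_ms: float, compute_speedup: float) -> float:
    """Amdahl's Law: total time before divided by total time after."""
    return (compute_ms + wait_ms) / request_latency_ms(compute_ms, wait_ms, compute_speedup)


if __name__ == "__main__":
    before = 5.0 + 200.0
    after = request_latency_ms(5.0, 200.0, 10.0)
    print(f"{before} ms -> {after} ms, saved {before - after} ms")
    print(f"overall: {overall_speedup(5.0, 200.0, 10.0):.3f}x")
    # Even infinitely fast compute leaves the 200 ms of waiting in place.
    print(f"ceiling: {overall_speedup(5.0, 200.0, float('inf')):.3f}x")
```

Passing `float("inf")` as the compute speedup shows the ceiling. Even infinitely fast compute only gets this request to 205/200 = 1.025x, because all of the waiting remains.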

The rewrite introduces new slowness

Here’s the part that actually makes rewrites slower in practice: you don’t just copy your code, you copy your assumptions. And you lose all the accumulated tuning.

Production code is slow in interesting ways. The weird N+1 query that only shows up when a user has more than fifty items in their cart. The connection pool that’s sized for the traffic you had two years ago. The caching layer someone added in 2019 that you forgot about. These are the things that actually determine your application’s performance profile.

When you rewrite, you rebuild the straightforward version of your system. That version hasn’t been through two years of production incidents and performance fixes. It’s a clean, unoptimized starting point. So you’re not comparing your tuned Python app against your tuned Rust app. You’re comparing your tuned Python app against your fresh Rust app, and the Rust app loses.

Teams regularly discover this six months after completing a rewrite. The new system is fast in benchmarks and slow in production because nobody has yet found and fixed the production-specific pathologies.

What actually determines application speed

Four things matter more than language choice for most web applications:

Query patterns. How many database queries does a single request generate? Are you loading data you don’t need? Are you missing indexes on columns you filter by? A single missing index on a large table can turn a 10ms query into a 4-second one. No language change touches this.
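
The sketch below shows both problems using Python’s built-in `sqlite3` module and an in-memory database. The `users`/`orders` schema and the function names are hypothetical; what matters is the shape of the queries. `set_trace_callback` counts the statements each approach sends. `EXPLAIN QUERY PLAN` shows whether the per-user lookup scans the whole table or uses an index.

```python
import sqlite3
from typing import Callable, Dict, Tuple

ORDERS_QUERY = "SELECT SUM(total_cents) FROM orders WHERE user_id = ?"


def make_db(users: int = 50, orders_per_user: int = 3) -> sqlite3.Connection:
    # Hypothetical schema for illustration only; note there is no index on orders.user_id yet.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, total_cents INTEGER)")
    for u in range(users):
        conn.execute("INSERT INTO users (id, name) VALUES (?, ?)", (u, f"user{u}"))
        conn.executemany(
            "INSERT INTO orders (user_id, total_cents) VALUES (?, ?)",
            [(u, 999)] * orders_per_user,
        )
    conn.commit()
    return conn


def totals_n_plus_one(conn: sqlite3.Connection) -> Dict[int, int]:
    # 1 query for the user list, then 1 more query per user: N+1 round trips.
    totals = {}
    for (user_id,) in conn.execute("SELECT id FROM users").fetchall():
        totals[user_id] = conn.execute(ORDERS_QUERY, (user_id,)).fetchone()[0]
    return totals


def totals_one_query(conn: sqlite3.Connection) -> Dict[int, int]:
    # The same result from a single aggregated JOIN.
    rows = conn.execute(
        "SELECT u.id, SUM(o.total_cents) FROM users u "
        "LEFT JOIN orders o ON o.user_id = u.id GROUP BY u.id"
    ).fetchall()
    return dict(rows)


def count_queries(conn: sqlite3.Connection, fn: Callable) -> Tuple[object, int]:
    # The trace callback is invoked for each SQL statement the backend executes.
    statements = []
    conn.set_trace_callback(statements.append)
    try:
        result = fn(conn)
    finally:
        conn.set_trace_callback(None)
    return result, len(statements)


def query_plan(conn: sqlite3.Connection) -> str:
    # The last column of each EXPLAIN QUERY PLAN row is a human-readable description.
    rows = conn.execute("EXPLAIN QUERY PLAN " + ORDERS_QUERY, (1,)).fetchall()
    return " ".join(str(row[-1]) for row in rows)


if __name__ == "__main__":
    conn = make_db()
    print("N+1 queries:", count_queries(conn, totals_n_plus_one)[1])
    print("JOIN queries:", count_queries(conn, totals_one_query)[1])
    print("plan without index:", query_plan(conn))
    conn.execute("CREATE INDEX idx_orders_user_id ON orders (user_id)")
    print("plan with index:", query_plan(conn))
```

With 50 users the N+1 version issues 51 queries and the JOIN issues one. SQLite runs in-process, which hides the real cost. Against a database server, each of those queries is also a network round trip.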

I/O concurrency. If your service calls three external APIs in sequence when it could call them in parallel, you’re doing up to 3x the necessary waiting. Sequential calls cost the sum of their latencies, while parallel calls cost roughly the slowest one. This is an architectural decision, not a language one. Python’s asyncio, Node’s event loop, and Go’s goroutines are all capable of fixing this. So is restructuring your calls in synchronous code.
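
Here is a sketch of the difference using `asyncio`, assuming three calls of about 100ms each. `fake_api_call` is a hypothetical stand-in that sleeps instead of hitting the network. The timings illustrate the pattern, not any real service.

```python
import asyncio
import time
from typing import List, Sequence, Tuple


async def fake_api_call(name: str, delay_s: float) -> str:
    # Hypothetical stand-in for an HTTP call: asyncio.sleep yields to the event loop like network I/O.
    await asyncio.sleep(delay_s)
    return name


async def call_sequentially(delays: Sequence[float]) -> List[str]:
    # Each call starts only after the previous one finishes: total is about sum(delays).
    results = []
    for i, d in enumerate(delays):
        results.append(await fake_api_call(f"api{i}", d))
    return results


async def call_concurrently(delays: Sequence[float]) -> List[str]:
    # gather runs all calls at once: total is about max(delays); results keep argument order.
    return list(await asyncio.gather(*(fake_api_call(f"api{i}", d) for i, d in enumerate(delays))))


def timed(strategy, delays: Sequence[float]) -> Tuple[List[str], float]:
    start = time.perf_counter()
    results = asyncio.run(strategy(delays))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    for strategy in (call_sequentially, call_concurrently):
        results, elapsed = timed(strategy, [0.1, 0.1, 0.1])
        print(f"{strategy.__name__}: {results} in {elapsed:.2f}s")
```

In synchronous code, `concurrent.futures.ThreadPoolExecutor` gives the same overlap for blocking calls: submit all three, then collect the results.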

Memory allocation patterns. Excessive garbage collection, unnecessary data copying, holding large objects in memory longer than needed. These hurt performance in any language, and they’re usually fixable without changing languages.
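
One common pattern is keeping a large intermediate collection alive when it only needs to be consumed once. The standard library’s `tracemalloc` can show the cost. This illustrative sketch uses hypothetical function names. Peak numbers vary by Python version and platform, so it compares the two approaches rather than quoting figures.

```python
import tracemalloc
from typing import Callable


def peak_traced_bytes(fn: Callable[[], object]) -> int:
    """Run fn() and return the peak Python heap usage tracemalloc recorded during the call."""
    tracemalloc.start()
    try:
        fn()
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return peak


def total_materialized(n: int) -> int:
    # Builds the full list of n squares before summing: every value is alive at once.
    squares = [i * i for i in range(n)]
    return sum(squares)


def total_streamed(n: int) -> int:
    # A generator expression hands sum() one value at a time, so each can be freed after use.
    return sum(i * i for i in range(n))


if __name__ == "__main__":
    n = 200_000
    print("list peak bytes:     ", peak_traced_bytes(lambda: total_materialized(n)))
    print("generator peak bytes:", peak_traced_bytes(lambda: total_streamed(n)))
```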

Caching. The fastest computation is one you don’t do. If you’re recalculating something on every request that changes once per hour, you have a caching opportunity that will improve performance by orders of magnitude more than a language change.
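
Here is a minimal time-to-live cache, assuming the value may be up to an hour stale. `ttl_cache` is a hypothetical helper, not a standard-library API; `functools.lru_cache` has no expiry. The sketch is not thread-safe and never evicts old keys. A production version would need a lock and a size bound, or a shared cache such as Redis.

```python
import functools
import time
from typing import Any, Callable, Dict, Tuple


def ttl_cache(ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
    """Hypothetical decorator: reuse a result for ttl_seconds per (hashable) argument tuple."""
    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        entries: Dict[Tuple[Any, ...], Tuple[float, Any]] = {}

        @functools.wraps(fn)
        def wrapper(*args: Any) -> Any:
            now = clock()
            cached = entries.get(args)
            if cached is not None and now - cached[0] < ttl_seconds:
                return cached[1]              # still fresh: skip the expensive work
            value = fn(*args)                 # missing or expired: recompute
            entries[args] = (now, value)
            return value

        return wrapper
    return decorator


if __name__ == "__main__":
    calls = 0

    @ttl_cache(ttl_seconds=3600)
    def hourly_report(region: str) -> str:
        # Stand-in for an expensive computation whose result changes about once an hour.
        global calls
        calls += 1
        return f"report for {region}"

    for _ in range(1000):
        hourly_report("eu")
    print(f"computed {calls} time(s) for 1000 requests")
```

The `clock` parameter exists so the expiry can be tested without waiting an hour.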

Fix these and your Python app might be fast enough. Break any of them and your Rust app will still feel sluggish.

When the language actually is the problem

There are genuine cases where language choice is the bottleneck, and you should know what they look like.

If your service does CPU-intensive work on every request (image processing, video encoding, running ML inference, cryptographic operations), compute time starts to matter in a real way. Scientific computing and data processing pipelines that are genuinely computation-bound benefit from lower-level languages. Real-time systems with hard latency requirements can be affected by garbage collection pauses in ways that matter.

The tell is your profiler output. If you profile your application and find that execution time is dominated by your own code doing computation, rather than waiting on I/O or sitting in framework overhead, you might have a language bottleneck. If your profiler shows you waiting on network calls and database queries, you don’t.
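
Before reaching for a full profiler, a crude first check is to compare CPU time with wall-clock time for one request. This heuristic sketch is not a substitute for profiling. `cpu_share` is a hypothetical helper, and `time.process_time` counts CPU across all threads of the process, so background threads can inflate it.

```python
import time
from typing import Callable, Tuple


def cpu_share(fn: Callable[[], object]) -> Tuple[float, float, float]:
    """Run fn() once and return (wall_seconds, cpu_seconds, cpu_fraction).

    A fraction near 1.0 means the call was busy computing; near 0.0 means it spent
    most of its time waiting (I/O, locks, sleeps). Several busy threads can push it above 1.0.
    """
    wall_start = time.perf_counter()
    cpu_start = time.process_time()
    fn()
    cpu = time.process_time() - cpu_start
    wall = time.perf_counter() - wall_start
    return wall, cpu, (cpu / wall if wall > 0 else 0.0)


if __name__ == "__main__":
    # Simulated I/O wait versus pure computation.
    for label, work in [
        ("waiting", lambda: time.sleep(0.2)),
        ("computing", lambda: sum(i * i for i in range(2_000_000))),
    ]:
        wall, cpu, share = cpu_share(work)
        print(f"{label}: wall={wall:.3f}s cpu={cpu:.3f}s cpu_share={share:.0%}")
```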

This is also where targeted rewrites make sense over full rewrites. Discord’s well-documented move of a single latency-sensitive service from Go to Rust, after profiling identified a specific garbage collection issue in that service, is a good model. They didn’t rewrite everything. They found the specific place where the language was causing a measurable problem and changed that piece.

The practical path forward

Before you start a rewrite, spend two weeks doing this instead.

Profile your application under real production load. Not synthetic benchmarks. Find out where time is actually going. If you don’t have good observability set up, add it first. The article “Six things developers get wrong about latency vs throughput” is worth reading before you start interpreting what you find.
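
Here is a minimal sketch with the standard library’s deterministic profiler, `cProfile`, which is reasonable for reproducing one slow request in staging. It adds overhead to every function call, so under live production load a sampling profiler is usually the lighter option. `slow_endpoint` is a hypothetical handler whose `time.sleep` stands in for a database wait.

```python
import cProfile
import io
import pstats
import time


def slow_endpoint() -> int:
    # Hypothetical handler: a little computation plus a simulated 100 ms database wait.
    total = sum(range(10_000))
    time.sleep(0.1)  # stand-in for waiting on a query
    return total


def profile_report(fn, limit: int = 5) -> str:
    """Profile a single call of fn and return the top entries sorted by cumulative time."""
    profiler = cProfile.Profile()
    profiler.enable()
    try:
        fn()
    finally:
        profiler.disable()
    out = io.StringIO()
    pstats.Stats(profiler, stream=out).sort_stats("cumulative").print_stats(limit)
    return out.getvalue()


if __name__ == "__main__":
    print(profile_report(slow_endpoint))
```

In the report, the `time.sleep` entry carries nearly all of the cumulative time. That is what an I/O-bound endpoint looks like.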

Look at your slowest endpoints. How many queries do they generate? Pull up your database’s slow query log. Check whether your connection pool is sized appropriately for your concurrency. Look for sequential I/O calls that could be parallelized.
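
For the connection-pool check, Little’s Law gives a rough lower bound: the average number of items in a system equals the arrival rate × the average time each spends there. This sketch assumes you know your request rate, queries per request, and the average time a query holds a connection. `min_pool_size` and its 1.5x `headroom` are hypothetical choices, not tuned values. The total across all app instances also has to stay under the database’s own connection limit.

```python
import math


def min_pool_size(requests_per_s: float, queries_per_request: float,
                  avg_query_s: float, headroom: float = 1.5) -> int:
    """Rough connection-pool lower bound from Little's Law, L = lambda * W.

    lambda: queries arriving per second; W: seconds each query holds a connection;
    L: connections busy on average. headroom is an assumed burst margin, not a tuned value.
    """
    queries_per_s = requests_per_s * queries_per_request   # lambda
    busy_on_average = queries_per_s * avg_query_s           # L = lambda * W
    return math.ceil(busy_on_average * headroom)


if __name__ == "__main__":
    # Hypothetical traffic: 200 req/s, 4 queries per request, 15 ms per query.
    print(min_pool_size(200, 4, 0.015))
```

With those hypothetical numbers, about 12 connections are busy on average, so `min_pool_size(200, 4, 0.015)` returns 18. A pool well below that figure forces requests to wait for a free connection.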

Fix the three most obvious things you find. Measure again. In most cases, this gets you to acceptable performance without touching your language at all.
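
Measuring again is most convincing when the same statistic is compared before and after each fix. Here is a sketch using `statistics.quantiles` from the standard library. `p95` and `compare_p95` are hypothetical helpers, and the latency samples would come from your own request logs or load tests.

```python
import statistics
from typing import Sequence


def p95(latencies_ms: Sequence[float]) -> float:
    """95th-percentile latency from raw per-request samples."""
    # quantiles(n=100) returns the 99 cut points P1..P99, so index 94 is P95.
    return statistics.quantiles(latencies_ms, n=100, method="inclusive")[94]


def compare_p95(before_ms: Sequence[float], after_ms: Sequence[float]) -> str:
    before, after = p95(before_ms), p95(after_ms)
    change = (after - before) / before
    return f"P95 before={before:.1f}ms after={after:.1f}ms change={change:+.1%}"
```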

If you do this work and your profiler still shows execution time dominated by your own computation code, then a targeted rewrite of that specific component is worth considering. But a full-application rewrite should be a last resort, not a first response to a slow P95.

The seductive thing about rewrites is that they feel like progress. You’re building something, making decisions, moving fast. But a rewrite that ships in nine months and performs the same as what you had is nine months of opportunity cost. Profile first. You’ll almost always find the answer is something more boring and more fixable than the language.