What A/B Testing Actually Is

A/B testing, in its original form, is a sensible idea. You have two versions of something (a button color, a checkout flow, a page layout) and you want to know which one performs better. So you randomly split your users into two groups, show each group a different version, measure the outcome you care about, and pick the winner. It’s essentially a controlled experiment, the same structure a pharmaceutical trial uses, adapted for software.

The mechanics are straightforward. You assign each incoming user to a bucket (usually by hashing their user ID or session token, so the same user always lands in the same variant), you log which variant they saw, and you measure a target metric against a baseline. If the difference is statistically significant, conventionally a p-value below 0.05 (roughly: if the two variants actually performed the same, a gap at least this large would show up by chance less than 5% of the time), you ship the winner. Most mature engineering organizations run dozens or hundreds of these simultaneously using frameworks like Facebook’s PlanOut, Google’s Overlapping Experiment Infrastructure, or Optimizely.
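To make those two mechanics concrete, here is a minimal sketch of deterministic bucketing and a significance check. It assumes SHA-256 as the hash and a pooled two-proportion z-test as the statistic; the function names, the experiment name, and the conversion counts are hypothetical, and this is not how PlanOut, Optimizely, or Google’s system is actually implemented.

```python
import hashlib
import math


def assign_variant(user_id: str, experiment: str, variants: tuple[str, ...] = ("A", "B")) -> str:
    """Deterministically map a user to one variant of a given experiment."""
    # Hash the experiment name together with the user ID so the same user gets a
    # stable assignment within one experiment but independent ones across experiments.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    return variants[int(digest, 16) % len(variants)]


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for a difference in conversion rates (pooled two-proportion z-test)."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        # Everyone or no one converted in both groups: no evidence of a difference.
        return 1.0
    z = (rate_b - rate_a) / std_err
    # For a standard normal Z, P(|Z| >= |z|) = erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))


if __name__ == "__main__":
    print(assign_variant("user-42", "checkout_flow_v2"))
    # Hypothetical counts: 500 of 10,000 users converted on A, 580 of 10,000 on B.
    print(two_proportion_p_value(500, 10_000, 580, 10_000))
```

With those hypothetical counts the p-value comes out around 0.012, below the conventional 0.05 cutoff, so B would be declared the winner. Salting the hash with the experiment name is what lets one user sit in many concurrent experiments without their assignments being correlated.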

That’s the textbook version. The problem is what happens when you change the thing being optimized.

When the Metric Is Your Attention, Not Your Satisfaction

Here is where the engineering story gets uncomfortable. A/B testing is only as ethical as the metric you’re optimizing for. If you’re testing two versions of a checkout flow and measuring completion rate, you’re probably trying to reduce friction for users who already want to buy something. That’s benign. But if you’re testing two versions of a notification system and measuring “daily active users” or “time on platform,” you’re in different territory.

The Facebook emotional contagion study, run in January 2012, is the canonical example of this going wrong publicly. Facebook modified the content shown to roughly 700,000 users (without explicit consent beyond the terms of service) to test whether emotional tone in the News Feed could influence users’ own emotional states. It could, if only slightly. Users who saw fewer positive posts went on to write fewer positive and more negative posts themselves, and users who saw fewer negative posts showed the reverse. The study was published in the Proceedings of the National Academy of Sciences in 2014, which is how the public found out it happened at all.

Facebook’s defense was that this fell under their terms of service. Legally they were probably right. Ethically, the argument falls apart quickly. Nobody reading a social network’s terms of service expects to be a subject in a published psychological study. “We reserve the right to use your data for research” does not communicate “we will manipulate what you see to observe changes in your mental state.”

The contagion study was unusual only in being documented and disclosed. The underlying practice, running behavioral experiments on users without informed consent, is routine across the industry.

The Consent Problem Is Structural, Not Incidental

Let me be precise about what informed consent means in a medical or research context, because the contrast with tech is instructive. An IRB (Institutional Review Board) is the oversight body that approves human subjects research at academic and medical institutions. Before a study runs, researchers must describe what participants will experience, what data will be collected, what risks exist, and how participants can withdraw. Participants must affirmatively agree.

Tech companies running product experiments are not subject to IRB review, and no external body plays an equivalent role. The “consent” that exists lives in terms of service documents that the Federal Trade Commission’s own research has found almost no one reads in full. One study by Aleecia McDonald and Lorrie Faith Cranor at Carnegie Mellon estimated that reading every privacy policy you encounter in a year would take roughly 76 work days. Nobody does this. The terms of service model of consent is, functionally, not consent.

This matters because the scope of experimentation is enormous. Netflix has publicly described testing thumbnail images, copy, and content ordering. Google runs experiments across search, ads, and Maps simultaneously. Amazon tests pricing, page layout, and recommendation algorithms continuously. Every time you use one of these products, there is a non-trivial probability you are in an active experiment. You were enrolled automatically. You cannot opt out. You will not be told when you exit the experiment or what the results were.

For most product experiments, this is a low-stakes situation. Whether a button is blue or green probably doesn’t matter to you. But the same infrastructure that tests button colors also tests notification frequency designed to create habitual checking behavior, default settings engineered to maximize data sharing, and pricing displays structured to make subscription cancellation feel more costly than it is.

The Architecture of Manipulation

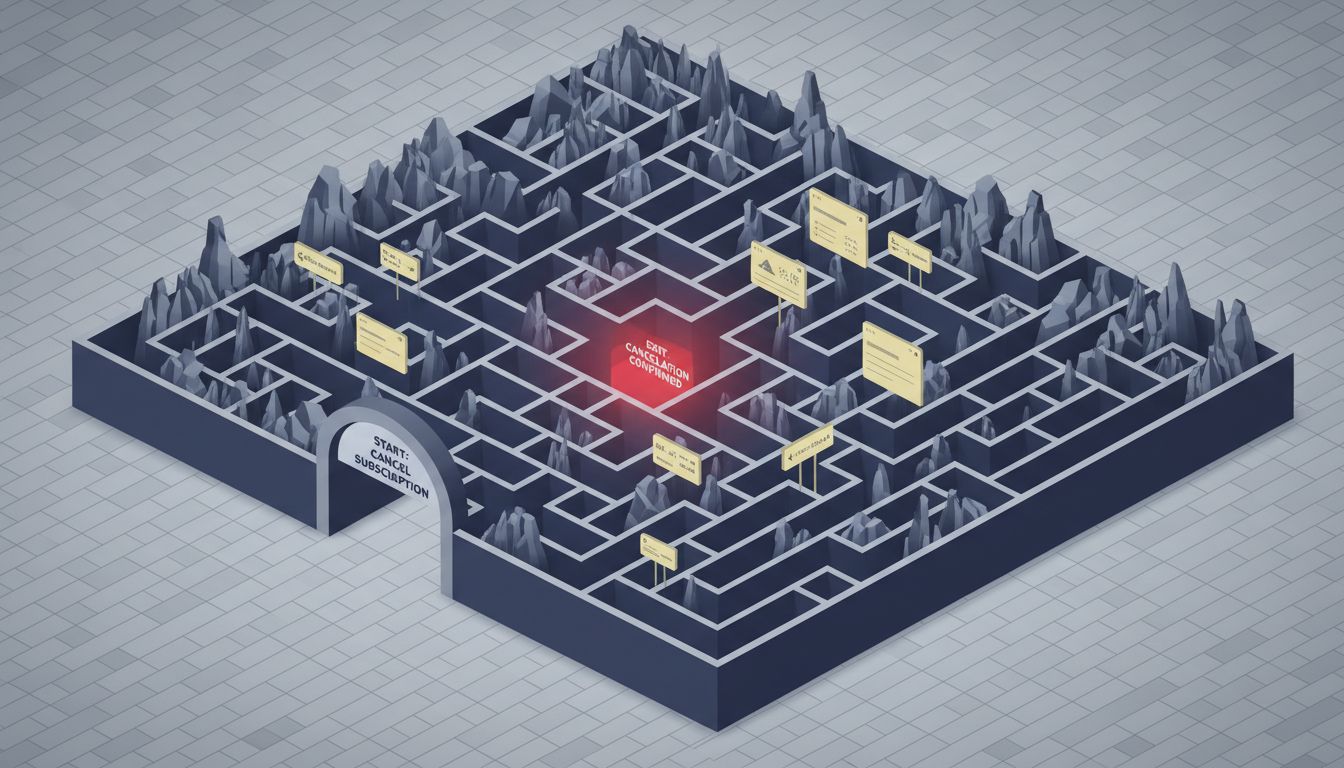

Dark patterns (interface choices deliberately made to work against the user’s interests) and A/B testing are a natural pairing, and this is where the technical picture gets genuinely concerning.

Consider a subscription cancellation flow. A company can A/B test: how many steps are required to cancel, whether a “pause instead” option appears, what emotional framing surrounds the cancel button (“Cancel my subscription” versus “Give up my benefits”), whether a retention offer appears and at what point, and how visible the final confirmation is. Each individual test might produce a result that looks like an engagement or retention win by the product team’s metrics. The aggregate result is a flow deliberately engineered to make users who want to leave feel friction, guilt, and confusion.

This is not hypothetical. The FTC has taken action against companies including Amazon, whose Prime cancellation process (called “Iliad” internally, a name that inadvertently captures the intended experience) required multiple screens and obscured the final cancel option. The FTC’s complaint against Amazon, filed in 2023, alleges a deliberate internal process in which employees who tried to simplify the cancellation flow were overruled by leadership because simplification hurt retention metrics.

The A/B testing framework is what makes this scalable and deniable. Each individual decision looks like an optimization. The pattern only becomes visible when you look at the cumulative design, which requires either internal documents or regulatory investigation to surface.

Why Engineers Build This Anyway

I want to address this honestly rather than just identifying a villain. Most of the engineers building these systems are not thinking “I am going to manipulate users.” They’re thinking about their metrics, their sprint goals, and whether the test reached statistical significance.

The problem is that A/B testing infrastructure creates an abstraction layer between individual engineers and the downstream effects on users. You’re working on a notification system. Your job is to improve D7 retention (the percentage of users still active seven days after signing up). You run a test that increases notification frequency for one cohort. Retention improves. You ship the winning variant. You don’t see the users who started finding the product annoying, turned off notifications, or developed mild anxiety about the red badge count.
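The definition of D7 retention fits in a few lines, and writing it out shows why the harms described above stay invisible. This is a minimal sketch assuming “active on day 7” means at least one recorded session exactly seven days after signup (some teams use a window instead); the function name and data shapes are hypothetical.

```python
from datetime import date, timedelta


def d7_retention(signups: dict[str, date], active_days: dict[str, set[date]]) -> float:
    """Fraction of users who were active exactly seven days after their signup date."""
    if not signups:
        return 0.0
    # Only activity logs are consulted: a user who muted notifications out of
    # irritation but still opened the app on day 7 counts exactly like a happy one.
    retained = sum(
        1
        for user, signup_day in signups.items()
        if signup_day + timedelta(days=7) in active_days.get(user, set())
    )
    return retained / len(signups)
```

Nothing in the inputs records annoyance, disabled notifications, or anxiety, so no amount of optimizing this number can account for them.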

This is a feedback loop problem. The metrics that are easy to measure (clicks, sessions, retention, revenue) get optimized. The things that are hard to measure (user wellbeing, trust, long-term relationship with the product) don’t enter the optimization function. Over time, products systematically get better at the measurable things and worse at the unmeasurable ones.

The technical solution would be to include adversarial metrics (often called guardrail metrics) in the experiment evaluation: metrics you’re actively trying not to hurt. Some companies do this. They’ll measure customer support contacts, uninstall rates, or net promoter scores alongside the primary metric. But this requires the organizational will to accept a “worse” result on your primary metric when the adversarial metrics look bad, and in practice that will is rare when it conflicts with quarterly growth numbers.
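As a sketch of what such an evaluation rule could look like, the code below ships a variant only if the primary metric improved significantly and no adversarial metric got significantly worse by more than a tolerance. The `MetricResult` structure, the metric names, and the 1% tolerance are illustrative assumptions, not any company’s actual policy, and the significance flags are assumed to come from a separate statistical test.

```python
from dataclasses import dataclass


@dataclass
class MetricResult:
    name: str
    control: float          # metric value in the control group (assumed non-zero)
    treatment: float        # metric value in the treatment group
    higher_is_better: bool
    significant: bool       # did the difference clear the significance threshold?

    def harmful_change(self) -> float:
        """Relative change in the harmful direction (positive means the metric got worse)."""
        relative = (self.treatment - self.control) / self.control
        return -relative if self.higher_is_better else relative


def ship_decision(primary: MetricResult, guardrails: list[MetricResult], tolerance: float = 0.01) -> str:
    """Ship only if the primary metric improved and no guardrail got significantly worse."""
    if not (primary.significant and primary.harmful_change() < 0):
        return "no ship: primary metric did not improve"
    regressions = [
        g.name for g in guardrails if g.significant and g.harmful_change() > tolerance
    ]
    if regressions:
        return "no ship: guardrail regression in " + ", ".join(regressions)
    return "ship"
```

Encoding the rule is the easy part. Whether a “no ship” verdict survives a meeting with the quarterly growth target is the organizational question the paragraph raises.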

What Regulation Has and Hasn’t Done

The GDPR (Europe’s General Data Protection Regulation, applicable since May 2018) and the CCPA (California’s Consumer Privacy Act, in effect since 2020) have moved the needle somewhat, but less than advocates hoped. Both regulations include provisions about processing personal data for purposes beyond what users reasonably expect, which is a reasonable description of behavioral experimentation. In practice, enforcement has focused more on data storage and transfer than on experimentation frameworks.

The EU’s Digital Services Act, whose obligations for very large online platforms began applying in August 2023, includes provisions about “recommender systems” and requires those platforms to offer at least one recommender option that is not based on profiling. This is closer to addressing the experimentation problem, but it’s still limited to recommendation systems rather than arbitrary behavioral experiments.

The honest assessment is that regulation has been a weak check on this practice. The companies involved have significant lobbying capacity, the technical complexity creates plausible deniability, and regulators generally lack the technical staff to audit experiment infrastructure effectively.

What This Means

A/B testing is not inherently unethical. Testing whether a clearer error message helps users recover from problems is a straightforward use of the tool. The method itself is just empiricism applied to software.

The problem is that the same infrastructure, when pointed at engagement metrics and combined with organizational incentives that reward growth over user welfare, becomes a systematic mechanism for behavioral manipulation. Users are not informed participants in experiments. They have no meaningful recourse. And the companies running these experiments have strong financial incentives to optimize for metrics that do not correspond to user interests.

The most defensible standard, which some companies have begun adopting voluntarily, is to ask: if we told users exactly what this experiment is testing and what a win means for us, would they still consider it acceptable? For button color tests, almost certainly yes. For experiments designed to make cancellation harder or notification anxiety higher, almost certainly no. The gap between those two categories is where the ethical line sits, and right now there’s nothing stopping companies from operating entirely in the second category as long as their terms of service are long enough.