The Green Check Mark Is a Promise You Made to Yourself

Every engineer knows the feeling. You push a change, the pipeline runs, everything goes green, and you merge with confidence. CI was supposed to solve the problem of “works on my machine.” In many ways, it did. But somewhere along the way, teams started treating a passing build as proof of correctness rather than proof of consistency. Those are not the same thing.

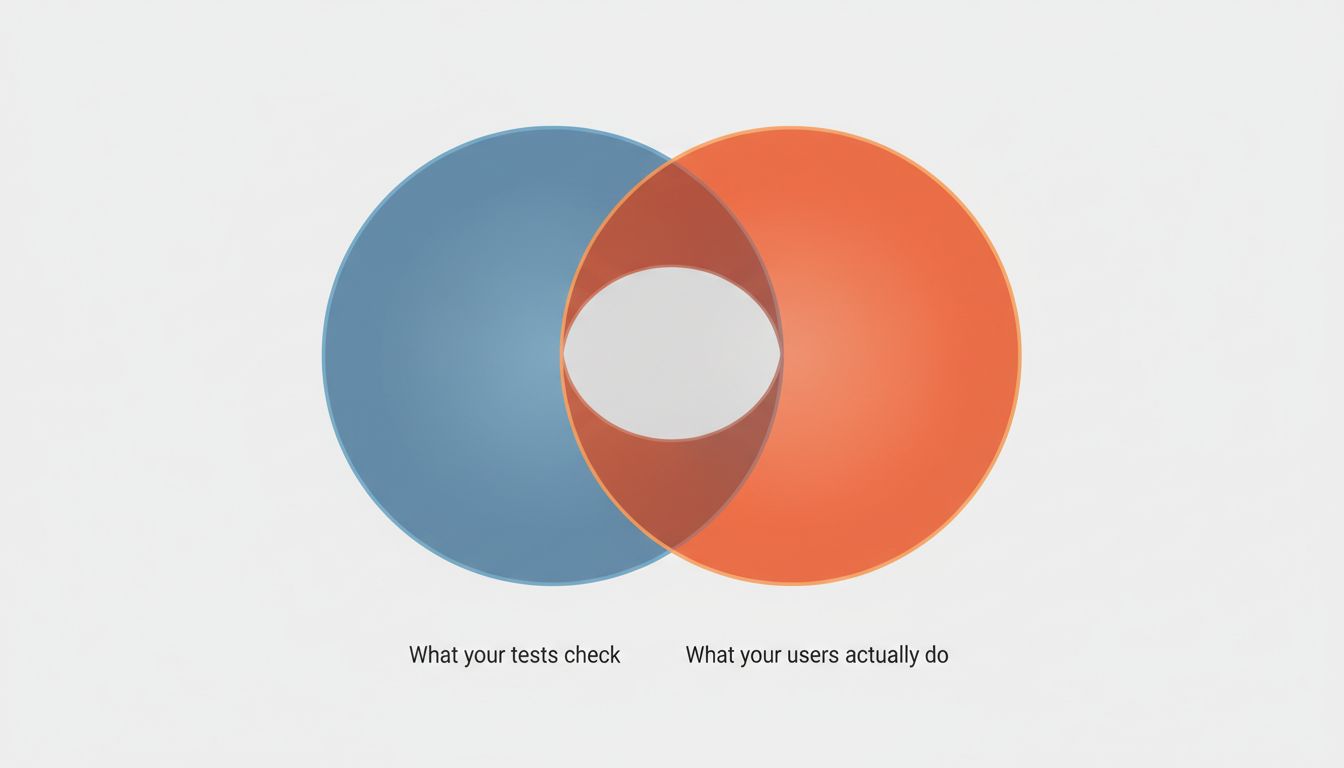

A CI pipeline verifies that your code behaves the way your tests say it should behave. That’s it. If your tests are incomplete, wrong, or testing the wrong things entirely, your pipeline will pass enthusiastically while your users hit real failures. The pipeline is not a quality oracle. It’s a mirror. It reflects exactly what you asked it to check and nothing more.

Tests Are a Model of Reality, Not Reality Itself

This sounds obvious until you watch a team spend three days debugging a production incident caused by behavior their test suite never covered. The bug wasn’t untested by accident. It was untested because no one imagined it could happen.

Test suites are models, and models have assumptions baked in. Unit tests assume the unit behaves correctly in isolation, which is fine until your system’s failure modes are almost entirely about how units interact. Integration tests assume your test environment resembles production, and it usually differs in exactly the ways that matter: data volumes, timing characteristics, third-party service behavior. End-to-end tests assume your scenarios reflect actual user behavior, which requires you to know in advance how users will behave.

The Knight Capital incident from 2012 is instructive here. A deployment activated dormant code that hadn’t been used in years. Knight’s software passed whatever tests existed. Those tests didn’t cover the state that emerged from a partial deployment, with servers running mismatched code versions. In roughly 45 minutes, the firm lost about $440 million. The CI pipeline, had one existed in its modern form, would have been green.

What Passing Tests Actually Guarantee

A passing CI pipeline gives you a narrow but real set of guarantees. It tells you the code compiles. It tells you the behaviors you explicitly specified haven’t regressed. It tells you integration points work in your test environment with your test data. These are worth having. Regression protection alone justifies the investment.

What it doesn’t tell you is whether the software handles inputs your tests didn’t anticipate. It doesn’t tell you how the system behaves under load, under partial failure, or when dependencies return unexpected responses. It doesn’t tell you whether users can actually accomplish what they’re trying to do. It doesn’t tell you whether the feature you shipped is the feature anyone wanted.

This gap gets wider as systems get more complex. A microservices architecture might have every individual service passing its own tests while the system as a whole fails on the interactions between them. Timezone handling is a classic example: each service stores and retrieves time correctly according to its own tests, and they still produce wrong results when they talk to each other, because each makes a different assumption about which zone an unlabeled timestamp belongs to.
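A minimal sketch of that timezone failure, under invented assumptions: the two services, their function names, and the fixed UTC-5 offset are all hypothetical, chosen only to show how two individually correct round trips can disagree once they are composed.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical "orders" service: writes naive wall-clock time in its own zone.
ORDERS_TZ = timezone(timedelta(hours=-5))  # assumed fixed offset, for illustration


def orders_write(moment: datetime) -> str:
    return moment.astimezone(ORDERS_TZ).replace(tzinfo=None).isoformat()


def orders_read(text: str) -> datetime:
    return datetime.fromisoformat(text).replace(tzinfo=ORDERS_TZ)


# Hypothetical "billing" service: assumes every naive timestamp is UTC.
def billing_write(moment: datetime) -> str:
    return moment.astimezone(timezone.utc).replace(tzinfo=None).isoformat()


def billing_read(text: str) -> datetime:
    return datetime.fromisoformat(text).replace(tzinfo=timezone.utc)


event = datetime(2024, 3, 1, 2, 30, tzinfo=timezone.utc)

# Each service's own round-trip test passes.
assert orders_read(orders_write(event)) == event
assert billing_read(billing_write(event)) == event

# The interaction neither suite exercises: orders writes, billing reads.
seen_by_billing = billing_read(orders_write(event))
skew = event - seen_by_billing
print(skew)                                  # 5:00:00
print(event.date(), seen_by_billing.date())  # 2024-03-01 2024-02-29
```

Both services are green in isolation. The five-hour skew, and the wrong calendar date that comes with it, only shows up in a test that crosses the boundary between them.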

The Confidence Trap

The real danger isn’t that CI pipelines are imperfect. Everything is imperfect. The danger is that a green build creates a level of confidence that isn’t warranted, and that confidence changes behavior. Teams ship faster after CI adoption, which is good. But some of those teams also do less manual verification, less exploratory testing, less thinking about edge cases, because the pipeline is supposed to catch problems. It doesn’t catch what it wasn’t told to look for.

This is a version of Goodhart’s Law: when a measure becomes a target, it stops being a good measure. Build success becomes the target, teams optimize for build success, and build success stops telling you much about actual software quality. You end up with codebases where test coverage is high on paper because tests were written to cover lines of code rather than to probe behavior. The pipeline passes. The software is fragile.

AI-assisted coding tools are making this more acute. They’re genuinely useful for generating tests, but they generate tests that verify the code they just wrote behaves the way they just wrote it. That’s circular. It’s testing implementation, not intent. The test suite grows, coverage metrics improve, and the pipeline becomes even greener while the gap between “what the tests check” and “what users need” potentially widens.

What to Do About It

The fix isn’t to distrust CI. It’s to be precise about what CI tells you and build additional verification for what it doesn’t.

Property-based testing, where tools like Hypothesis (for Python) or fast-check (for JavaScript) generate thousands of random inputs to find edge cases, catches categories of bugs that example-based tests miss by definition. Chaos engineering, running controlled failures in staging environments, tests how your system behaves when the assumptions your tests make stop being true. Observability in production catches what all your pre-production testing missed, which will always be something.
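To illustrate the property-based idea without depending on any library, the sketch below hand-rolls a random search using only Python’s standard library. It is an approximation of what Hypothesis or fast-check automate, not their API: those tools add smarter input generation and shrink a failing input down to a minimal case. The `truncate` helper and its "result never exceeds the limit" property are hypothetical.

```python
import random
import string


def truncate(text: str, limit: int) -> str:
    """Hypothetical helper: shorten text to at most `limit` chars, marking cuts with '...'."""
    if len(text) <= limit:
        return text
    return text[: limit - 3] + "..."


# Example-based test: the case the author thought of passes.
assert truncate("hello world", 8) == "hello..."


def find_counterexample(trials: int = 1000, seed: int = 0):
    """Search random inputs for a violation of: len(truncate(text, limit)) <= limit."""
    rng = random.Random(seed)  # seeded so a failure is reproducible
    for _ in range(trials):
        length = rng.randint(0, 20)
        text = "".join(rng.choices(string.ascii_lowercase, k=length))
        limit = rng.randint(0, 20)
        if len(truncate(text, limit)) > limit:
            return text, limit
    return None


counterexample = find_counterexample()
print(counterexample)  # a (text, limit) pair that breaks the property
```

The search lands on inputs where the limit is smaller than the three-character ellipsis, a case the hand-picked example never touches. The property is stated once, and the generator does the imagining.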

Exploratory testing by humans who are actually trying to break things remains undervalued. A skilled tester spending a few hours on a new feature before release will find things no automated suite will find, because they’re not constrained by the test author’s imagination.

Most importantly, be honest about what your pipeline represents. It’s a consistency check, not a correctness proof. Treat it as one signal among several, not the final word. The green check mark means you can proceed with appropriate caution. It has never meant you can proceed without any.