The Word ‘Reasoning’ Is Doing a Lot of Work

When you ask a large language model to “reason through” a problem, you probably imagine something like deliberation: the model considering options, weighing evidence, arriving at a conclusion. That intuition is useful enough for everyday prompting, but it quietly smuggles in a misconception that leads people to trust these systems in the wrong situations and distrust them in the right ones.

What an LLM is actually doing is closer to very sophisticated pattern completion over a compressed representation of an enormous amount of human text. That sounds reductive, but it isn’t meant to be. The capability that emerges from that process is genuinely remarkable. Understanding it accurately makes you better at using it.

What Chain-of-Thought Actually Does

The most concrete thing to understand is what happens when you prompt a model with “let’s think step by step” or ask it to show its work. This technique is called chain-of-thought prompting. Wei et al. at Google Brain formalized it in a 2022 paper by putting worked, step-by-step examples in the prompt; the bare “let’s think step by step” trigger was studied separately by Kojima et al. the same year. Either way, it produces measurably better results on multi-step problems. But why?

The conventional explanation is that forcing the model to articulate intermediate steps gives it “more compute” to apply to the problem. That’s partially true in a mechanical sense. A transformer generates tokens one at a time, each token costs another full pass through the network, and each token it produces becomes part of the context for the next one. So if the model writes out “Step 1: identify the variables” before answering, it has literally given itself more input to condition its answer on. The scratchpad is real.
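To make the scratchpad point concrete, here is a toy sketch of autoregressive decoding. The `toy_model` function is a hypothetical stand-in for a real model’s forward pass (a lookup table, not a neural network); what matters is the loop. Every emitted token is appended to the context, so the final answer is conditioned on the reasoning tokens emitted before it, and each extra token means one more call to the model.

```python
from typing import Callable, List

# Hypothetical stand-in for a model: maps the full context so far to the next token.
# A real model would run one forward pass over every token in `context` per call.
NextToken = Callable[[List[str]], str]


def generate(prompt: List[str], next_token: NextToken, max_new: int = 20) -> List[str]:
    """Greedy autoregressive decoding: each output token is fed back in as input."""
    context = list(prompt)
    for _ in range(max_new):
        token = next_token(context)  # conditioned on prompt + everything generated so far
        context.append(token)        # the "scratchpad": output becomes input
        if token == "<eos>":
            break
    return context[len(prompt):]     # one model call per returned token


def toy_model(context: List[str]) -> str:
    # Illustrative rule table (an assumption): it can only produce the right answer
    # if the intermediate step "3+4=7" is already present in its context.
    table = {
        "Q": "step:",
        "step:": "3+4=7",
        "3+4=7": "answer:",
        "answer:": "7" if "3+4=7" in context else "?",
        "7": "<eos>",
    }
    return table.get(context[-1], "<eos>")


print(generate(["Q"], toy_model))            # ['step:', '3+4=7', 'answer:', '7', '<eos>']
print(generate(["Q", "answer:"], toy_model))  # ['?', '<eos>']
```

Skipping straight to `answer:` removes the intermediate step from the context, and the toy model has nothing to condition the answer on.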

But there’s something subtler happening too. Training data is full of worked examples, textbooks, Stack Overflow threads, and forum posts where humans reason aloud. When you prompt a model into that register, you’re activating patterns associated with careful, systematic thinking. You’re not just giving it more compute. You’re steering it toward a region of its learned distribution that contains better answers.

This distinction matters practically. Chain-of-thought works best when the problem type resembles the kinds of problems that appear with written-out reasoning in the training data. It works less well when you need the model to reason about genuinely novel situations, or when the training data for similar problems was sparse or low quality.

The Difference Between Appearance and Process

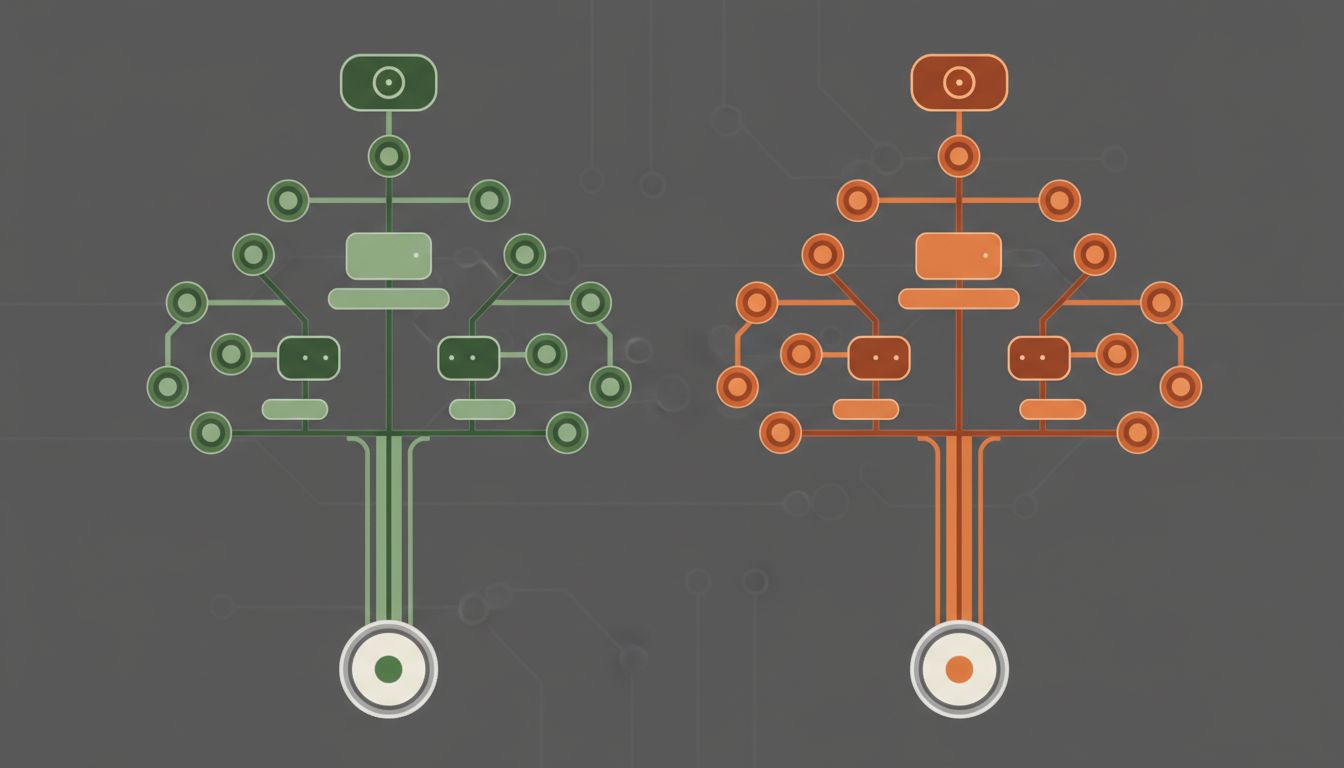

Here’s the part that trips up even technical users: an LLM can produce fluent, logically structured output without performing anything that resembles logical inference in the way a symbolic reasoner would.

A symbolic system (think Prolog, or a classical chess engine) applies explicit rules to explicit representations. You can inspect each step. The process is the proof. An LLM generates text that looks like step-by-step reasoning, but the internal computation that produced it is a cascade of matrix multiplications and attention weights over learned parameters. The visible “steps” are the output. They are not the process.
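For contrast, here is a minimal forward-chaining rule engine, the kind of explicit inference a symbolic system performs. The facts and rules are invented for illustration. Each derived fact is stored with the rule and premises that produced it, so the trace is the justification: every step can be audited, which is exactly what an LLM’s generated “steps” do not guarantee.

```python
from typing import Dict, FrozenSet, List, Set, Tuple

# A rule fires when every premise is a known fact: (name, premises, conclusion).
Rule = Tuple[str, FrozenSet[str], str]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Dict[str, Tuple[str, FrozenSet[str]]]:
    """Apply rules until nothing new can be derived; return how each new fact was proved."""
    known = set(facts)
    proof: Dict[str, Tuple[str, FrozenSet[str]]] = {}
    changed = True
    while changed:
        changed = False
        for name, premises, conclusion in rules:
            if conclusion not in known and premises <= known:
                known.add(conclusion)
                proof[conclusion] = (name, premises)  # the step *is* the justification
                changed = True
    return proof


rules: List[Rule] = [
    ("R1", frozenset({"socrates_is_human"}), "socrates_is_mortal"),
    ("R2", frozenset({"socrates_is_mortal", "mortals_die"}), "socrates_will_die"),
]

proof = forward_chain({"socrates_is_human", "mortals_die"}, rules)
for fact, (rule, premises) in proof.items():
    print(f"{fact} <- {rule} from {sorted(premises)}")
# socrates_is_mortal <- R1 from ['socrates_is_human']
# socrates_will_die <- R2 from ['mortals_die', 'socrates_is_mortal']
```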

This creates a specific failure mode you should watch for: models that produce confident, well-structured reasoning chains that are nonetheless wrong, because the plausible-sounding intermediate steps were generated by the same statistical process as the conclusion. The scaffolding looks load-bearing but isn’t. These are sometimes called “reasoning hallucinations,” and they’re more dangerous than straightforward factual errors because the structure makes them harder to spot.

This is why you should never treat a well-reasoned-looking LLM output as verified just because it shows its work. The format of rigorous reasoning and the substance of rigorous reasoning are separable.

Where the Process Actually Helps You

None of this means chain-of-thought prompting isn’t valuable. It absolutely is. You just need to apply it where it has real leverage.

The strongest use cases are ones where the answer space is constrained and verifiable. Math problems with checkable answers. Code that either passes its tests or doesn’t. Logical puzzles with ground truth. In these cases, the model’s tendency to produce plausible-sounding steps is anchored by the structure of the problem itself. The training distribution for “working math problems” is dense and consistent, so the statistical patterns that emerge are reliable.
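Verifiable problems let you close the loop mechanically. The sketch below assumes you told the model to end its response with a line of the form `Answer: <number>` (a convention you would set in your own prompt, not a standard). It pulls that value out with a regular expression and checks it against a value computed independently in code. The reasoning text is ignored entirely; only the checkable result is trusted. The example response is invented.

```python
import re
from typing import Optional

# Assumed convention: the prompt told the model to finish with "Answer: <number>".
ANSWER_RE = re.compile(r"^Answer:\s*(-?\d+(?:\.\d+)?)\s*$", re.MULTILINE)


def extract_answer(response: str) -> Optional[float]:
    """Return the last 'Answer:' value in the response, or None if it is missing."""
    matches = ANSWER_RE.findall(response)
    return float(matches[-1]) if matches else None


def verify(response: str, expected: float, tol: float = 1e-9) -> bool:
    """Accept the response only if its final answer matches an independent computation."""
    answer = extract_answer(response)
    return answer is not None and abs(answer - expected) <= tol


# A tidy-looking but wrong chain of thought (invented for illustration).
response = """Step 1: 17 boxes hold 24 items each.
Step 2: 17 * 24 = 398.
Answer: 398"""

expected = 17 * 24  # ground truth computed outside the model: 408
print(extract_answer(response), verify(response, expected))  # 398.0 False
```

The neat step-by-step format did nothing to protect the arithmetic; the independent check caught it.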

Where you should be more cautious: open-ended strategic questions, causal claims about complex systems, and anything where the only support for a conclusion is the model’s own reasoning. “Should we expand into this market?” generates a convincing chain of reasoning regardless of whether that reasoning reflects anything real about the market.

A practical habit that helps: after getting a reasoned response, ask the model to argue the opposite position with equal rigor. If it can produce an equally convincing chain of reasoning for the reverse conclusion, that’s a signal the problem isn’t well-constrained enough for you to defer to either answer. Use the model’s output as a starting framework, then stress-test it with your own knowledge.
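The habit can be wrapped in a small helper. `ask` stands for whatever function you use to send a prompt to a model and get text back; it is a hypothetical placeholder, not a real client API, and the stub below returns canned text so the example runs offline. The helper returns both arguments side by side, so the comparison and the judgment stay with you.

```python
from typing import Callable, Dict

# Hypothetical: any function that sends a prompt to a model and returns its reply.
Ask = Callable[[str], str]


def argue_both_sides(question: str, ask: Ask) -> Dict[str, str]:
    """Get a reasoned answer, then an equally rigorous case for the opposite conclusion."""
    first = ask(f"{question}\nReason step by step and state a conclusion.")
    opposite = ask(
        "Here is an argument:\n"
        f"{first}\n"
        "Argue for the opposite conclusion with equal rigor, step by step."
    )
    return {"original": first, "opposite": opposite}


def fake_ask(prompt: str) -> str:
    # Offline stub for illustration only; a real model call would go here.
    if "opposite conclusion" in prompt:
        return "Conclusion: do not expand."
    return "Conclusion: expand."


result = argue_both_sides("Should we expand into this market?", fake_ask)
print(result)  # {'original': 'Conclusion: expand.', 'opposite': 'Conclusion: do not expand.'}
```

If the two replies are about equally persuasive, treat that as the signal described above rather than picking a winner.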

How to Prompt for Better Reasoning

Given how the mechanism actually works, a few concrete adjustments improve your results:

Specify the problem type explicitly. Instead of “reason through this,” say “treat this as a debugging problem” or “analyze this the way a financial auditor would.” You’re steering the model toward a more specific region of its training distribution, which means the patterns it activates are more targeted.

Ask for uncertainties, not just conclusions. Prompt the model to flag where its reasoning is weakest or where it’s making assumptions. This doesn’t magically make it accurate, but it tends to surface the places where you should apply your own scrutiny.
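The first two adjustments combine naturally into a reusable prompt template. The wording below is one plausible phrasing, not a tested or canonical prompt, and the function name and parameters are illustrative.

```python
def build_prompt(problem: str, frame: str) -> str:
    """Frame the task as a specific problem type and ask for flagged uncertainties."""
    return (
        f"Treat this as {frame}.\n\n"
        f"{problem}\n\n"
        "Work through it step by step. Then, under a heading 'Uncertainties', list:\n"
        "- each assumption you made that the problem statement does not support\n"
        "- the step where your reasoning is weakest, and why\n"
    )


print(build_prompt(
    "The nightly export job started timing out after Tuesday's deploy.",
    "a debugging problem",
))
```

Keeping the frame as a parameter makes it easy to try the same problem as “a debugging problem” versus “a capacity-planning problem” and compare what each framing surfaces.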

Break complex problems into stages you control. Rather than handing the model a sprawling question and asking it to reason to a conclusion, structure the stages yourself and use the model for each discrete step. You maintain the logical spine; the model fills in the substance. This sidesteps the failure mode where confident-sounding intermediate steps compound errors.
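Here is one way that division of labor might look. You write the stages and their order; the model is only ever asked one narrow question at a time, and each intermediate result passes through your own code, where it can be inspected or validated before it feeds the next stage. The stage names, prompts, and the `ask` callable are hypothetical, and the stub model returns canned text.

```python
from typing import Callable, Dict, List, Tuple

Ask = Callable[[str], str]  # hypothetical: prompt in, model reply out

# You own the logical spine: the stages, their order, and what each one receives.
STAGES: List[Tuple[str, str]] = [
    ("facts", "List only the facts stated in this problem, one per line:\n{problem}"),
    ("options", "Given these facts:\n{facts}\nList the realistic options, one per line."),
    ("risks", "For each option below, name its main risk:\n{options}"),
]


def run_pipeline(problem: str, ask: Ask, check: Callable[[str, str], bool]) -> Dict[str, str]:
    """Run each stage separately, validating every intermediate result before moving on."""
    results: Dict[str, str] = {"problem": problem}
    for name, template in STAGES:
        reply = ask(template.format(**results))
        if not check(name, reply):
            raise ValueError(f"stage {name!r} failed validation: {reply!r}")
        results[name] = reply
    return results


def fake_ask(prompt: str) -> str:
    # Offline stub standing in for a real model call.
    if prompt.startswith("List only the facts"):
        return "Demand grew last year"
    if prompt.startswith("Given these facts"):
        return "Expand\nWait a year"
    return "Expand: overextension\nWait a year: lost share"


def non_empty(stage: str, reply: str) -> bool:
    # Deliberately simple check; real validation would be stage-specific.
    return bool(reply.strip())


out = run_pipeline("Should we expand into region X?", fake_ask, non_empty)
print(out["risks"])
```

Because a failed check raises before the next stage runs, a bad intermediate answer stops the pipeline instead of silently compounding.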

Check the work against external anchors. Any conclusion that matters should be verified against sources the model didn’t generate. This sounds obvious, but in practice people accept well-reasoned LLM outputs as finished products far more often than they should.

The underlying system is powerful, and “reasoning through” a problem with an LLM is a genuinely useful thing to do. You just get more out of it when you understand what you’re actually working with: a pattern-completion engine trained on an extraordinary amount of human thought, capable of producing structured outputs that reflect the shape of good reasoning without necessarily replicating its guarantees. That’s a tool worth using well.