The coding bootcamp industry has spent years arguing about its own success rate. Bootcamps publish placement figures. Critics attack the methodology. Independent researchers find the numbers optimistic. Everyone focuses on whether graduates get jobs, and almost no one asks a more interesting question: why do the ones who don’t get hired fail in the specific way they fail?

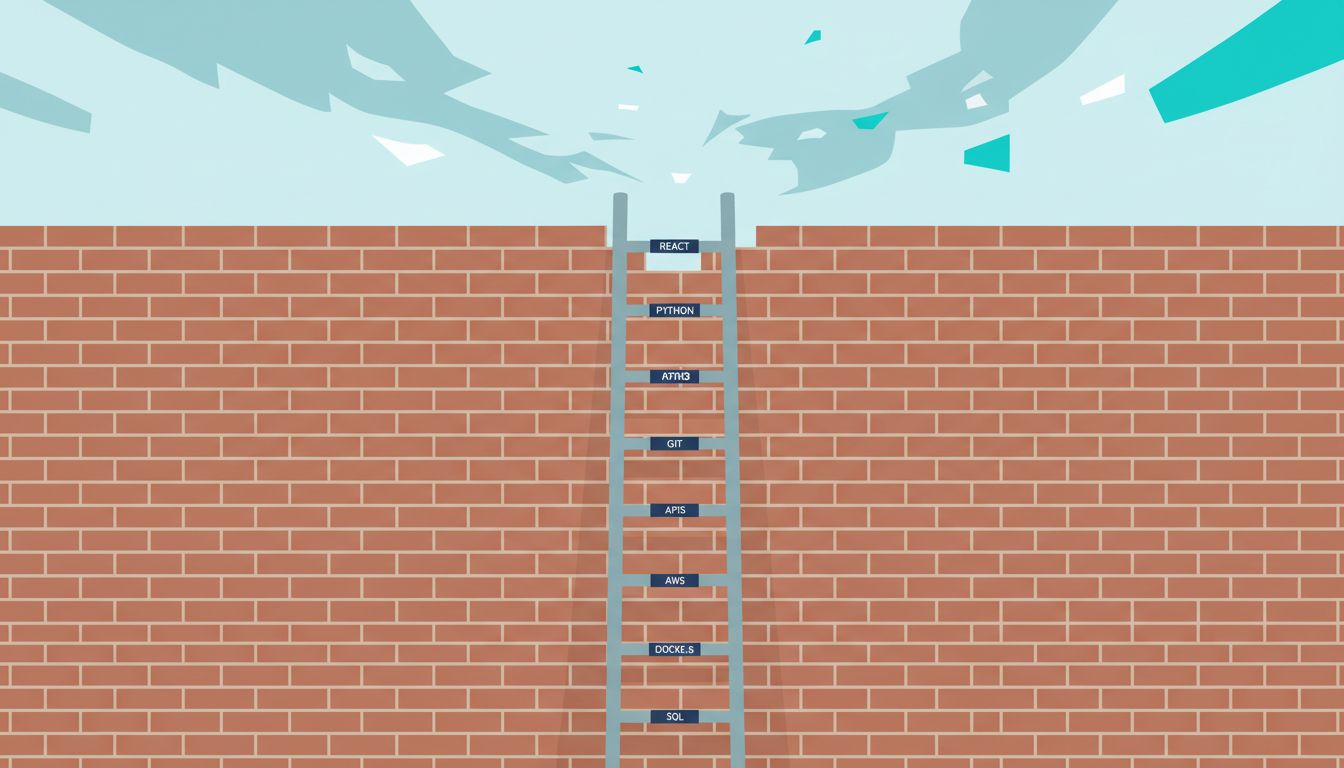

The answer is not that they can’t code. Most bootcamp graduates, by the end of a rigorous program, can write functional JavaScript or Python. They can build a CRUD application. They can push to GitHub. The technical floor is genuinely higher than it was a decade ago. The problem runs deeper than syntax, and it has almost nothing to do with which technologies the curriculum covers.

The Interview Is Testing Something Else

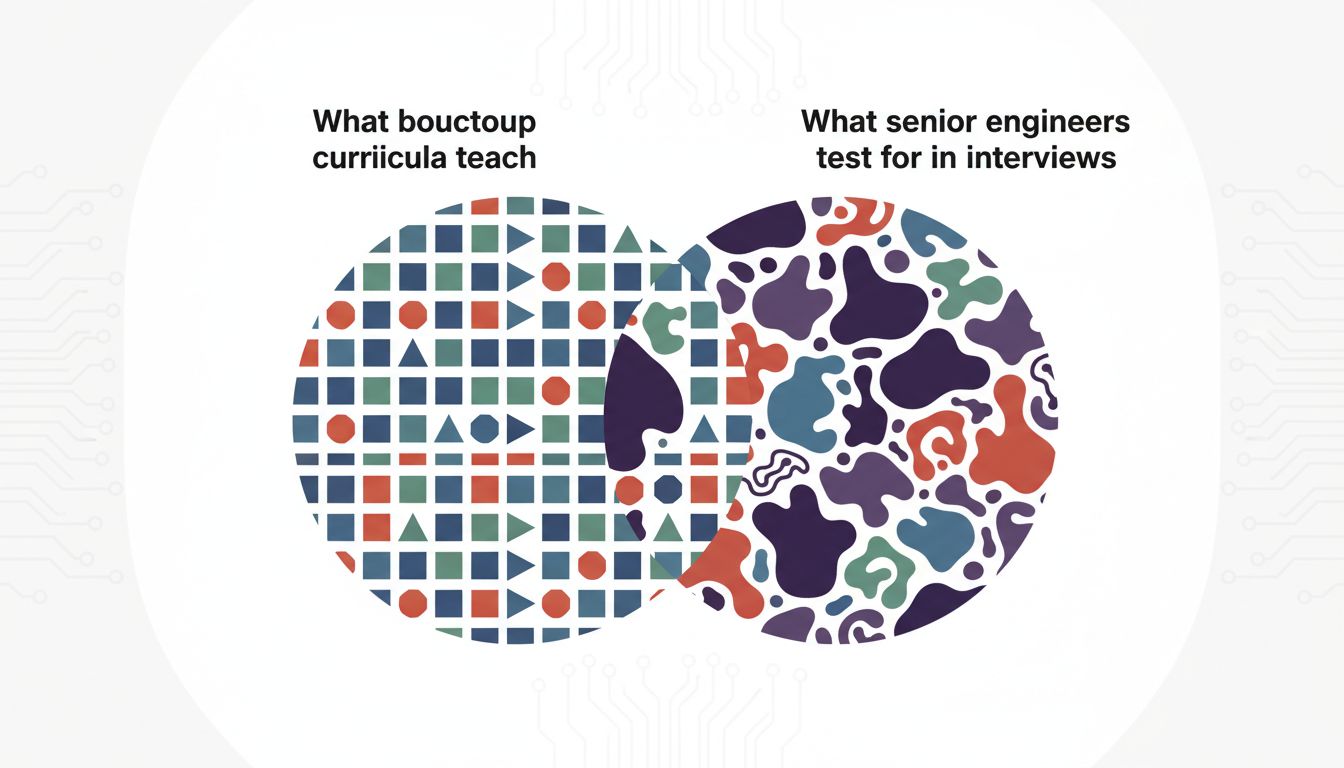

When a senior engineer sits across from a bootcamp graduate in a technical interview, they are not primarily assessing whether the candidate knows how to reverse a linked list. They are assessing something harder to name: the quality of a candidate’s thinking under uncertainty.

The linked list question is a proxy. What interviewers are actually watching for is whether you pause when you hit an unfamiliar problem, whether you ask clarifying questions before charging ahead, whether you narrate your reasoning or go silent, whether you recognize when your approach is failing and pivot without prompting. These behaviors are legible to experienced engineers in minutes. They are almost impossible to fake.

Bootcamp curricula are, almost by structural necessity, optimized for certainty. Students learn frameworks with known answers. Projects have specifications. The path through a twelve-week program is deliberately designed to minimize confusion, because confusion produces dropouts, and dropouts destroy placement statistics. That incentive structure produces graduates who are extraordinarily good at learning within a system and genuinely unprepared for work that requires operating without one.

The Mental Model Problem Nobody Mentions

Here is the gap that actually matters. A computer science graduate who spent four years learning theory has, embedded somewhere in their understanding, a rough mental model of what a computer is actually doing. They know what a process is. They have a conceptual grasp of memory allocation. They understand, at least abstractly, what happens when code runs.

This knowledge is rarely tested directly in bootcamps. It is also rarely needed to build a functional React application. You can ship real code without understanding what the event loop is doing. For months, maybe years, in certain kinds of work, it doesn’t matter.

Until it does. Until something breaks in production and there is no Stack Overflow answer for this specific combination of failures. Until the application is slow and the cause is not obvious and someone needs to reason from first principles about what the runtime is doing.
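To make that concrete, here is a minimal sketch of the kind of failure that no tutorial prepares you for. It uses Python's `asyncio` as a stand-in for any single-threaded event loop, and every name in it (`ticker`, `blocking_handler`, `cooperative_handler`, `measure`) is hypothetical and chosen only for illustration. A background task tries to wake up every 10 ms. One handler waits 300 ms by blocking, the other waits the same 300 ms by yielding to the loop.

```python
import asyncio
import time


async def ticker(gaps: list[float], stop: asyncio.Event) -> None:
    """Record how long each nominal 10 ms sleep actually took."""
    while not stop.is_set():
        start = time.perf_counter()
        await asyncio.sleep(0.01)
        gaps.append(time.perf_counter() - start)


async def blocking_handler() -> None:
    # time.sleep never yields to the event loop, so no other task can run.
    time.sleep(0.3)


async def cooperative_handler() -> None:
    # asyncio.sleep suspends this coroutine and lets the loop run other tasks.
    await asyncio.sleep(0.3)


async def worst_gap(handler) -> float:
    """Run the handler next to the ticker and return the longest tick delay."""
    gaps: list[float] = []
    stop = asyncio.Event()
    task = asyncio.create_task(ticker(gaps, stop))
    await asyncio.sleep(0.05)  # let the ticker run a few times first
    await handler()
    stop.set()
    await task
    return max(gaps)


def measure(handler) -> float:
    return asyncio.run(worst_gap(handler))


if __name__ == "__main__":
    print(f"blocking:    worst tick delay {measure(blocking_handler):.3f}s")
    print(f"cooperative: worst tick delay {measure(cooperative_handler):.3f}s")
```

Both handlers look nearly identical and both "work". Only the blocking one stalls every other task for the full 300 ms, and explaining why requires a mental model of what the loop is doing rather than a memorized fix.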

This is the moment that separates developers who can grow from developers who have topped out. And interviewers who are good at their jobs can often sense, in a forty-five-minute conversation, which side of that line a candidate is on. Not because they ask gotcha questions about operating systems, but because the absence of a mental model shows up in how someone reasons about problems they’ve never seen before.

The Portfolio Illusion

Bootcamps push graduates toward portfolios as their primary differentiator. Build projects. Show your work. GitHub commits as proof of competence.

This advice is not wrong. But it has produced a generation of portfolio projects that experienced engineers find actively discouraging rather than impressive. Not because the projects don’t work, but because they reveal a pattern of learning that concerns hiring managers.

A typical bootcamp portfolio project is a complete application built to a specification the student more or less chose in advance. It works. It has a README. The code is probably reasonably clean. What it almost never has is evidence of genuine problem-solving: a messy commit history where the architecture changed because the first approach was wrong, a documented decision about why one library was chosen over another, a section of code that is obviously harder than it needs to be because of a real constraint the student ran into.

The best junior developer portfolios look a little rough because genuine learning is rough. The most polished bootcamp portfolios can look, to a senior engineer reading the code, like work that was never truly struggled with. That suspicion, fair or not, costs candidates.

The Cohort Effect and What It Does to Your Thinking

There is a subtler problem that almost no one talks about. Bootcamp graduates spend twelve to twenty-four intensive weeks learning in a cohort, with instructors who are experts in the curriculum, surrounded by peers at exactly the same level. The social dynamic of this environment is almost perfectly engineered to reward confidence in the things you know and to make the things you don’t know feel temporarily irrelevant.

This produces a specific kind of overconfidence: not arrogance exactly, but a miscalibration between what you know and what you think you know. A bootcamp graduate who built six React applications in twelve weeks has genuine experience with React. They also have very little experience being the least-informed person in a technical conversation, being wrong about something they were certain of, or sitting with a problem for days without making progress.

Early careers in software engineering involve a lot of all three. The graduates who navigate this well are the ones who developed some tolerance for not-knowing during their program. The ones who struggle are often the ones whose programs were most efficient at eliminating confusion.

What Hiring Managers Are Quietly Looking For

There is a set of signals that hiring managers at companies with good engineering cultures consistently describe, usually in terms that sound vague but mean something specific.

Curiosity about how things work underneath the surface. Not obsessive low-level knowledge, but genuine interest in the layer below whatever you’re working in. The frontend developer who has spent an afternoon understanding what the browser is actually doing when it renders a page. The backend developer who has read at least the introduction to how the database engine they use every day works.

Comfort with ambiguity. The ability to make reasonable progress on a problem that is not fully specified. This is what most software work actually looks like, and it is almost entirely absent from bootcamp training.

A history of learning from failure specifically. Not just learning, but learning from things that went wrong. The relationship between deliberate problem-solving and apparent struggle is something senior engineers understand intuitively, even if they don’t frame it that way. They want to see evidence that a candidate has been genuinely stuck and found their way out.

None of these things appear in a resume. Most of them don’t surface cleanly in a portfolio. They emerge in conversation, and candidates who have them tend to know how to surface them. The ones who don’t have them tend not to notice they’re missing.

The Structural Fix Nobody Wants to Implement

The bootcamp industry could address most of this. The interventions are not complicated: build deliberate confusion into curricula, assign projects with incomplete specifications, create experiences where students have to debug code they didn’t write, and spend meaningful time on computer science fundamentals. The goal is not to turn graduates into systems programmers, but to give them the mental models that make professional growth possible.

The reason most bootcamps don’t do this is the same reason they publish optimistic placement statistics. Confused students drop out. Students who drop out don’t pay tuition. Programs that have high dropout rates don’t attract students. The business model of a bootcamp is structurally opposed to the pedagogical approach that would produce better engineers.

A smaller number of programs have started to figure this out. The ones with the best long-term outcomes, measured not just by first-job placement but by where graduates are three years in, tend to be the ones that have made their programs harder to finish, not easier.

What This Means

If you are a bootcamp graduate who has not yet landed a job, the problem almost certainly isn’t that you can’t code. The problem is that coding was never the main thing being evaluated. Interviewers are trying to assess whether you can function in an environment defined by uncertainty, partial information, and problems you have never seen before. The way to address that gap is not to practice more LeetCode. It is to deliberately spend time in the uncomfortable territory the bootcamp kept you out of.

Read about what your tools actually do underneath. Build something with a vague goal and no tutorial. Find code you don’t understand and sit with it until you do. The graduates who break through are, almost uniformly, the ones who figured out that they needed to re-engineer their own learning environment after graduation rather than waiting for an employer to do it for them.