The simple version

Cloud providers like AWS, Google, and Microsoft need to have spare server capacity ready before demand spikes — not after — so they’ve built forecasting systems that treat human behavior and physical weather as predictable inputs.

Why prediction matters more than reaction

Most people assume cloud infrastructure scales the way a light switch works: demand goes up, more servers turn on, problem solved. The reality is slower and more expensive. Spinning up physical hardware in a data center takes time. Ordering new servers, shipping them, racking them, configuring them — that process takes weeks or months. Even within existing data centers, allocating compute resources and cooling capacity isn’t instantaneous.

This means cloud providers can’t simply react to demand. They have to anticipate it. Get it wrong in one direction and customers experience slowdowns or outages. Get it wrong in the other direction and you’re running expensive hardware at 20% utilization, which is money evaporating into warm air.
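To make that asymmetry concrete, here is a minimal sketch of the trade-off. The function names, the cost figures, and the demand scenarios are all hypothetical and chosen for illustration; no provider is known to price capacity decisions this way.

```python
def provisioning_cost(capacity, demand, idle_cost_per_unit, shortfall_cost_per_unit):
    """Cost of a capacity decision once actual demand is known (hypothetical cost model)."""
    idle = max(capacity - demand, 0)        # hardware that was paid for but sat unused
    shortfall = max(demand - capacity, 0)   # demand that could not be served
    return idle * idle_cost_per_unit + shortfall * shortfall_cost_per_unit


def best_capacity(demand_scenarios, candidates, idle_cost, shortfall_cost):
    """Pick the candidate capacity with the lowest average cost across the scenarios."""
    def average_cost(capacity):
        total = sum(
            provisioning_cost(capacity, demand, idle_cost, shortfall_cost)
            for demand in demand_scenarios
        )
        return total / len(demand_scenarios)

    return min(candidates, key=average_cost)


# Illustrative numbers only: four equally likely demand levels, three capacity options.
scenarios = [80, 100, 120, 200]
options = [100, 120, 200]

# When an outage unit costs five times an idle unit, the largest option wins.
print(best_capacity(scenarios, options, idle_cost=1, shortfall_cost=5))
```

The point of the sketch is that the "right" amount of spare capacity depends on how lopsided the two penalties are, which is why a better demand forecast translates directly into money.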

The forecasting problem, then, isn’t a nice-to-have. It’s central to profitability. And it turns out weather is one of the better predictors they have.

How weather drives compute demand

The connection between weather and server load isn’t abstract. Consider a few concrete mechanisms.

When a major storm system moves through a densely populated region, people stay home. Streaming traffic climbs. Gaming sessions get longer. E-commerce orders spike as people avoid going out. All of that behavior flows through cloud infrastructure. Netflix, for example, has publicly discussed how bad weather correlates with viewership surges, and while its video streams are delivered through its own Open Connect CDN, the backend services behind them run on AWS. The relationship is tight enough to be predictable, not just anecdotal.

Temperature extremes work similarly. Heat waves drive up demand for weather apps, air conditioning purchases, utility company portals, and health services. Cold snaps trigger heating oil delivery apps, road condition services, and flight rebooking traffic. Each of these generates compute load that’s geographically specific and temporally predictable if you have the forecast.

Seasonal patterns compound this. Retail cloud workloads are well documented to scale dramatically around Black Friday and in the weeks leading up to major holidays. AWS has been running “pre-warming” programs for enterprise customers for years, where retailers work with AWS engineers ahead of peak seasons to provision capacity in advance. Weather interacts with this seasonality: an early cold snap in October can shift holiday shopping behavior by weeks.

What the forecasting models actually do

The systems cloud providers use to predict demand aren’t weather apps with a server rack attached. They’re multi-variable forecasting models that treat weather data as one signal among many.

The inputs typically include historical demand patterns (broken down by geography, time of day, day of week, and season), current and forecast weather data from national meteorological services and commercial weather data providers, major calendar events (sports championships, product launches, elections, holidays), and real-time signals from the network itself, like traffic patterns starting to shift.

The models learn correlations over time. A major snowstorm hitting the northeastern United States on a Sunday afternoon has a predictable signature: streaming demand climbs, food delivery apps spike, travel booking sites see cancellations and rebookings, and certain types of enterprise workloads drop because office workers aren’t logging in. The system has seen this pattern dozens of times and can start pre-allocating resources when the storm shows up in the seven-day forecast.
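A toy version of "weather as one signal among many" might look like the sketch below. It is deliberately far simpler than anything a provider would run: it learns a per-weekday baseline from ordinary days and a single multiplicative storm uplift from past storm days. The function names, the tuple layout, and the sample history are all invented for illustration.

```python
from collections import defaultdict
from statistics import mean


def fit_baseline(history):
    """history: list of (day_of_week, demand, storm_flag).
    Returns the mean demand per weekday, using non-storm days only."""
    by_day = defaultdict(list)
    for day, demand, storm in history:
        if not storm:
            by_day[day].append(demand)
    return {day: mean(values) for day, values in by_day.items()}


def fit_storm_uplift(history, baseline):
    """Average ratio of storm-day demand to the normal baseline for that weekday."""
    ratios = [
        demand / baseline[day]
        for day, demand, storm in history
        if storm and day in baseline
    ]
    return mean(ratios) if ratios else 1.0  # no storm history: assume no uplift


def forecast(day, storm_expected, baseline, uplift):
    """Baseline demand for the weekday, scaled up if a storm is in the forecast."""
    base = baseline[day]
    return base * uplift if storm_expected else base


# Made-up history in arbitrary demand units.
history = [
    ("sun", 100, False),
    ("sun", 120, False),
    ("sun", 165, True),
    ("mon", 80, False),
    ("mon", 100, True),
]
baseline = fit_baseline(history)
uplift = fit_storm_uplift(history, baseline)
print(forecast("sun", storm_expected=True, baseline=baseline, uplift=uplift))
```

A production system would replace the single uplift factor with a learned model over many interacting features, but the shape is the same: establish what normal looks like, then estimate how a forecast event bends it.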

This is genuinely a machine learning problem, not just a lookup table. The interactions between variables are complex. A heat wave in Phoenix affects demand differently than a heat wave in Seattle, because Seattle has far less air conditioning infrastructure, which changes consumer behavior in specific ways. The models have to be geographic, not global.
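One simple way to picture "geographic, not global" is to fit a separate temperature sensitivity for each region instead of one shared coefficient. The sketch below does this with an ordinary least-squares line per region. The observations are invented numbers, not measurements, and the helper names are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def fit_per_region(observations):
    """observations: list of (region, temperature_c, demand).
    Returns {region: (slope, intercept)}, one independent fit per region."""
    grouped = {}
    for region, temp, demand in observations:
        temps, demands = grouped.setdefault(region, ([], []))
        temps.append(temp)
        demands.append(demand)
    return {region: fit_line(temps, demands) for region, (temps, demands) in grouped.items()}


# Illustrative data only: demand in arbitrary units at three temperatures per region.
observations = [
    ("phoenix", 30, 100), ("phoenix", 35, 102), ("phoenix", 40, 104),
    ("seattle", 20, 100), ("seattle", 25, 110), ("seattle", 30, 120),
]
for region, (slope, intercept) in fit_per_region(observations).items():
    print(region, round(slope, 2), round(intercept, 2))
```

With a single global fit, the two regions' different responses would be averaged into one slope that describes neither of them well.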

Google has been relatively transparent about using ML-based forecasting for its own internal data center operations, including using DeepMind models to reduce cooling energy consumption. The same kind of predictive modeling that optimizes cooling can, in principle, be applied to capacity planning.

The physical constraint that makes all of this necessary

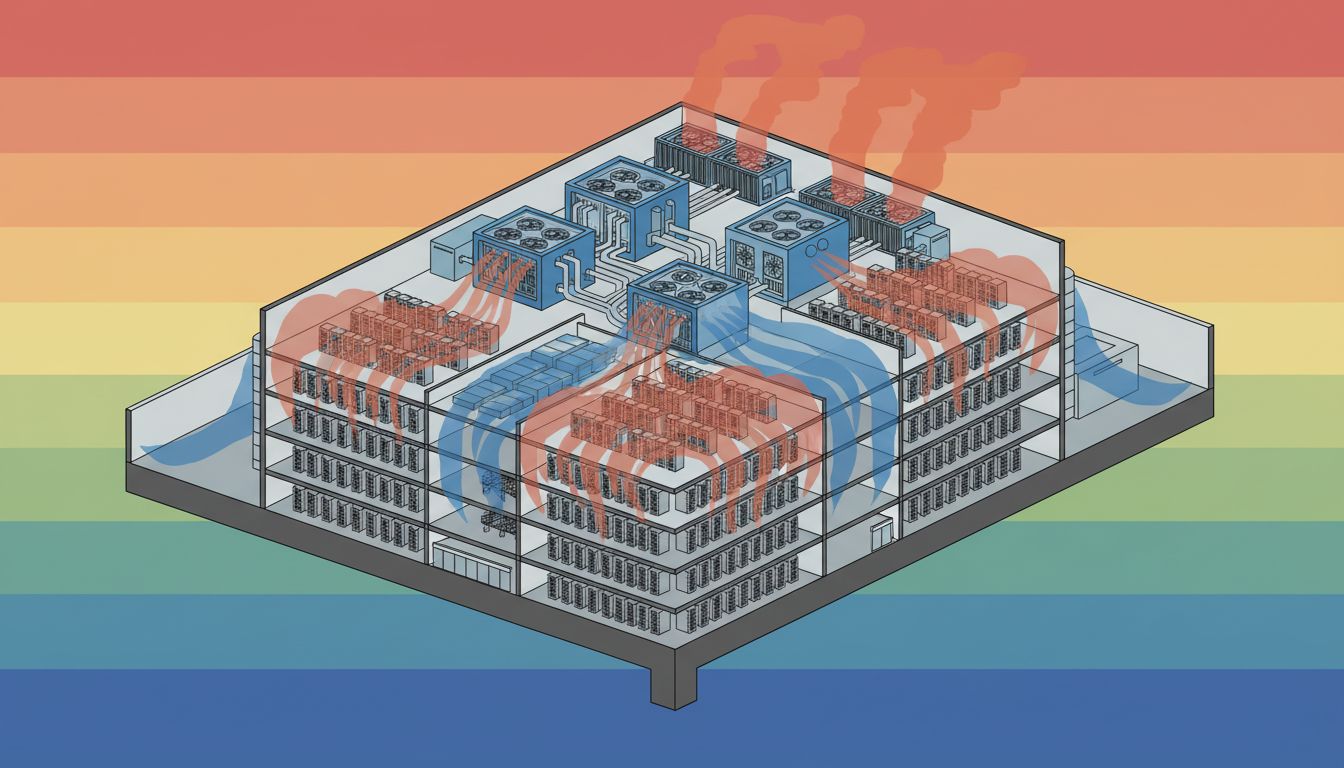

There’s a deeper reason this problem is hard: data centers aren’t infinitely elastic, and neither is the power grid.

A large data center might draw 100 to 300 megawatts of power at full load. You can’t just plug in more servers the way you’d plug in a space heater. The power contracts are negotiated in advance, the cooling systems are sized for specific thermal loads, and the physical space has limits. When AWS or Microsoft builds a new data center, they’re committing years in advance to a specific location, a specific power contract, and a specific capacity ceiling.

This is why the forecasting has to be accurate at multiple time horizons simultaneously. Short-term forecasts (days to weeks) drive immediate resource allocation decisions within existing infrastructure. Medium-term forecasts (months) drive decisions about activating additional capacity in nearby facilities. Long-term forecasts (years) drive decisions about where to build the next data center.
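The three horizons can be pictured as a routing step that sends each forecast to the kind of decision it can actually inform. This is only a sketch: the 30-day and 365-day cutoffs are assumptions chosen to match "days to weeks", "months", and "years", and the names are hypothetical.

```python
from enum import Enum


class Horizon(Enum):
    SHORT = "allocate resources within existing infrastructure"
    MEDIUM = "activate additional capacity in nearby facilities"
    LONG = "decide where to build the next data center"


def horizon_for(lead_time_days):
    """Map a forecast's lead time to a planning horizon (cutoffs are assumed)."""
    if lead_time_days < 0:
        raise ValueError("lead time cannot be negative")
    if lead_time_days < 30:      # days to weeks
        return Horizon.SHORT
    if lead_time_days < 365:     # months
        return Horizon.MEDIUM
    return Horizon.LONG          # years


for days in (3, 90, 1500):
    horizon = horizon_for(days)
    print(f"{days} days ahead -> {horizon.name}: {horizon.value}")
```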

Weather interacts with all three horizons. A three-day forecast influences this week’s capacity allocation. Seasonal outlooks inform how much additional capacity to bring online over the coming months. Multi-year climate trends influence where you build. Google and Microsoft have both cited climate modeling in their site selection decisions, particularly around water availability for cooling and the reliability of renewable energy sources.

The competitive advantage hiding in plain sight

Here’s what’s easy to miss: the cloud providers that forecast demand more accurately aren’t just saving money. They’re selling a more reliable product.

When AWS or Azure promises enterprise customers that their applications will scale seamlessly during peak events, that promise is backed by the forecasting infrastructure. A retailer who signs an enterprise cloud contract is essentially buying the provider’s ability to predict their needs before they know what those needs are. What you’re actually paying for in cloud services is rarely what it appears to be on the surface.

Smaller cloud providers and regional competitors have a harder time building these models because they lack the data volume. Forecasting accuracy improves with scale — more customers generating more demand signals across more geographies means the model has seen more edge cases and more combinations of variables. This is one of the less-discussed structural advantages of the largest providers, and it compounds over time.

The weather, it turns out, is just information. Cloud providers have learned to read it the way a farmer reads the sky: not with superstition, but with a systematic understanding of what the patterns mean for what comes next.