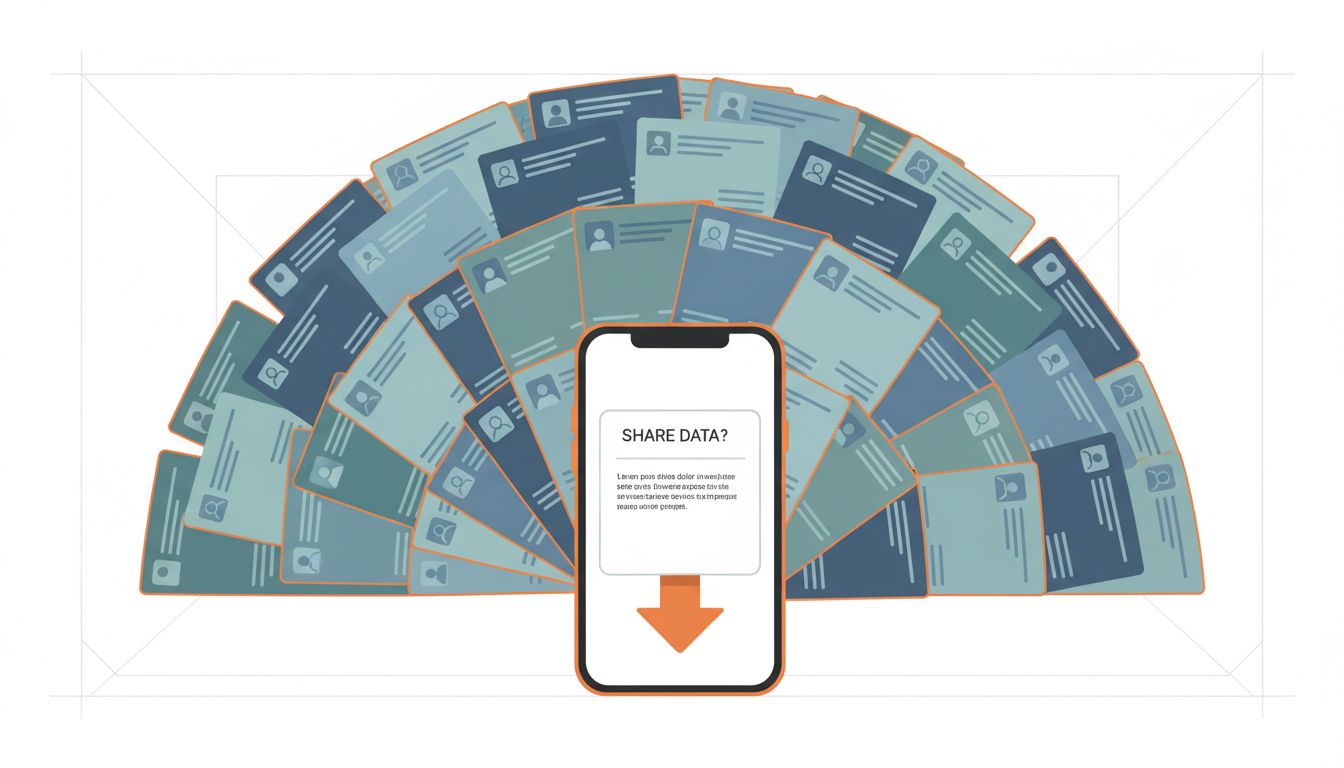

Your contacts list is one of the most intimate datasets on your phone. It contains your doctor, your therapist, your ex, your landlord, the number you saved as “do not answer.” When an app asks to access it, the permission dialog implies a simple transaction: give us access, get a feature in return. That framing is almost entirely wrong.

The Feature Is Usually Pretextual

The stated reason for contact access is almost always “find your friends” or “import your address book.” This sounds reasonable. If you’re joining a new messaging app, you want to know which of your contacts is already there. The feature is real. But it doesn’t explain the scope of the access being requested, or what happens to the data afterward.

When an app requests contact access on iOS or Android, it typically gets nearly everything in your address book: names, phone numbers, email addresses, physical addresses, job titles, company names, and, where the platform allows it, any notes you’ve attached. It gets this all at once, even though the feature itself rarely has any technical need to send the full dataset to a server. A well-designed feature could check hashes of your contacts’ phone numbers against a server list without uploading raw names and numbers. Many companies don’t build it that way. They upload the whole thing.

The reason isn’t engineering laziness. It’s that the full dataset is more valuable than the narrow use case requires.
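For contrast, here is a minimal sketch of the narrower design described above, in which only hashes of phone numbers leave the device and names never do. Every function name here is hypothetical, the phone normalization is deliberately crude, and this is not any particular app’s implementation. One caveat worth stating: a plain hash of a phone number can be reversed by brute force because there are so few possible numbers, so this limits what is sent rather than making the upload anonymous.

```python
import hashlib
import re


def normalize_phone(raw: str, default_country_code: str = "1") -> str:
    """Reduce a phone number to digits only (a rough, illustrative rule)."""
    digits = re.sub(r"\D", "", raw)
    if raw.strip().startswith("+"):
        return digits  # already carries a country code
    if len(digits) == 10:
        # Assumption: a bare 10-digit number is national and needs a prefix.
        return default_country_code + digits
    return digits


def hash_phone(raw: str) -> str:
    """SHA-256 of the normalized number, as a hex string."""
    return hashlib.sha256(normalize_phone(raw).encode("utf-8")).hexdigest()


def client_payload(address_book: dict[str, str]) -> list[str]:
    """What leaves the device: hashed numbers only, no names or other fields."""
    return sorted({hash_phone(number) for number in address_book.values()})


def server_match(uploaded_hashes: list[str], registered_numbers: list[str]) -> set[str]:
    """Server side: intersect the uploaded hashes with hashes of its own users."""
    registered = {hash_phone(number) for number in registered_numbers}
    return registered & set(uploaded_hashes)


def friends_on_service(address_book: dict[str, str], matches: set[str]) -> list[str]:
    """Back on the device: map matching hashes to the locally stored names."""
    return sorted(
        name for name, number in address_book.items()
        if hash_phone(number) in matches
    )
```

A client would call `client_payload` on its address book, send the result, and pass the server’s reply to `friends_on_service`. The names, email addresses, and notes stay on the phone the whole time.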

What They’re Actually Building

Contact data is relationship data. When an app uploads your contacts, it isn’t just learning about your contacts. It’s learning about you, specifically about who you know, how frequently you interact with them, and how your social network is structured.

This is sometimes called a “social graph” and it has well-understood commercial value. Advertising platforms use it to infer interests, political affiliation, income level, and life stage. If your contacts list includes several OB-GYN offices and baby supply stores, that’s a strong signal. If it includes addiction treatment centers, that’s a different kind of signal. None of this requires you to have typed a single preference into the app.

But there’s a second-order effect that’s even more significant: your contacts didn’t consent to any of this. When you grant contact access, you’re sharing information about other people who have no idea their data just moved to a third-party server. A person who has never installed a given app can still appear in that company’s database, potentially hundreds of times, because hundreds of people who do have the app listed them as a contact. This is how companies build profiles on people who have actively avoided their products.
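The mechanism is simple enough to sketch. The toy example below assumes a server that has received several users’ address books and groups the entries by phone number. The function name and data layout are hypothetical, and it is only an illustration of the idea, not a description of any real company’s pipeline.

```python
from collections import defaultdict


def build_shadow_profiles(uploads: dict[str, dict[str, str]]) -> dict[str, dict]:
    """Group uploaded contact entries by phone number.

    `uploads` maps an uploader's user id to their address book,
    which maps the label they saved to a phone number.
    """
    profiles = defaultdict(lambda: {"listed_by": set(), "labels": set()})
    for uploader, address_book in uploads.items():
        for label, number in address_book.items():
            # The owner of `number` may never have installed the app.
            profiles[number]["listed_by"].add(uploader)
            profiles[number]["labels"].add(label)
    return dict(profiles)
```

With two uploaders who both saved the same number, the resulting entry lists both of them and every label they used for that person, even though the person behind the number never created an account.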

Facebook’s “People You May Know” feature, at its most aggressive, demonstrably surfaced connections between people who had no mutual friends and had never interacted on the platform. The most likely explanation, consistent with reporting from Kashmir Hill and others, was that contact data uploaded by mutual acquaintances was being used to infer the relationship. The people being connected never agreed to participate in that inference.

The Regulatory Framework Was Not Built for This

GDPR in Europe and CCPA in California both nominally address this problem. The issue is that consent frameworks are built around the assumption that the person consenting has standing to consent. When you hand over your address book, you are consenting on behalf of everyone in it. No existing privacy regulation handles this cleanly.

Apple introduced App Tracking Transparency in 2021, which requires explicit permission for cross-app tracking. This was a meaningful change. But ATT doesn’t cover what happens to data once it reaches an app’s own servers, and it doesn’t address the baseline contact-upload problem. You can deny tracking permission and still have your contact data uploaded, processed, and used to enrich behavioral models that don’t technically qualify as “tracking” under Apple’s definitions.

As tech companies have increasingly designed consent mechanisms to obscure what users are actually agreeing to, the gap between what users think they’re authorizing and what they’re actually authorizing has grown. The permission dialog says “access your contacts.” It does not say “upload your contacts list to our servers, retain it indefinitely, and use it to build behavioral inferences about you and every person you know.”

Why Apps Keep Asking Even When They Don’t Need To

Some apps have legitimate, ongoing reasons for contact access: a native phone dialer, a messaging app with real-time sync, a CRM tool. Many don’t. A photo editing app that asks for contacts on first launch has no functional need for that data. A game that asks for contacts is being straightforwardly predatory.

The reason these requests keep appearing is that asking costs the developer almost nothing, and being refused costs nothing at all. If you tap “don’t allow,” the app still works. This tells you the access was never necessary for core functionality. The request is speculative data collection, placed behind a consent dialog that most people tap through out of habit.

This is worth understanding clearly: when an app asks for something it doesn’t need and works fine without it, the ask itself is the product. The app is attempting to acquire a persistent asset (your relationship graph) at the moment you’re least likely to scrutinize the request (first launch, high motivation to get the app working).

What You Can Actually Do

The practical advice here is simpler than most privacy guidance: default to denying contact access for any app that doesn’t have an obvious, immediate need for it. Messaging apps and phone replacements have a case. Utilities, games, and most productivity apps do not. You can always grant access later if a specific feature requires it, and you can revoke it in settings once the onboarding flow that asked for it is over.

On iOS, you can audit which apps have contact access under Settings > Privacy & Security > Contacts (Settings > Privacy > Contacts on older versions). Many people who check this list are surprised by what they find.

The broader issue won’t be fixed by individual behavior changes. It requires either regulatory frameworks that extend consent rights to the people whose data is being shared secondhand, or platform-level enforcement that distinguishes between functional contact access and speculative data harvesting. Neither is close to existing. Until one of them does, the default assumption when an app asks for your contacts should be that it wants them for reasons that have nothing to do with making the app work better for you.