In 2007, a Google employee, call him Googler X (his real name has been lost to internal lore), calculated that he hadn’t left the Googleplex campus for a meal in eleven days. Everything he needed was within walking distance: sushi, burritos, wood-fired pizza, a cereal bar with thirty varieties, and an espresso machine that probably cost more than his first car. He wasn’t unusual. He was the point.

Google’s food program is the most studied, most copied, and most misunderstood perk in Silicon Valley history. On the surface it looks like extravagance. By the mid-2010s, Google was spending, by some estimates, several hundred million dollars a year on food across its campuses. For context, that figure exceeds the total venture funding many Series A companies will ever raise. The natural reaction from anyone operating a lean startup is bewilderment, maybe resentment. The actual lesson is more interesting.

The Setup

Google didn’t invent the idea of feeding employees at work. Factories did that. Military installations do that. What Google did, starting in earnest after the company moved to its Mountain View campus in 2004, was treat food as a strategic input rather than a staffing convenience.

The man most responsible for building the program was Charlie Ayers, the company’s first executive chef, hired in 1999, years before the IPO, when Google had only a few dozen employees. Ayers had previously cooked for the Grateful Dead, which is either the most Silicon Valley origin story imaginable or a clue that unconventional thinking was baked in from the start. His mandate wasn’t to save money on cafeteria contracts. It was to make staying on campus more appealing than leaving it.

This sounds like a minor operational decision. It wasn’t. It was a labor strategy disguised as hospitality.

What Happened

The program scaled as Google scaled. By the time the company hit a thousand employees, free food had become a core recruiting tool. By the time Google went public in 2004, it had become a retention mechanism. By the time headcount hit ten thousand, it was something more structural: a way of collapsing the boundary between working hours and non-working hours without anyone noticing.

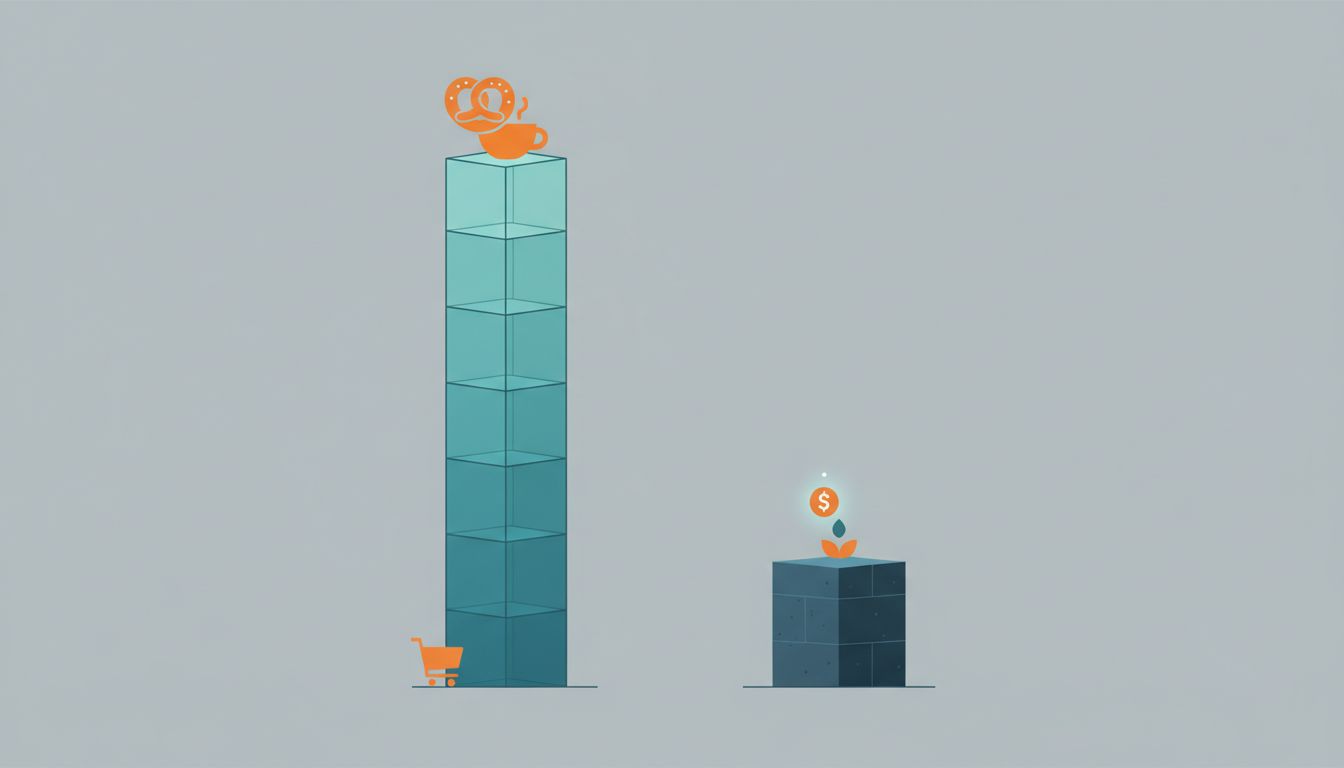

Here are the economics as Google understood them. A senior software engineer in the Bay Area earns well into six figures. When you account for recruiting costs, onboarding, ramp time, and the opportunity cost of an unfilled seat, replacing that engineer costs somewhere between half and double their annual salary. Keeping them on campus for an extra thirty minutes at lunch, reducing their cognitive overhead around meal planning, making the campus itself feel like a self-contained environment worth staying in: all of that has real retention value. You don’t need to be precise about the number to see that free lunch for an engineer who might otherwise be recruited away is a cheap trade.
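To make the shape of that trade concrete, here is a rough back-of-the-envelope sketch. Only the "half to double annual salary" replacement range comes from the reasoning above; the salary and the per-engineer food cost are hypothetical placeholders, not Google's actual figures, and the function name is invented for illustration.

```python
def breakeven_attrition_drop(food_cost_per_year: float,
                             salary: float,
                             replacement_multiple: float) -> float:
    """Drop in yearly attrition probability at which food spending breaks even.

    Expected savings = (drop in attrition probability) * (replacement cost),
    so the break-even drop is food cost divided by replacement cost.
    """
    if salary <= 0 or replacement_multiple <= 0:
        raise ValueError("salary and replacement_multiple must be positive")
    replacement_cost = salary * replacement_multiple
    return food_cost_per_year / replacement_cost


# Hypothetical inputs, chosen only to illustrate the arithmetic.
SALARY = 200_000    # assumed "well into six figures" salary
FOOD_COST = 5_000   # assumed yearly food spend per engineer

for multiple in (0.5, 2.0):  # the "half to double" replacement-cost range
    drop = breakeven_attrition_drop(FOOD_COST, SALARY, multiple)
    print(f"replacement at {multiple:.1f}x salary: "
          f"break-even at {drop:.2%} lower yearly attrition")
```

Under these made-up inputs, the food pays for itself if it lowers an engineer's yearly chance of leaving by somewhere between 1.25 and 5 percentage points, before counting any value from the extra time and conversations on campus. Different assumptions move the threshold, which is why the same perk can be cheap for one company and expensive for another.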

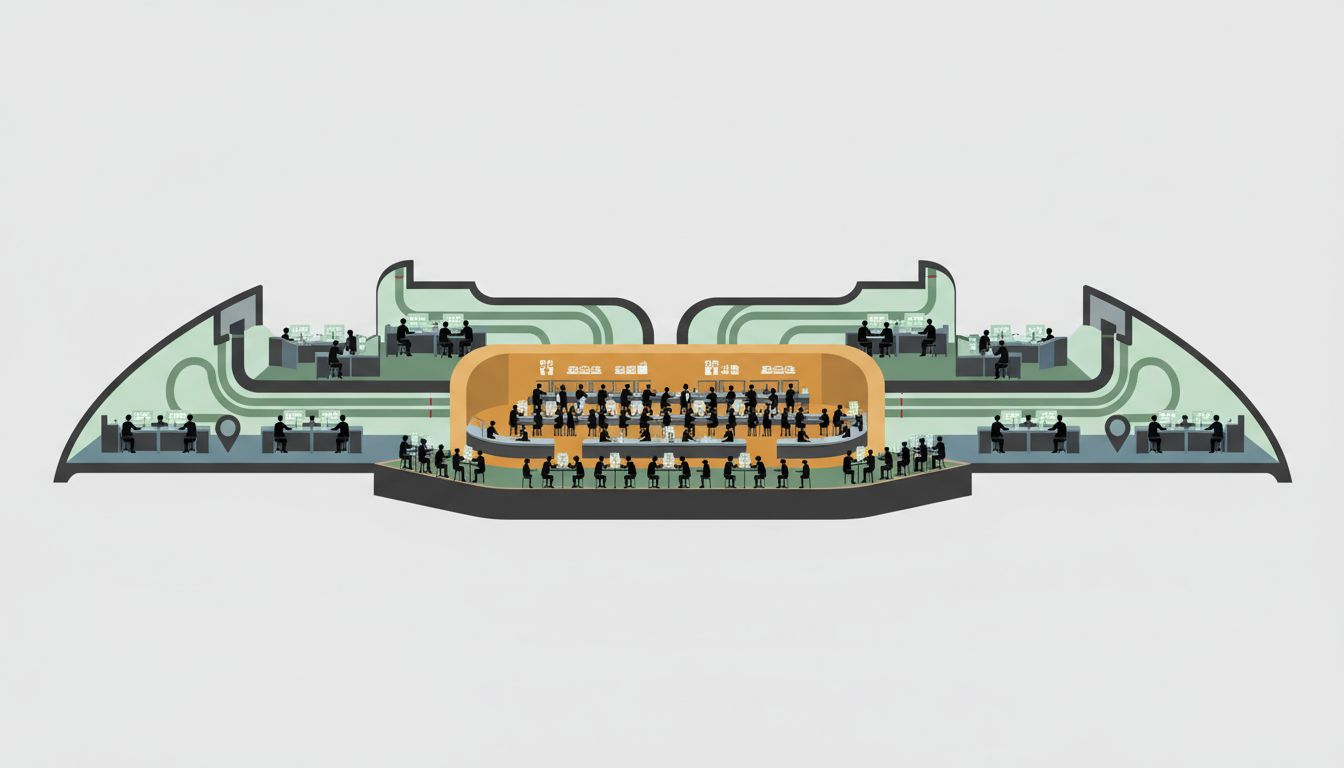

Google’s own internal research supported this. The company studied where engineers had informal conversations, where ideas crossed team boundaries, where serendipitous collaboration happened. The answer, consistently, was around food. The cafeteria lines, the kitchen nooks between engineering pods, the communal tables. The food program wasn’t just keeping people fed. It was engineering the informal social infrastructure of the company.

This is the part that gets lost when people talk about perks as a compensation arms race. Google wasn’t throwing money at the problem of attracting talent. It was spending money to build a specific kind of working environment, and then checking whether that environment produced more value than it cost. The conclusion, for a long time, was yes.

Why It Matters

What Google built became a template that the entire industry copied without fully understanding the logic underneath it. Facebook, Twitter, Dropbox, Airbnb, and dozens of other companies built cafeterias, stocked kitchens, and hired culinary teams. The perk became an industry standard. Which is exactly when it stopped being a competitive advantage and started being a baseline expectation.

By the early 2010s, a startup that didn’t have a well-stocked kitchen was signaling that it was behind the times. Seed-stage companies with twelve employees were spending meaningful portions of their limited budgets on cold brew on tap and snack walls. The logic that made sense for Google, a profitable company with thousands of engineers whose marginal retention had enormous value, was being applied wholesale to companies where the calculus was completely different.

This is the trap. A strategy that works for one company at one stage of growth gets treated as a universal truth, and then everyone performs the ritual without asking whether the ritual serves the underlying goal.

The honest version of the Google food story is that it worked for Google because Google had solved a harder problem first: it had work worth doing, engineers who wanted to do it, and enough density of talent on campus that the informal collisions actually produced value. The food was an accelerant applied to something that was already burning. For a twelve-person startup with a product that isn’t working yet, the same accelerant does nothing except drain the bank account.

What We Can Learn

The first lesson is about what perks are actually for. The companies that do this well treat amenities as environmental design, not compensation substitutes. Google’s cafeteria wasn’t meant to offset a below-market salary. It was meant to make the campus a place people wanted to inhabit for long stretches, because the work that happened in those long stretches was worth more than the food cost. When you use perks to substitute for competitive pay or interesting work, you attract people who care primarily about the perks, and those are not the people who build great products.

The second lesson is about copying strategies without copying the conditions. The companies that spent heavily on office culture during the late 2010s startup boom were often doing it because their investors expected it, their recruits expected it, and everyone in the industry was doing it. That’s not strategy. As a counterpoint, Basecamp famously refused this logic and built a profitable, durable company without an office kitchen worth photographing for TechCrunch. The question isn’t whether to spend on environment. It’s whether the spending serves the actual work.

The third lesson is about what these programs reveal about a company’s theory of value creation. Google’s food program was, implicitly, a bet that proximity and informal interaction between smart people produced better software than remote, distributed, heads-down work. That bet looked correct for a long time. The pandemic forced every company to test it under duress, and the results were more complicated than either side of the debate wanted to admit.

What didn’t change is the underlying logic Google was applying in 2004: the environment you build shapes the work that happens inside it. Whether that means a campus cafeteria or a well-designed async culture depends entirely on what kind of work you’re doing and who you’ve hired to do it. The mistake is cargo-culting the output of someone else’s thinking instead of doing the thinking yourself.

Google’s food program cost hundreds of millions of dollars and, for a significant stretch of the company’s growth, probably earned back multiples of that in retention, recruiting, and the intangible output of a campus where engineers stayed late because they wanted to, not because they were told to. It was a good strategy, executed well, at the right scale, for the right company.

It was also specifically theirs. That’s the part everyone forgot to copy.